
A small C++ library for quickly deploying deep learning models with onnxruntime.

Thanks to cardboardcode, this small library now has documentation. I hope both the library and the documentation are helpful for your work.


- Support inference of multi-inputs, multi-outputs

- Examples for well-known models such as yolov3, mask-rcnn, ultra-light-weight face detector, yolox, PaddleSeg, SuperPoint, SuperGlue, and LoFTR. More models may be supported on request.

- (Minimal^^) Support for TensorRT backend

- Batch-inference

- Build onnxruntime from source with the following scripts

# onnxruntime needs newer cmake version to build

bash ./scripts/install_latest_cmake.bash

bash ./scripts/install_onnx_runtime.bash

# dependencies to build apps

bash ./scripts/install_apps_dependencies.bash

CPU

make default

# build examples

make apps

GPU with CUDA

make gpu_default

make gpu_apps

CPU

# build

docker build -f ./dockerfiles/ubuntu2004.dockerfile -t onnx_runtime .

# run

docker run -it --rm -v `pwd`:/workspace onnx_runtime

GPU with CUDA

# build

# change the cuda version in the dockerfile to match your local cuda version before building the docker image

docker build -f ./dockerfiles/ubuntu2004_gpu.dockerfile -t onnx_runtime_gpu .

# run

docker run -it --rm --gpus all -v `pwd`:/workspace onnx_runtime_gpu

- Onnxruntime will be built with TensorRT support if the environment has TensorRT. Check this memo for useful URLs related to building with TensorRT.

- Be careful to choose a TensorRT version that is compatible with onnxruntime. A good guess can be inferred from HERE.

- Also, models whose input shapes are dynamic cannot be used with the TensorRT backend, according to this
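Because of the dynamic-shape restriction above, it can help to check a model's input shape before choosing the TensorRT backend. The following is a minimal sketch, not part of this library: `hasDynamicDim` and `numElements` are hypothetical helper names, and the sketch assumes the shape has already been queried from the model as a vector of `int64_t`, with ONNX Runtime's convention that a dynamic dimension is reported as -1.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <functional>
#include <numeric>
#include <vector>

// Hypothetical helper: true if any dimension is dynamic.
// Assumption: dynamic (symbolic) dimensions are reported as -1.
bool hasDynamicDim(const std::vector<std::int64_t>& shape)
{
    return std::any_of(shape.begin(), shape.end(),
                       [](std::int64_t dim) { return dim < 0; });
}

// Hypothetical helper: number of elements of a fully static shape.
std::int64_t numElements(const std::vector<std::int64_t>& shape)
{
    assert(!hasDynamicDim(shape) && "shape must be fully static");
    return std::accumulate(shape.begin(), shape.end(), std::int64_t{1},
                           std::multiplies<std::int64_t>());
}
```

With a check like this, an application could fall back to the CPU or CUDA backend when `hasDynamicDim` returns true for any input.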

Usage

# after make apps

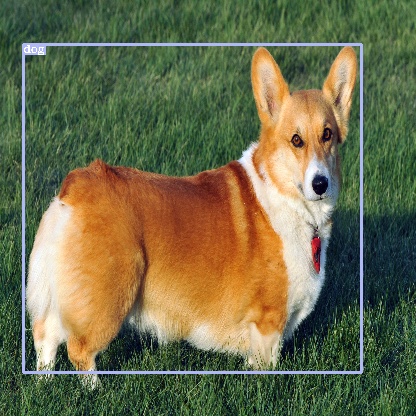

./build/examples/TestImageClassification ./data/squeezenet1.1.onnx ./data/images/dog.jpg

The following result can be obtained:

264 : Cardigan, Cardigan Welsh corgi : 0.391365

263 : Pembroke, Pembroke Welsh corgi : 0.376214

227 : kelpie : 0.0314975

158 : toy terrier : 0.0223435

230 : Shetland sheepdog, Shetland sheep dog, Shetland : 0.020529
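Each result line above lists a class index, its label, and its score, with the best-scoring classes first. The sketch below shows one way such a top-k ranking could be computed from a flat score vector. It is an illustration only and is not taken from this library's source; `topK` is a hypothetical name.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <numeric>
#include <vector>

// Hypothetical helper: indices of the k largest scores, best first.
std::vector<std::size_t> topK(const std::vector<float>& scores, std::size_t k)
{
    assert(k <= scores.size());

    // Start from the identity permutation 0, 1, 2, ...
    std::vector<std::size_t> indices(scores.size());
    std::iota(indices.begin(), indices.end(), std::size_t{0});

    // Only the first k positions need to be sorted, in descending score order.
    std::partial_sort(indices.begin(),
                      indices.begin() + static_cast<std::ptrdiff_t>(k),
                      indices.end(),
                      [&scores](std::size_t a, std::size_t b) {
                          return scores[a] > scores[b];
                      });
    indices.resize(k);
    return indices;
}
```

Calling `topK(scores, 5)` on the classifier's output scores would give the five class indices to look up in the label table.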

Usage

- Download model from onnx model zoo: HERE

- The shape of the output would be

OUTPUT_FEATUREMAP_SIZE X OUTPUT_FEATUREMAP_SIZE * NUM_ANCHORS * (NUM_CLASSES + 4 + 1)

where OUTPUT_FEATUREMAP_SIZE = 13, NUM_ANCHORS = 5, and NUM_CLASSES = 20 for the tiny-yolov2 model from the onnx model zoo
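As a worked example of the expression above, the sketch below treats it as an element count and plugs in the tiny-yolov2 values. The function names are hypothetical and are not part of this library. Each anchor carries NUM_CLASSES class scores plus 4 box coordinates plus 1 objectness score, so 25 values per anchor and 13 * 13 * 5 * 25 = 21125 values in total.

```cpp
#include <cassert>
#include <cstddef>

// Values predicted per anchor: class scores + 4 box coordinates + 1 objectness.
std::size_t valuesPerAnchor(std::size_t numClasses)
{
    return numClasses + 4 + 1;
}

// Total number of values in the output, read as an element count:
// featureMapSize * featureMapSize * numAnchors * (numClasses + 4 + 1).
std::size_t outputElements(std::size_t featureMapSize, std::size_t numAnchors,
                           std::size_t numClasses)
{
    assert(featureMapSize > 0 && numAnchors > 0);
    return featureMapSize * featureMapSize * numAnchors * valuesPerAnchor(numClasses);
}
```

For the model zoo weights, `outputElements(13, 5, 20)` gives the expected length of the flat output buffer, which is a cheap sanity check before decoding boxes.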

- Test tiny-yolov2 inference apps

# after make apps

./build/examples/tiny_yolo_v2 [path/to/tiny_yolov2/onnx/model] ./data/images/dog.jpg

Usage

- Download model from onnx model zoo: HERE

- As also stated in the url above, there are four outputs: boxes (nboxes x 4), labels (nboxes), scores (nboxes), masks (nboxes x 1 x 28 x 28)
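To make the masks layout concrete, here is a minimal sketch of pulling the mask of one box out of a flat buffer. It assumes the masks output is stored contiguously in row-major order with shape nboxes x 1 x 28 x 28. `extractMask` and the constants are hypothetical names, not this library's API.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

constexpr std::size_t kMaskHeight = 28;
constexpr std::size_t kMaskWidth = 28;

// Hypothetical helper: copies the 28 x 28 mask of box `boxIdx` out of a flat,
// row-major buffer of shape nboxes x 1 x 28 x 28.
std::vector<float> extractMask(const std::vector<float>& masks, std::size_t boxIdx)
{
    const std::size_t maskArea = kMaskHeight * kMaskWidth;
    assert((boxIdx + 1) * maskArea <= masks.size());

    const auto first = masks.begin() + static_cast<std::ptrdiff_t>(boxIdx * maskArea);
    return std::vector<float>(first, first + static_cast<std::ptrdiff_t>(maskArea));
}
```

The boxes, labels, and scores outputs can be indexed with the same `boxIdx`, since all four outputs share the leading nboxes dimension.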

- Test mask-rcnn inference apps

# after make apps

./build/examples/mask_rcnn [path/to/mask_rcnn/onnx/model] ./data/images/dogs.jpg

Usage

-

Download model from onnx model zoo: HERE

-

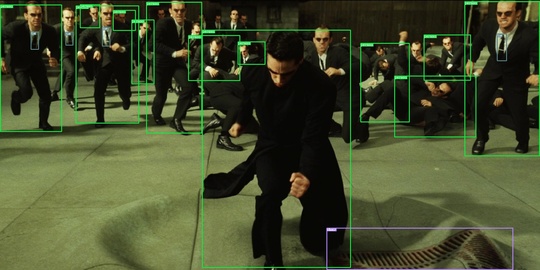

Test yolo-v3 inference apps

# after make apps

./build/examples/yolov3 [path/to/yolov3/onnx/model] ./data/images/no_way_home.jpg

Usage

- App that uses an onnx model trained with the well-known lightweight Ultra-Light-Fast-Generic-Face-Detector-1MB

- A sample weight file has been saved at ./data/version-RFB-640.onnx

- Test inference apps

# after make apps

./build/examples/ultra_light_face_detector ./data/version-RFB-640.onnx ./data/images/endgame.jpg

Usage

- Download onnx model trained on COCO dataset from HERE

# this app tests yolox_l model but you can try with other yolox models also.

wget https://github.com/Megvii-BaseDetection/YOLOX/releases/download/0.1.1rc0/yolox_l.onnx -O ./data/yolox_l.onnx

- Test inference apps

# after make apps

./build/examples/yolox ./data/yolox_l.onnx ./data/images/matrix.jpg

Usage

- Download PaddleSeg's bisenetv2 model, trained on the cityscapes dataset and already converted to onnx, from HERE and copy it to the ./data directory

You can also convert your own PaddleSeg model with the following procedure

- export PaddleSeg model

- convert exported model to onnx format with Paddle2ONNX

- Test inference apps

./build/examples/semantic_segmentation_paddleseg_bisenetv2 ./data/bisenetv2_cityscapes.onnx ./data/images/sample_city_scapes.png

./build/examples/semantic_segmentation_paddleseg_bisenetv2 ./data/bisenetv2_cityscapes.onnx ./data/images/odaiba.jpg

Usage

- Convert SuperPoint's pretrained weights to onnx format

git submodule update --init --recursive

python3 -m pip install -r scripts/superpoint/requirements.txt

python3 scripts/superpoint/convert_to_onnx.py

- Download test images from this dataset

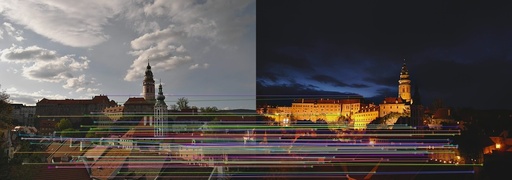

wget https://raw.githubusercontent.com/StaRainJ/Multi-modality-image-matching-database-metrics-methods/master/Multimodal_Image_Matching_Datasets/ComputerVision/CrossSeason/VisionCS_0a.png -P data

wget https://raw.githubusercontent.com/StaRainJ/Multi-modality-image-matching-database-metrics-methods/master/Multimodal_Image_Matching_Datasets/ComputerVision/CrossSeason/VisionCS_0b.png -P data

- Test inference apps

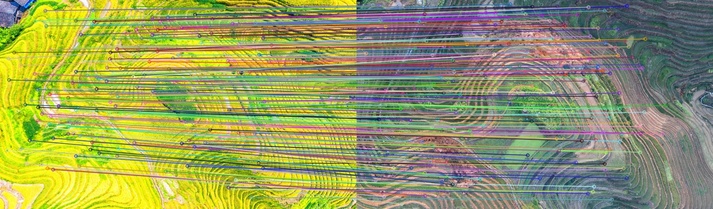

./build/examples/super_point /path/to/super_point.onnx data/VisionCS_0a.png data/VisionCS_0b.png

Usage

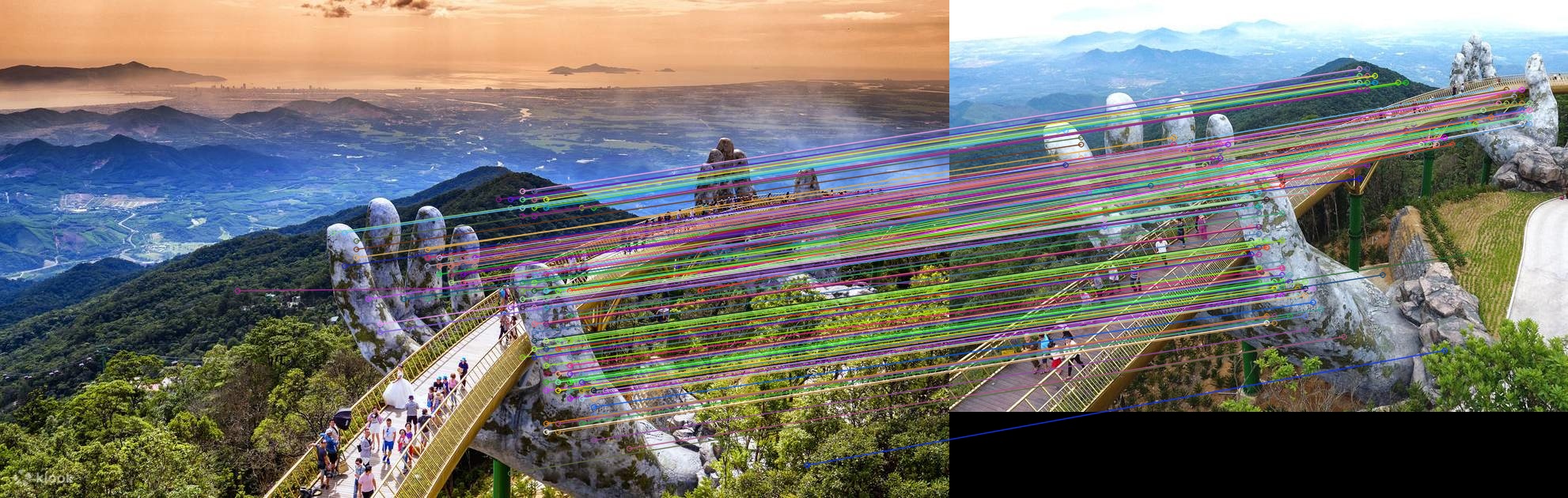

- Convert SuperPoint's pretrained weights to onnx format: follow the instructions above

- Convert SuperGlue's pretrained weights to onnx format

git submodule update --init --recursive

python3 -m pip install -r scripts/superglue/requirements.txt

python3 -m pip install -r scripts/superglue/SuperGluePretrainedNetwork/requirements.txt

python3 scripts/superglue/convert_to_onnx.py

- Download test images from this dataset, or prepare some pairs of your own images

- Test inference apps

./build/examples/super_glue /path/to/super_point.onnx /path/to/super_glue.onnx /path/to/1st/image /path/to/2nd/image

Usage

-

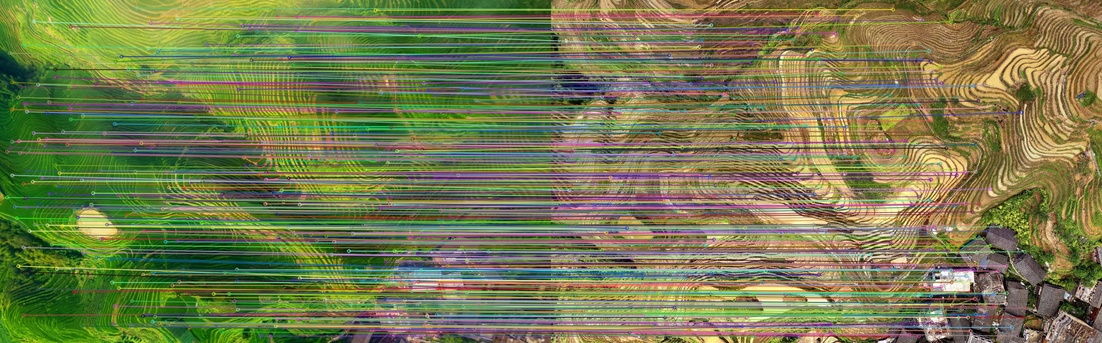

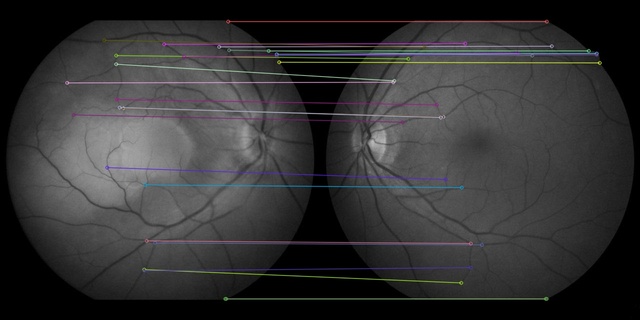

Download LoFTR weights indoords_new.ckpt from HERE. (LoFTR's latest commit seems to be only compatible with the new weights (Ref: zju3dv/LoFTR#48). Hence, this onnx cpp application is only compatible with _indoor_ds_new.ckpt weights)

-

Convert LoFTR's pretrained weights to onnx format

git submodule update --init --recursive

python3 -m pip install -r scripts/loftr/requirements.txt

python3 scripts/loftr/convert_to_onnx.py --model_path /path/to/indoor_ds_new.ckpt

- Download test images from this dataset, or prepare some pairs of your own images

- Test inference apps

./build/examples/loftr /path/to/loftr.onnx /path/to/1st/image /path/to/2nd/image