If you are interested in getting updates, please join our mailing list here.

- [2023/10/22] ImageNet training scripts for the EfficientViT L series have been released.

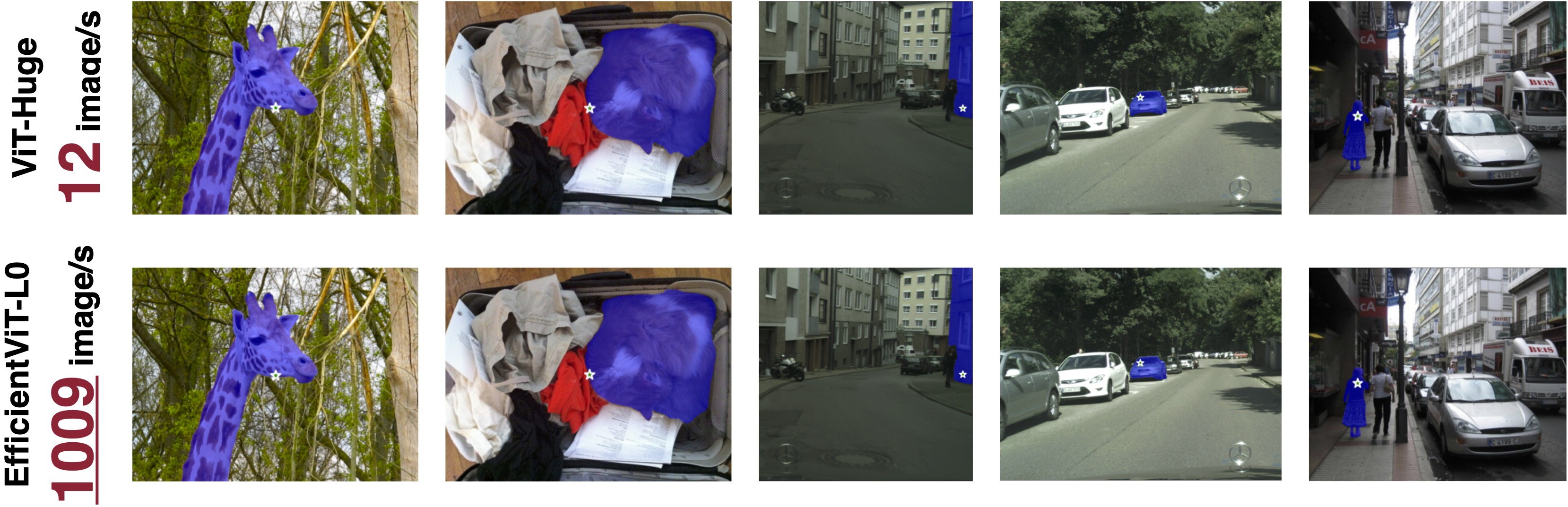

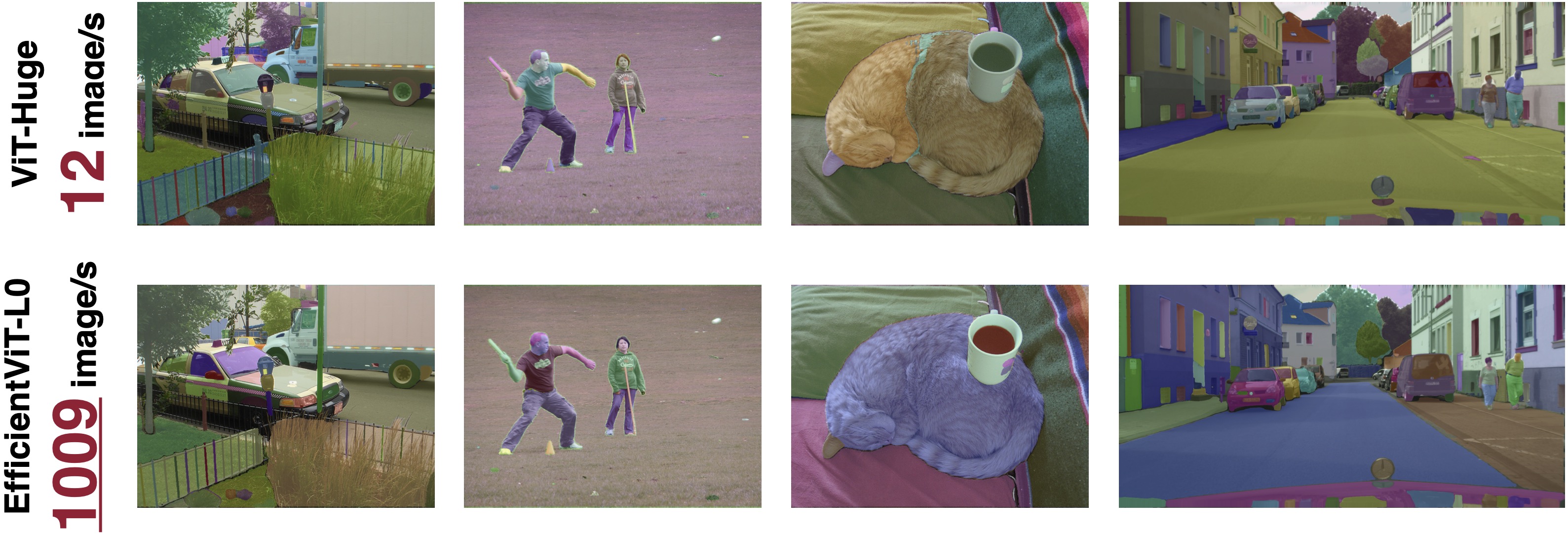

- [2023/09/18] EfficientViT for Segment Anything Model (SAM) is released. EfficientViT SAM runs at 1009 images/s on an A100 GPU, compared to ViT-H (12 images/s), MobileSAM (297 images/s), and NanoSAM (744 images/s, but much lower mIoU).

- [2023/09/12] EfficientViT is highlighted on the MIT home page and in MIT News.

- [2023/07/18] EfficientViT is accepted to ICCV 2023.

EfficientViT-L0 for Segment Anything (1009 image/s on A100 GPU)

EfficientViT-L1 for Semantic Segmentation (45.9ms on NVIDIA Jetson AGX Orin, 82.716 mIoU on Cityscapes)

EfficientViT is a new family of ViT models for efficient high-resolution dense prediction vision tasks. The core building block of EfficientViT is a lightweight, multi-scale linear attention module that achieves a global receptive field and multi-scale learning using only hardware-efficient operations, which makes EfficientViT TensorRT-friendly and suitable for GPU deployment.
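To make the cost argument behind linear attention concrete, here is a minimal pure-Python sketch of a generic ReLU-kernel linear attention. It is not the repository's implementation: the function names, the ReLU feature map, and the `eps` stabilizer are assumptions made for illustration, and the multi-scale aggregation is left out. The sketch shows that accumulating the key-value summary once and reusing it for every query gives the same output as building the full N x N similarity weights.

```python
def relu(x):
    return x if x > 0.0 else 0.0


def linear_attention(q, k, v, eps=1e-6):
    """ReLU-kernel linear attention; cost grows linearly with the token count N.

    q, k: lists of N vectors of length d. v: list of N vectors of length d_v.
    (Illustrative helper, not part of the EfficientViT code base.)
    """
    d, d_v = len(k[0]), len(v[0])
    # Accumulate sum_j relu(k_j)^T v_j (d x d_v) and sum_j relu(k_j) (d) once.
    kv = [[0.0] * d_v for _ in range(d)]
    k_sum = [0.0] * d
    for k_j, v_j in zip(k, v):
        for a in range(d):
            w = relu(k_j[a])
            k_sum[a] += w
            for b in range(d_v):
                kv[a][b] += w * v_j[b]
    out = []
    for q_i in q:
        phi_q = [relu(x) for x in q_i]
        norm = sum(phi_q[a] * k_sum[a] for a in range(d)) + eps
        out.append(
            [sum(phi_q[a] * kv[a][b] for a in range(d)) / norm for b in range(d_v)]
        )
    return out


def quadratic_attention(q, k, v, eps=1e-6):
    """Same result computed with explicit N x N similarity weights, for comparison."""
    d_v = len(v[0])
    out = []
    for q_i in q:
        weights = [sum(relu(a) * relu(b) for a, b in zip(q_i, k_j)) for k_j in k]
        norm = sum(weights) + eps
        out.append(
            [sum(w * v_j[b] for w, v_j in zip(weights, v)) / norm for b in range(d_v)]
        )
    return out
```

Both functions return the same values up to floating-point rounding. Only the order of the multiplications differs, and that ordering is what keeps the first version cheap at high resolution.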

```bash
conda create -n efficientvit python=3.10
conda activate efficientvit
conda install -c conda-forge mpi4py openmpi
pip install -r requirements.txt
```

ImageNet: https://www.image-net.org/

Our code expects the ImageNet dataset directory to have the following structure:

```
imagenet
├── train
├── val
```

Cityscapes: https://www.cityscapes-dataset.com/

Our code expects the Cityscapes dataset directory to have the following structure:

```
cityscapes
├── gtFine
|   ├── train
|   ├── val
├── leftImg8bit
|   ├── train
|   ├── val
```

ADE20K: https://groups.csail.mit.edu/vision/datasets/ADE20K/

Our code expects the ADE20K dataset directory to have the following structure:

```
ade20k
├── annotations
|   ├── training
|   ├── validation
├── images
|   ├── training
|   ├── validation
```

Latency and throughput are measured on NVIDIA Jetson Nano, NVIDIA Jetson AGX Orin, and NVIDIA A100 GPU with TensorRT in fp16. Data transfer time is included.
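Before training or evaluation, it can be useful to confirm that a dataset directory matches the ImageNet, Cityscapes, or ADE20K layout listed earlier. The following is a minimal sketch using only the standard library. The `missing_subdirs` helper and the `EXPECTED_LAYOUT` table are hypothetical names introduced here, not part of the repository, and the check looks at directory names only, not their contents.

```python
from pathlib import Path

# Sub-directories copied from the dataset layouts described in this README.
EXPECTED_LAYOUT = {
    "imagenet": ["train", "val"],
    "cityscapes": [
        "gtFine/train",
        "gtFine/val",
        "leftImg8bit/train",
        "leftImg8bit/val",
    ],
    "ade20k": [
        "annotations/training",
        "annotations/validation",
        "images/training",
        "images/validation",
    ],
}


def missing_subdirs(dataset, root):
    """Return the expected sub-directories that are absent under `root`."""
    root_path = Path(root).expanduser()
    return [sub for sub in EXPECTED_LAYOUT[dataset] if not (root_path / sub).is_dir()]
```

An empty list means every expected sub-directory is present. For example, `missing_subdirs("cityscapes", "/path/to/cityscapes")` returns the parts of the Cityscapes tree that still need to be downloaded or moved into place.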

In this version, the EfficientViT segment anything models are trained using the image embedding extracted by SAM ViT-H as the target. The prompt encoder and mask decoder are the same as SAM ViT-H.

| Image Encoder | COCO-val2017 mIoU (all) | COCO-val2017 mIoU (large) | COCO-val2017 mIoU (medium) | COCO-val2017 mIoU (small) | Params | MACs | A100 Throughput | Checkpoint |
|---|---|---|---|---|---|---|---|---|
| NanoSAM | 70.6 | 79.6 | 73.8 | 62.4 | - | - | 744 image/s | - |
| MobileSAM | 72.8 | 80.4 | 75.9 | 65.8 | - | - | 297 image/s | - |
| EfficientViT-L0-SAM | 74.454 | 81.410 | 77.201 | 68.159 | 31M | 35G | 1009 image/s | link |
| EfficientViT-L1-SAM | 75.183 | 81.786 | 78.110 | 68.944 | 44M | 49G | 815 image/s | link |
| EfficientViT-L2-SAM | 75.656 | 81.706 | 78.644 | 69.689 | 57M | 69G | 634 image/s | link |
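The throughput column can be turned into relative speedups and an amortized time per image with a few lines of arithmetic. The sketch below copies the A100 numbers from the SAM table; the helper names are illustrative. The time per image it reports is an average implied by the throughput, which is not the same as single-image latency.

```python
# A100 throughput in image/s, copied from the SAM table.
throughput = {
    "NanoSAM": 744,
    "MobileSAM": 297,
    "EfficientViT-L0-SAM": 1009,
    "EfficientViT-L1-SAM": 815,
    "EfficientViT-L2-SAM": 634,
}


def ms_per_image(images_per_second):
    """Average time per image in milliseconds implied by a throughput figure."""
    return 1000.0 / images_per_second


def speedup(model, baseline):
    """Throughput of `model` divided by throughput of `baseline`."""
    return throughput[model] / throughput[baseline]


for name, ips in throughput.items():
    print(
        f"{name}: {ms_per_image(ips):.2f} ms/image, "
        f"{speedup(name, 'MobileSAM'):.2f}x MobileSAM"
    )
```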

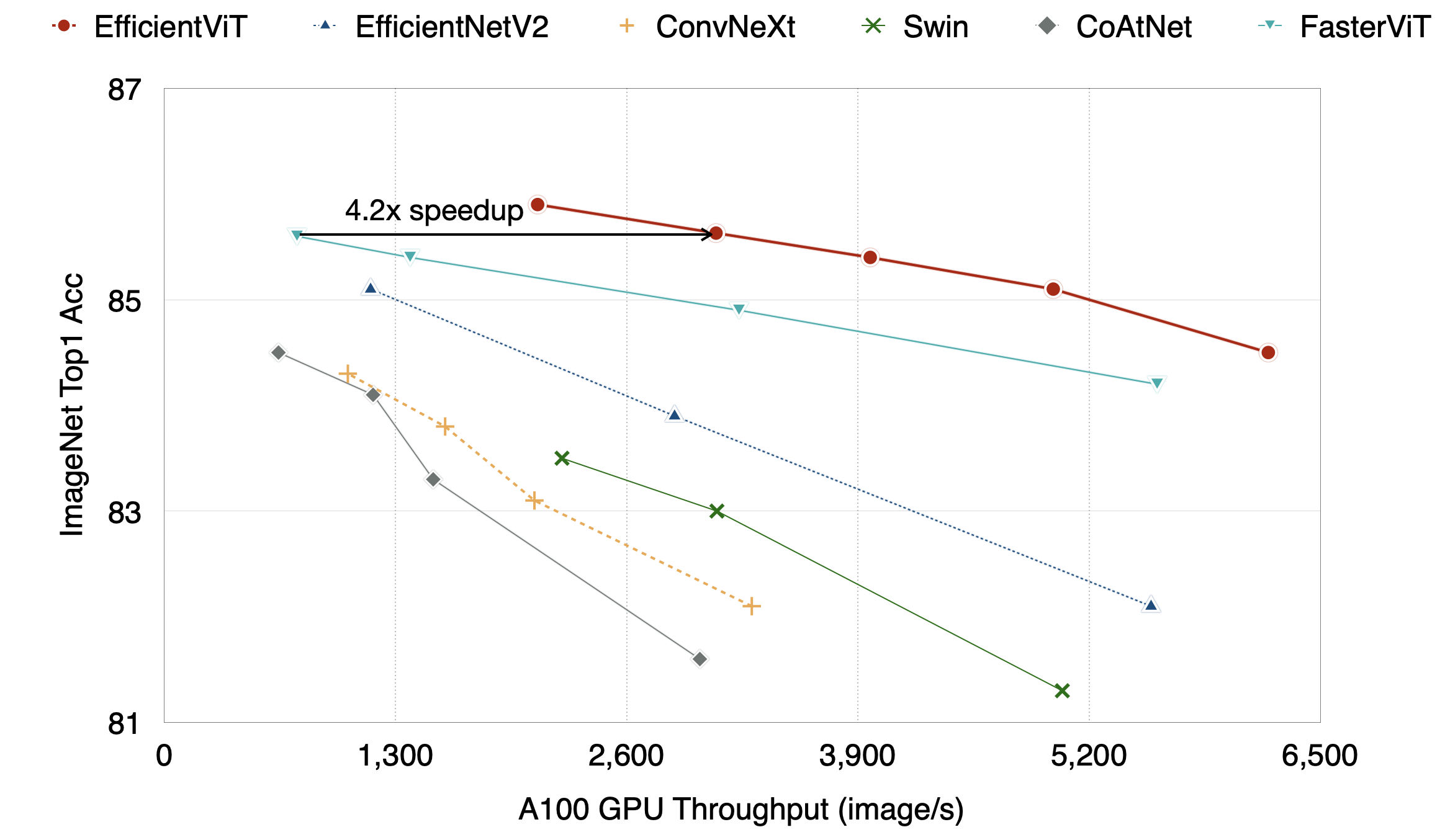

All EfficientViT classification models are trained on ImageNet-1K with random initialization (300 epochs + 20 warmup epochs) using supervised learning.

| Model | Resolution | ImageNet Top1 Acc | ImageNet Top5 Acc | Params | MACs | A100 Throughput | Checkpoint |
|---|---|---|---|---|---|---|---|
| EfficientNetV2-S | 384x384 | 83.9 | - | 22M | 8.4G | 2869 image/s | - |
| EfficientNetV2-M | 480x480 | 85.2 | - | 54M | 25G | 1160 image/s | - |
| EfficientViT-L1 | 224x224 | 84.484 | 96.862 | 53M | 5.3G | 6207 image/s | link |
| EfficientViT-L2 | 224x224 | 85.050 | 97.090 | 64M | 6.9G | 4998 image/s | link |
| EfficientViT-L2 | 256x256 | 85.366 | 97.216 | 64M | 9.1G | 3969 image/s | link |
| EfficientViT-L2 | 288x288 | 85.630 | 97.364 | 64M | 11G | 3102 image/s | link |
| EfficientViT-L2 | 320x320 | 85.734 | 97.438 | 64M | 14G | 2525 image/s | link |
| EfficientViT-L2 | 384x384 | 85.978 | 97.518 | 64M | 20G | 1784 image/s | link |
| EfficientViT-L3 | 224x224 | 85.814 | 97.198 | 246M | 28G | 2081 image/s | link |
| EfficientViT-L3 | 256x256 | 85.938 | 97.318 | 246M | 36G | 1641 image/s | link |
| EfficientViT-L3 | 288x288 | 86.070 | 97.440 | 246M | 46G | 1276 image/s | link |
| EfficientViT-L3 | 320x320 | 86.230 | 97.474 | 246M | 56G | 1049 image/s | link |
| EfficientViT-L3 | 384x384 | 86.408 | 97.632 | 246M | 81G | 724 image/s | link |
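The MACs column for a fixed model grows roughly with the number of input pixels. As a quick sanity check, the sketch below starts from the 224x224 figure for EfficientViT-L2 and scales it by the squared ratio of side lengths. This scaling rule is an approximation assumed here for illustration, not a statement from the authors, and the reported values are rounded.

```python
# Reported MACs (in G) for EfficientViT-L2, copied from the table above.
reported_l2_macs = {224: 6.9, 256: 9.1, 288: 11.0, 320: 14.0, 384: 20.0}


def estimate_macs(base_macs, base_res, res):
    """Estimate MACs at side length `res`, assuming cost proportional to pixel count."""
    return base_macs * (res / base_res) ** 2


for res, reported in reported_l2_macs.items():
    estimate = estimate_macs(reported_l2_macs[224], 224, res)
    print(f"{res}x{res}: estimated {estimate:.1f}G, reported {reported}G")
```

Under this assumption the estimates stay within a few percent of the reported values, which is consistent with compute that scales linearly in the number of tokens.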

EfficientViT B series

| Model | Resolution | ImageNet Top1 Acc | ImageNet Top5 Acc | Params | MACs | Jetson Nano (bs1) | Jetson Orin (bs1) | Checkpoint |
|---|---|---|---|---|---|---|---|---|
| EfficientViT-B1 | 224x224 | 79.390 | 94.346 | 9.1M | 0.52G | 24.8ms | 1.48ms | link |
| EfficientViT-B1 | 256x256 | 79.918 | 94.704 | 9.1M | 0.68G | 28.5ms | 1.57ms | link |
| EfficientViT-B1 | 288x288 | 80.410 | 94.984 | 9.1M | 0.86G | 34.5ms | 1.82ms | link |
| EfficientViT-B2 | 224x224 | 82.100 | 95.782 | 24M | 1.6G | 50.6ms | 2.63ms | link |
| EfficientViT-B2 | 256x256 | 82.698 | 96.096 | 24M | 2.1G | 58.5ms | 2.84ms | link |
| EfficientViT-B2 | 288x288 | 83.086 | 96.302 | 24M | 2.6G | 69.9ms | 3.30ms | link |
| EfficientViT-B3 | 224x224 | 83.468 | 96.356 | 49M | 4.0G | 101ms | 4.36ms | link |
| EfficientViT-B3 | 256x256 | 83.806 | 96.514 | 49M | 5.2G | 120ms | 4.74ms | link |
| EfficientViT-B3 | 288x288 | 84.150 | 96.732 | 49M | 6.5G | 141ms | 5.63ms | link |

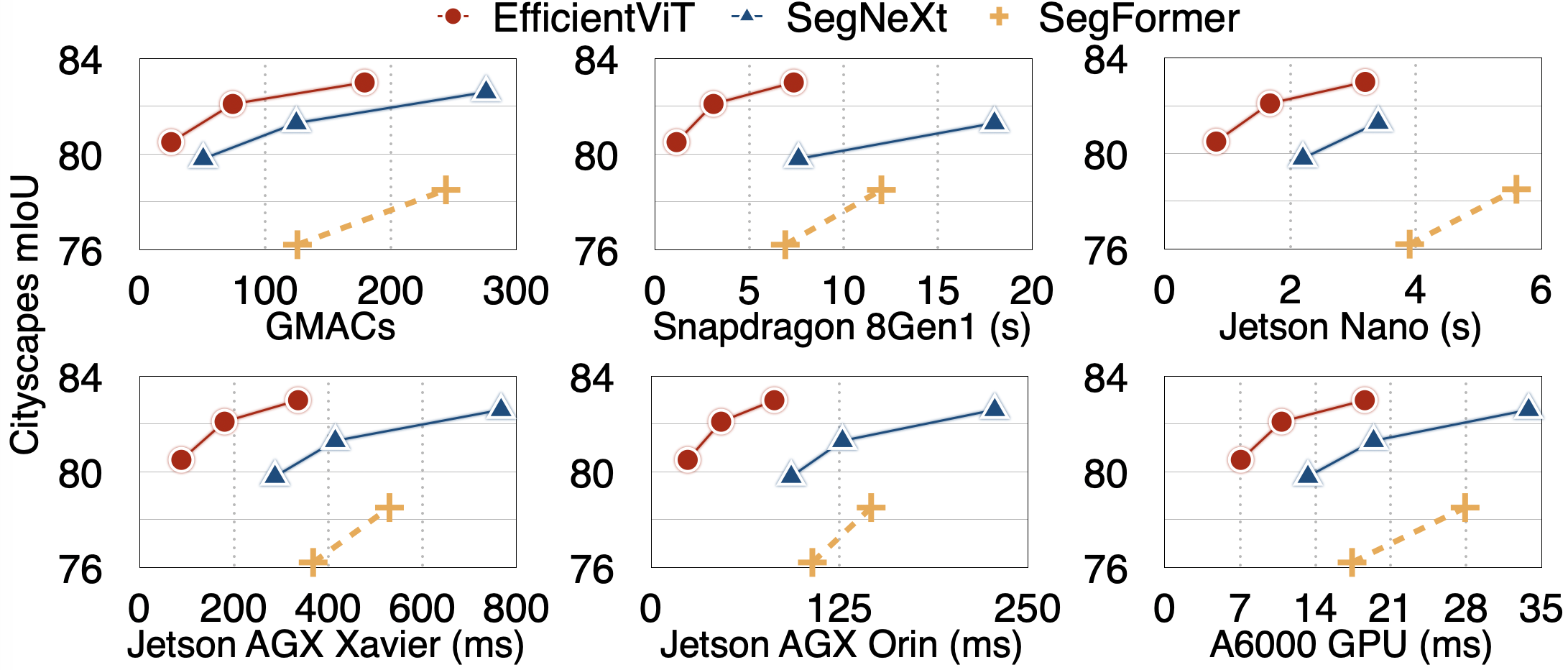

| Model | Resolution | Cityscapes mIoU | Params | MACs | Jetson Orin Latency (bs1) | A100 Throughput (bs1) | Checkpoint |
|---|---|---|---|---|---|---|---|
| EfficientViT-L1 | 1024x2048 | 82.716 | 40M | 282G | 45.9ms | 122 image/s | link |
| EfficientViT-L2 | 1024x2048 | 83.228 | 53M | 396G | 60.0ms | 102 image/s | link |

EfficientViT B series

| Model | Resolution | Cityscapes mIoU | Params | MACs | Jetson Nano (bs1) | Jetson Orin (bs1) | Checkpoint |
|---|---|---|---|---|---|---|---|
| EfficientViT-B0 | 1024x2048 | 75.653 | 0.7M | 4.4G | 275ms | 9.9ms | link |
| EfficientViT-B1 | 1024x2048 | 80.547 | 4.8M | 25G | 819ms | 24.3ms | link |
| EfficientViT-B2 | 1024x2048 | 82.073 | 15M | 74G | 1676ms | 46.5ms | link |
| EfficientViT-B3 | 1024x2048 | 83.016 | 40M | 179G | 3192ms | 81.8ms | link |

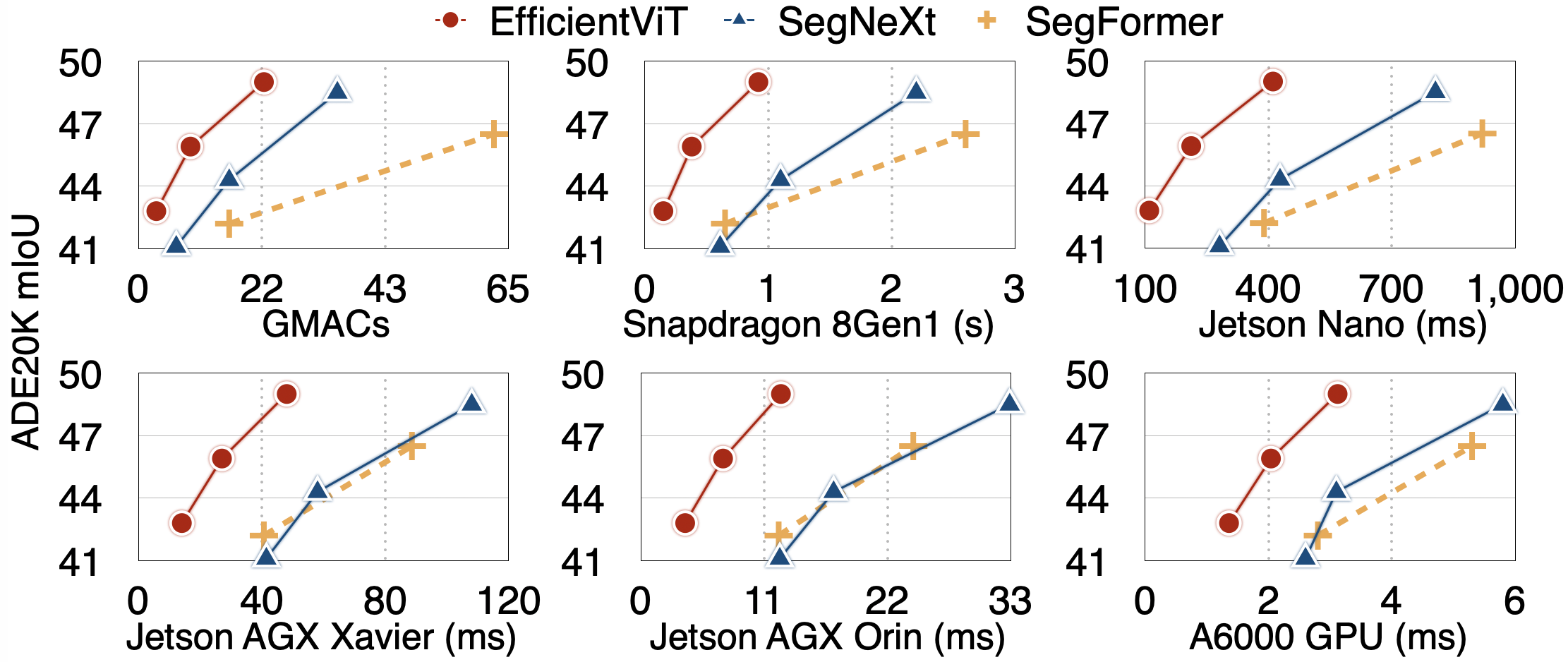

| Model | Resolution | ADE20K mIoU | Params | MACs | Jetson Orin Latency (bs1) | A100 Throughput (bs16) | Checkpoint |
|---|---|---|---|---|---|---|---|
| EfficientViT-L1 | 512x512 | 49.191 | 40M | 36G | 7.2ms | 947 image/s | link |
| EfficientViT-L2 | 512x512 | 50.702 | 51M | 45G | 9.0ms | 758 image/s | link |

EfficientViT B series

| Model | Resolution | ADE20K mIoU | Params | MACs | Jetson Nano (bs1) | Jetson Orin (bs1) | Checkpoint |
|---|---|---|---|---|---|---|---|
| EfficientViT-B1 | 512x512 | 42.840 | 4.8M | 3.1G | 110ms | 4.0ms | link |
| EfficientViT-B2 | 512x512 | 45.941 | 15M | 9.1G | 212ms | 7.3ms | link |
| EfficientViT-B3 | 512x512 | 49.013 | 39M | 22G | 411ms | 12.5ms | link |

```python
# segment anything
from efficientvit.sam_model_zoo import create_sam_model

efficientvit_sam = create_sam_model(
    name="l2", weight_url="assets/checkpoints/sam/l2.pt",
)
# move the model to the GPU and switch to inference mode
efficientvit_sam = efficientvit_sam.cuda().eval()
```

```python
from efficientvit.models.efficientvit.sam import EfficientViTSamPredictor

efficientvit_sam_predictor = EfficientViTSamPredictor(efficientvit_sam)
```

```python
from efficientvit.models.efficientvit.sam import EfficientViTSamAutomaticMaskGenerator

efficientvit_mask_generator = EfficientViTSamAutomaticMaskGenerator(efficientvit_sam)
```

```python
# classification
from efficientvit.cls_model_zoo import create_cls_model

model = create_cls_model(
    name="l3", weight_url="assets/checkpoints/cls/l3-r384.pt"
)
```

```python
# semantic segmentation
from efficientvit.seg_model_zoo import create_seg_model

model = create_seg_model(
    name="l2", dataset="cityscapes", weight_url="assets/checkpoints/seg/cityscapes/l2.pt"
)

model = create_seg_model(
    name="l2", dataset="ade20k", weight_url="assets/checkpoints/seg/ade20k/l2.pt"
)
```

Please run eval_sam_coco.py, eval_cls_model.py or eval_seg_model.py to evaluate our models.

Examples: segment anything, classification, segmentation
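The loading snippets pass local checkpoint paths through `weight_url`, so a missing file only surfaces once model creation is attempted. A minimal sketch for failing early is shown below. The `require_checkpoint` helper and the `CHECKPOINTS` table are hypothetical and not part of the repository. The paths are the ones that appear in the snippets in this README, and the task keys are labels chosen here for illustration.

```python
from pathlib import Path

# Checkpoint paths exactly as they appear in the loading snippets in this README.
CHECKPOINTS = {
    ("sam", "l2"): "assets/checkpoints/sam/l2.pt",
    ("cls", "l3"): "assets/checkpoints/cls/l3-r384.pt",
    ("seg-cityscapes", "l2"): "assets/checkpoints/seg/cityscapes/l2.pt",
    ("seg-ade20k", "l2"): "assets/checkpoints/seg/ade20k/l2.pt",
}


def require_checkpoint(task, name, repo_root="."):
    """Return the checkpoint path for (task, name), or raise if the file is missing."""
    path = Path(repo_root) / CHECKPOINTS[(task, name)]
    if not path.is_file():
        raise FileNotFoundError(f"Checkpoint not found: {path}. Download it first.")
    return str(path)
```

The returned string can then be passed as `weight_url`, for example `create_cls_model(name="l3", weight_url=require_checkpoint("cls", "l3"))`.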

Please run demo_sam_model.py to visualize our segment anything models.

Example:

```bash
# segment everything
python demo_sam_model.py --model l1 --mode all

# prompt with points
python demo_sam_model.py --model l1 --mode point

# prompt with box
python demo_sam_model.py --model l1 --mode box --box "[150,70,630,400]"
```
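When the demo is driven from another Python script, building the argument list programmatically avoids shell-quoting mistakes around the bracketed `--box` value. The sketch below only uses the flags shown in the demo commands. The `demo_command` helper is a hypothetical name, and it does not interpret what the four box numbers mean.

```python
import json
import shlex


def demo_command(model, mode, box=None):
    """Build the argument list for demo_sam_model.py from the flags shown in the README."""
    cmd = ["python", "demo_sam_model.py", "--model", model, "--mode", mode]
    if mode == "box":
        if box is None or len(box) != 4:
            raise ValueError("box mode needs four numbers")
        # Serialize without spaces to match the "[150,70,630,400]" form.
        cmd += ["--box", json.dumps(list(box), separators=(",", ":"))]
    return cmd


if __name__ == "__main__":
    # Print a shell-safe version of the command; pass the list to subprocess.run to execute it.
    print(shlex.join(demo_command("l1", "box", box=(150, 70, 630, 400))))
```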

Please run eval_seg_model.py to visualize the outputs of our semantic segmentation models.

Example:

```bash
python eval_seg_model.py --dataset cityscapes --crop_size 1024 --model b3 --save_path demo/cityscapes/b3/
```

To generate TFLite files, please refer to tflite_export.py. It requires the TinyNN package.

```bash
pip install git+https://github.com/alibaba/TinyNeuralNetwork.git
```

Example:

```bash
python tflite_export.py --export_path model.tflite --task seg --dataset ade20k --model b3 --resolution 512 512
```

To generate ONNX files, please refer to onnx_export.py.

To export ONNX files for EfficientViT SAM models, please refer to the scripts shared by CVHub.

Please see TRAINING.md for detailed training instructions.

Han Cai: hancai@mit.edu

- ImageNet Pretrained models

- Segmentation Pretrained models

- ImageNet training code

- EfficientViT L series, designed for cloud

- EfficientViT for segment anything

- EfficientViT for image generation

- EfficientViT for super-resolution

- Segmentation training code

If EfficientViT is useful or relevant to your research, please kindly recognize our contributions by citing our paper:

```bibtex
@article{cai2022efficientvit,
  title={Efficientvit: Enhanced linear attention for high-resolution low-computation visual recognition},
  author={Cai, Han and Gan, Chuang and Han, Song},
  journal={arXiv preprint arXiv:2205.14756},
  year={2022}
}
```