$ make data

Calls make-data.py to (roughly) do the following:

- Choose some images.

- For each image, choose a protein.

- Convert the image to black and white.

- Extract the horizontal black lines from the image.

- Scale the image to the amino acid (aa) length of the protein.

- Break each horizontal line into some reads.

- Calculate a bit score for the reads based on how far down we are in the image.

- Add some noise (otherwise the image looks too good and you can't see the individual reads).

- Write out fake DIAMOND results for those reads.

- Write out fake FASTQ files for the reads.
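The steps above can be sketched in a few lines of Python. This is only an illustration, not the code in make-data.py: the function names, the `read_length`, `max_score` and `noise` parameters, the choice to give rows nearer the top of the image a higher bit score, and the choice to apply the noise to the bit score are all assumptions. The image is taken to be already black and white, as a list of rows where 1 means a black pixel.

```python
import random


def black_runs(row):
    """Return (start, end) column spans (end exclusive) of consecutive black pixels."""
    runs, start = [], None
    for x, pixel in enumerate(row):
        if pixel and start is None:
            start = x
        elif not pixel and start is not None:
            runs.append((start, x))
            start = None
    if start is not None:
        runs.append((start, len(row)))
    return runs


def scale_run(run, image_width, protein_length):
    """Map a pixel span onto amino acid offsets in the protein."""
    start, end = run
    factor = protein_length / image_width
    new_start = int(start * factor)
    new_end = max(new_start + 1, int(end * factor))
    return new_start, min(new_end, protein_length)


def split_into_reads(span, read_length):
    """Break one scaled horizontal line into consecutive reads."""
    start, end = span
    return [(s, min(s + read_length, end)) for s in range(start, end, read_length)]


def fake_reads(image, protein_length, read_length=30, max_score=200.0,
               noise=5.0, rng=None):
    """Turn a black-and-white image into a list of fake read matches."""
    rng = rng or random.Random()
    height, width = len(image), len(image[0])
    reads = []
    for y, row in enumerate(image):
        # Assumption: the further down the image, the lower the bit score.
        base_score = max_score * (1 - y / height)
        for run in black_runs(row):
            span = scale_run(run, width, protein_length)
            for start, end in split_into_reads(span, read_length):
                # Assumption: noise is Gaussian jitter on the bit score.
                score = max(0.0, base_score + rng.gauss(0, noise))
                reads.append({"start": start, "end": end, "bitscore": score})
    return reads
```

Each dictionary returned by `fake_reads` would then need to be written out twice: once as a fake DIAMOND match and once as a FASTQ record. Those output formats are pipeline-specific and are not shown here.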

The files to be injected into the pipeline appear in OUT/json and OUT/fastq.

When the pipeline for the target sample is finished with the 03-diamond-civ-rna and 025-dedup steps:

$ make add

Calls add-data.py to:

- Add the compressed DIAMOND results to the pre-existing 03-diamond-civ-rna output.

- Add the compressed FASTQ to the pre-existing 025-dedup output.

No original data is touched. Only intermediate pipeline outputs are appended to. The original intermediate files are saved.
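As a rough illustration of "save the original, then append", here is a minimal sketch. It is not the code in add-data.py. It assumes the intermediate files are gzip-compressed, which relies on the fact that concatenated gzip members form a valid gzip file; the function name and the `.orig` backup suffix are hypothetical.

```python
import gzip
import shutil
from pathlib import Path


def append_compressed(existing, new_text, backup_suffix=".orig"):
    """Append new_text to a gzip file as an extra gzip member.

    The untouched file is copied aside first (only once), so the original
    intermediate output can always be restored.
    """
    existing = Path(existing)
    backup = existing.with_name(existing.name + backup_suffix)
    if not backup.exists():
        shutil.copy2(existing, backup)
    # Appending a second compressed member leaves the first one intact.
    with open(existing, "ab") as fp:
        fp.write(gzip.compress(new_text.encode("utf-8")))
    return backup
```

Reading the result with `gzip.open(path, "rt")` returns the original content followed by the appended content.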

$ make rerun

Calls rerun-pipeline.py to re-run those two pipeline steps.

The easiest/calmest way to deploy is to edit the 06-stop/stop.sh script for the sample so that it neither removes the slurm-pipeline.running file nor creates slurm-pipeline.done. Instead, make it touch some other file and wait for that file to show up. Then run make add and make rerun. After that, just mv slurm-pipeline.running slurm-pipeline.done and the sample will be considered done by monitor-run.py.
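The waiting and the final rename can also be scripted. The sketch below is an assumption about how one might do that, not part of the repository: the function names, the polling interval, and the marker file name in the usage note are hypothetical. Only slurm-pipeline.running and slurm-pipeline.done come from the description above.

```python
import time
from pathlib import Path


def wait_for_file(path, poll_seconds=30.0, timeout=None):
    """Poll until path exists. Return False if timeout (seconds) expires first."""
    path = Path(path)
    deadline = None if timeout is None else time.monotonic() + timeout
    while not path.exists():
        if deadline is not None and time.monotonic() >= deadline:
            return False
        time.sleep(poll_seconds)
    return True


def mark_done(sample_dir):
    """Equivalent of: mv slurm-pipeline.running slurm-pipeline.done"""
    sample_dir = Path(sample_dir)
    running = sample_dir / "slurm-pipeline.running"
    done = sample_dir / "slurm-pipeline.done"
    running.rename(done)
    return done
```

A typical sequence would be `wait_for_file(sample_dir / "ready-for-injection")` (a made-up marker name that the edited stop.sh would touch), then make add and make rerun, then `mark_done(sample_dir)` once the re-run steps have finished.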

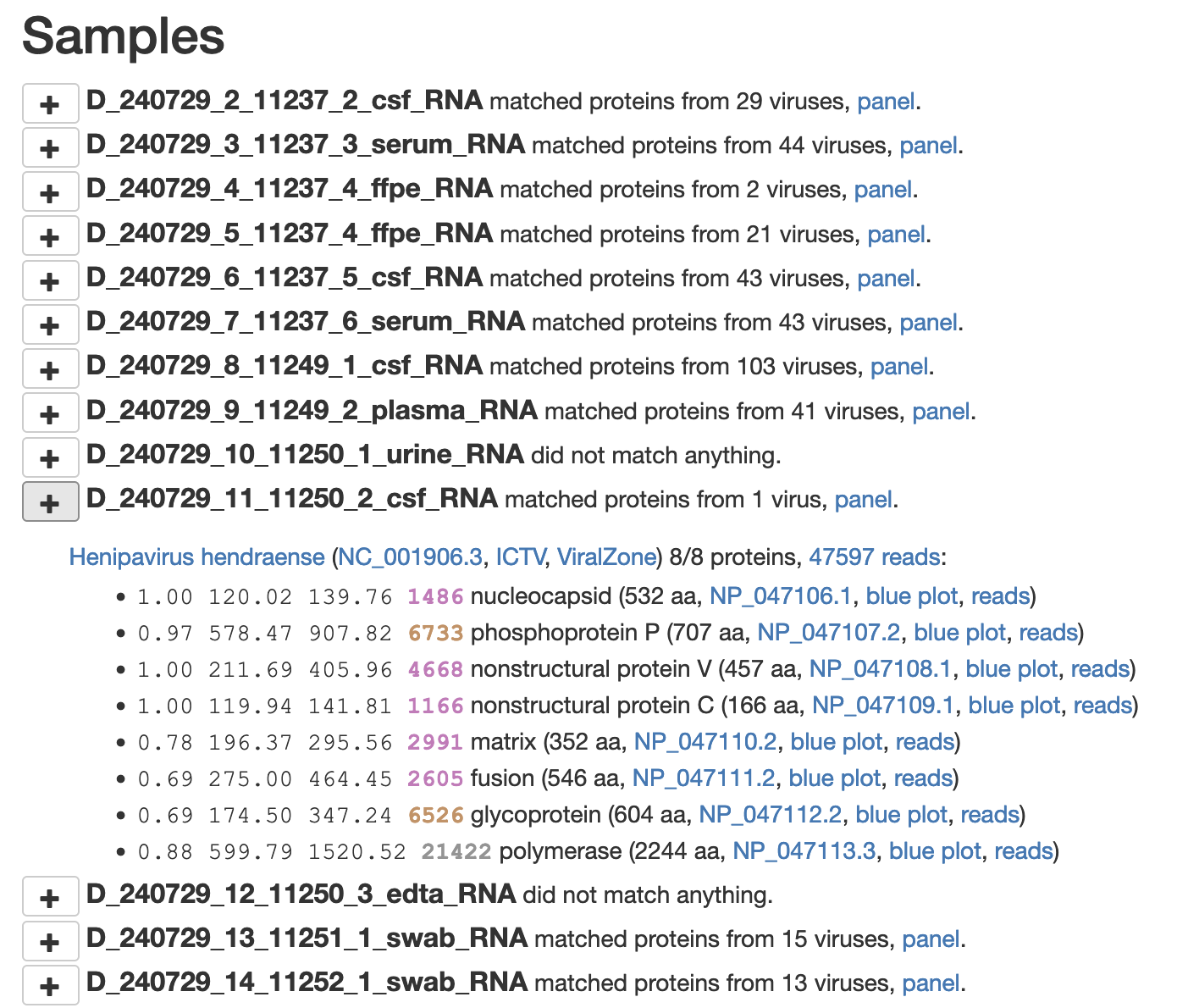

The pipeline results look like this:

with "blue plots" like this