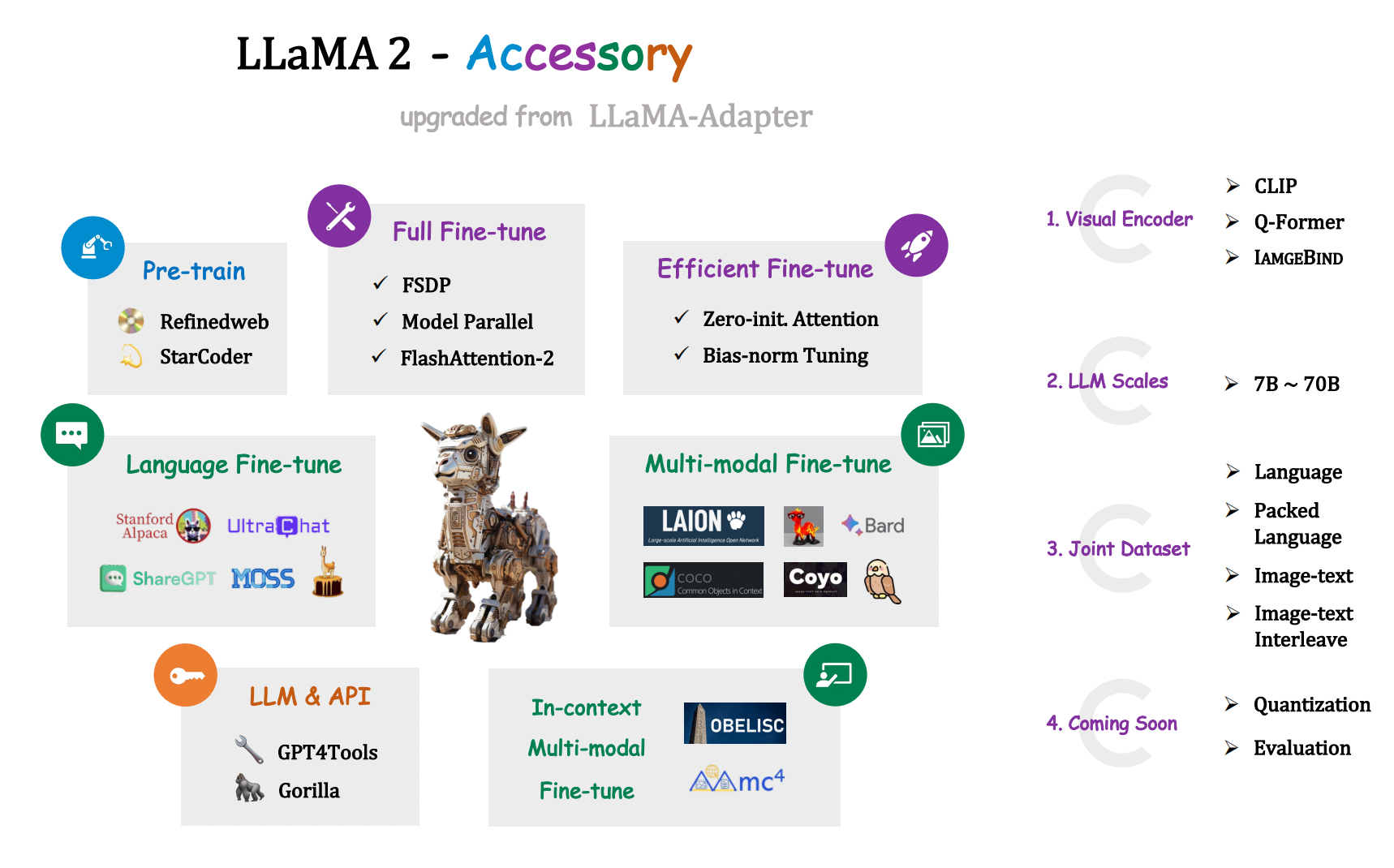

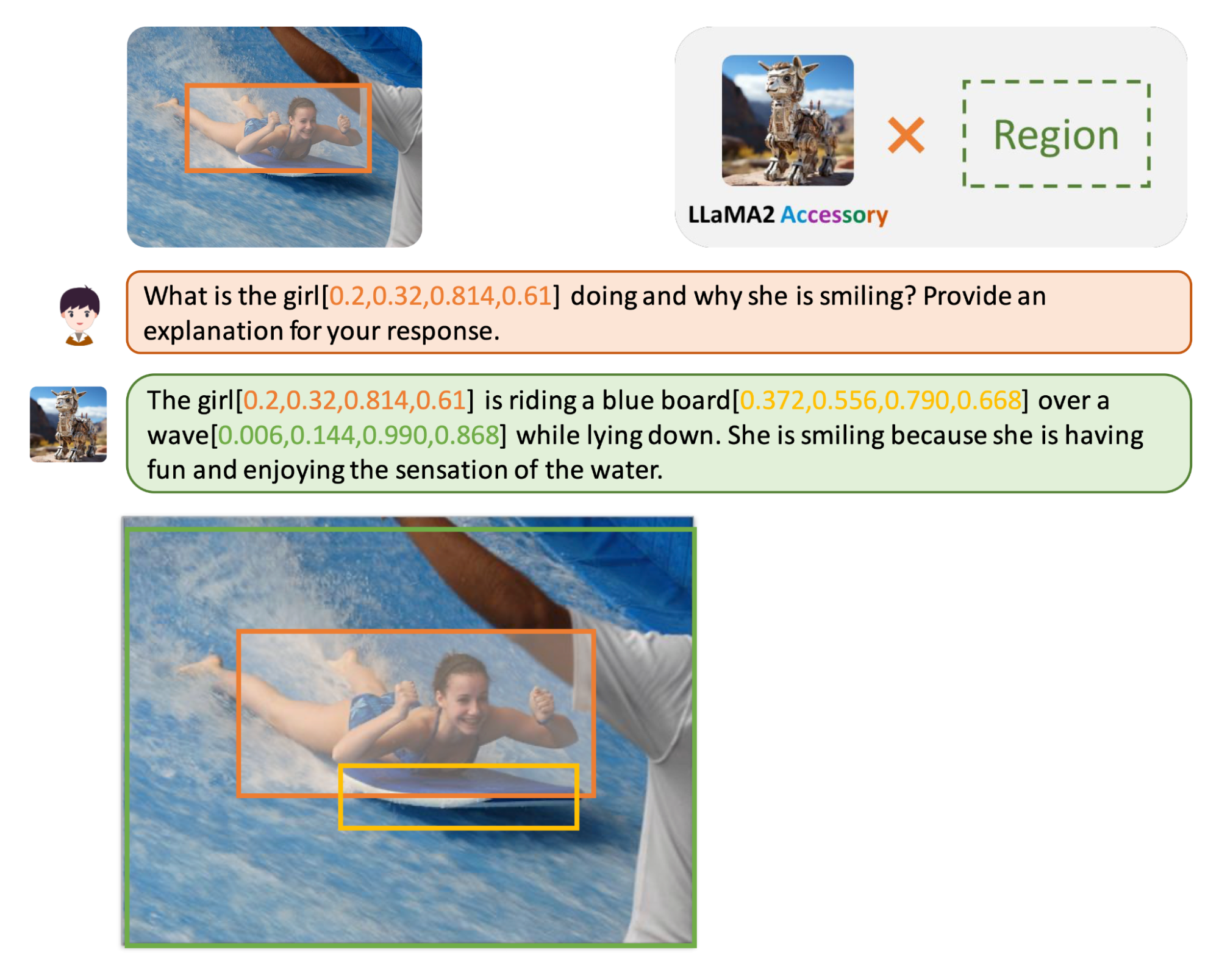

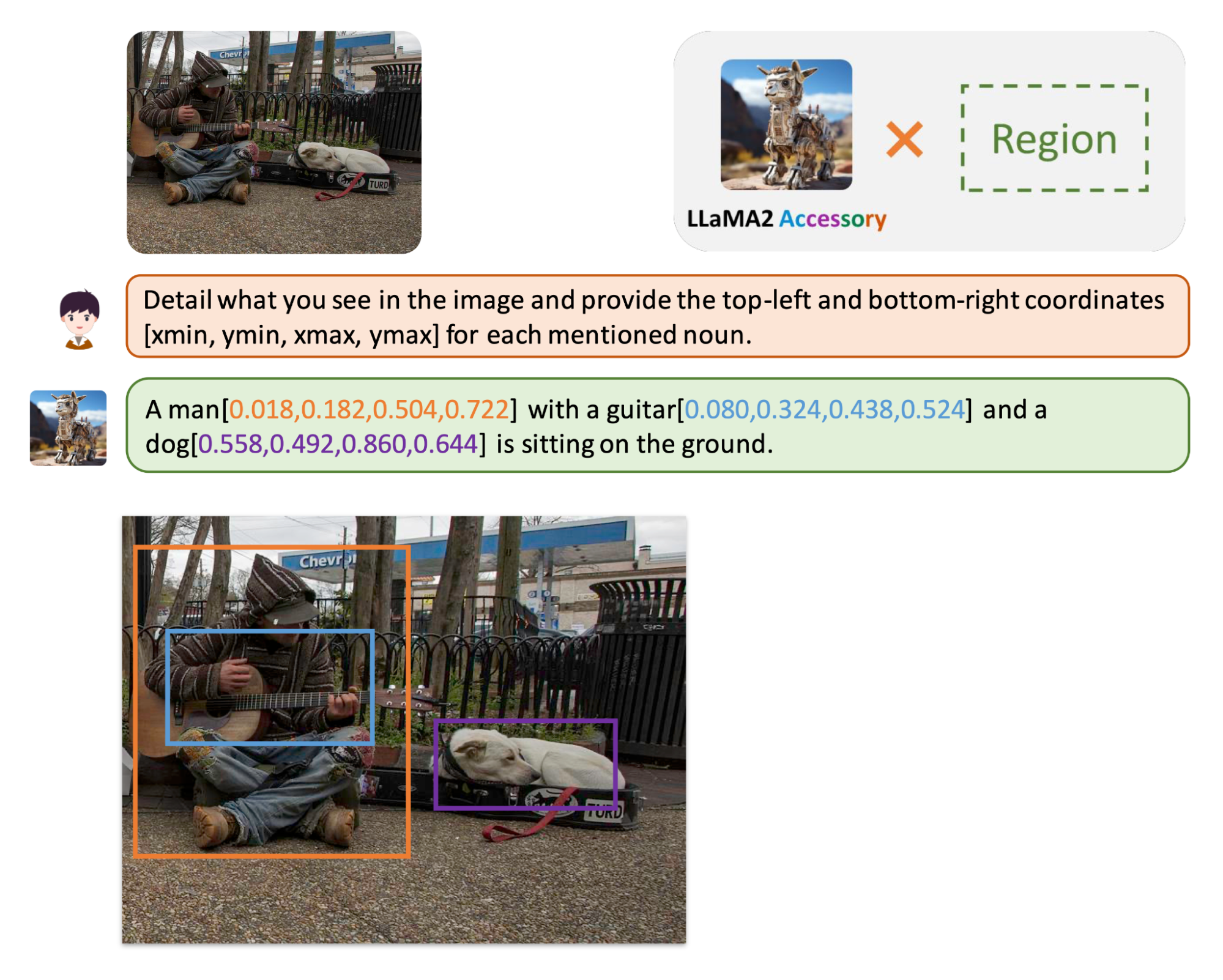

🚀LLaMA2-Accessory is an open-source toolkit for pre-training, fine-tuning and deploying Large Language Models (LLMs) and multimodal LLMs. The repo largely builds on LLaMA-Adapter and adds more advanced features.🧠

- [2023.08.05] We released the multimodal fine-tuning code and checkpoints🔥🔥🔥

- [2023.07.23] Initial release 📌

💡Support More Datasets and Tasks

- 🎯 Pre-training with RefinedWeb and StarCoder.

- 📚 Single-modal fine-tuning with Alpaca, ShareGPT, LIMA, WizardLM, UltraChat and MOSS.

- 🌈 Multi-modal fine-tuning with image-text pairs (LAION, COYO and more), interleaved image-text data (MMC4 and OBELICS) and visual instruction data (LLaVA, Shikra, Bard).

- 🔧 LLM for API Control (GPT4Tools and Gorilla).
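To illustrate what single-modal instruction data such as Alpaca looks like before fine-tuning, here is a minimal sketch that turns one `{"instruction", "input", "output"}` record into a prompt/target pair. The two templates are the ones published in tatsu-lab/stanford_alpaca; whether LLaMA2-Accessory uses exactly these strings is an assumption, and `build_example` is a hypothetical helper, not part of this repo's API.

```python
from typing import Dict

# Prompt templates as published in tatsu-lab/stanford_alpaca.
# Assumption: LLaMA2-Accessory's own Alpaca pipeline may word these differently.
PROMPT_WITH_INPUT = (
    "Below is an instruction that describes a task, paired with an input that provides further context. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Input:\n{input}\n\n### Response:"
)
PROMPT_NO_INPUT = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:"
)


def build_example(record: Dict[str, str]) -> Dict[str, str]:
    """Hypothetical helper: turn one Alpaca-style record into a (prompt, target) pair.

    During supervised fine-tuning the prompt tokens are typically masked out of
    the loss, so only the target (the response) is learned.
    """
    # An empty or missing "input" field selects the shorter template.
    template = PROMPT_WITH_INPUT if record.get("input") else PROMPT_NO_INPUT
    # str.format ignores the unused "input" keyword for the no-input template.
    prompt = template.format(instruction=record["instruction"], input=record.get("input", ""))
    return {"prompt": prompt, "target": record["output"]}
```

For example, `build_example({"instruction": "Translate to English", "input": "bonjour", "output": "hello"})` yields a prompt containing an `### Input:` section and the target `"hello"`.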

⚡Efficient Optimization and Deployment

- 🚝 Parameter-efficient fine-tuning with Zero-init Attention and Bias-norm Tuning.

- 💻 Fully Sharded Data Parallel (FSDP), Flash Attention 2 and QLoRA.
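The idea shared by Zero-init Attention and bias tuning is that the trainable parts start out as a no-op, so fine-tuning begins from exactly the frozen model's behaviour. The sketch below shows this in plain Python for a single query and a single head, following our reading of the LLaMA-Adapter papers: the adapter prompts get their own softmax and are scaled by a learnable gate initialised to 0, and a frozen linear layer `W` is wrapped as `s * (W x + b)` with `b` initialised to 0 and `s` to 1. Passing the gate through `tanh`, and all function names here, are assumptions for illustration; the real implementation works on batched tensors inside each attention layer.

```python
import math
from typing import List, Sequence

Vector = List[float]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def softmax(scores: Sequence[float]) -> Vector:
    # Subtract the max for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_sum(probs: Sequence[float], values: Sequence[Sequence[float]]) -> Vector:
    out = [0.0] * len(values[0])
    for p, v in zip(probs, values):
        for i, vi in enumerate(v):
            out[i] += p * vi
    return out


def zero_init_attention(query, word_keys, word_values, prompt_keys, prompt_values, gate):
    """Hypothetical sketch: frozen word attention plus a gated adapter-prompt branch."""
    scale = 1.0 / math.sqrt(len(query))
    # Frozen pathway: ordinary softmax attention over the word tokens.
    word_out = weighted_sum(softmax([dot(query, k) * scale for k in word_keys]), word_values)
    # Adapter pathway: a separate softmax over the learnable prompts.
    prompt_out = weighted_sum(softmax([dot(query, k) * scale for k in prompt_keys]), prompt_values)
    # Assumption: the gate is squashed with tanh; gate = 0 disables the branch.
    g = math.tanh(gate)
    return [w + g * p for w, p in zip(word_out, prompt_out)]


def bias_scale_linear(weight, x, bias, scale):
    """Hypothetical sketch: frozen W with learnable bias b (init 0) and scale s (init 1)."""
    return [s * (dot(row, x) + b) for row, b, s in zip(weight, bias, scale)]
```

With `gate = 0.0`, `bias = [0.0, ...]` and `scale = [1.0, ...]`, both functions return exactly what the frozen model would; training then moves these few parameters away from their neutral values.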

🏋️‍♀️Support More Visual Encoders and LLMs

⚙️ For environment installation, please refer to docs/install.md.

🤖 Instructions for pre-training, inference, and fine-tuning are available in docs/pretrain.md, docs/inference.md, and docs/finetune.md, respectively.

❓ Encountering issues or have further questions? Answers to common questions are collected in the project FAQ. We're here to assist you!

- Instruction-tuned LLaMA2: alpaca & gorilla.

- Chatbot LLaMA2: dialog_sharegpt & dialog_lima & llama2-chat.

- Multimodal LLaMA2: in-context & alpacaLlava_llamaQformerv2_13b.

Chris Liu, Ziyi Lin, Guian Fang, Jiaming Han, Yijiang Liu, Renrui Zhang

Peng Gao, Wenqi Shao, Shanghang Zhang

🔥 We are hiring interns, postdocs, and full-time researchers at the General Vision Group, Shanghai AI Lab, with a focus on multi-modality and vision foundation models. If you are interested, please contact gaopengcuhk@gmail.com.

If you find our code and paper useful, please kindly cite:

@article{zhang2023llamaadapter,
  title={LLaMA-Adapter: Efficient Fine-tuning of Language Models with Zero-init Attention},
  author={Zhang, Renrui and Han, Jiaming and Liu, Chris and Gao, Peng and Zhou, Aojun and Hu, Xiangfei and Yan, Shilin and Lu, Pan and Li, Hongsheng and Qiao, Yu},
  journal={arXiv preprint arXiv:2303.16199},
  year={2023}
}

@article{gao2023llamaadapterv2,
  title={LLaMA-Adapter V2: Parameter-Efficient Visual Instruction Model},
  author={Gao, Peng and Han, Jiaming and Zhang, Renrui and Lin, Ziyi and Geng, Shijie and Zhou, Aojun and Zhang, Wei and Lu, Pan and He, Conghui and Yue, Xiangyu and Li, Hongsheng and Qiao, Yu},
  journal={arXiv preprint arXiv:2304.15010},
  year={2023}
}

- @facebookresearch for ImageBind & LIMA

- @Instruction-Tuning-with-GPT-4 for GPT-4-LLM

- @tatsu-lab for stanford_alpaca

- @tloen for alpaca-lora

- @lm-sys for FastChat

- @domeccleston for sharegpt

- @karpathy for nanoGPT

- @Dao-AILab for flash-attention

- @NVIDIA for apex & Megatron-LM

- @Vision-CAIR for MiniGPT-4

- @haotian-liu for LLaVA

- @huggingface for peft & OBELICS

- @Lightning-AI for lit-gpt & lit-llama

- @allenai for mmc4

- @StevenGrove for GPT4Tools

- @ShishirPatil for gorilla

- @OpenLMLab for MOSS

- @thunlp for UltraChat

- @LAION-AI for LAION-5B

- @shikras for shikra

- @kakaobrain for coyo-dataset

- @salesforce for LAVIS

- @openai for CLIP

- @bigcode-project for starcoder

- @tiiuae for falcon-refinedweb

- @microsoft for DeepSpeed

- @declare-lab for flacuna

- @nlpxucan for WizardLM

- @Google for Bard

Llama 2 is licensed under the LLAMA 2 Community License, Copyright (c) Meta Platforms, Inc. All Rights Reserved.