Self-Driving Car Engineer Nanodegree Program

When we drive, we use our eyes to decide where to go. The lines on the road that show us where the lanes are act as our constant reference for where to steer the vehicle. Naturally, one of the first things we would like to do in developing a self-driving car is to automatically detect lane lines using an algorithm.

The goal of this project is to make a pipeline that finds lane lines on the road. In the implementation we detect lane lines in images using Python and OpenCV.

Below you will find a summary of the essential details about the repository, files, installation and required dependencies. The project contains the following files, which together make up the whole pipeline of the implementation:

- P1.ipynb: Jupyter notebook with the code that implements simple lane line tracking

- P1.html: HTML export of the Jupyter notebook

- test_video_output/solidWhiteRight.mp4 and test_video_output/solidYellowLeft.mp4: videos showing the tracking of lane lines on a highway

- test_images: test images used during implementation

- test_videos/: folder containing the raw videos in which the lines were tracked

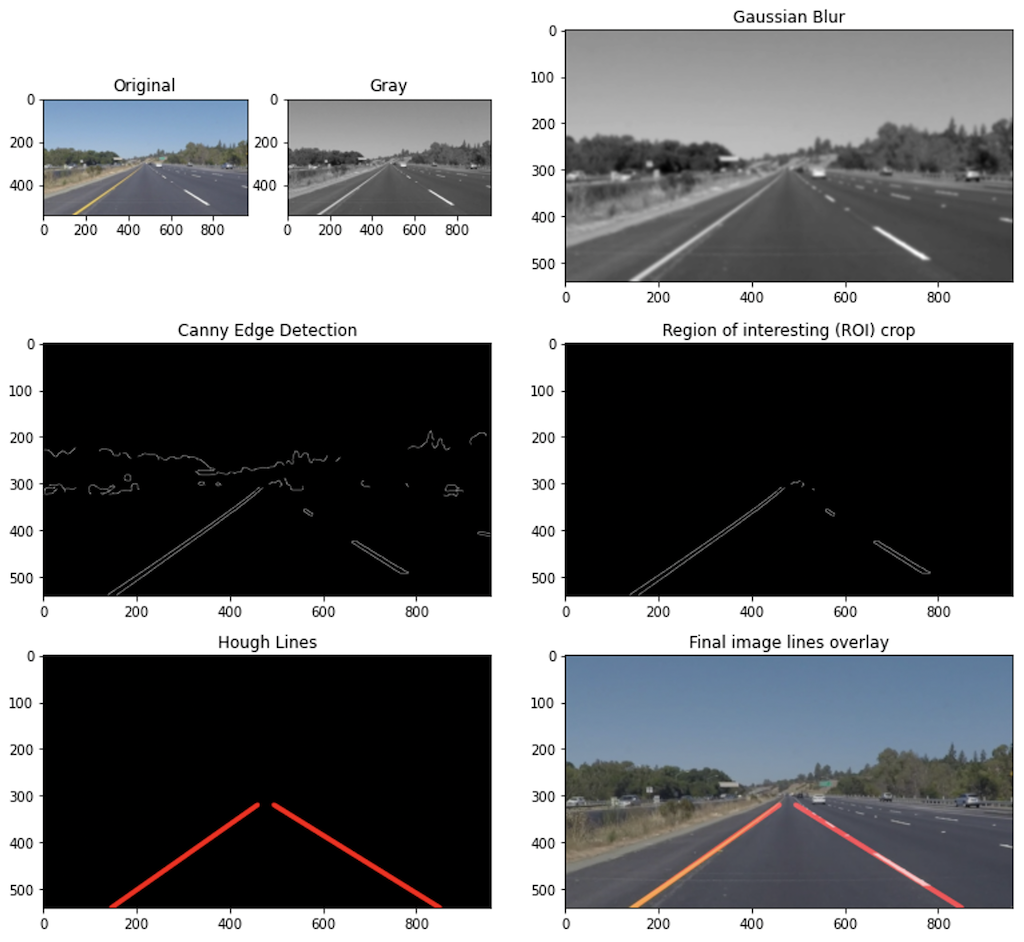

The pipeline consists of the following steps.

- Convert the input image/video to grayscale

- Apply Gaussian blur with a kernel size of 15

- Apply Canny edge detection to find relevant edges in the image

- Crop the image to a given region of interest (ROI)

- Apply a Hough transform to find significant line segments in the image

- Overlay the detected lines onto the original RGB image/video

In order to draw a single line on the left and right lanes, I modified the draw_lines() function to first calculate the slope of each incoming line segment. If the slope is positive, the segment belongs to the right lane line; if it is negative, it belongs to the left lane line (the image y axis points downward). The individual (x,y) points (vertices) were then sorted into two arrays. Two first-order polynomials were fitted to the right and left points using np.polyfit(x,y,1). Finally, the two lines were drawn within the limits of the image boundary.
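The slope split and line fit described above might look roughly like the following. This is a hedged sketch rather than the author's draw_lines(): the helper name fit_lane_lines, the (x1, y1, x2, y2) segment layout (what cv2.HoughLinesP returns once reshaped) and the y_bottom/y_top rows that bound the drawn lines are assumptions. Vertical segments are skipped because their slope is undefined.

```python
import numpy as np


def fit_lane_lines(segments, y_bottom, y_top):
    """Hypothetical helper: split segments by slope and fit one line per lane.

    segments: array-like of shape (N, 4) with rows (x1, y1, x2, y2) in image
    coordinates (y grows downward). Returns a dict mapping "left"/"right" to
    ((x_bottom, y_bottom), (x_top, y_top)), or None if that side has no fit.
    """
    left_x, left_y, right_x, right_y = [], [], [], []
    for x1, y1, x2, y2 in np.asarray(segments, dtype=float).reshape(-1, 4):
        if x2 == x1:
            continue  # vertical segment: slope undefined, skip it
        slope = (y2 - y1) / (x2 - x1)
        # Positive slope -> right lane line, negative slope -> left lane line.
        if slope > 0:
            right_x += [x1, x2]
            right_y += [y1, y2]
        elif slope < 0:
            left_x += [x1, x2]
            left_y += [y1, y2]

    result = {}
    for side, xs, ys in (("left", left_x, left_y), ("right", right_x, right_y)):
        if len(xs) < 2:
            result[side] = None
            continue
        # First-order fit y = m*x + b, i.e. np.polyfit(x, y, 1).
        m, b = np.polyfit(xs, ys, 1)
        if abs(m) < 1e-9:
            result[side] = None  # near-horizontal fit: cannot solve for x
            continue
        # Invert to x = (y - b) / m to get endpoints on the chosen rows.
        x_bottom = (y_bottom - b) / m
        x_top = (y_top - b) / m
        result[side] = ((int(round(x_bottom)), y_bottom), (int(round(x_top)), y_top))
    return result
```

The returned endpoints can then be drawn with cv2.line, for example with y_bottom set to the image height and y_top set to the top edge of the region of interest.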

One potential shortcoming is the sensitivity to changing lighting conditions, shadows and colors. Additionally, tracking smaller line segments makes the final line detection in those areas very erratic and less reliable.

A possible improvement would be to use different color spaces to increase the stability of tracking under varying lighting conditions. The instability of the final line detection from draw_lines() could perhaps be reduced by better fitting or by averaging the detected points.
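For video, one way to do the averaging suggested above is an exponential moving average of each lane line's endpoints across frames. This is a sketch of that idea under assumptions: the LaneSmoother class and its alpha smoothing factor are hypothetical and not part of the project.

```python
import numpy as np


class LaneSmoother:
    """Hypothetical helper: exponential moving average of one lane line's endpoints."""

    def __init__(self, alpha=0.2):
        # alpha (assumed default) weights the newest frame; 1.0 means no smoothing.
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.state = None

    def update(self, endpoints):
        """endpoints: (x_bottom, y_bottom, x_top, y_top) for this frame, or None.

        Returns the smoothed endpoints as a float array, or None if nothing seen yet.
        """
        if endpoints is not None:
            new = np.asarray(endpoints, dtype=float)
            if self.state is None:
                self.state = new  # the first detection initialises the average
            else:
                self.state = self.alpha * new + (1.0 - self.alpha) * self.state
        # With no detection in this frame, the previous estimate is reused.
        return None if self.state is None else self.state.copy()
```

Reusing the previous estimate when a frame has no detection keeps the overlay from flickering; a smaller alpha gives a steadier but slower-reacting line.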

Simon Bøgh