This repo is for beginners who want to test Nvidia's trt_pose pose estimation.

To get started with trt_pose, follow the steps below. If you run into any issues, please let us know.

Follow the installation instructions in NVIDIA's official trt_pose repository and in torch2trt, an easy-to-use PyTorch to TensorRT converter.

Move the pretrained models into the tasks/human_pose/pretrained directory.
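If the weights were downloaded somewhere else, the move can be scripted. The sketch below is only an illustration: `move_weights` is a hypothetical helper, and the default target path assumes you run it from the directory that contains your trt_pose checkout.

```python
import shutil
from pathlib import Path


def move_weights(download_dir, pretrained_dir="trt_pose/tasks/human_pose/pretrained"):
    """Move every .pth file from download_dir into pretrained_dir.

    Hypothetical helper: both directory arguments are assumptions about
    your local layout, not paths required by trt_pose itself.
    """
    target = Path(pretrained_dir)
    target.mkdir(parents=True, exist_ok=True)  # create the directory if missing
    moved = []
    for weights in sorted(Path(download_dir).glob("*.pth")):
        destination = target / weights.name
        shutil.move(str(weights), str(destination))
        moved.append(destination)
    return moved
```

Calling `move_weights("/path/to/downloads")` returns the list of new file locations, so you can confirm which models were picked up.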

If you have problems installing torch2trt, you can skip this step (the TensorRT conversion).

cd trt_pose/tasks/human_pose

python convert_trt.py --model pretrained/densenet121_baseline_att_256x256_B_epoch_160.pth --json human_pose.json --size 256

You can find the input image size in the name of your model file (256x256 in this example).
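To make the size convention concrete, here is a rough sketch of reading the size from the file name and feeding it into a conversion. It is not the code of `convert_trt.py`, which may differ. The `convert` function follows the torch2trt usage shown in the official trt_pose documentation. The names `input_size_from_name` and `convert` are hypothetical, and the sketch assumes a square input and a DenseNet-121 checkpoint.

```python
import json
import os
import re


def input_size_from_name(model_path):
    """Return the input size encoded as '<W>x<H>' in the file name."""
    name = os.path.basename(model_path)
    match = re.search(r"_(\d+)x(\d+)_", name)
    if match is None:
        raise ValueError(f"no WxH size found in {name!r}")
    width, height = int(match.group(1)), int(match.group(2))
    if width != height:
        raise ValueError("this sketch assumes a square input size")
    return width


def convert(weights_path, json_path, output_path):
    """Convert a DenseNet-121 trt_pose checkpoint to TensorRT (needs a CUDA GPU)."""
    # Heavy imports stay local so the helper above works without a GPU.
    import torch
    import torch2trt
    import trt_pose.models

    # The topology file defines how many keypoints and links the model predicts.
    with open(json_path) as f:
        human_pose = json.load(f)
    num_parts = len(human_pose["keypoints"])
    num_links = len(human_pose["skeleton"])

    size = input_size_from_name(weights_path)
    model = trt_pose.models.densenet121_baseline_att(num_parts, 2 * num_links)
    model = model.cuda().eval()
    model.load_state_dict(torch.load(weights_path))

    # torch2trt traces the model with an example input of the right shape.
    example = torch.zeros((1, 3, size, size)).cuda()
    model_trt = torch2trt.torch2trt(
        model, [example], fp16_mode=True, max_workspace_size=1 << 25
    )
    torch.save(model_trt.state_dict(), output_path)
```

For the model used in this README, `input_size_from_name` yields 256, which matches the `--size 256` argument in the command above.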

There are two ways to test: with an input video or with your webcam.

If you passed step-2, run this:

python inference.py --trt_model pretrained/densenet121_baseline_att_256x256_B_epoch_160_trt.pth --json human_pose.json --size 256 --video_input test.mkvIf you skipped step-2, run this:

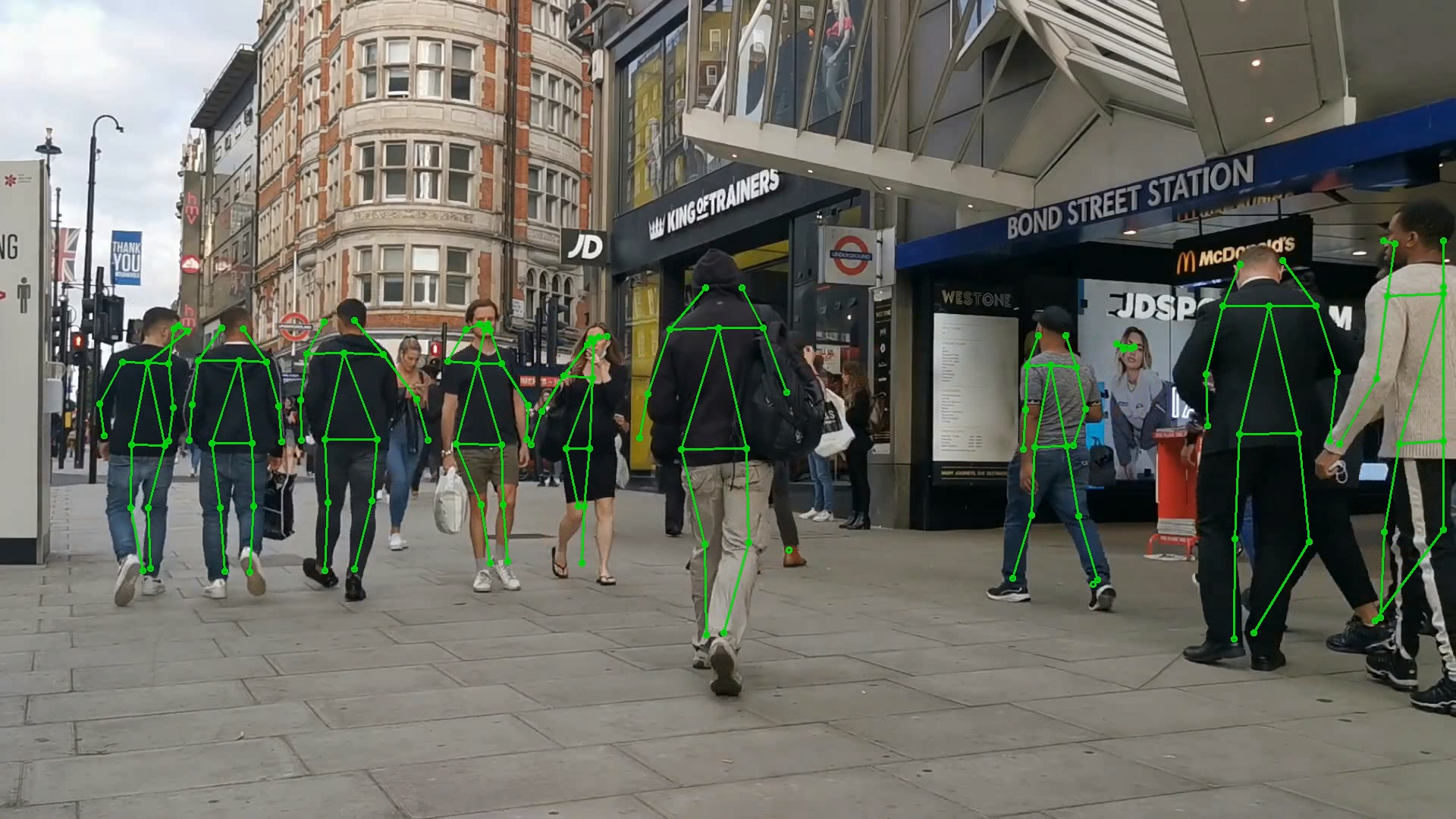

python inference.py --trt_model pretrained/densenet121_baseline_att_256x256_B_epoch_160.pth --json human_pose.json --size 256 --video_input test.mkvAbove Image is tested with video from https://www.youtube.com/watch?v=YzcawvDGe4Y.
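The only difference between the two commands is which weights file is passed. As a small sketch, the choice can be automated by checking whether a converted file exists. The helper `inference_command` is hypothetical. It assumes the converted file sits next to the original with a `_trt` suffix before `.pth`, which is the naming the commands in this README use.

```python
import shlex
from pathlib import Path


def inference_command(weights, json_file="human_pose.json", size=256, video="test.mkv"):
    """Build the inference.py command, preferring the TensorRT weights if present."""
    weights = Path(weights)
    # Assumed naming: foo.pth -> foo_trt.pth, as in the commands in this README.
    trt_weights = weights.with_name(weights.stem + "_trt" + weights.suffix)
    model = trt_weights if trt_weights.exists() else weights
    args = [
        "python", "inference.py",
        "--trt_model", model.as_posix(),
        "--json", json_file,
        "--size", str(size),
        "--video_input", video,
    ]
    # Quote each argument so paths with spaces survive a copy-paste into a shell.
    return " ".join(shlex.quote(arg) for arg in args)
```

Run from `trt_pose/tasks/human_pose`, `print(inference_command("pretrained/densenet121_baseline_att_256x256_B_epoch_160.pth"))` prints whichever of the two commands applies to your setup.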