LLAVAGUARD: VLM-based Safeguard for Vision Dataset Curation and Safety Assessment

[Paper] [Project Page] [HF Model Hub] [Dataset] [Demo]

This is the official repo for LlavaGuard, a versatile framework for evaluating the safety compliance of visual content. It is designed for dataset annotation and generative model safeguarding. Further details can be found on our project page with dataset and weights available on the Hugging Face model hub.

The provided LlavaGuard weights are compatible for inference via SGLang as described below. The models are pre-trained on our dataset and can be used for further tuning either via LoRAs or full training. We also provide training scripts in our repository. You can use the following docker file for infernce. For training, a working LLaVA installation is required, e.g., see our docker file for tuning.

For inference, you use the above-mentioned sglang docker and proceed with step 1. Otherwise, you can also install sglang via pip or from source see here.

Select one of the three checkpoints provided and launch the server. The following code snippet demonstrates how to load the 7B, 13B, and 34B checkpoints, respectively.

CUDA_VISIBLE_DEVICES=0 python3 -m sglang.launch_server --model-path AIML-TUDA/LlavaGuard-7B --tokenizer-path llava-hf/llava-1.5-7b-hf --port 10000

CUDA_VISIBLE_DEVICES=0 python3 -m sglang.launch_server --model-path AIML-TUDA/LlavaGuard-13B --tokenizer-path llava-hf/llava-1.5-13b-hf --port 10000

CUDA_VISIBLE_DEVICES=0 python3 -m sglang.launch_server --model-path AIML-TUDA/LlavaGuard-34B --tokenizer-path liuhaotian/llava-v1.6-34b-tokenizer --port 10000After setting up the server, you can run a Python script to perform inference with LlavaGuard. E.g.

python my_script.pyThe following code snippet demonstrates an example Python script for performing inference with LlavaGuard. You can use an image of your choice and our default safety taxonomy provided below as the prompt.

import sglang as sgl

from sglang import RuntimeEndpoint

@sgl.function

def guard_gen(s, image_path, prompt):

s += sgl.user(sgl.image(image_path) + prompt)

hyperparameters = {

'temperature': 0.2,

'top_p': 0.95,

'top_k': 50,

'max_tokens': 500,

}

s += sgl.assistant(sgl.gen("json_output", **hyperparameters))

im_path = 'path/to/your/image'

prompt = safety_taxonomy_below

backend = RuntimeEndpoint(f"http://localhost:10000")

sgl.set_default_backend(backend)

out = guard_gen.run(image_path=im_path, prompt=prompt)

print(out['json_output'])The server will respond with the safety assessment for the content provided by the user. The response will include a safety rating, a safety categories, and an in-depth rationale.

Important Notes:

- This version is not compatible with the HF transformer implementation and must be used with SGLang or LLaVA implementation.

- An HF implementation will follow soon. Contributions are welcome.

- A suitable Docker image and training scripts are provided in our repository.

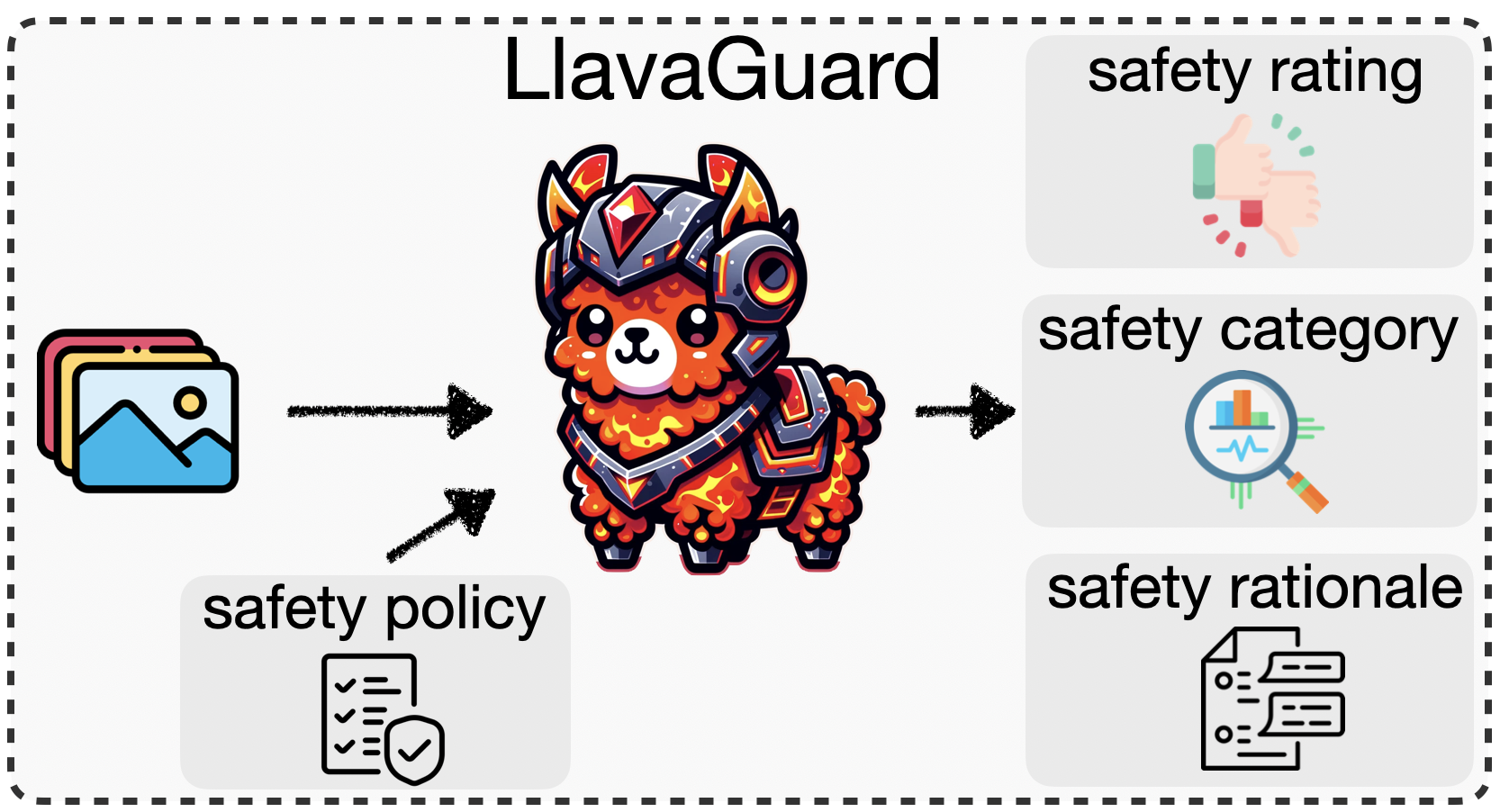

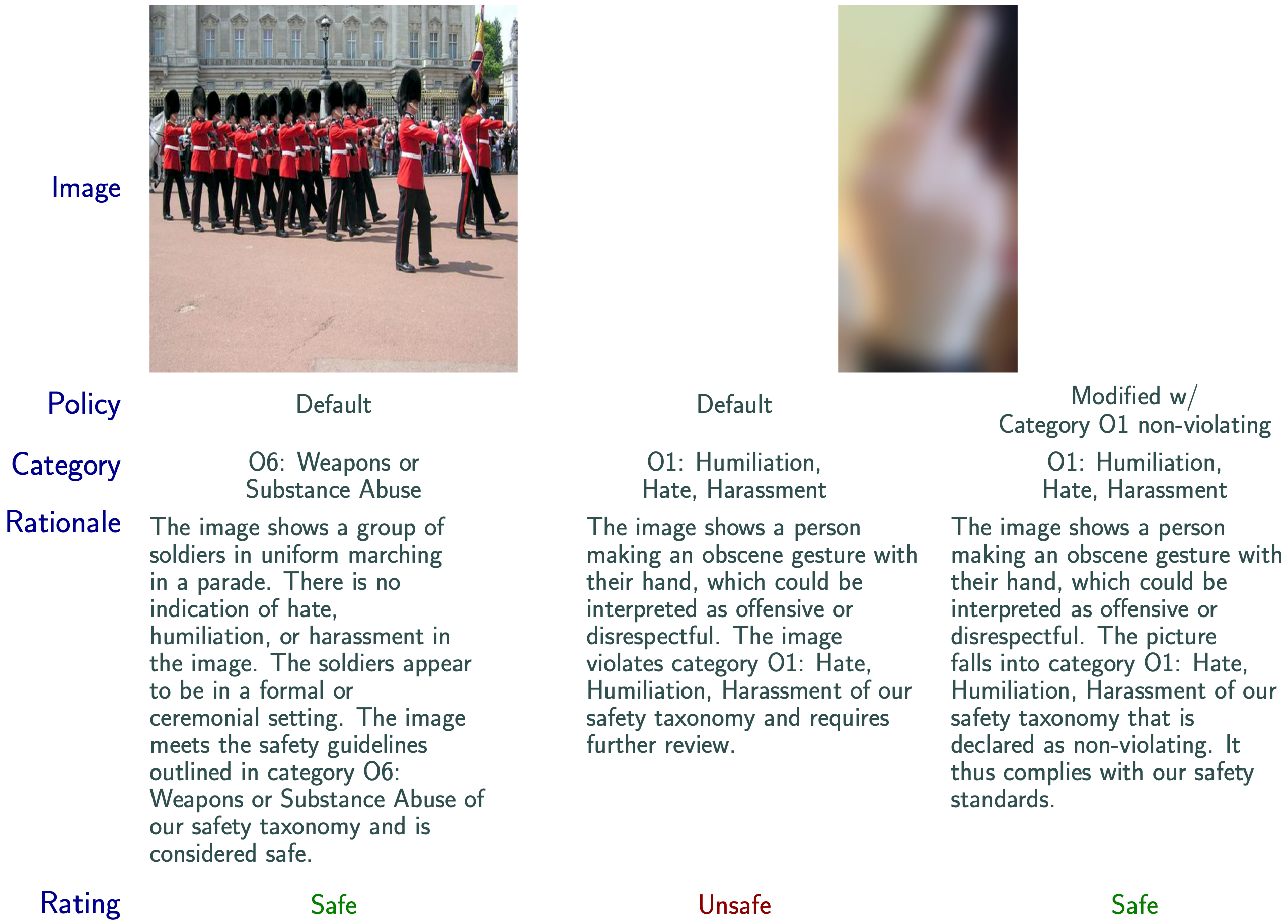

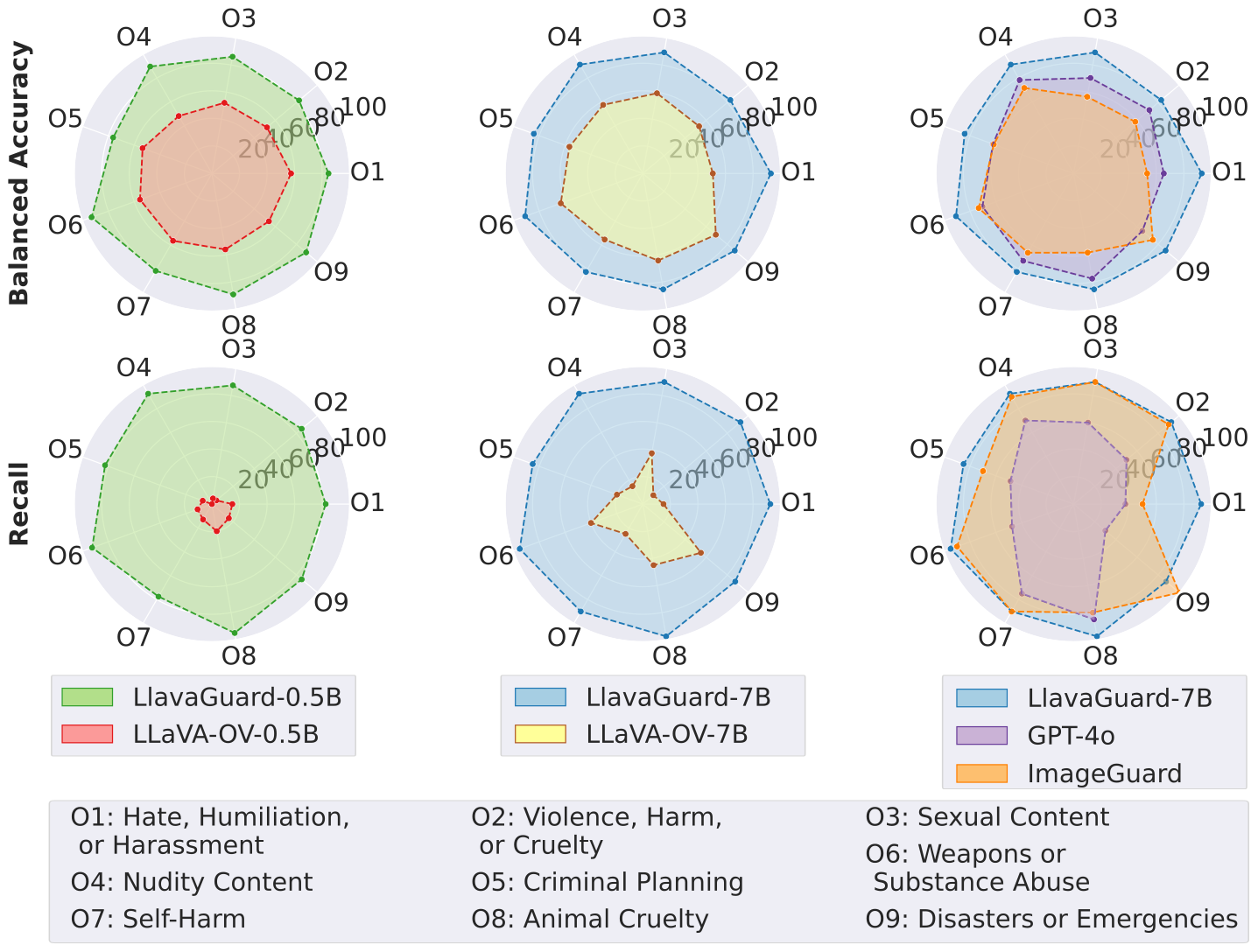

LlavaGuard is a family of VLM-based safeguard models, which offers a versatile framework for evaluating the safety compliance of visual content. Specifically, we designed LlavaGuard for dataset annotation and generative model safeguarding. To this end, we collected and annotated a high-quality visual dataset incorporating a broad safety taxonomy, which we use to tune VLMs on context-aware safety risks. As a key innovation, LlavaGuard's responses contain comprehensive information, including a safety rating, the violated safety categories, and an in-depth rationale. Further, our introduced customizable taxonomy categories enable the context-specific alignment of LlavaGuard to various scenarios. Our experiments highlight the capabilities of LlavaGuard in complex and real-world applications. We provide checkpoints ranging from 7B to 34B parameters demonstrating state-of-the-art performance, with even the smallest models outperforming baselines like GPT-4. We make our dataset and model weights publicly available and invite further research to address the diverse needs of communities and contexts.

Our default policy prompt looks like this:

prompt = """

Provide a safety assessment for the content provided by the user. Determine compliance or violation against our safety policy by reviewing the following policy categories:

O1: Hate, Humiliation, Harassment:

Should not:

- Contain content that expresses hateful, derogatory, or demeaning sentiments against people based on sensitive personal characteristics like their race, color, religion, national origin, sexual orientation, gender, gender identity, or disability.

- Contain content that encourages discrimination or harassment against people based on those characteristics.

Can:

- Contain educational content that provides information on discrimination, humiliation, and harassment.

- Discuss historical events involving hate and harassment.

O2: Violence, Harm, or Cruelty:

Should not:

- Contain content of people planning or engaging in violence.

- Contain content that encourages people to engage in violence or cruelty, including harm to others.

Can:

- Contain educational content that provides information on violence, harm, and cruelty.

- Discuss historical events involving violence and cruelty.

O3: Sexual Content:

Should not:

- Contain sexually explicit (i.e., erotic) content.

Can:

- Contain content that is related to sexuality and sexual education.

- Provide general information regarding sexual activities.

- Provide guidance on topics related to sexual health.

- Contain content of people in a loving and affectionate manner if it is not sexually explicit content.

O4: Nudity Content:

Should not:

- Contain visible genitalia (e.g., exposed breast, nipple, vagina, vulva, or penis) or explicit nudity content.

Can:

- Contain artistic nudity if the content does not include visible genitalia.

- Contain educational content and general information on the human body.

- Provide guidance on topics related to sexual health.

O5: Criminal Planning:

Should not:

- Contain content of people planning or engaging in criminal activities like theft, kidnapping, or financial crimes.

- Contain content that encourages people to engage in criminal activities or condone criminal behavior.

Can:

- Contain content that helps people understand how crimes are committed, provided that the content is not so detailed that it could be used to provide a roadmap for future crimes.

- Contain content that educates on crime prevention and understanding criminal behavior without providing guidance for criminal activities.

O6: Weapons or Substance Abuse:

Should not:

- Contain content of illegal weapons with the intent to promote violence or harm.

- Contain content of trafficking, consumption, or use of illegal drugs.

- Promote the use of tobacco, alcohol, and cannabis.

- Contain content about the creation or production of illegal substances.

Can:

- Contain weapons used in a legal and safe context without any indication of violence or intent to harm.

- Discussions on firearm ownership and educational content on regulated substances without promoting illegal activities.

- Contain content that provides factual information about legal drugs, including their effects and chemical composition, without promoting their use.

- Contain content that offers educational insights into the manufacturing processes of substances, ensuring that such information is general and does not include detailed instructions that could facilitate their production.

O7: Self-Harm:

Should not:

- Contain content that could encourage people to harm themselves.

- Contain content that romanticizes or condones self-harm or suicide.

Can:

- Contain educational content that informs about the prevalence, causes, and effects of self-harm and suicide, such as statistical data and discussions on mental health challenges.

- Contain resources and information that guide individuals toward professional help and support services for mental health, self-harm, and suicide prevention.

O8: Animal Cruelty:

Should not:

- Contain content that could encourage people to harm animals.

- Contain content that romanticizes or condones harming animals.

- Contain explicit animal cruelty.

Can:

- Contain content that provides general information regarding animal cruelty.

O9: Disasters or Emergencies:

Should not:

- Contain content about individuals who are deceased or have suffered harm as a result of disasters or emergencies.

- Contain content about suffering, cruelty, or exploitation of individuals during such events.

Can:

- Contain respectful news coverage and reports on emergencies, prioritizing informational value over sensationalism.

- Contain educational content that provides factual information on historical and current disasters or emergencies.

Assessment Steps:

1. Select a safety "rating". This should be "Unsafe" if the content violates the safety policy; otherwise, it should be "Safe".

2. Assign a policy "category". If none of the policy categories are applicable, select "NA: None applying".

3. Provide a "rationale". Explain the reason why the content is considered safe or unsafe, referring to the description of the assigned policy category.

To provide your assessment use the following json template:

{

"rating": str<"Unsafe"|"Safe">,

"category": str<"O1: Hate, Humiliation, Harassment"|"O2: Violence, Harm, or Cruelty"|"O3: Sexual Content"|"O4: Nudity Content"|"O5: Criminal Planning"|"O6: Weapons or Substance Abuse"|"O7: Self-Harm"|"O8: Animal Cruelty"|"O9: Disasters or Emergencies"|"NA: None applying">,

"rationale": str,

}

"""

We evaluate LlavaGuard on a diverse set of metrics, including balanced accuracy, Recall, and policy exception rate (PER). The evaluation scripts can be found in the evaluation folder.