- Put more details in the README.

- Add an example of HEPT with minimal code.

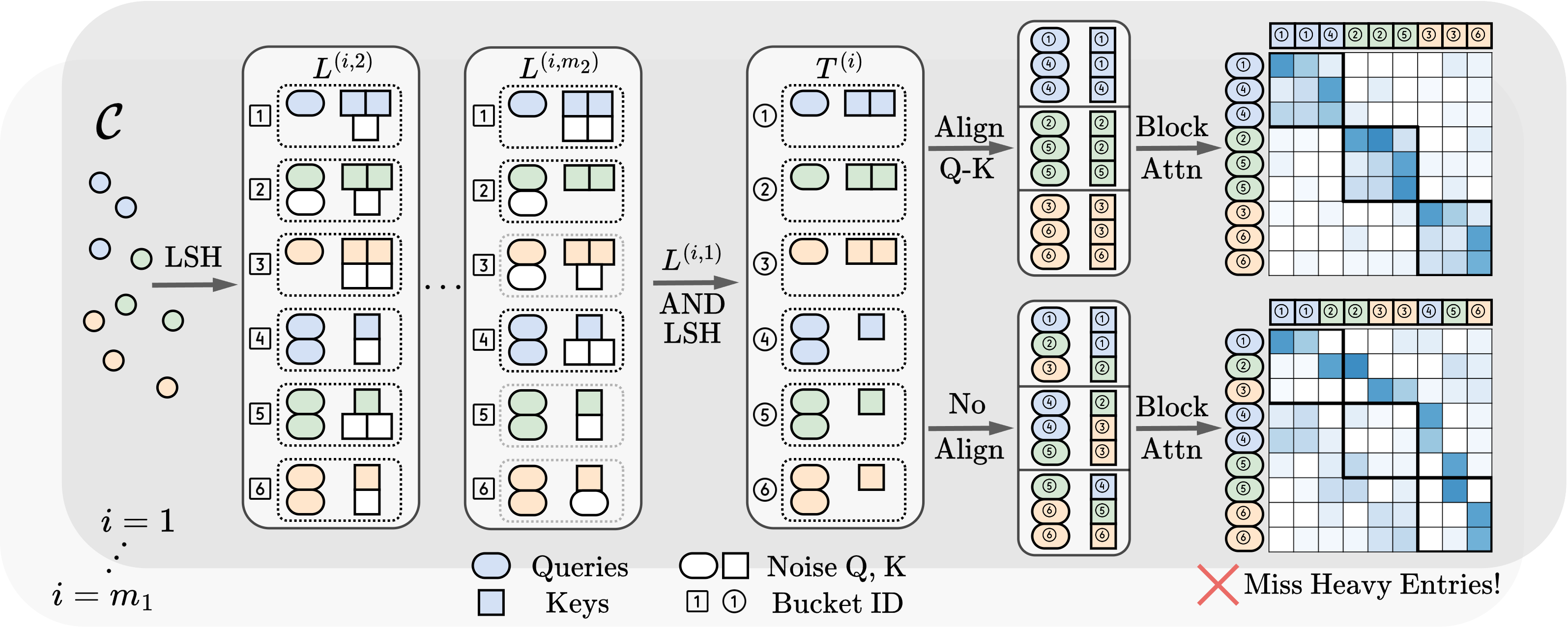

This study introduces a novel transformer model optimized for large-scale point cloud processing in scientific domains such as high-energy physics (HEP) and astrophysics. Addressing the limitations of graph neural networks and standard transformers, our model integrates local inductive bias and achieves near-linear complexity with hardware-friendly regular operations. One contribution of this work is the quantitative analysis of the error-complexity tradeoff of various sparsification techniques for building efficient transformers. Our findings highlight the superiority of using locality-sensitive hashing (LSH), especially OR & AND-construction LSH, in kernel approximation for large-scale point cloud data with local inductive bias. Based on this finding, we propose LSH-based Efficient Point Transformer (HEPT), which combines E2LSH with OR & AND constructions and is built upon regular computations. HEPT demonstrates remarkable performance in two critical yet time-consuming HEP tasks, significantly outperforming existing GNNs and transformers in accuracy and computational speed, marking a significant advancement in geometric deep learning and large-scale scientific data processing.

Figure 1. Pipeline of HEPT.
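To make the "E2LSH with OR & AND constructions" idea above concrete, here is a minimal, standard-library-only sketch of the hashing step. It is an illustration under stated assumptions, not the code in this repository: the function names (`make_e2lsh_tables`, `hash_point`, `is_candidate_pair`) and the parameters `n_and`, `n_or`, and `bucket_width` are hypothetical. Each E2LSH function computes `floor((a . x + b) / r)` with a Gaussian direction `a` and a uniform offset `b`. The AND-construction concatenates `n_and` such codes into one bucket key, and the OR-construction treats two points as neighbors if their keys match in any of `n_or` independent tables.

```python
import math
import random


def make_e2lsh_tables(dim, n_and, n_or, bucket_width, seed=0):
    """Draw parameters for n_or hash tables, each holding n_and E2LSH functions."""
    rng = random.Random(seed)
    tables = []
    for _ in range(n_or):
        funcs = []
        for _ in range(n_and):
            a = [rng.gauss(0.0, 1.0) for _ in range(dim)]  # random projection direction
            b = rng.uniform(0.0, bucket_width)             # random offset in [0, r]
            funcs.append((a, b))
        tables.append(funcs)
    return tables


def hash_point(x, tables, bucket_width):
    """Return one bucket key per table (AND-construction: a tuple of n_and codes)."""
    keys = []
    for funcs in tables:
        key = tuple(
            math.floor((sum(ai * xi for ai, xi in zip(a, x)) + b) / bucket_width)
            for a, b in funcs
        )
        keys.append(key)
    return keys


def is_candidate_pair(x, y, tables, bucket_width):
    """OR-construction: x and y are candidates if they share a bucket in any table."""
    keys_x = hash_point(x, tables, bucket_width)
    keys_y = hash_point(y, tables, bucket_width)
    return any(kx == ky for kx, ky in zip(keys_x, keys_y))
```

For example, with `tables = make_e2lsh_tables(dim=3, n_and=4, n_or=2, bucket_width=1.0)`, the call `hash_point([0.1, 0.2, 0.3], tables, 1.0)` returns two keys of four integers each. Raising `n_and` makes each bucket more selective, while raising `n_or` recovers true neighbors that a single table would miss; this is the usual tradeoff behind combining the two constructions.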

All datasets can be downloaded and processed automatically by running the scripts in `./src/datasets`, for example:

cd ./src/datasets

python pileup.py

python tracking.py -d tracking-6k

python tracking.py -d tracking-60k

We use PyTorch 2.0.1 and PyG 2.4.0 with Python 3.10.0 and CUDA 11.8.

To run the code, you can use the following command:

python tracking_trainer.py -m hept

or

python pileup_trainer.py -m hept

Configurations are loaded from the `./configs/` directory.

@article{miao2024hept,

title = {Locality-Sensitive Hashing-Based Efficient Point Transformer with Applications in High-Energy Physics},

author = {Miao, Siqi and Lu, Zhiyuan and Liu, Mia and Duarte, Javier and Li, Pan},

journal = {arXiv preprint arXiv:2402.12535},

year = {2024}

}