This repo collects papers, docs, and code about model quantization for anyone who wants to do research on it. We are continuously improving the project, and pull requests adding missed works (papers, repositories) are welcome. Special thanks to Yifu Ding, Xudong Ma, Yuxuan Wen, and all researchers who have contributed to this project!

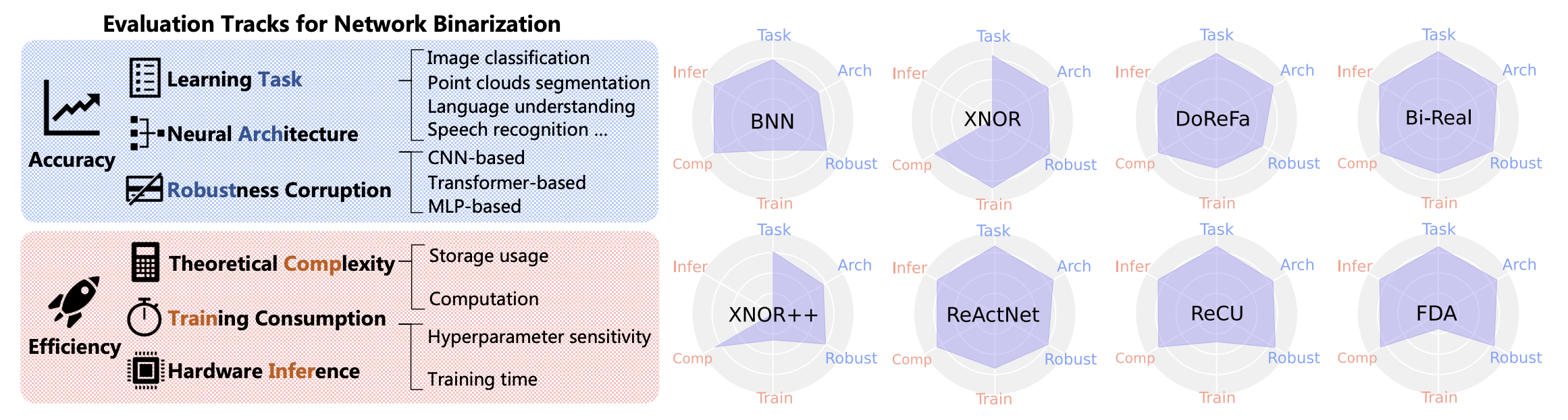

The paper BiBench: Benchmarking and Analyzing Network Binarization (ICML 2023) is a rigorously designed benchmark with in-depth analysis for network binarization. For details, please refer to:

BiBench: Benchmarking and Analyzing Network Binarization [Paper] [Project]

Haotong Qin, Mingyuan Zhang, Yifu Ding, Aoyu Li, Zhongang Cai, Ziwei Liu, Fisher Yu, Xianglong Liu.

Bibtex

@inproceedings{qin2023bibench,

title={BiBench: Benchmarking and Analyzing Network Binarization},

author={Qin, Haotong and Zhang, Mingyuan and Ding, Yifu and Li, Aoyu and Cai, Zhongang and Liu, Ziwei and Yu, Fisher and Liu, Xianglong},

booktitle={International Conference on Machine Learning (ICML)},

year={2023}

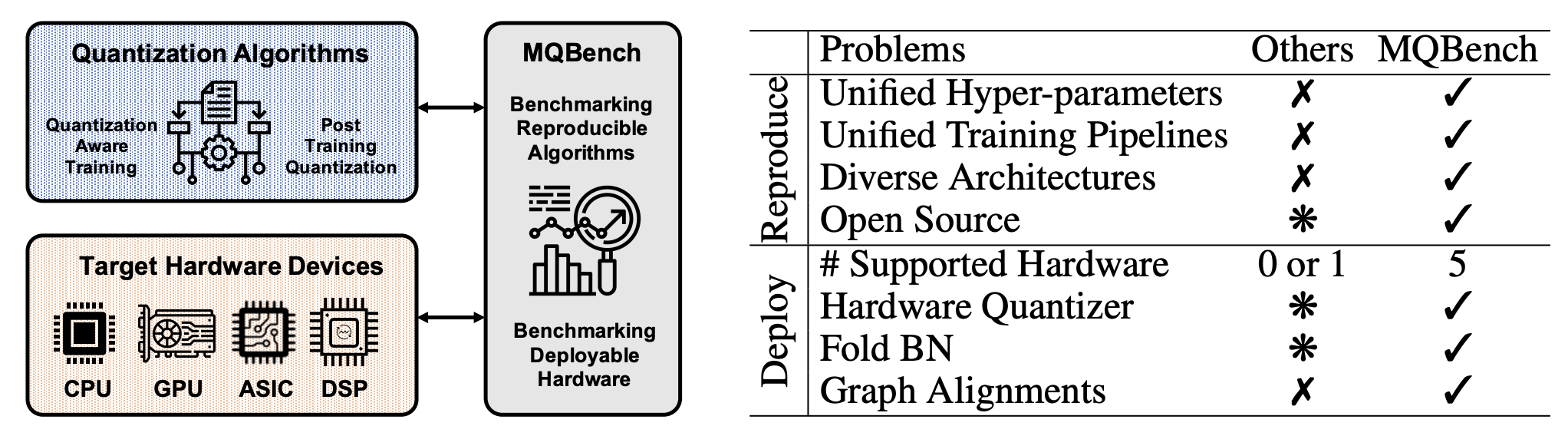

}The paper MQBench: Towards Reproducible and Deployable Model Quantization Benchmark (NeurIPS 2021) is a benchmark and framework for evluating the quantization algorithms under real world hardware deployments. For details, please refer to:

MQBench: Towards Reproducible and Deployable Model Quantization Benchmark [Paper] [Project]

Yuhang Li, Mingzhu Shen, Jian Ma, Yan Ren, Mingxin Zhao, Qi Zhang, Ruihao Gong, Fengwei Yu, Junjie Yan.

Bibtex

@article{2021MQBench,

title = "MQBench: Towards Reproducible and Deployable Model Quantization Benchmark",

author= "Yuhang Li* and Mingzhu Shen* and Jian Ma* and Yan Ren* and Mingxin Zhao* and Qi Zhang* and Ruihao Gong and Fengwei Yu and Junjie Yan",

journal = "https://openreview.net/forum?id=TUplOmF8DsM",

year = "2021"

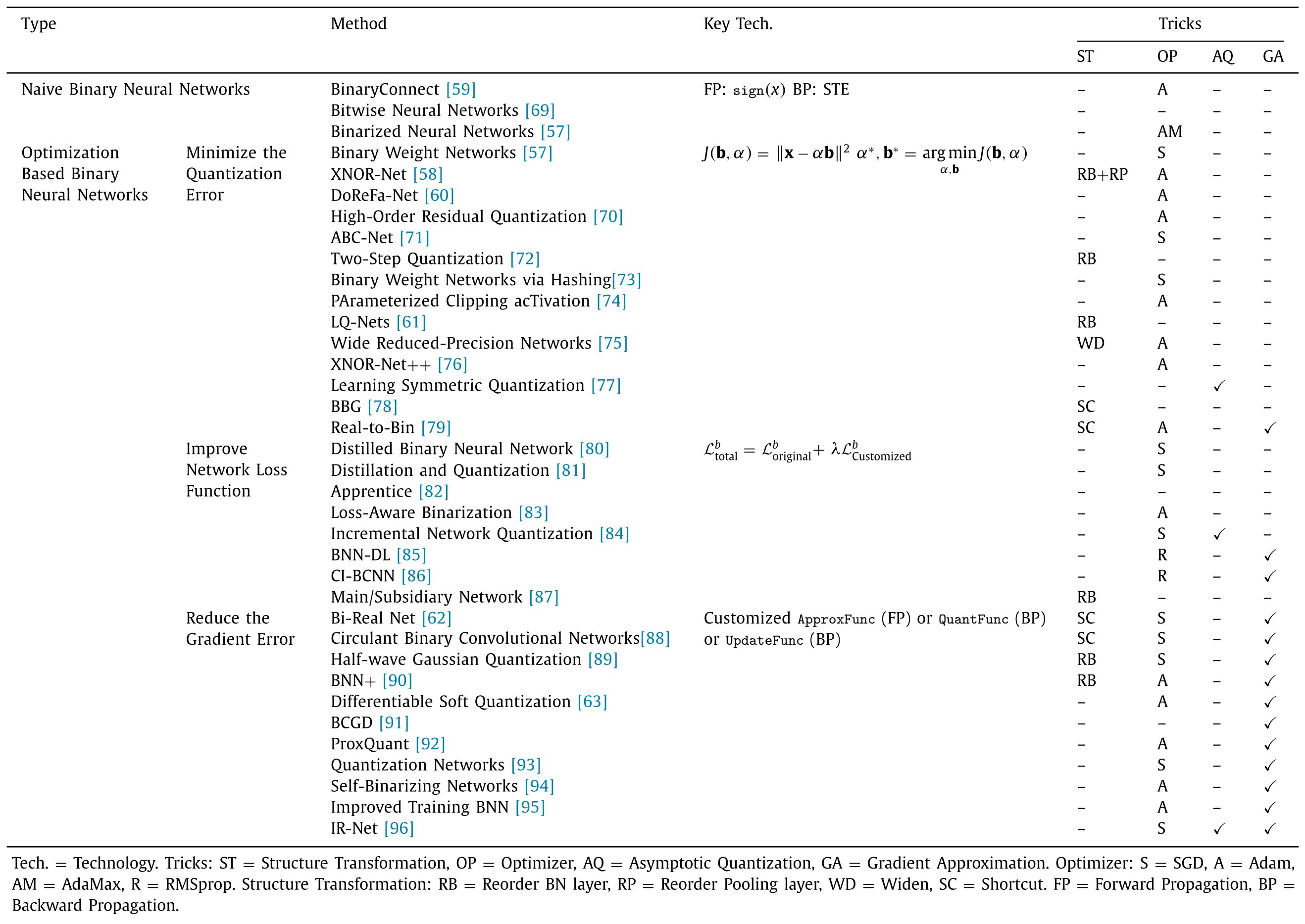

}Our survey paper Binary Neural Networks: A Survey (Pattern Recognition) is a comprehensive survey of recent progress in binary neural networks. For details, please refer to:

Binary Neural Networks: A Survey [Paper] [Blog]

Haotong Qin, Ruihao Gong, Xianglong Liu*, Xiao Bai, Jingkuan Song, and Nicu Sebe.

Bibtex

@article{Qin:pr20_bnn_survey,
  title = "Binary neural networks: A survey",
  author = "Haotong Qin and Ruihao Gong and Xianglong Liu and Xiao Bai and Jingkuan Song and Nicu Sebe",
  journal = "Pattern Recognition",
  volume = "105",
  pages = "107281",
  year = "2020"
}

The survey paper A Survey of Quantization Methods for Efficient Neural Network Inference (arXiv) is a comprehensive survey of recent progress in quantization. For details, please refer to:

A Survey of Quantization Methods for Efficient Neural Network Inference [Paper]

Amir Gholami*, Sehoon Kim*, Zhen Dong*, Zhewei Yao*, Michael W. Mahoney, Kurt Keutzer. (* Equal contribution)

Bibtex

@misc{gholami2021survey,
  title={A Survey of Quantization Methods for Efficient Neural Network Inference},
  author={Amir Gholami and Sehoon Kim and Zhen Dong and Zhewei Yao and Michael W. Mahoney and Kurt Keutzer},
  year={2021},
  eprint={2103.13630},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}

Keywords: qnn: quantized neural networks | bnn: binarized neural networks | hardware: hardware deployment | snn: spiking neural networks | other

Statistics: 🔥 highly cited | ⭐ code is available and has more than 50 stars
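To make the two main keywords concrete, here is a minimal sketch of what "qnn" and "bnn" mean at the tensor level. It is a generic textbook illustration, not the method of any paper in this list, and it assumes NumPy. For qnn it uses asymmetric (affine) uniform quantization with a per-tensor scale and zero point; for bnn it uses sign binarization with a per-tensor scaling factor alpha = mean(|w|) (XNOR-Net uses a per-output-channel version of this factor). The function names `uniform_quantize`, `dequantize`, and `binarize` are hypothetical.

```python
import numpy as np


def uniform_quantize(x: np.ndarray, num_bits: int = 8):
    """Asymmetric (affine) uniform quantization of a tensor to unsigned integers.

    Hypothetical helper: a generic per-tensor scheme, not the method of any
    specific paper listed in this repo.
    """
    qmin, qmax = 0, 2 ** num_bits - 1
    # Extend the range to include 0 so that real zero is exactly representable.
    x_min = min(float(x.min()), 0.0)
    x_max = max(float(x.max()), 0.0)
    # Guard against an all-zero tensor, which would give a zero scale.
    scale = (x_max - x_min) / (qmax - qmin) if x_max > x_min else 1.0
    zero_point = int(np.clip(round(qmin - x_min / scale), qmin, qmax))
    q = np.clip(np.round(x / scale) + zero_point, qmin, qmax).astype(np.int32)
    return q, scale, zero_point


def dequantize(q: np.ndarray, scale: float, zero_point: int) -> np.ndarray:
    """Map integer codes back to approximate real values."""
    return (q.astype(np.float32) - zero_point) * scale


def binarize(w: np.ndarray):
    """Binarize weights to {-1, +1} with a per-tensor scale alpha = mean(|w|).

    Hypothetical helper: the weight tensor is approximated by alpha * b.
    """
    alpha = float(np.abs(w).mean())
    b = np.where(w >= 0, 1.0, -1.0).astype(np.float32)  # sign(w), with sign(0) := +1
    return b, alpha


if __name__ == "__main__":
    w = np.array([-1.0, -0.5, 0.0, 0.5, 1.0], dtype=np.float32)
    q, scale, zp = uniform_quantize(w, num_bits=8)
    print("8-bit codes:", q, "max error:", np.abs(dequantize(q, scale, zp) - w).max())
    b, alpha = binarize(w)
    print("binary weights:", b, "alpha:", alpha)
```

In this sketch the 8-bit codes reconstruct each weight to within half a quantization step, whereas binarization keeps only the sign of each weight plus one shared magnitude; much of the bnn work listed below is about closing the accuracy gap that this much coarser approximation opens.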

- [ICML] BiBench: Benchmarking and Analyzing Network Binarization [bnn] [code]

- [CVPR] Toward Accurate Post-Training Quantization for Image Super Resolution

- [CVPR] One-Shot Model for Mixed-Precision Quantization

- [CVPR] Adaptive Data-Free Quantization

- [CVPR] NoisyQuant: Noisy Bias-Enhanced Post-Training Activation Quantization for Vision Transformers

- [CVPR] Boost Vision Transformer with GPU-Friendly Sparsity and Quantization

- [CVPR] NIPQ: Noise proxy-based Integrated Pseudo-Quantization

- [CVPR] Bit-shrinking: Limiting Instantaneous Sharpness for Improving Post-training Quantization

- [CVPR] Solving Oscillation Problem in Post-Training Quantization Through a Theoretical Perspective

- [CVPR] ABCD: Arbitrary Bitwise Coefficient for De-quantization

- [CVPR] GENIE: Show Me the Data for Quantization

- [TNNLS] BiFSMNv2: Pushing Binary Neural Networks for Keyword Spotting to Real-Network Performance. [bnn] [code]

- [WACV] Collaborative Multi-Teacher Knowledge Distillation for Learning Low Bit-width Deep Neural Networks.

- [PR] Bayesian asymmetric quantized neural networks.

- [Cognitive Neurodynamics] Pruning and quantization algorithm with applications in memristor-based convolutional neural network.

- [MMM] Binary Neural Network for Video Action Recognition. [bnn]

- [arxiv] SmoothQuant: Accurate and Efficient Post-Training Quantization for Large Language Models. [code] [387⭐]

- [arxiv] Post-training Quantization for Neural Networks with Provable Guarantees.

- [arxiv] Q-HyViT: Post-Training Quantization for Hybrid Vision Transformer with Bridge Block Reconstruction.

- [arxiv] EBSR: Enhanced Binary Neural Network for Image Super-Resolution. [bnn]

- [arxiv] Binarizing Sparse Convolutional Networks for Efficient Point Cloud Analysis. [bnn]

- [arxiv] Outlier Suppression+: Accurate quantization of large language models by equivalent and optimal shifting and scaling.

- [arxiv] LUT-GEMM: Quantized Matrix Multiplication based on LUTs for Efficient Inference in Large-Scale Generative Language Models

- [ICLR] GPTQ: Accurate Post-Training Quantization for Generative Pre-trained Transformers [code] [721⭐]

- [arxiv] AWQ: Activation-aware Weight Quantization for LLM Compression and Acceleration [code]

- [arxiv] SpQR: A Sparse-Quantized Representation for Near-Lossless LLM Weight Compression [code]

- [arxiv] QLoRA: Efficient Finetuning of Quantized LLMs [code]

- [arxiv] LLM-QAT: Data-Free Quantization Aware Training for Large Language Models

- [arxiv] Compress, Then Prompt: Improving Accuracy-Efficiency Trade-off of LLM Inference with Transferable Prompt

- [arxiv] SqueezeLLM: Dense-and-Sparse Quantization [code]

- [arxiv] Quantizable Transformers: Removing Outliers by Helping Attention Heads Do Nothing

- [arxiv] Binary domain generalization for sparsifying binary neural networks [bnn]

- [ECCV] Weight Fixing Networks. [qnn] [code]

- [IJCV] Distribution-sensitive Information Retention for Accurate Binary Neural Network. [bnn]

- [ICML] SDQ: Stochastic Differentiable Quantization with Mixed Precision [qnn]

- [ICML] Finding the Task-Optimal Low-Bit Sub-Distribution in Deep Neural Networks [qnn] [hardware]

- [ICML] GACT: Activation Compressed Training for Generic Network Architectures [qnn]

- [ICLR] BiBERT: Accurate Fully Binarized BERT. [bnn] [code]

- [CVPR] It's All In the Teacher: Zero-Shot Quantization Brought Closer to the Teacher. [qnn] [code]

- [CVPR] Nonuniform-to-Uniform Quantization: Towards Accurate Quantization via Generalized Straight-Through Estimation. [qnn] [code] [59⭐]

- [CVPR] Learnable Lookup Table for Neural Network Quantization. [qnn]

- [CVPR] Mr.BiQ: Post-Training Non-Uniform Quantization based on Minimizing the Reconstruction Error. [qnn]

- [CVPR] Data-Free Network Compression via Parametric Non-uniform Mixed Precision Quantization. [qnn]

- [CVPR] Instance-Aware Dynamic Neural Network Quantization. [qnn]

- [NeurIPS] BiT: Robustly Binarized Multi-distilled Transformer. [bnn] [code] [42⭐]

- [NeurIPS] Leveraging Inter-Layer Dependency for Post-Training Quantization. [qnn]

- [NeurIPS] Theoretically Better and Numerically Faster Distributed Optimization with Smoothness-Aware Quantization Techniques. [qnn]

- [NeurIPS] Entropy-Driven Mixed-Precision Quantization for Deep Network Design. [qnn]

- [NeurIPS] Redistribution of Weights and Activations for AdderNet Quantization. [qnn]

- [NeurIPS] FP8 Quantization: The Power of the Exponent. [qnn]

- [NeurIPS] Towards Efficient Post-training Quantization of Pre-trained Language Models. [qnn]

- [NeurIPS] Optimal Brain Compression: A Framework for Accurate Post-Training Quantization and Pruning. [qnn] [hardware]

- [NeurIPS] ZeroQuant: Efficient and Affordable Post-Training Quantization for Large-Scale Transformers. [qnn]

- [NeurIPS] ClimbQ: Class Imbalanced Quantization Enabling Robustness on Efficient Inferences. [qnn]

- [NeurIPS] Q-ViT: Accurate and Fully Quantized Low-bit Vision Transformer. [qnn]

- [ECCV] Non-Uniform Step Size Quantization for Accurate Post-Training Quantization. [qnn]

- [ECCV] PTQ4ViT: Post-Training Quantization for Vision Transformers with Twin Uniform Quantization. [qnn]

- [ECCV] Towards Accurate Network Quantization with Equivalent Smooth Regularizer. [qnn]

- [ECCV] BASQ: Branch-wise Activation-clipping Search Quantization for Sub-4-bit Neural Networks. [qnn]

- [ECCV] RDO-Q: Extremely Fine-Grained Channel-Wise Quantization via Rate-Distortion Optimization. [qnn]

- [ECCV] Mixed-Precision Neural Network Quantization via Learned Layer-Wise Importance. [qnn] [code]

- [ECCV] Symmetry Regularization and Saturating Nonlinearity for Robust Quantization. [qnn]

- [ECCV] Patch Similarity Aware Data-Free Quantization for Vision Transformers. [qnn]

- [IJCAI] BiFSMN: Binary Neural Network for Keyword Spotting. [bnn] [code]

- [IJCAI] RAPQ: Rescuing Accuracy for Power-of-Two Low-bit Post-training Quantization. [qnn]

- [IJCAI] MultiQuant: Training Once for Multi-bit Quantization of Neural Networks. [qnn]

- [IJCAI] FQ-ViT: Post-Training Quantization for Fully Quantized Vision Transformer. [qnn] [code] [71⭐]

- [ICLR] F8Net: Fixed-Point 8-bit Only Multiplication for Network Quantization. [qnn]

- [ICLR] 8-bit Optimizers via Block-wise Quantization. [qnn]

- [ICLR] Toward Efficient Low-Precision Training: Data Format Optimization and Hysteresis Quantization. [qnn]

- [ICLR] Information Bottleneck: Exact Analysis of (Quantized) Neural Networks. [qnn]

- [ICLR] QDrop: Randomly Dropping Quantization for Extremely Low-bit Post-Training Quantization. [qnn]

- [ICLR] SQuant: On-the-Fly Data-Free Quantization via Diagonal Hessian Approximation. [qnn] [code]

- [ICLR] Optimal ANN-SNN Conversion for High-accuracy and Ultra-low-latency Spiking Neural Networks. [snn]

- [ICLR] VC dimension of partially quantized neural networks in the overparametrized regime. [qnn]

- [arxiv] Q-ViT: Fully Differentiable Quantization for Vision Transformer [qnn]

- [arxiv] SmoothQuant: Accurate and Efficient Post-Training Quantization for Large Language Models [qnn] [code] [150⭐]

- [arxiv] Quantune: Post-training Quantization of Convolutional Neural Networks using Extreme Gradient Boosting for Fast Deployment [qnn]

- [IEEE Transactions on Geoscience and Remote Sensing] Accelerating Convolutional Neural Network-Based Hyperspectral Image Classification by Step Activation Quantization [qnn]

- [arxiv] Neural network quantization with ai model efficiency toolkit (aimet).

- [IJNS] Convolutional Neural Networks Quantization with Attention.

- [ACM Trans. Des. Autom. Electron. Syst.] Structured Dynamic Precision for Deep Neural Networks Quantization.

- [MICRO] ANT: Exploiting Adaptive Numerical Data Type for Low-bit Deep Neural Network Quantization.

- [Empirical Software Engineering] DiverGet: a Search-Based Software Testing approach for Deep Neural Network Quantization assessment.

- [TODAES] Dynamic Quantization Range Control for Analog-in-Memory Neural Networks Acceleration.

- [CVPR] BppAttack: Stealthy and Efficient Trojan Attacks against Deep Neural Networks via Image Quantization and Contrastive Adversarial Learning. [torch]

- [IEEE Internet of Things Journal] FedQNN: A Computation–Communication-Efficient Federated Learning Framework for IoT With Low-Bitwidth Neural Network Quantization.

- [FPGA] FILM-QNN: Efficient FPGA Acceleration of Deep Neural Networks with Intra-Layer, Mixed-Precision Quantization.

- [Neural Networks] Quantization-aware training for low precision photonic neural networks.

- [ICCRD] Post Training Quantization after Neural Network.

- [Electronics] A Survey on Efficient Convolutional Neural Networks and Hardware Acceleration.

- [Applied Soft Computing] A neural network compression method based on knowledge-distillation and parameter quantization for the bearing fault diagnosis.

- [CVPR] IntraQ: Learning Synthetic Images With Intra-Class Heterogeneity for Zero-Shot Network Quantization. [torch]

- [Neurocomputing] EPQuant: A Graph Neural Network compression approach based on product quantization.

- [tinyML Research Symposium] Power-of-Two Quantization for Low Bitwidth and Hardware Compliant Neural Networks.

- [arxiv] Sub-8-Bit Quantization Aware Training for 8-Bit Neural Network Accelerator with On-Device Speech Recognition.

- [Ocean Engineering] Neural network based adaptive sliding mode tracking control of autonomous surface vehicles with input quantization and saturation.

- [CVPR] A Low Memory Footprint Quantized Neural Network for Depth Completion of Very Sparse Time-of-Flight Depth Maps.

- [PPoPP] QGTC: accelerating quantized graph neural networks via GPU tensor core.

- [TCSVT] An Efficient Implementation of Convolutional Neural Network With CLIP-Q Quantization on FPGA.

- [EANN] A Robust, Quantization-Aware Training Method for Photonic Neural Networks.

- [arxiv] QONNX: Representing Arbitrary-Precision Quantized Neural Networks.

- [arxiv] Edge Inference with Fully Differentiable Quantized Mixed Precision Neural Networks.

- [ITSM] Edge–Artificial Intelligence-Powered Parking Surveillance With Quantized Neural Networks.

- [Intelligent Automation & Soft Computing] A Resource-Efficient Convolutional Neural Network Accelerator Using Fine-Grained Logarithmic Quantization.

- [ICML] Overcoming Oscillations in Quantization-Aware Training. [torch]

- [CCF Transactions on High Performance Computing] An efficient segmented quantization for graph neural networks.

- [CVPR] Simulated Quantization, Real Power Savings.

- [LNAI] ECQ$^x$: Explainability-Driven Quantization for Low-Bit and Sparse DNNs.

- [TCCN] Low-Bitwidth Convolutional Neural Networks for Wireless Interference Identification.

- [ASE] QVIP: An ILP-based Formal Verification Approach for Quantized Neural Networks.

- [IJCNN] Accuracy Evaluation of Transposed Convolution-Based Quantized Neural Networks.

- [NeurIPS] Outlier Suppression: Pushing the Limit of Low-bit Transformer Language Models. [code]

- [ACL] Compression of Generative Pre-trained Language Models via Quantization

- [NeurIPS] LLM.int8(): 8-bit Matrix Multiplication for Transformers at Scale

- [ICLR] BiPointNet: Binary Neural Network for Point Clouds. [bnn] [torch]

- [ICML] How Do Adam and Training Strategies Help BNNs Optimization? [bnn] [code] [48⭐]

- [ICML] ActNN: Reducing Training Memory Footprint via 2-Bit Activation Compressed Training [qnn]

- [ICML] HAWQ-V3: Dyadic Neural Network Quantization. [qnn]

- [ICML] I-BERT: Integer-only BERT Quantization. [qnn]

- [ICML] Differentiable Dynamic Quantization with Mixed Precision and Adaptive Resolution. [qnn]

- [ICML] Auto-NBA: Efficient and Effective Search Over the Joint Space of Networks, Bitwidths, and Accelerators. [qnn]

- [CVPR] S2-bnn: Bridging the gap between self-supervised real and 1-bit neural networks via guided distribution calibration [bnn] [code] [52⭐]

- [CVPR] Diversifying Sample Generation for Accurate Data-Free Quantization. [qnn]

- [ACM MM] VQMG: Hierarchical Vector Quantised and Multi-hops Graph Reasoning for Explicit Representation Learning. [other]

- [ACM MM] Fully Quantized Image Super-Resolution Networks. [qnn]

- [NeurIPS] Qimera: Data-free Quantization with Synthetic Boundary Supporting Samples. [qnn]

- [NeurIPS] Post-Training Quantization for Vision Transformer. [mixed]

- [NeurIPS] Post-Training Sparsity-Aware Quantization. [qnn]

- [NeurIPS] Divergence Frontiers for Generative Models: Sample Complexity, Quantization Effects, and Frontier Integrals.

- [NeurIPS] VQ-GNN: A Universal Framework to Scale up Graph Neural Networks using Vector Quantization. [other]

- [NeurIPS] Qu-ANTI-zation: Exploiting Quantization Artifacts for Achieving Adversarial Outcomes.

- [NeurIPS] A Winning Hand: Compressing Deep Networks Can Improve Out-of-Distribution Robustness. [bnn] [torch]

- [CVPR] Permute, Quantize, and Fine-tune: Efficient Compression of Neural Networks. [qnn] [torch] [137⭐]

- [CVPR] Learnable Companding Quantization for Accurate Low-bit Neural Networks. [qnn]

- [CVPR] Zero-shot Adversarial Quantization. [qnn] [torch]

- [CVPR] Binary Graph Neural Networks. [bnn] [torch]

- [CVPR] Network Quantization with Element-wise Gradient Scaling. [qnn] [torch]

- [CVPR] PokeBNN: A Binary Pursuit of Lightweight Accuracy [bnn] [tf]

- [ICLR] Reducing the Computational Cost of Deep Generative Models with Binary Neural Networks. [bnn]

- [ICLR] High-Capacity Expert Binary Networks. [bnn]

- [ICLR] Multi-Prize Lottery Ticket Hypothesis: Finding Accurate Binary Neural Networks by Pruning A Randomly Weighted Network. [bnn]

- [ICLR] BRECQ: Pushing the Limit of Post-Training Quantization by Block Reconstruction. [qnn] [torch]

- [ICLR] Neural gradients are near-lognormal: improved quantized and sparse training. [qnn]

- [ICLR] Training with Quantization Noise for Extreme Model Compression. [qnn]

- [ICLR] Incremental few-shot learning via vector quantization in deep embedded space. [qnn]

- [ICLR] Degree-Quant: Quantization-Aware Training for Graph Neural Networks. [qnn]

- [ICLR] BSQ: Exploring Bit-Level Sparsity for Mixed-Precision Neural Network Quantization. [qnn]

- [ICLR] Simple Augmentation Goes a Long Way: ADRL for DNN Quantization. [qnn]

- [ICLR] Sparse Quantized Spectral Clustering. [qnn]

- [ICLR] WrapNet: Neural Net Inference with Ultra-Low-Resolution Arithmetic. [qnn]

- [ECCV] PAMS: Quantized Super-Resolution via Parameterized Max Scale. [qnn]

- [AAAI] Distribution Adaptive INT8 Quantization for Training CNNs. [qnn]

- [AAAI] Stochastic Precision Ensemble: Self-Knowledge Distillation for Quantized Deep Neural Networks. [qnn]

- [AAAI] Optimizing Information Theory Based Bitwise Bottlenecks for Efficient Mixed-Precision Activation Quantization. [qnn]

- [AAAI] OPQ: Compressing Deep Neural Networks with One-shot Pruning-Quantization. [qnn]

- [AAAI] Scalable Verification of Quantized Neural Networks. [qnn]

- [AAAI] Uncertainty Quantification in CNN through the Bootstrap of Convex Neural Networks. [qnn]

- [AAAI] FracBits: Mixed Precision Quantization via Fractional Bit-Widths. [qnn]

- [AAAI] Post-training Quantization with Multiple Points: Mixed Precision without Mixed Precision. [qnn]

- [AAAI] Vector Quantized Bayesian Neural Network Inference for Data Streams. [qnn]

- [AAAI] TRQ: Ternary Neural Networks with Residual Quantization. [qnn]

- [AAAI] Memory and Computation-Efficient Kernel SVM via Binary Embedding and Ternary Coefficients. [bnn]

- [AAAI] Compressing Deep Convolutional Neural Networks by Stacking Low-Dimensional Binary Convolution Filters. [bnn]

- [AAAI] Training Binary Neural Network without Batch Normalization for Image Super-Resolution. [bnn]

- [AAAI] SA-BNN: State-Aware Binary Neural Network. [bnn]

- [ACL] On the Distribution, Sparsity, and Inference-time Quantization of Attention Values in Transformers. [qnn]

- [arxiv] Any-Precision Deep Neural Networks. [mixed] [torch]

- [arxiv] ReCU: Reviving the Dead Weights in Binary Neural Networks. [bnn] [torch]

- [arxiv] Post-Training Quantization for Vision Transformer. [qnn]

- [arxiv] A Survey of Quantization Methods for Efficient Neural Network Inference.

- [arxiv] A White Paper on Neural Network Quantization.

- [CVPR] Forward and Backward Information Retention for Accurate Binary Neural Networks. [bnn] [torch] [105⭐]

- [ACL] End to End Binarized Neural Networks for Text Classification. [bnn]

- [AAAI] HLHLp: Quantized Neural Networks Training for Reaching Flat Minima in Loss Surface. [qnn]

- [AAAI] [72🔥] Q-BERT: Hessian Based Ultra Low Precision Quantization of BERT. [qnn]

- [AAAI] Sparsity-Inducing Binarized Neural Networks. [bnn]

- [AAAI] Towards Accurate Low Bit-Width Quantization with Multiple Phase Adaptations.

- [COOL CHIPS] A Novel In-DRAM Accelerator Architecture for Binary Neural Network. [hardware]

- [CoRR] Training Binary Neural Networks using the Bayesian Learning Rule. [bnn]

- [CVPR] [47🔥] GhostNet: More Features from Cheap Operations. [qnn] [tensorflow & torch] [1.2k⭐]

- [CVPR] APQ: Joint Search for Network Architecture, Pruning and Quantization Policy. [qnn] [torch] [76⭐]

- [CVPR] Rotation Consistent Margin Loss for Efficient Low-Bit Face Recognition. [qnn]

- [CVPR] BiDet: An Efficient Binarized Object Detector. [qnn] [torch] [112⭐]

- [CVPR] Fixed-Point Back-Propagation Training. [video] [qnn]

- [CVPR] Low-Bit Quantization Needs Good Distribution. [qnn]

- [DATE] BNNsplit: Binarized Neural Networks for embedded distributed FPGA-based computing systems. [bnn]

- [DATE] PhoneBit: Efficient GPU-Accelerated Binary Neural Network Inference Engine for Mobile Phones. [bnn] [hardware]

- [DATE] OrthrusPE: Runtime Reconfigurable Processing Elements for Binary Neural Networks. [bnn]

- [ECCV] Learning Architectures for Binary Networks. [bnn] [torch]

- [ECCV] PROFIT: A Novel Training Method for sub-4-bit MobileNet Models. [qnn]

- [ECCV] ProxyBNN: Learning Binarized Neural Networks via Proxy Matrices. [bnn]

- [ECCV] ReActNet: Towards Precise Binary Neural Network with Generalized Activation Functions. [bnn] [torch] [108⭐]

- [ECCV] Differentiable Joint Pruning and Quantization for Hardware Efficiency. [hardware]

- [ECCV] Generative Low-bitwidth Data Free Quantization. [qnn] [torch]

- [EMNLP] TernaryBERT: Distillation-aware Ultra-low Bit BERT. [qnn]

- [EMNLP] Fully Quantized Transformer for Machine Translation. [qnn]

- [ICET] An Energy-Efficient Bagged Binary Neural Network Accelerator. [bnn] [hardware]

- [ICASSP] Balanced Binary Neural Networks with Gated Residual. [bnn]

- [ICML] Training Binary Neural Networks through Learning with Noisy Supervision. [bnn]

- [ICLR] DMS: Differentiable Dimension Search for Binary Neural Networks. [bnn]

- [ICLR] [19🔥] Training Binary Neural Networks with Real-to-Binary Convolutions. [bnn] [code is coming] [re-implement]

- [ICLR] BinaryDuo: Reducing Gradient Mismatch in Binary Activation Network by Coupling Binary Activations. [bnn] [torch]

- [ICLR] Mixed Precision DNNs: All You Need is a Good Parametrization. [mixed] [code] [73⭐]

- [IJCV] Binarized Neural Architecture Search for Efficient Object Recognition. [bnn]

- [IJCAI] CP-NAS: Child-Parent Neural Architecture Search for Binary Neural Networks. [bnn]

- [IJCAI] Towards Fully 8-bit Integer Inference for the Transformer Model. [qnn] [nlp]

- [IJCAI] Soft Threshold Ternary Networks. [qnn]

- [IJCAI] Overflow Aware Quantization: Accelerating Neural Network Inference by Low-bit Multiply-Accumulate Operations. [qnn]

- [IJCAI] Direct Quantization for Training Highly Accurate Low Bit-width Deep Neural Networks. [qnn]

- [IJCAI] Fully Nested Neural Network for Adaptive Compression and Quantization. [qnn]

- [ISCAS] MuBiNN: Multi-Level Binarized Recurrent Neural Network for EEG Signal Classification. [bnn]

- [ISQED] BNN Pruning: Pruning Binary Neural Network Guided by Weight Flipping Frequency. [bnn] [torch]

- [MICRO] GOBO: Quantizing Attention-Based NLP Models for Low Latency and Energy Efficient Inference. [qnn] [nlp]

- [MLST] Compressing deep neural networks on FPGAs to binary and ternary precision with HLS4ML. [hardware] [qnn]

- [NeurIPS] Rotated Binary Neural Network. [bnn] [torch]

- [NeurIPS] Searching for Low-Bit Weights in Quantized Neural Networks. [qnn] [torch]

- [NeurIPS] Universally Quantized Neural Compression. [qnn]

- [NeurIPS] Efficient Exact Verification of Binarized Neural Networks. [bnn] [torch]

- [NeurIPS] Path Sample-Analytic Gradient Estimators for Stochastic Binary Networks. [bnn] [code]

- [NeurIPS] HAWQ-V2: Hessian Aware trace-Weighted Quantization of Neural Networks. [qnn]

- [NeurIPS] Bayesian Bits: Unifying Quantization and Pruning. [qnn]

- [NeurIPS] Robust Quantization: One Model to Rule Them All. [qnn]

- [NeurIPS] Closing the Dequantization Gap: PixelCNN as a Single-Layer Flow. [qnn] [torch]

- [NeurIPS] Adaptive Gradient Quantization for Data-Parallel SGD. [qnn] [torch]

- [NeurIPS] FleXOR: Trainable Fractional Quantization. [qnn]

- [NeurIPS] Position-based Scaled Gradient for Model Quantization and Pruning. [qnn] [torch]

- [NN] Training high-performance and large-scale deep neural networks with full 8-bit integers. [qnn]

- [Neurocomputing] Eye localization based on weight binarization cascade convolution neural network. [bnn]

- [PR] [23🔥] Binary neural networks: A survey. [bnn]

- [PR Letters] Controlling information capacity of binary neural network. [bnn]

- [SysML] Riptide: Fast End-to-End Binarized Neural Networks. [qnn] [tensorflow] [129⭐]

- [TPAMI] Hierarchical Binary CNNs for Landmark Localization with Limited Resources. [bnn] [homepage] [code]

- [TPAMI] Deep Neural Network Compression by In-Parallel Pruning-Quantization.

- [TPAMI] Towards Efficient U-Nets: A Coupled and Quantized Approach.

- [TVLSI] Phoenix: A Low-Precision Floating-Point Quantization Oriented Architecture for Convolutional Neural Networks. [qnn]

- [WACV] MoBiNet: A Mobile Binary Network for Image Classification. [bnn]

- [IEEE Access] An Energy-Efficient and High Throughput in-Memory Computing Bit-Cell With Excellent Robustness Under Process Variations for Binary Neural Network. [bnn] [hardware]

- [IEEE Trans. Magn] SIMBA: A Skyrmionic In-Memory Binary Neural Network Accelerator. [bnn]

- [IEEE TCS.II] A Resource-Efficient Inference Accelerator for Binary Convolutional Neural Networks. [hardware]

- [IEEE TCS.I] IMAC: In-Memory Multi-Bit Multiplication and ACcumulation in 6T SRAM Array. [qnn]

- [IEEE Trans. Electron Devices] Design of High Robustness BNN Inference Accelerator Based on Binary Memristors. [bnn] [hardware]

- [arxiv] Training with Quantization Noise for Extreme Model Compression. [qnn] [torch]

- [arxiv] Binarized Graph Neural Network. [bnn]

- [arxiv] How Does Batch Normalization Help Binary Training? [bnn]

- [arxiv] Distillation Guided Residual Learning for Binary Convolutional Neural Networks. [bnn]

- [arxiv] Accelerating Binarized Neural Networks via Bit-Tensor-Cores in Turing GPUs. [bnn] [code]

- [arxiv] MeliusNet: Can Binary Neural Networks Achieve MobileNet-level Accuracy? [bnn] [code] [192⭐]

- [arxiv] RPR: Random Partition Relaxation for Training Binary and Ternary Weight Neural Networks. [bnn] [qnn]

- [paper] Towards Lossless Binary Convolutional Neural Networks Using Piecewise Approximation. [bnn]

- [arxiv] Understanding Learning Dynamics of Binary Neural Networks via Information Bottleneck. [bnn]

- [arxiv] BinaryBERT: Pushing the Limit of BERT Quantization. [bnn] [nlp]

- [ECCV] BATS: Binary ArchitecTure Search. [bnn]

- [AAAI] Efficient Quantization for Neural Networks with Binary Weights and Low Bitwidth Activations. [

qnn] - [AAAI] [31:fire:] Projection Convolutional Neural Networks for 1-bit CNNs via Discrete Back Propagation. [

bnn] - [APCCAS] Using Neuroevolved Binary Neural Networks to solve reinforcement learning environments. [

bnn] [code] - [BMVC] [32:fire:] XNOR-Net++: Improved Binary Neural Networks. [

bnn] - [BMVC] Accurate and Compact Convolutional Neural Networks with Trained Binarization. [

bnn] - [CoRR] RBCN: Rectified Binary Convolutional Networks for Enhancing the Performance of 1-bit DCNNs. [

bnn] - [CoRR] TentacleNet: A Pseudo-Ensemble Template for Accurate Binary Convolutional Neural Networks. [

bnn] - [CoRR] Improved training of binary networks for human pose estimation and image recognition. [

bnn] - [CoRR] Binarized Neural Architecture Search. [

bnn] - [CoRR] Matrix and tensor decompositions for training binary neural networks. [

bnn] - [CoRR] Back to Simplicity: How to Train Accurate BNNs from Scratch? [

bnn] [code] [193:star:] - [CVPR] [53:fire:] Structured Binary Neural Networks for Accurate Image Classification and Semantic Segmentation. [

bnn] - [CVPR] SeerNet: Predicting Convolutional Neural Network Feature-Map Sparsity Through Low-Bit Quantization. [

qnn] - [CVPR] [218:fire:] HAQ: Hardware-Aware Automated Quantization with Mixed Precision. [

qnn] [hardware] [torch] [233:star:] - [CVPR] [48:fire:] Quantization Networks. [

bnn] [torch] [82:star:] - [CVPR] Fully Quantized Network for Object Detection. [

qnn] - [CVPR] Learning Channel-Wise Interactions for Binary Convolutional Neural Networks. [

bnn] - [CVPR] [31:fire:] Circulant Binary Convolutional Networks: Enhancing the Performance of 1-bit DCNNs with Circulant Back Propagation. [

bnn] - [CVPR] [36:fire:] Regularizing Activation Distribution for Training Binarized Deep Networks. [

bnn] - [CVPR] A Main/Subsidiary Network Framework for Simplifying Binary Neural Network. [

bnn] - [CVPR] Binary Ensemble Neural Network: More Bits per Network or More Networks per Bit? [

bnn] - [FPGA] Towards Fast and Energy-Efficient Binarized Neural Network Inference on FPGA. [

bnn] [hardware] - [GLSVLSI] Binarized Depthwise Separable Neural Network for Object Tracking in FPGA. [

bnn] [hardware] - [ICCV] [55:fire:] Differentiable Soft Quantization: Bridging Full-Precision and Low-Bit Neural Networks. [

qnn] - [ICCV] Bayesian optimized 1-bit cnns. [

bnn] - [ICCV] Searching for Accurate Binary Neural Architectures. [

bnn]

- [ICCV] HAWQ: Hessian AWare Quantization of Neural Networks With Mixed-Precision. [qnn]
- [ICCV] Data-Free Quantization Through Weight Equalization and Bias Correction. [qnn] [hardware] [torch]
- [ICML] Efficient 8-Bit Quantization of Transformer Neural Machine Language Translation Model. [qnn] [nlp]
- [ICLR] [37:fire:] ProxQuant: Quantized Neural Networks via Proximal Operators. [qnn] [torch]
- [ICLR] An Empirical study of Binary Neural Networks' Optimisation. [bnn]
- [ICIP] Training Accurate Binary Neural Networks from Scratch. [bnn] [code] [192:star:]
- [ICUS] Balanced Circulant Binary Convolutional Networks. [bnn]
- [IJCAI] Binarized Neural Networks for Resource-Efficient Hashing with Minimizing Quantization Loss. [bnn]
- [IJCAI] Binarized Collaborative Filtering with Distilling Graph Convolutional Network. [bnn]
- [ISOCC] Dual Path Binary Neural Network. [bnn]
- [IEEE J. Emerg. Sel. Topics Circuits Syst.] Hyperdrive: A Multi-Chip Systolically Scalable Binary-Weight CNN Inference Engine. [hardware]
- [IEEE JETC] [128:fire:] Eyeriss v2: A Flexible Accelerator for Emerging Deep Neural Networks on Mobile Devices. [hardware]
- [IEEE J. Solid-State Circuits] An Energy-Efficient Reconfigurable Processor for Binary-and Ternary-Weight Neural Networks With Flexible Data Bit Width. [qnn]
- [MDPI Electronics] A Review of Binarized Neural Networks. [bnn]
- [NeurIPS] MetaQuant: Learning to Quantize by Learning to Penetrate Non-differentiable Quantization. [qnn] [torch]
- [NeurIPS] Latent Weights Do Not Exist: Rethinking Binarized Neural Network Optimization. [bnn] [tensorflow]
- [NeurIPS] [43:fire:] Regularized Binary Network Training. [bnn]
- [NeurIPS] [44:fire:] Q8BERT: Quantized 8Bit BERT. [qnn] [nlp]
- [NeurIPS] Fully Quantized Transformer for Improved Translation. [qnn] [nlp]
- [NeurIPS] Normalization Helps Training of Quantized LSTM. [qnn] [bnn]
- [RoEduNet] PXNOR: Perturbative Binary Neural Network. [bnn] [code]
- [SiPS] Knowledge distillation for optimization of quantized deep neural networks. [qnn]
- [TMM] [45:fire:] Deep Binary Reconstruction for Cross-Modal Hashing. [bnn]
- [TMM] Compact Hash Code Learning With Binary Deep Neural Network. [bnn]
- [IEEE TCS.I] Xcel-RAM: Accelerating Binary Neural Networks in High-Throughput SRAM Compute Arrays. [hardware]
- [IEEE TCS.I] Recursive Binary Neural Network Training Model for Efficient Usage of On-Chip Memory. [bnn]
- [VLSI-SoC] A Product Engine for Energy-Efficient Execution of Binary Neural Networks Using Resistive Memories. [bnn] [hardware]
- [paper] [43:fire:] BNN+: Improved Binary Network Training. [bnn]
- [arxiv] Self-Binarizing Networks. [bnn]
- [arxiv] Towards Unified INT8 Training for Convolutional Neural Network. [qnn]
- [arxiv] daBNN: A Super Fast Inference Framework for Binary Neural Networks on ARM devices. [bnn] [hardware] [code]
- [arxiv] QKD: Quantization-aware Knowledge Distillation. [qnn]
- [arxiv] [59:fire:] Mixed Precision Quantization of ConvNets via Differentiable Neural Architecture Search. [qnn]
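Data-Free Quantization (ICCV, listed above) builds on the positive scaling equivariance of ReLU. For any s > 0, ReLU(x / s) · s = ReLU(x). This means output channel i of one layer can be divided by s_i, and the matching input channel of the next layer multiplied by s_i, without changing the network's output. Choosing s_i = sqrt(r1_i / r2_i) makes both weight ranges equal to sqrt(r1_i · r2_i), where r1_i and r2_i are the channel's ranges in the two layers. This balancing lets per-tensor quantization spend its levels more evenly.

The code below is a minimal sketch of that equalization step only. It rests on these assumptions:
- weights are plain nested lists;
- a channel's range is its maximum absolute value;
- biases are ignored;
- the paper's bias absorption and bias correction are left out.

`equalize_pair` is a hypothetical helper, not the authors' code.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def equalize_pair(w1: Matrix, w2: Matrix) -> Tuple[Matrix, Matrix]:
    """Cross-layer range equalization for two consecutive layers (sketch).

    w1[i][j]: weight from input j to output channel i of layer 1.
    w2[k][i]: weight from channel i (layer 1's output) to output k of layer 2.
    Assumes a ReLU between the layers and ignores biases.
    """
    new_w1 = [row[:] for row in w1]
    new_w2 = [row[:] for row in w2]
    for i in range(len(w1)):
        r1 = max(abs(v) for v in w1[i])        # range of output channel i in layer 1
        r2 = max(abs(row[i]) for row in w2)    # range of input channel i in layer 2
        if r1 == 0 or r2 == 0:
            continue                           # dead channel: nothing to balance
        s = math.sqrt(r1 / r2)
        new_w1[i] = [v / s for v in w1[i]]     # shrink (or grow) layer-1 channel i
        for row in new_w2:
            row[i] *= s                        # compensate in layer 2
    return new_w1, new_w2


if __name__ == "__main__":
    a, b = equalize_pair([[4.0, -2.0], [0.5, 0.25]], [[1.0, 2.0], [0.25, -8.0]])
    print(a)  # [[2.0, -1.0], [2.0, 1.0]]
    print(b)  # [[2.0, 0.5], [0.5, -2.0]]
```

In this example, both channels end up with range 2.0 in both layers. For the input [1.0, 1.0], the two-layer output [3.5, -5.5] is unchanged.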

- [AAAI] From Hashing to CNNs: Training Binary Weight Networks via Hashing. [bnn]
- [AAAI] [136:fire:] Extremely Low Bit Neural Network: Squeeze the Last Bit Out with ADMM. [qnn] [homepage]
- [CAAI] Fast object detection based on binary deep convolution neural networks. [bnn]
- [CoRR] LightNN: Filling the Gap between Conventional Deep Neural Networks and Binarized Networks. [bnn]
- [CoRR] BinaryRelax: A Relaxation Approach For Training Deep Neural Networks With Quantized Weights. [bnn]
- [CVPR] [63:fire:] Two-Step Quantization for Low-bit Neural Networks. [qnn]
- [CVPR] Effective Training of Convolutional Neural Networks with Low-bitwidth Weights and Activations. [qnn]
- [CVPR] [97:fire:] Towards Effective Low-bitwidth Convolutional Neural Networks. [qnn]
- [CVPR] Modulated convolutional networks. [bnn]
- [CVPR] [67:fire:] SYQ: Learning Symmetric Quantization For Efficient Deep Neural Networks. [qnn] [code]
- [CVPR] [630:fire:] Quantization and Training of Neural Networks for Efficient Integer-Arithmetic-Only Inference. [qnn]
- [ECCV] Training Binary Weight Networks via Semi-Binary Decomposition. [bnn]
- [ECCV] [47:fire:] TBN: Convolutional Neural Network with Ternary Inputs and Binary Weights. [bnn] [qnn] [torch]
- [ECCV] [202:fire:] LQ-Nets: Learned Quantization for Highly Accurate and Compact Deep Neural Networks. [qnn] [tensorflow] [188:star:]
- [ECCV] [145:fire:] Bi-Real Net: Enhancing the Performance of 1-bit CNNs With Improved Representational Capability and Advanced Training Algorithm. [bnn] [torch] [120:star:]
- [ECCV] Quantization Mimic: Towards Very Tiny CNN for Object Detection. [qnn]
- [FCCM] ReBNet: Residual Binarized Neural Network. [bnn] [tensorflow]
- [FPL] FBNA: A Fully Binarized Neural Network Accelerator. [hardware]
- [ICLR] [65:fire:] Loss-aware Weight Quantization of Deep Networks. [qnn] [code]
- [ICLR] [230:fire:] Model compression via distillation and quantization. [qnn] [torch] [284:star:]
- [ICLR] [201:fire:] PACT: Parameterized Clipping Activation for Quantized Neural Networks. [qnn]
- [ICLR] [168:fire:] WRPN: Wide Reduced-Precision Networks. [qnn]
- [ICLR] Analysis of Quantized Models. [qnn]
- [ICLR] [141:fire:] Apprentice: Using Knowledge Distillation Techniques To Improve Low-Precision Network Accuracy. [qnn]
- [IJCAI] Deterministic Binary Filters for Convolutional Neural Networks. [bnn]
- [IJCAI] Planning in Factored State and Action Spaces with Learned Binarized Neural Network Transition Models. [bnn]
- [IJCNN] Analysis and Implementation of Simple Dynamic Binary Neural Networks. [bnn]
- [IPDPS] BitFlow: Exploiting Vector Parallelism for Binary Neural Networks on CPU. [bnn]
- [IEEE J. Solid-State Circuits] [66:fire:] BRein Memory: A Single-Chip Binary/Ternary Reconfigurable in-Memory Deep Neural Network Accelerator Achieving 1.4 TOPS at 0.6 W. [hardware] [qnn]
- [NCA] [88:fire:] A survey of FPGA-based accelerators for convolutional neural networks. [hardware]
- [NeurIPS] [150:fire:] Training Deep Neural Networks with 8-bit Floating Point Numbers. [qnn]
- [NeurIPS] [91:fire:] Scalable methods for 8-bit training of neural networks. [qnn] [torch]
- [MM] BitStream: Efficient Computing Architecture for Real-Time Low-Power Inference of Binary Neural Networks on CPUs. [bnn]
- [Res Math Sci] Blended coarse gradient descent for full quantization of deep neural networks. [qnn] [bnn]
- [TCAD] XNOR Neural Engine: A Hardware Accelerator IP for 21.6-fJ/op Binary Neural Network Inference. [hardware]
- [TRETS] [50:fire:] FINN-R: An End-to-End Deep-Learning Framework for Fast Exploration of Quantized Neural Networks. [qnn]
- [TVLSI] An Energy-Efficient Architecture for Binary Weight Convolutional Neural Networks. [bnn]
- [arxiv] Training Competitive Binary Neural Networks from Scratch. [bnn] [code] [192:star:]
- [arxiv] Joint Neural Architecture Search and Quantization. [qnn] [torch]
- [CVPR] Explicit loss-error-aware quantization for low-bit deep neural networks. [qnn]
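PACT (ICLR, listed above) replaces ReLU with a clipping activation that has a ceiling α. It computes y = 0.5 · (|x| - |x - α| + α), which equals clip(x, 0, α), and then quantizes y uniformly to k bits as round(y · (2^k - 1) / α) · α / (2^k - 1).

The code below is a minimal sketch of the forward pass only. It assumes a scalar input and a fixed α; in PACT, α is learned, and the rounding is trained through a straight-through estimator, which this sketch leaves out. `pact_quantize` is a hypothetical name, not the authors' code.

```python
def pact_quantize(x: float, alpha: float, k: int) -> float:
    """Forward pass of a PACT-style activation quantizer (sketch).

    Clips x to [0, alpha], then snaps it to one of 2**k evenly spaced levels.
    alpha is a fixed input here; PACT itself learns it during training.
    """
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    if k < 1:
        raise ValueError("k must be at least 1 bit")
    # 0.5 * (|x| - |x - alpha| + alpha) is an algebraic form of clip(x, 0, alpha)
    y = 0.5 * (abs(x) - abs(x - alpha) + alpha)
    levels = 2 ** k - 1
    # Python's round() is round-half-to-even; it only matters at exact midpoints
    return round(y * levels / alpha) * alpha / levels


if __name__ == "__main__":
    for value in (-1.0, 0.7, 5.0):
        print(value, pact_quantize(value, alpha=2.0, k=2))
```

With α = 2 and k = 2, the representable levels are {0, 2/3, 4/3, 2}:
- -1.0 maps to 0;
- 0.7 maps to about 0.667;
- 5.0 saturates at 2.0.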

- [CoRR] BMXNet: An Open-Source Binary Neural Network Implementation Based on MXNet. [bnn] [code]
- [CVPR] [251:fire:] Deep Learning with Low Precision by Half-wave Gaussian Quantization. [qnn] [code] [118:star:]
- [CVPR] [156:fire:] Local Binary Convolutional Neural Networks. [bnn] [torch] [94:star:]
- [FPGA] [463:fire:] FINN: A Framework for Fast, Scalable Binarized Neural Network Inference. [hardware] [bnn]
- [ICASSP] Fixed-point optimization of deep neural networks with adaptive step size retraining. [qnn]
- [ICCV] [130:fire:] Binarized Convolutional Landmark Localizers for Human Pose Estimation and Face Alignment with Limited Resources. [bnn] [homepage] [torch] [207:star:]
- [ICCV] [55:fire:] Performance Guaranteed Network Acceleration via High-Order Residual Quantization. [qnn]
- [ICLR] [554:fire:] Incremental Network Quantization: Towards Lossless CNNs with Low-Precision Weights. [qnn] [torch] [144:star:]
- [ICLR] [119:fire:] Loss-aware Binarization of Deep Networks. [bnn] [code]
- [ICLR] [222:fire:] Soft Weight-Sharing for Neural Network Compression. [other]
- [ICLR] [637:fire:] Trained Ternary Quantization. [qnn] [torch] [90:star:]
- [InterSpeech] Binary Deep Neural Networks for Speech Recognition. [bnn]
- [IPDPSW] On-Chip Memory Based Binarized Convolutional Deep Neural Network Applying Batch Normalization Free Technique on an FPGA. [hardware]
- [JETC] A GPU-Outperforming FPGA Accelerator Architecture for Binary Convolutional Neural Networks. [hardware] [bnn]
- [NeurIPS] [293:fire:] Towards Accurate Binary Convolutional Neural Network. [bnn] [tensorflow]
- [Neurocomputing] [126:fire:] FP-BNN: Binarized neural network on FPGA. [hardware]
- [MWSCAS] Deep learning binary neural network on an FPGA. [hardware] [bnn]
- [arxiv] [71:fire:] Ternary Neural Networks with Fine-Grained Quantization. [qnn]
- [arxiv] ShiftCNN: Generalized Low-Precision Architecture for Inference of Convolutional Neural Networks. [qnn] [code] [53:star:]

- [CoRR] [1k:fire:] DoReFa-Net: Training Low Bitwidth Convolutional Neural Networks with Low Bitwidth Gradients. [qnn] [code] [5.8k:star:]
- [ECCV] [2.7k:fire:] XNOR-Net: ImageNet Classification Using Binary Convolutional Neural Networks. [bnn] [torch] [787:star:]
- [ICASSP] Fixed-point Performance Analysis of Recurrent Neural Networks. [qnn]
- [NeurIPS] [572:fire:] Ternary weight networks. [qnn] [code] [61:star:]
- [NeurIPS] [1.7k:fire:] Binarized Neural Networks: Training Deep Neural Networks with Weights and Activations Constrained to +1 or -1. [bnn] [torch] [239:star:]
- [CVPR] [270:fire:] Quantized convolutional neural networks for mobile devices. [code]
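XNOR-Net (ECCV, listed above) approximates each real-valued weight filter W by α · B. Here B = sign(W), and α is the mean absolute value of W, which is the scale that minimizes the L2 error ||W - α · B||² for that choice of B.

The code below is a minimal sketch for a single filter flattened into a Python list. Two choices are assumptions of the sketch: `binarize_filter` and `dequantize` are hypothetical helpers, and sign(0) is mapped to +1. It does not reproduce the XNOR/popcount convolution kernel.

```python
from typing import List, Tuple


def binarize_filter(weights: List[float]) -> Tuple[float, List[int]]:
    """Approximate a flattened filter W as alpha * B (XNOR-Net-style sketch).

    B holds only +1/-1 (B = sign(W), with sign(0) taken as +1 here), and
    alpha = mean(|W|) is the L2-optimal scale for that B.
    """
    if not weights:
        raise ValueError("filter must contain at least one weight")
    signs = [1 if w >= 0 else -1 for w in weights]
    alpha = sum(abs(w) for w in weights) / len(weights)
    return alpha, signs


def dequantize(alpha: float, signs: List[int]) -> List[float]:
    """Rebuild the real-valued approximation alpha * B."""
    return [alpha * s for s in signs]


if __name__ == "__main__":
    alpha, signs = binarize_filter([0.5, -1.5, 1.0, -1.0])
    print(alpha, signs)               # 1.0 [1, -1, 1, -1]
    print(dequantize(alpha, signs))   # [1.0, -1.0, 1.0, -1.0]
```

Storing only the signs plus one float per filter is what makes the 1-bit representation compact. The single α recovers the overall magnitude that `sign` discards.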

- [ICML] [191:fire:] Bitwise Neural Networks. [bnn]
- [NeurIPS] [1.8k:fire:] BinaryConnect: Training Deep Neural Networks with binary weights during propagations. [bnn] [code] [330:star:]
- [arxiv] Resiliency of Deep Neural Networks under quantizations. [qnn]

- [Doc] ZF-Net: An Open Source FPGA CNN Library.
- [Doc] Accelerating CNN inference on FPGAs: A Survey.
- [Chinese] An Overview of Deep Compression Approaches.
- [Chinese] Neural Network Binarization for Embedded Deep Learning - FPGA Implementation

Our team is part of the DIG group of the State Key Laboratory of Software Development Environment (SKLSDE), supervised by Prof. Xianglong Liu. The main research goal of our team is to compress and accelerate models across multiple scenarios.

- Haotong Qin is a Ph.D. student in the State Key Laboratory of Software Development Environment (SKLSDE) and Shen Yuan Honors College at Beihang University, supervised by Prof. Wei Li and Prof. Xianglong Liu. He is also a student researcher at ByteDance AI Lab. He obtained a B.Eng. degree in computer science and engineering from Beihang University, and has interned at ByteDance AI Lab, Microsoft Research Asia, and Tencent WXG. He is interested in hardware-friendly deep learning and neural network quantization. His research goal is to enable state-of-the-art neural network models to be deployed on resource-limited hardware, which covers compression and acceleration for multiple architectures as well as flexible and efficient deployment on multiple hardware platforms.

- Ruihao Gong is currently a Ph.D. student in the State Key Laboratory of Software Development Environment (SKLSDE) and a Senior Research Manager at SenseTime. Since 2017, he has worked on building computer vision systems and on model quantization, starting as an intern at SenseTime Research, where he enjoyed working with talented researchers and grew a lot with the help of Fengwei Yu, Wei Wu, Jing Shao, and Junjie Yan. Early in the internship, he independently took responsibility for developing the intelligent video analysis system Sensevideo. Later, he began researching model quantization, which can speed up the inference and even the training of neural networks on edge devices. He is now devoted to further improving the accuracy of extremely low-bit models and to the automatic deployment of quantized models.

- Yifu Ding is a senior student in the School of Computer Science and Engineering at Beihang University. She is in the State Key Laboratory of Software Development Environment (SKLSDE), under the supervision of Prof. Xianglong Liu. She is currently interested in computer vision and model quantization. She believes that highly compressed neural network models can be deployed on resource-constrained devices, and that among all compression methods, quantization is a promising one.

Xiuying Wei

- Xiuying Wei is a first-year graduate student at Beihang University under the supervision of Prof. Xianglong Liu. She received a bachelor’s degree from Shandong University in 2020. She is currently interested in model quantization. She thinks that quantization can make models faster and more robust, which could bring deep learning systems to low-power devices and create more opportunities in the future.

Qinghua Yan

- I am a senior student in the Sino-French Engineer School at Beihang University. I have just started research on model compression in the State Key Laboratory of Software Development Environment (SKLSDE), under the supervision of Prof. Xianglong Liu. I am enthusiastic about deep learning and model quantization, and I really enjoy working with my talented teammates.

Hong Chen

- Hong Chen is a first-year graduate student at Beihang University under the supervision of Prof. Xianglong Liu. He received a bachelor’s degree from Beihang University in 2022. He is currently interested in model quantization, especially data-free quantization. He believes that data-free quantization is the general trend, and he is committed to breaking through its accuracy bottleneck.

Aoyu Li

- I am a senior student in the School of Computer Science and Engineering at Beihang University. Supervised by Prof. Xianglong Liu, I am currently conducting research on model quantization. My research interests mainly include model compression, deployment, and AI systems.

Xudong Ma

- Xudong Ma is a first-year graduate student at the School of Computer Science and Engineering, Beihang University. He received his bachelor's degree from UESTC in 2022. He is interested in model quantization, which he believes is one of the current trends in AI.

Yuxuan Wen

Zhuoqing Peng

- Peng is a junior student in the School of Computer Science and Engineering at Beihang University under the supervision of Prof. Xianglong Liu. He is interested in model compression, computer vision and AI systems.

Li Wang

Xingyu Zheng

- Xingyu Zheng is a junior student at Beihang University. After working on model distillation and model stealing, he is now devoted to the quantization of large language models. He hopes to gain a deeper understanding of models and, in turn, make them more robust and generalizable.

Mingzhu Shen

- SenseTime Research.

Xiangguo Zhang

- SenseTime Research. Xiangguo Zhang received a master's degree from the School of Computer Science at Beihang University under the guidance of Prof. Xianglong Liu. He received a bachelor's degree from Shandong University in 2019 and entered Beihang University the same year. He is currently interested in computer vision and post-training quantization.

- Haotong Qin, Xiangguo Zhang, Ruihao Gong, Yifu Ding, Yi Xu, Xianglong Liu#. Distribution-sensitive Information Retention for Accurate Binary Neural Network. International Journal of Computer Vision (IJCV), 2022.
- Haotong Qin*, Xudong Ma*, Yifu Ding*, Xiaoyang Li, Yang Zhang, Yao Tian, Zejun Ma, Jie Luo, Xianglong Liu#. BiFSMN: Binary Neural Network for Keyword Spotting. International Joint Conference on Artificial Intelligence (IJCAI), 2022.
- Haotong Qin*, Yifu Ding*, Mingyuan Zhang*, Qinghua Yan, Aishan Liu, Qingqing Dang, Ziwei Liu, Xianglong Liu#. BiBERT: Accurate Fully Binarized BERT. International Conference on Learning Representations (ICLR), 2022.
- Xiuying Wei*, Ruihao Gong*, Yuhang Li, Xianglong Liu#, Fengwei Yu. QDrop: Randomly Dropping Quantization for Extremely Low-bit Post-Training Quantization. International Conference on Learning Representations (ICLR), 2022.
- Xiangguo Zhang*, Haotong Qin*, Yifu Ding, Ruihao Gong, Qinghua Yan, Renshuai Tao, Yuhang Li, Fengwei Yu, Xianglong Liu#. Diversifying Sample Generation for Data-Free Quantization. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2021 (oral).
- Haotong Qin*, Zhongang Cai*, Mingyuan Zhang*, Yifu Ding, Haiyu Zhao, Shuai Yi, Xianglong Liu#, Hao Su. BiPointNet: Binary Neural Network for Point Clouds. International Conference on Learning Representations (ICLR), 2021.
- Haotong Qin, Ruihao Gong, Xianglong Liu#, Xiao Bai, Jingkuan Song, Nicu Sebe. Binary Neural Networks: A Survey. Pattern Recognition (PR), 2020.
- Haotong Qin, Ruihao Gong, Xianglong Liu#, Mingzhu Shen, Ziran Wei, Fengwei Yu, Jingkuan Song. Forward and Backward Information Retention for Accurate Binary Neural Networks. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2020.
- Y. Wu, X. Liu#, H. Qin, K. Xia, S. Hu, Y. Ma, M. Wang. Boosting Temporal Binary Coding for Large-scale Video Search. IEEE Transactions on Multimedia (TMM), 2020.
- F. Zhu, R. Gong, F. Yu, X. Liu#, Y. Wang, Z. Li, X. Yang, J. Yan. Towards Unified INT8 Training for Convolutional Neural Network. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2020.
- Y. Li, R. Gong, F. Yu, X. Dong, X. Liu#. DMS: Differentiable Dimension Search for Binary Neural Networks. International Conference on Learning Representations Workshop on Neural Architecture Search (ICLR NAS Workshop), 2020.
- Y. Wu, Y. Wu, R. Gong, Y. Lv, K. Chen, D. Liang, X. Hu, X. Liu#, J. Yan. Rotation Consistent Margin Loss for Efficient Low-bit Face Recognition. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2020.
- M. Shen, X. Liu, R. Gong, K. Han. Balanced Binary Neural Networks with Gated Residual. IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), 2020.
- R. Gong, X. Liu#, S. Jiang, T. Li, P. Hu, J. Lin, F. Yu, J. Yan. Differentiable Soft Quantization: Bridging Full-Precision and Low-Bit Neural Networks. IEEE International Conference on Computer Vision (ICCV), 2019.