RING++: Roto-Translation-Invariant Gram for Global Localization on a Sparse Scan Map (IEEE T-RO 2023)

Official implementation of RING and RING++:

- One RING to Rule Them All: Radon Sinogram for Place Recognition, Orientation and Translation Estimation (IEEE IROS 2022).

- RING++: Roto-Translation-Invariant Gram for Global Localization on a Sparse Scan Map (IEEE T-RO 2023).

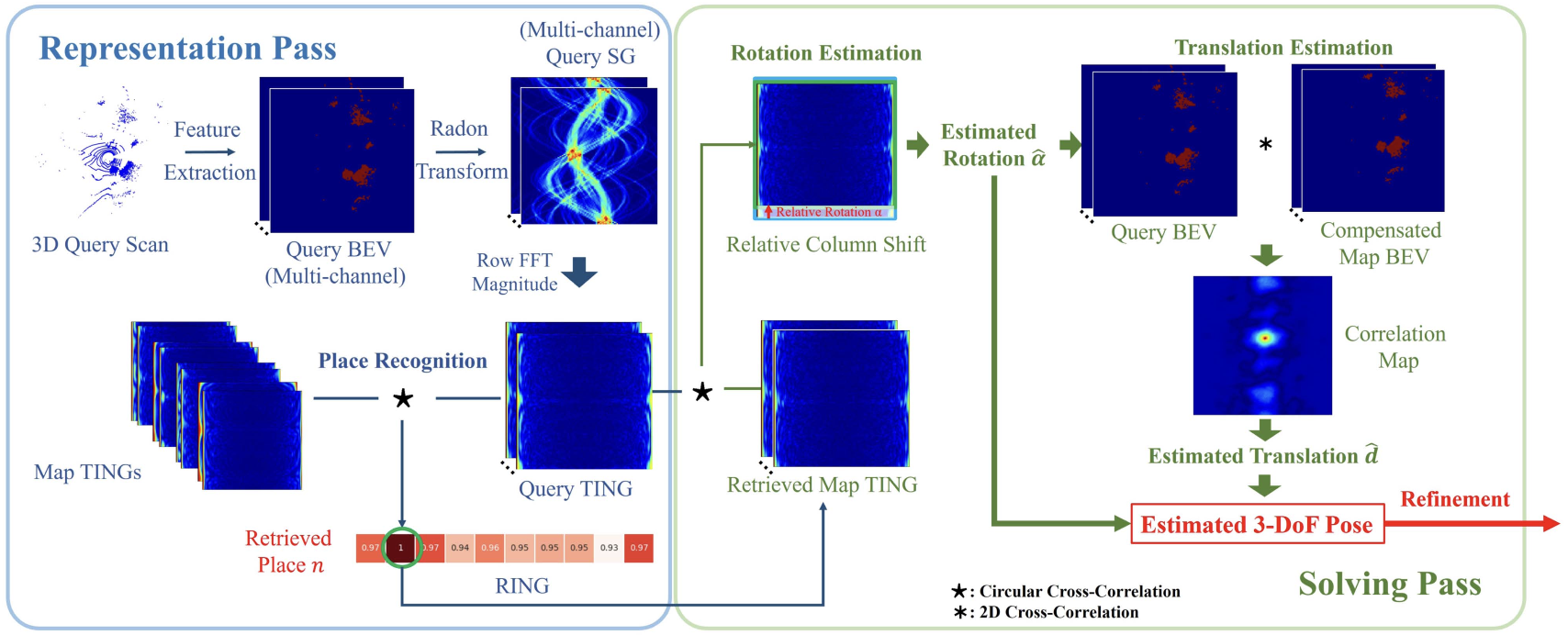

Global localization plays a critical role in many robot applications. LiDAR-based global localization has attracted the community’s attention for its robustness against illumination and seasonal changes. To further improve localization under large viewpoint differences, we propose RING++, which has a roto-translation invariant representation for place recognition and global convergence for both rotation and translation estimation. With this theoretical guarantee, RING++ is able to handle large viewpoint differences using a lightweight map with sparse scans. In addition, we derive sufficient conditions on feature extractors under which the representation preserves roto-translation invariance, making RING++ a framework applicable to generic multichannel features. To the best of our knowledge, this is the first learning-free framework to address all the subtasks of global localization in a sparse scan map. Validations on real-world datasets show that our approach outperforms state-of-the-art learning-free methods and is competitive with learning-based methods. Finally, we integrate RING++ into a multi-robot/multi-session simultaneous localization and mapping (SLAM) system, demonstrating its effectiveness in collaborative applications.
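A standard signal-processing fact makes rotation invariance and rotation estimation tractable. In a representation indexed by angle over a full turn, rotating the scan about the sensor appears as a circular shift along the angle axis. The toy sketch below uses NumPy on random data and is not the repository's descriptor. It shows two properties. First, the DFT magnitude along that axis is unchanged by the shift, so it can serve as an invariant descriptor. Second, the shift can be recovered as the argmax of an FFT-based circular cross-correlation. The function names and array sizes are hypothetical.

```python
import numpy as np


def rotation_invariant_descriptor(sino: np.ndarray) -> np.ndarray:
    """Magnitude of the DFT along the angle axis (axis 0).

    A circular shift along axis 0 only changes the DFT phase, so this
    magnitude is identical for shifted copies of the same array.
    """
    return np.abs(np.fft.fft(sino, axis=0))


def estimate_angle_shift(query: np.ndarray, ref: np.ndarray) -> int:
    """Return the shift s (in angle bins) maximising the circular
    cross-correlation sum_k ref[k] * query[k + s], summed over axis 1."""
    # Correlation theorem: IFFT(Q * conj(R)) evaluates the correlation at every lag at once.
    spectrum = np.fft.fft(query, axis=0) * np.conj(np.fft.fft(ref, axis=0))
    corr = np.real(np.fft.ifft(spectrum, axis=0)).sum(axis=1)
    return int(np.argmax(corr))


# Toy usage: 36 angle bins (10 degrees each) x 16 radial bins of random data.
rng = np.random.default_rng(0)
ref = rng.random((36, 16))
query = np.roll(ref, 7, axis=0)  # simulate a 70 degree rotation as a shift of 7 bins
print(estimate_angle_shift(query, ref))  # → 7
print(np.allclose(rotation_invariant_descriptor(query),
                  rotation_invariant_descriptor(ref)))  # → True
```

The FFT route evaluates all candidate rotations in O(n log n) per column instead of trying each shift explicitly.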

You can install RING++ locally on your machine, or use the provided Dockerfile to run it in a container. This repository has been tested in the following environments:

- Ubuntu 18.04/20.04

- CUDA 11.1/11.3/11.6

- PyTorch 1.9/1.10/1.12
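You can check an existing environment against these versions and the PyTorch > 1.6.0 requirement below. The following is a minimal sketch. `release_tuple` and `newer_than` are hypothetical helpers written for this example, and they compare only the numeric release part of a version string, so `1.11.0+cu113` is treated as `1.11.0`. The PyTorch calls run only if PyTorch is already installed.

```python
import importlib.util


def release_tuple(version: str) -> tuple:
    """'1.11.0+cu113' -> (1, 11, 0); drops the local '+...' suffix and any
    non-numeric tail of each component (e.g. '0a0' -> 0)."""
    public = version.split("+", 1)[0]
    parts = []
    for piece in public.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        parts.append(int(digits) if digits else 0)
    return tuple(parts)


def newer_than(version: str, minimum: str = "1.6.0") -> bool:
    """True if the release part of `version` is strictly greater than `minimum`."""
    return release_tuple(version) > release_tuple(minimum)


if importlib.util.find_spec("torch") is not None:
    import torch

    print("torch", torch.__version__, "| newer than 1.6.0:", newer_than(str(torch.__version__)))
    print("CUDA build:", torch.version.cuda, "| GPU available:", torch.cuda.is_available())
else:
    print("PyTorch is not installed yet")
```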

- Install PyTorch > 1.6.0 (make sure to select the build that matches your CUDA version), e.g. for CUDA 11.3:

pip install torch==1.11.0+cu113 torchvision==0.12.0+cu113 torchaudio==0.11.0 --extra-index-url https://download.pytorch.org/whl/cu113

- Clone this repo and install the requirements

git clone https://github.com/lus6-Jenny/RING.git

cd RING

pip install -r requirements.txt

- Install generate_bev_occ_cython and generate_bev_pointfeat_cython, which generate the BEV representations of point clouds

# Occupancy BEV

cd utils/generate_bev_occ_cython

python setup.py install

python test.py

# Point feature BEV

cd ../generate_bev_pointfeat_cython

python setup.py install

python test.py

NOTE: If you get a segmentation fault, the point cloud may contain too many points to process (e.g. 670,000). To work around this, increase the stack size of your shell with ulimit -s 81920 (in KB, i.e. 80 MB) before running the scripts.
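The following sketch shows roughly what an occupancy BEV looks like. It is pure NumPy and does not call generate_bev_occ_cython. The function name, the 40 m square crop and the 120 x 120 grid are assumptions for illustration, and the compiled extension may use a different layout or resolution. The point-feature BEV built by generate_bev_pointfeat_cython presumably stores per-cell features instead of a single occupied bit.

```python
import numpy as np


def occupancy_bev(points: np.ndarray, max_range: float = 40.0, grid: int = 120) -> np.ndarray:
    """Binary BEV occupancy grid centred on the sensor (hypothetical parameters).

    Row index follows x, column index follows y.
    """
    xy = points[:, :2]
    keep = np.all(np.abs(xy) < max_range, axis=1)   # square crop around the sensor
    cell = 2.0 * max_range / grid                    # cell size, same unit as the points
    idx = np.floor((xy[keep] + max_range) / cell).astype(int)
    idx = np.clip(idx, 0, grid - 1)                  # guard against rounding at the border
    bev = np.zeros((grid, grid), dtype=np.uint8)
    bev[idx[:, 0], idx[:, 1]] = 1                    # any point in a cell marks it occupied
    return bev


# Toy usage with three points (x, y, z); the last one lies outside the crop.
pts = np.array([[0.0, 0.0, 0.5], [39.9, -39.9, 1.0], [100.0, 0.0, 0.0]])
bev = occupancy_bev(pts)
print(bev.shape, int(bev.sum()))  # → (120, 120) 2
```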

- Install torch-radon (v2 for PyTorch > 1.6.0), either from the copy in this repo or from the original repo (the commands below start from the repository root):

# git clone https://github.com/matteo-ronchetti/torch-radon.git -b v2; cd torch-radon; python setup.py install

cd utils/torch-radon

python setup.py install

- Install fast_gicp for fast point cloud registration, either from the copy in this repo or from the original repo (the commands below start from the repository root):

# git clone https://github.com/SMRT-AIST/fast_gicp.git --recursive; cd fast_gicp; python setup.py install --user

cd utils/fast_gicp

python setup.py install --user

- Run a quick demo to see how the repo works

cd RING

# add the path of RING to PYTHONPATH

export PYTHONPATH=$PYTHONPATH:$(pwd)

python test.py

To run RING++ in a container, make sure you have installed Docker and NVIDIA-Docker on your machine.

You can pull the pre-built docker image from dockerhub:

docker pull lus6/ring:noetic

Or you can build the docker image from the Dockerfile yourself:

- Build the docker image

docker build --network host --tag ring:noetic -f Dockerfile .

- Run the docker container (if you pulled the pre-built image, use lus6/ring:noetic instead of ring:noetic)

docker run -itd --gpus all --network host --name ring ring:noetic

- Run the test script in the container

docker exec -it ring bash

python3 test.py

We conduct extensive experiments on four widely used datasets: NCLT, MulRan, SemanticKITTI and Oxford Radar RobotCar. Please download these datasets and put them in the data folder, organized as follows:

NCLT Dataset
├── 2012-02-04
│   ├── velodyne_data
│   │   ├── velodyne_sync
│   │   │   ├── xxxxxx.bin
│   │   │   ├── ...
│   ├── ground_truth
│   │   ├── groundtruth_2012-02-04.csv

MulRan Dataset
├── Sejong01
│   ├── Ouster
│   │   ├── xxxxxx.bin
│   │   ├── ...
│   ├── global_pose.csv

KITTI Dataset
├── 08
│   ├── velodyne
│   │   ├── xxxxxx.bin
│   │   ├── ...
│   ├── labels
│   ├── times.txt
│   ├── poses.txt
│   ├── calib.txt

Oxford Radar RobotCar Dataset
├── 2019-01-11-13-24-51
│   ├── velodyne_left
│   │   ├── xxxxxx.png
│   │   ├── ...
│   ├── velodyne_right
│   │   ├── xxxxxx.png
│   │   ├── ...
│   ├── gps.csv
│   ├── ins.csv
├── velodyne_left.txt
├── velodyne_right.txt
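Before generating evaluation sets, you can check that a sequence folder matches the layout above. This is a minimal sketch. `expected_entries` and `missing_entries` are hypothetical helpers, their per-dataset lists are transcribed from the trees above, and they cover only entries inside a sequence folder. The dataset keys mirror the --dataset values used by the evaluation scripts.

```python
from pathlib import Path


def expected_entries(dataset: str, sequence: str) -> list:
    """Relative paths expected inside one sequence folder (transcribed from the trees above)."""
    layouts = {
        "nclt": ["velodyne_data/velodyne_sync", f"ground_truth/groundtruth_{sequence}.csv"],
        "mulran": ["Ouster", "global_pose.csv"],
        "kitti": ["velodyne", "labels", "times.txt", "poses.txt", "calib.txt"],
        "oxford_radar": ["velodyne_left", "velodyne_right", "gps.csv", "ins.csv"],
    }
    return layouts[dataset]


def missing_entries(dataset: str, dataset_root: str, sequence: str) -> list:
    """Return the expected entries that do not exist under dataset_root/sequence."""
    seq_dir = Path(dataset_root) / sequence
    return [rel for rel in expected_entries(dataset, sequence) if not (seq_dir / rel).exists()]


if __name__ == "__main__":
    # Example: check the NCLT map sequence used in the evaluation commands.
    missing = missing_entries("nclt", "./data/NCLT", "2012-02-04")
    print("missing:", missing or "nothing")
```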

The evaluation configuration is defined in utils/config.py; change the parameters there to run different settings.

Generate the evaluation sets for loop closure evaluation. For instance, to generate the evaluation set for the NCLT dataset:

python evaluation/generate_evaluate_sets.py --dataset nclt --dataset_root ./data/NCLT --map_sequence 2012-02-04 --query_sequence 2012-03-17 --map_sampling_distance 20.0 --query_sampling_distance 5.0 --dist_threshold 20.0

where dataset is the evaluation dataset (nclt / mulran / kitti / oxford_radar), dataset_root is the path of the dataset, map_sequence and query_sequence are the sequences used as the map and the queries, map_sampling_distance and query_sampling_distance are the sampling distances for the map and query scans, and dist_threshold is the distance threshold that defines ground-truth loop closure pairs. After running the script, the evaluation set is saved under dataset_root as a pickle file, e.g. ./data/NCLT/test_2012-02-04_2012-03-17_20.0_5.0_20.0.pickle.
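The sampling distances and distance threshold can be illustrated with a small sketch. It assumes planar positions and greedy sampling, in which a frame is kept once it is at least the sampling distance away from the last kept frame. Ground-truth matches are map frames within dist_threshold of a query. This is not necessarily what generate_evaluate_sets.py does, and the real pickle may store more fields. All function names are hypothetical, and the file-name pattern is inferred only from the single example above.

```python
import numpy as np


def sample_by_distance(positions: np.ndarray, min_dist: float) -> list:
    """Greedy sampling: keep a frame once it is at least min_dist from the last kept one."""
    if len(positions) == 0:
        return []
    kept = [0]
    for i in range(1, len(positions)):
        if np.linalg.norm(positions[i] - positions[kept[-1]]) >= min_dist:
            kept.append(i)
    return kept


def true_matches(map_xy: np.ndarray, query_xy: np.ndarray, dist_threshold: float) -> list:
    """For each query, indices of map frames within dist_threshold (ground-truth revisits)."""
    d = np.linalg.norm(query_xy[:, None, :] - map_xy[None, :, :], axis=2)
    return [np.flatnonzero(row <= dist_threshold).tolist() for row in d]


def eval_set_name(map_seq: str, query_seq: str, map_d: float, query_d: float, dist: float) -> str:
    """File-name pattern inferred from the example pickle name above."""
    return f"test_{map_seq}_{query_seq}_{map_d}_{query_d}_{dist}.pickle"


# Toy trajectory along x with one pose per unit of distance.
traj = np.stack([np.arange(11.0), np.zeros(11)], axis=1)
print(sample_by_distance(traj, 3.0))  # → [0, 3, 6, 9]
print(eval_set_name("2012-02-04", "2012-03-17", 20.0, 5.0, 20.0))
```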

To evaluate the loop closure performance of RING / RING++, run:

python evaluation/evaluate.py --dataset nclt --eval_set_filepath ./data/NCLT/test_2012-02-04_2012-03-17_20.0_5.0_20.0.pickle --revisit_thresholds 5.0 10.0 15.0 20.0 --num_k 25 --bev_type occ

where dataset is the evaluation dataset (nclt / mulran / kitti / oxford_radar), eval_set_filepath is the path of the evaluation set, revisit_thresholds is the list of revisit distance thresholds, num_k is the number of nearest neighbors for recall@k, and bev_type is the type of BEV representation (occ / feat). To evaluate RING, use --bev_type occ; to evaluate RING++, use --bev_type feat.
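Recall@k counts a query as a success if any of its k nearest map entries is a true revisit, that is, within the chosen revisit threshold. With several revisit_thresholds, the positives and the recall are computed once per threshold. The sketch below is a minimal illustration and not evaluate.py itself. It assumes plain Euclidean distance between fixed-length descriptors as a stand-in for the repository's matching, and `recall_at_k` is a hypothetical name.

```python
import numpy as np


def recall_at_k(query_desc: np.ndarray, map_desc: np.ndarray, positives: list, num_k: int = 25) -> np.ndarray:
    """recall@1..num_k: share of queries (with >= 1 true match) whose top-k contains a true match.

    positives[q] lists the map indices within the revisit threshold of query q.
    """
    d = np.linalg.norm(query_desc[:, None, :] - map_desc[None, :, :], axis=2)
    top = np.argsort(d, axis=1)[:, :num_k]          # nearest map entries per query
    hits = np.zeros(num_k)
    n_valid = 0
    for q, pos in enumerate(positives):
        if len(pos) == 0:
            continue                                # no revisit: excluded from recall
        n_valid += 1
        pos_set = set(pos)
        ranks = [r for r, m in enumerate(top[q]) if int(m) in pos_set]
        if ranks:
            hits[ranks[0]:] += 1                    # found at rank r => success for every k > r
    return hits / max(n_valid, 1)


map_desc = np.eye(3)
query_desc = np.array([[1.0, 0.0, 0.0], [0.0, 0.9, 0.1]])
# Query 0 matches at rank 0, query 1 at rank 1: recall@1 = 0.5, recall@2 = recall@3 = 1.0
print(recall_at_k(query_desc, map_desc, [[0], [2]], num_k=3))
```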

To plot the precision-recall curve, run:

python evaluation/plot_PR_curve.py

To visualize the pose estimation errors, run:

python evaluation/plot_pose_errors.py

NOTE: You may need to change the results path in these scripts.
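The plotting scripts read saved results. The quantities behind such plots can be sketched as follows, under stated assumptions. For the PR curve, a top-1 match is accepted when its descriptor distance is at most a threshold, and `is_true` flags whether that match is a true revisit. Precision is defined as 1 when nothing is accepted, which is one common convention but not necessarily the one the scripts use. For pose errors, the heading error is wrapped to [0°, 180°] and the translation error is the planar Euclidean distance. All function names are hypothetical.

```python
import numpy as np


def precision_recall(distances, is_true, thresholds):
    """Precision/recall of accepted top-1 matches for each distance threshold."""
    distances = np.asarray(distances, dtype=float)
    is_true = np.asarray(is_true, dtype=bool)
    precision, recall = [], []
    for t in thresholds:
        accepted = distances <= t
        tp = np.sum(accepted & is_true)     # accepted and correct
        fp = np.sum(accepted & ~is_true)    # accepted but wrong
        fn = np.sum(~accepted & is_true)    # correct match rejected by the threshold
        precision.append(tp / (tp + fp) if tp + fp else 1.0)
        recall.append(tp / (tp + fn) if tp + fn else 0.0)
    return np.array(precision), np.array(recall)


def yaw_error_deg(est_yaw, gt_yaw):
    """Absolute heading error in degrees, wrapped to [0, 180]."""
    diff = (np.asarray(est_yaw) - np.asarray(gt_yaw) + np.pi) % (2 * np.pi) - np.pi
    return np.degrees(np.abs(diff))


def translation_error(est_xy, gt_xy):
    """Euclidean distance between estimated and ground-truth planar positions."""
    return np.linalg.norm(np.asarray(est_xy, dtype=float) - np.asarray(gt_xy, dtype=float), axis=-1)


p, r = precision_recall([0.1, 0.2, 0.3, 0.4], [True, True, False, True], thresholds=[0.25, 0.5])
print(p, r)
print(yaw_error_deg(np.radians(179.0), np.radians(-179.0)))  # about 2 degrees, not 358
```

Wrapping the heading difference matters because estimates near ±180° would otherwise show spurious errors close to 360°.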

If you find this work useful, please cite:

@article{xu2023ring++,
  title={RING++: Roto-Translation-Invariant Gram for Global Localization on a Sparse Scan Map},
  author={Xu, Xuecheng and Lu, Sha and Wu, Jun and Lu, Haojian and Zhu, Qiuguo and Liao, Yiyi and Xiong, Rong and Wang, Yue},
  journal={IEEE Transactions on Robotics},
  year={2023},
  publisher={IEEE}
}

@inproceedings{lu2023deepring,
  title={DeepRING: Learning Roto-translation Invariant Representation for LiDAR based Place Recognition},
  author={Lu, Sha and Xu, Xuecheng and Tang, Li and Xiong, Rong and Wang, Yue},
  booktitle={2023 IEEE International Conference on Robotics and Automation (ICRA)},
  pages={1904--1911},
  year={2023},
  organization={IEEE}
}

@inproceedings{lu2022one,
  title={One ring to rule them all: Radon sinogram for place recognition, orientation and translation estimation},
  author={Lu, Sha and Xu, Xuecheng and Yin, Huan and Chen, Zexi and Xiong, Rong and Wang, Yue},
  booktitle={2022 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)},
  pages={2778--2785},
  year={2022},
  organization={IEEE}
}

If you have any questions, please contact

Sha Lu: lusha@zju.edu.cn

The code is released under the MIT License.