CenterNet [1] is a point-based detector that works without anchor-box proposals. This design avoids most of the resource-intensive post-processing known from Single Shot MultiBox Detectors [2]: Non-Maximum Suppression can be simplified to a search for local peaks in the center-point heatmap.
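The simplified suppression step can be illustrated with a small NumPy sketch. It is not taken from this repository: the function name `find_peaks`, the 3x3 neighbourhood, and the `threshold` value are assumptions chosen for illustration, following the peak-extraction idea described in [1].

```python
import numpy as np

def find_peaks(heatmap, threshold=0.5):
    """Return (row, col, score) for every local maximum in a 2D heatmap.

    A cell counts as a peak when it is >= all 8 neighbours (3x3 max filter)
    and its score is above `threshold` (hypothetical default).
    """
    heatmap = np.asarray(heatmap, dtype=float)
    h, w = heatmap.shape
    # Pad with -inf so border cells are only compared with real neighbours.
    padded = np.pad(heatmap, 1, mode="constant", constant_values=-np.inf)
    # Stack the 9 shifted views of the 3x3 neighbourhood and take their maximum.
    neighbourhood_max = np.max(
        [padded[dy:dy + h, dx:dx + w] for dy in range(3) for dx in range(3)],
        axis=0,
    )
    is_peak = (heatmap == neighbourhood_max) & (heatmap > threshold)
    rows, cols = np.nonzero(is_peak)
    return [(int(r), int(c), float(heatmap[r, c])) for r, c in zip(rows, cols)]

# Toy heatmap for one class: two objects plus a weaker response next to the first.
heatmap = np.zeros((8, 8))
heatmap[2, 3] = 0.9
heatmap[2, 4] = 0.6  # adjacent to a stronger peak, so it is suppressed
heatmap[6, 1] = 0.7
peaks = find_peaks(heatmap)
```

Each surviving peak is treated as one detected center, so no pairwise box-overlap comparison is needed.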

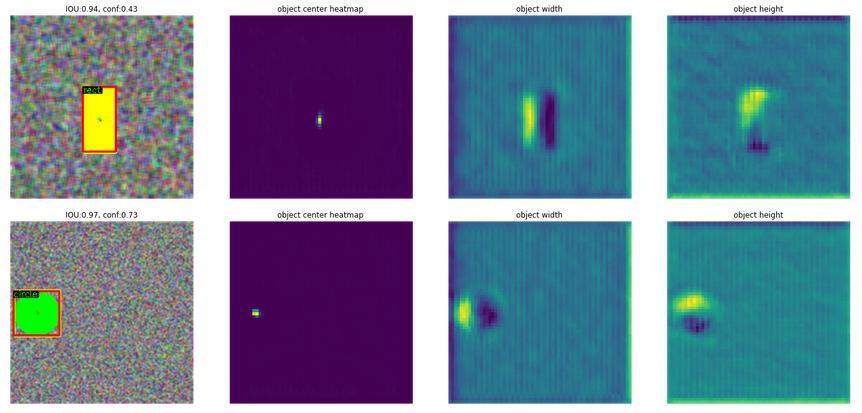

With this approach, the object's center point and other properties (size, bounding box offset, depth, orientation, or others) are regressed directly, which makes the detector usable for a wide range of detection tasks.

This implementation provides an optimized version with low computational complexity that can be easily deployed on embedded devices.

NOTE: This is an unofficial implementation of CenterNet. For the original one, consult the article [1].

The architecture has the following parts:

- Backbone - can be adjusted to the computational complexity needed. Currently, a slim U-Net [3] is used to generate the necessary feature maps.

- CenterNet head - generates heatmaps of size head width x head height with (number of classes) + 2 (center point offset) + 2 (width and height) channels. It can easily be extended to regress other types of values, e.g. for 3D object detection or human pose detection.

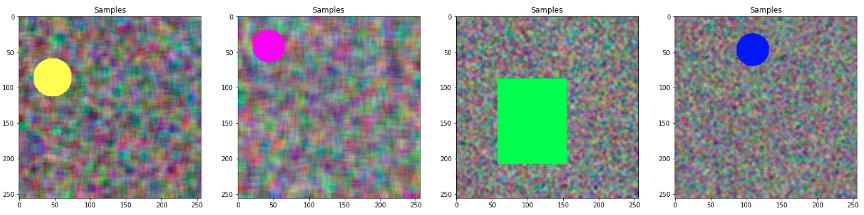
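To make the head layout more concrete, here is a minimal sketch of how one center cell could be decoded into a bounding box. The channel order, the channels-last tensor shape, the `stride` value, and the function name `decode_detection` are all assumptions made for illustration; the actual layout used by this implementation may differ.

```python
import numpy as np

def decode_detection(head_output, row, col, num_classes, stride=4):
    """Turn one center cell of the head output into (class_id, score, box).

    ASSUMED layout (not confirmed by the source): channels-last tensor of shape
    (head_height, head_width, num_classes + 4), ordered as
    [class scores..., offset_x, offset_y, width, height], with offsets in
    head cells and width/height in input-image pixels.
    """
    cell = head_output[row, col]
    class_scores = cell[:num_classes]
    offset_x, offset_y, width, height = cell[num_classes:num_classes + 4]
    class_id = int(np.argmax(class_scores))
    # Center in input pixels: cell index plus sub-cell offset, scaled by the stride.
    center_x = (col + offset_x) * stride
    center_y = (row + offset_y) * stride
    box = (center_x - width / 2, center_y - height / 2,
           center_x + width / 2, center_y + height / 2)
    return class_id, float(class_scores[class_id]), tuple(float(v) for v in box)

# Hypothetical 16x16 head with 2 classes and a single active center cell.
head = np.zeros((16, 16, 2 + 4))
head[5, 7] = [0.1, 0.95, 0.5, 0.25, 20.0, 12.0]
class_id, score, box = decode_detection(head, row=5, col=7, num_classes=2)
```

Extending the head to other tasks would, under the same assumption, mean appending further channels (for example depth or orientation) and reading them from the same cell.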

The implementation includes a sample generator that draws foreground objects, such as circles and rectangles, in different colors on a noisy background.
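A generator of this kind could look roughly like the following sketch. It is not the generator shipped with this implementation: the function name `generate_sample`, the image size, the value ranges, and the class numbering are assumptions for illustration only.

```python
import numpy as np

def generate_sample(size=64, rng=None):
    """Return (image, class_id, box): one random shape on a noisy background.

    Hypothetical conventions: class_id 0 = circle, 1 = rectangle;
    box = (x_min, y_min, x_max, y_max) in pixels; image values in [0, 1).
    """
    rng = np.random.default_rng() if rng is None else rng
    # Noisy background: uniform noise in the darker half of the value range.
    image = rng.uniform(0.0, 0.5, size=(size, size, 3))
    # Bright random foreground color so the object stands out from the noise.
    color = rng.uniform(0.5, 1.0, size=3)
    half_w, half_h = (int(v) for v in rng.integers(4, size // 4, size=2))
    class_id = int(rng.integers(0, 2))
    if class_id == 0:
        half_h = half_w  # a circle has a single radius
    # Pick a center that keeps the whole shape inside the image.
    cx = int(rng.integers(half_w, size - half_w))
    cy = int(rng.integers(half_h, size - half_h))
    ys, xs = np.mgrid[0:size, 0:size]
    if class_id == 0:
        mask = (xs - cx) ** 2 + (ys - cy) ** 2 <= half_w ** 2
    else:
        mask = (np.abs(xs - cx) <= half_w) & (np.abs(ys - cy) <= half_h)
    image[mask] = color
    box = (cx - half_w, cy - half_h, cx + half_w, cy + half_h)
    return image, class_id, box

image, class_id, box = generate_sample(size=64, rng=np.random.default_rng(0))
```

Because the ground-truth box and class are known exactly for every generated image, such samples are convenient for checking the training pipeline before moving to real data.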

Measurements:

Total params: 1,029,985

Backbone computational complexity [FLOPs]: 0.263 G

Backbone + head computational complexity [FLOPs]: 0.581 G

IOU: 92.55 %

Class Accuracy: 100.00 %
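For reference, the IOU (intersection over union) of two axis-aligned boxes is commonly computed as in the sketch below. How the figure above was actually measured (averaging, matching of predictions to ground truth) is not stated here, so the function name `box_iou` and the box format are assumptions.

```python
def box_iou(box_a, box_b):
    """IoU of two axis-aligned boxes given as (x_min, y_min, x_max, y_max)."""
    # Width and height of the overlap region, clamped at zero for disjoint boxes.
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    intersection = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0

# Two 10x10 boxes shifted by half their width overlap in a 5x10 region.
iou = box_iou((0, 0, 10, 10), (5, 0, 15, 10))
```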

References:

- [1] Objects as Points - arXiv:1904.07850 [cs.CV]

- [2] SSD: Single Shot MultiBox Detector - arXiv:1512.02325 [cs.CV]

- [3] U-Net: Convolutional Networks for Biomedical Image Segmentation - arXiv:1505.04597 [cs.CV]

- [4] Focal Loss for Dense Object Detection

/Enjoy.