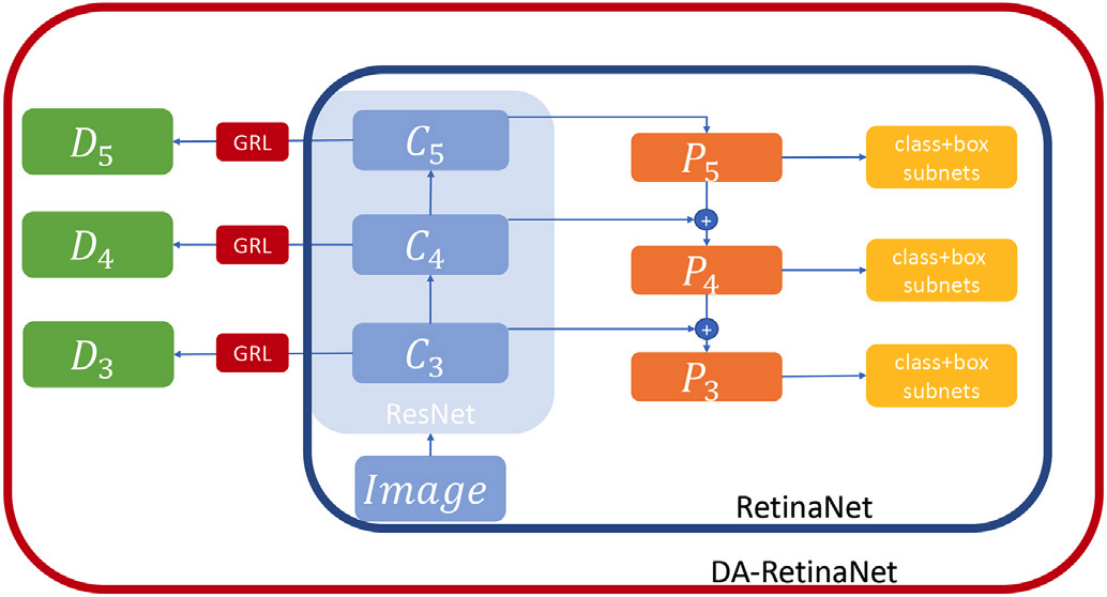

This is the implementation of our Image and Vision Computing 2021 paper, 'An unsupervised domain adaptation scheme for single-stage artwork recognition in cultural sites'. The goal is to reduce the gap between the source and target distributions. This improves object detector performance on the target domain when training and test data come from different distributions. The original paper can be found here.

If you want to use this code with your own dataset, please follow the guide below.

Please leave a star ⭐ and cite the following paper if you use this repository for your project.

    @article{PASQUALINO2021104098,
      title = "An unsupervised domain adaptation scheme for single-stage artwork recognition in cultural sites",
      journal = "Image and Vision Computing",
      pages = "104098",
      year = "2021",
      issn = "0262-8856",
      doi = "https://doi.org/10.1016/j.imavis.2021.104098",
      author = "Giovanni Pasqualino and Antonino Furnari and Giovanni Signorello and Giovanni Maria Farinella",
    }

You can use this repository in one of three ways: on Google Colab, with a local Detectron2 installation, or inside a Docker container.

NB: Detectron2 0.6 is required. This code will not work with other versions.

Quickstart here 👉

Or load and run `DA-RetinaNet.ipynb` on Google Colab, following the instructions inside the notebook.

For a local installation, follow the official guide to install Detectron2 0.6, or:

1. Download the official Detectron2 0.6 from here.
2. Unzip the file and rename the extracted folder to `detectron2`.
3. Run `python -m pip install -e detectron2`.
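Because only Detectron2 0.6 is supported, it can help to confirm the installed version before running anything. A minimal sketch, assuming Detectron2 is installed as a pip distribution named `detectron2` (as with the editable install above). The helper names are hypothetical and not part of this repository:

```python
from importlib.metadata import PackageNotFoundError, version


def version_matches(installed: str, required: str = "0.6") -> bool:
    """True if `installed` is the required release, ignoring a local tag such as '+cu111'."""
    base = installed.split("+", 1)[0]
    return base == required


def detectron2_is_supported(required: str = "0.6") -> bool:
    """Check the installed detectron2 distribution; False if it is not installed at all."""
    try:
        return version_matches(version("detectron2"), required)
    except PackageNotFoundError:
        return False


if __name__ == "__main__":
    print("Detectron2 0.6 installed:", detectron2_is_supported())
```

Comparing only the part before `+` accepts both a source build (`0.6`) and a prebuilt wheel tagged with its CUDA version.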

To use Docker, follow these steps:

1. `cd docker/`
2. Build the image: `docker build -t detectron2:v0 .`
3. Launch the container: `docker run --gpus all -it --shm-size=8gb -v /home/yourpath/:/home/yourpath --name=name_container detectron2:v0`

If you exit the container, you can restart it and open a shell inside it with:

`docker start name_container`

`docker exec -it name_container /bin/bash`

Create the Cityscapes and Foggy Cityscapes datasets by following the instructions available here.

The UDA-CH dataset is available here.

To use this code with your own dataset, arrange it in COCO or PASCAL VOC format.
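For reference, a COCO-style detection file holds `images`, `annotations` and `categories` lists linked by ids. The sketch below builds a minimal one in plain Python. The file name, image size, box, class names and helper names are hypothetical placeholders, not part of this repository:

```python
import json
from pathlib import Path


def build_minimal_coco(class_names):
    """Return a minimal COCO-style detection dict: one image with one box (placeholder values)."""
    return {
        "images": [
            {"id": 1, "file_name": "img_0001.jpg", "width": 640, "height": 480},
        ],
        "annotations": [
            {
                "id": 1,
                "image_id": 1,     # links to images[].id
                "category_id": 1,  # links to categories[].id
                "bbox": [100.0, 50.0, 200.0, 120.0],  # [x, y, width, height] in pixels
                "area": 200.0 * 120.0,
                "iscrowd": 0,
            },
        ],
        # COCO category ids conventionally start at 1.
        "categories": [{"id": i + 1, "name": name} for i, name in enumerate(class_names)],
    }


def save_coco(coco, json_path):
    """Write the dict to the JSON file that is later passed to register_coco_instances."""
    Path(json_path).write_text(json.dumps(coco, indent=2))
```

For example, `save_coco(build_minimal_coco(["class_a", "class_b"]), "source_train.json")` writes a file whose path would go in the `"path_annotations"` argument.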

For COCO annotations, register your datasets inside `uda_train.py`:

    from detectron2.data.datasets import register_coco_instances

    # Arguments: (dataset name, metadata, path to the COCO JSON file, image root directory)
    register_coco_instances("dataset_name_source_training", {}, "path_annotations", "path_images")
    register_coco_instances("dataset_name_target_training", {}, "path_annotations", "path_images")
    register_coco_instances("dataset_name_target_test", {}, "path_annotations", "path_images")

For PASCAL VOC annotations, register your datasets inside `cityscape_train.py`:

    from detectron2.data.datasets import register_pascal_voc

    classes = ['car', 'person', 'rider', 'truck', 'bus', 'train', 'motorcycle', 'bicycle']
    # Arguments: (dataset name, dataset root, split name, year, class names)
    register_pascal_voc("city_trainS", "cityscape/VOC2007/", "train_s", 2007, classes)
    register_pascal_voc("city_trainT", "cityscape/VOC2007/", "train_t", 2007, classes)
    register_pascal_voc("city_testT", "cityscape/VOC2007/", "test_t", 2007, classes)

Replace the arguments passed to `register_pascal_voc()` to match your dataset names and classes.
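As far as we can tell from Detectron2's `pascal_voc.py` loader, `register_pascal_voc(name, dirname, split, year, class_names)` reads the image ids listed in `<dirname>/ImageSets/Main/<split>.txt`, then `<dirname>/Annotations/<id>.xml` and `<dirname>/JPEGImages/<id>.jpg` for each id. Verify this against your installed version. The hypothetical helpers below show where each file goes and write a minimal annotation with only the fields that loader uses: `size`, and for each `object` its `name` and `bndbox`.

```python
import xml.etree.ElementTree as ET
from pathlib import Path


def voc_paths(dirname, split, file_id):
    """Files the PASCAL VOC loader reads for one image id of a split."""
    root = Path(dirname)
    return {
        "split_list": root / "ImageSets" / "Main" / f"{split}.txt",  # one image id per line
        "annotation": root / "Annotations" / f"{file_id}.xml",
        "image": root / "JPEGImages" / f"{file_id}.jpg",
    }


def voc_annotation_xml(width, height, objects):
    """Minimal VOC XML; `objects` is a list of (class_name, (xmin, ymin, xmax, ymax))."""
    ann = ET.Element("annotation")
    size = ET.SubElement(ann, "size")
    ET.SubElement(size, "width").text = str(width)
    ET.SubElement(size, "height").text = str(height)
    for name, box in objects:
        obj = ET.SubElement(ann, "object")
        ET.SubElement(obj, "name").text = name  # must be one of the registered class names
        ET.SubElement(obj, "difficult").text = "0"
        bndbox = ET.SubElement(obj, "bndbox")
        # Box corners in pixels, 1-based as in the original VOC annotations.
        for tag, value in zip(("xmin", "ymin", "xmax", "ymax"), box):
            ET.SubElement(bndbox, tag).text = str(value)
    return ET.tostring(ann, encoding="unicode")
```

With the registration shown for Cityscapes, the `train_s` split would be listed in `cityscape/VOC2007/ImageSets/Main/train_s.txt`.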

Replace `detectron2/modeling/meta_arch/dense_detector.py` with our `dense_detector.py`.

Do the same for `fpn.py` in `detectron2/modeling/backbone/`.
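The file replacement can also be scripted. This is a hedged sketch with several assumptions. It assumes the modified `dense_detector.py` and `fpn.py` sit at the root of this repository. It also assumes `detectron2_pkg_dir` is the Detectron2 package folder that contains `modeling/`. For the editable install above that would be `detectron2/detectron2`, and `Path(detectron2.__file__).parent` also points to it. The helper names are hypothetical. The script keeps a `.orig` copy of each original file so the change can be undone.

```python
import shutil
from pathlib import Path

# Modified file name -> sub-folder of the Detectron2 package that holds the file it replaces.
REPLACEMENTS = {
    "dense_detector.py": Path("modeling") / "meta_arch",
    "fpn.py": Path("modeling") / "backbone",
}


def replacement_targets(detectron2_pkg_dir):
    """Map each modified file to the Detectron2 file it overwrites."""
    pkg = Path(detectron2_pkg_dir)
    return {name: pkg / subdir / name for name, subdir in REPLACEMENTS.items()}


def patch_detectron2(repo_dir, detectron2_pkg_dir):
    """Copy the modified files over Detectron2's originals, keeping one .orig backup of each."""
    repo = Path(repo_dir)
    for name, target in replacement_targets(detectron2_pkg_dir).items():
        backup = target.with_name(target.name + ".orig")
        if target.exists() and not backup.exists():
            shutil.copy2(target, backup)  # back up the untouched original only once
        shutil.copy2(repo / name, target)
```

For example, `patch_detectron2(".", "detectron2/detectron2")` would apply both replacements from the repository root.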

Run `uda_train.py` for COCO annotations or `cityscape_train.py` for PASCAL VOC annotations.

The model trained for Cityscapes to Foggy Cityscapes adaptation is available at this link:

DA-RetinaNet_Cityscapes

Models trained on the proposed UDA-CH dataset are available at these links:

DA-RetinaNet

DA-RetinaNet-CycleGAN

To test a model, load the trained weights, set the number of iterations to 0, and rerun the same script used for training.
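How the iteration count is set depends on the script. If it builds a standard Detectron2 config, the evaluation-only settings could be expressed as overrides for `cfg.merge_from_list`. This uses the same flat key/value format as Detectron2's command-line `opts`. `MODEL.WEIGHTS` and `SOLVER.MAX_ITER` are standard Detectron2 config keys. Whether these scripts read them is an assumption, and the helper name and checkpoint path are hypothetical.

```python
def eval_only_overrides(weights_path):
    """Flat [key, value, key, value, ...] list suitable for cfg.merge_from_list."""
    return [
        "MODEL.WEIGHTS", weights_path,  # trained checkpoint, e.g. one downloaded from the links above
        "SOLVER.MAX_ITER", "0",         # zero training iterations
    ]


# Usage inside the training script, where cfg is its Detectron2 config:
#     cfg.merge_from_list(eval_only_overrides("path/to/model_final.pth"))
```

String values are parsed by the config system, so `"0"` is stored as the integer `0`.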

Results of adaptation from Cityscapes to Foggy Cityscapes. Scores of methods marked with “*” are as reported by the authors in their respective papers.

| Model | mAP |
|---|---|
| Faster RCNN* | 20.30% |
| DA-Faster RCNN* | 27.60% |
| StrongWeak* | 34.30% |
| Diversify and Match* | 34.60% |
| DA-RetinaNet | 44.87% |
| RetinaNet (Oracle) | 53.46% |

Results of DA-Faster RCNN, Strong-Weak and the proposed DA-RetinaNet, with and without image-to-image translation (CycleGAN).

| Object Detector | No image-to-image translation | CycleGAN (Synthetic to Real) |
|---|---|---|
| DA-Faster RCNN | 12.94% | 33.20% |
| StrongWeak | 25.12% | 47.70% |
| DA-RetinaNet | 31.04% | 58.01% |
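The absolute gain that CycleGAN translation brings to each detector can be read directly from the table's two columns: without translation, and with synthetic-to-real translation. A small sketch. The numbers are copied from the table, and only the differences are computed here.

```python
# Scores copied from the table: (no translation, CycleGAN synthetic-to-real).
RESULTS = {
    "DA-Faster RCNN": (12.94, 33.20),
    "StrongWeak": (25.12, 47.70),
    "DA-RetinaNet": (31.04, 58.01),
}


def cyclegan_gains(results):
    """Absolute gain in percentage points from adding CycleGAN translation."""
    return {name: round(translated - plain, 2) for name, (plain, translated) in results.items()}


if __name__ == "__main__":
    for name, gain in cyclegan_gains(RESULTS).items():
        print(f"{name}: +{gain:.2f} points")
```

On these values the gains are 20.26, 22.58 and 26.97 points for DA-Faster RCNN, StrongWeak and DA-RetinaNet respectively.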