Official PyTorch implementation of Exposure Bracketing is All You Need

Exposure Bracketing is All You Need for Unifying Image Restoration and Enhancement Tasks

Zhilu Zhang, Shuohao Zhang, Renlong Wu, Zifei Yan, Wangmeng Zuo

Harbin Institute of Technology, China

In this work, we propose to utilize bracketing photography to unify image restoration and enhancement tasks, including image denoising, deblurring, high dynamic range reconstruction, and super-resolution.

- 2024-05-01: The training, inference, and evaluation code for the synthetic dataset has been released.

- 2024-03-26: The synthetic dataset has been released.

- 2024-02-05: The code, pre-trained models, and ISP for the Bracketing Image Restoration and Enhancement Challenge have been released in the NTIRE2024 folder. (The code has been restructured. If you encounter any problems with it, please contact us.)

- 2024-02-04: We are organizing the Bracketing Image Restoration and Enhancement Challenge at NTIRE 2024 (CVPR Workshop), including Track 1 (BracketIRE Task) and Track 2 (BracketIRE+ Task). Details can be seen in NTIRE2024/README.md. Welcome to participate!

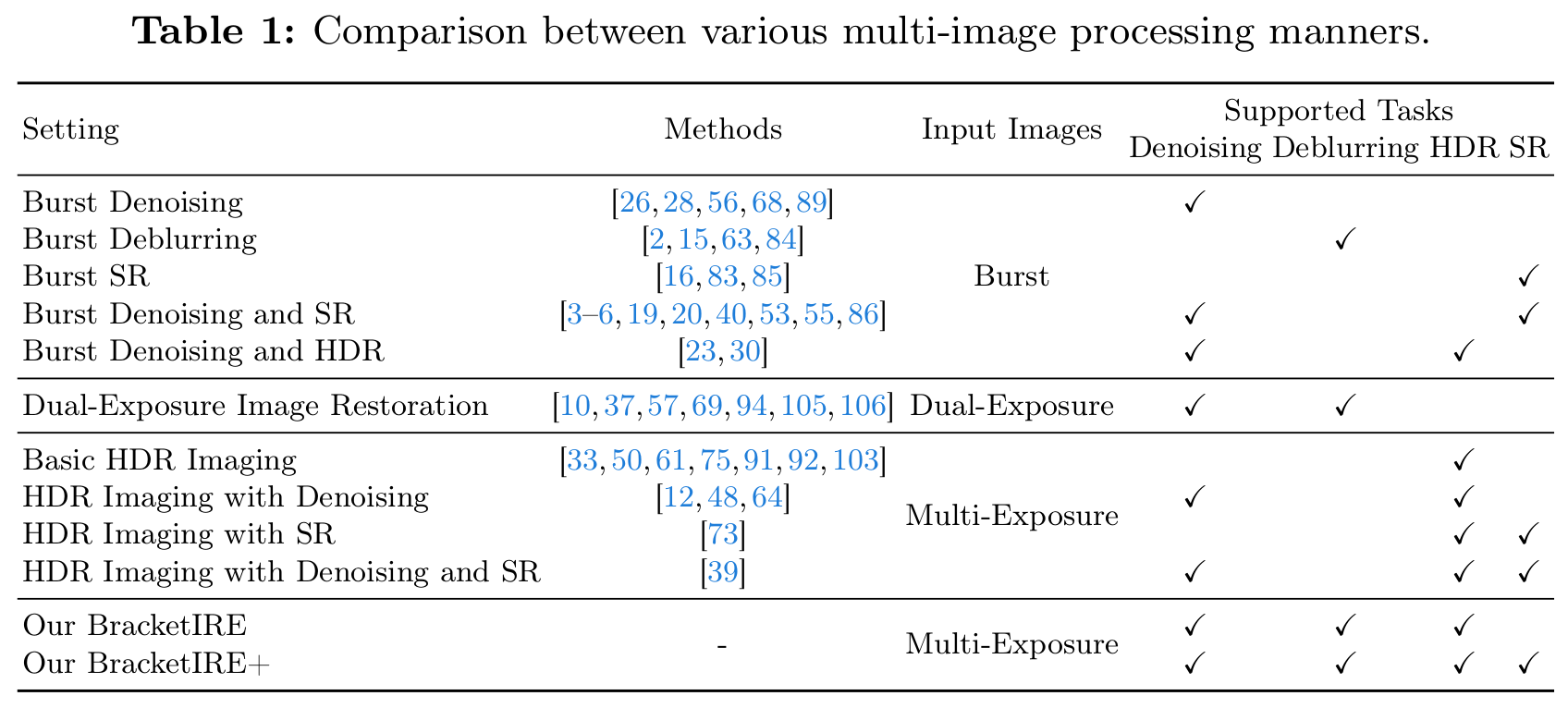

It is challenging but highly desirable to acquire high-quality photos with clear content in low-light environments. Although multi-image processing methods (using burst, dual-exposure, or multi-exposure images) have made significant progress in addressing this issue, they typically focus on a single restoration or enhancement task and therefore do not fully exploit the multi-image input. Motivated by the fact that multi-exposure images are complementary in denoising, deblurring, high dynamic range imaging, and super-resolution, we propose to utilize bracketing photography to unify restoration and enhancement tasks in this work. Because real-world paired data are difficult to collect, we suggest a solution that first pre-trains the model with synthetic paired data and then adapts it to real-world unlabeled images. In particular, a temporally modulated recurrent network (TMRNet) and a self-supervised adaptation method are proposed. Moreover, we construct a data simulation pipeline to synthesize pairs and collect real-world images from 200 nighttime scenarios. Experiments on both datasets show that our method performs favorably against state-of-the-art multi-image processing methods.

- Python 3.x and PyTorch 1.12.

- OpenCV, NumPy, Pillow, timm, tqdm, scikit-image and tensorboardX.
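Before training, it can help to confirm that the packages listed above are importable. The following is a minimal sketch, not part of the repository: `find_missing` and `REQUIRED_MODULES` are hypothetical names, and the list simply maps each package to its usual import name (for example, OpenCV is imported as `cv2` and scikit-image as `skimage`).

```python
import importlib.util

# Usual import names for the packages listed above (assumed mapping).
REQUIRED_MODULES = [
    "torch", "cv2", "numpy", "PIL", "timm", "tqdm", "skimage", "tensorboardX",
]


def find_missing(modules):
    """Return the module names that cannot be located in this environment."""
    return [name for name in modules if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = find_missing(REQUIRED_MODULES)
    if missing:
        print("Missing modules:", ", ".join(missing))
    else:
        print("All required modules were found.")
```

This only checks that the modules can be found; it does not verify versions such as PyTorch 1.12.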

Please download the data from Baidu Netdisk (Chinese: 百度网盘).

- BracketIRE task: https://pan.baidu.com/s/1Jy8G36Njg68UbzsbOEKdZQ?pwd=v4nn

- BracketIRE+ task: https://pan.baidu.com/s/1AKHppSl8ejKR8a8-tnVigA?pwd=kvsd

TODO

- Link: https://pan.baidu.com/s/10bQxDHaZ0sKB87tRooIewg?pwd=wtdg

- Place the `syn` and `syn_plus` folders in the `ckpt` folder.
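A quick way to check that the downloaded models ended up in the right place is sketched below. It assumes that `ckpt` sits directly under the repository root, which is an assumption rather than something stated above; `missing_ckpt_folders` is a hypothetical helper and not part of the repository.

```python
from pathlib import Path

# Folder names taken from the instruction above.
EXPECTED_SUBFOLDERS = ("syn", "syn_plus")


def missing_ckpt_folders(repo_root="."):
    """Return the expected sub-folders that are absent from <repo_root>/ckpt."""
    ckpt_dir = Path(repo_root) / "ckpt"  # assumed location of the ckpt folder
    return [name for name in EXPECTED_SUBFOLDERS if not (ckpt_dir / name).is_dir()]


if __name__ == "__main__":
    missing = missing_ckpt_folders(".")
    print("Missing folders:", missing if missing else "none")
```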

- BracketIRE task

  - Modify `dataroot`

  - Run `sh ./syn_bash/train_syn.sh`

- BracketIRE+ task

  - Modify `dataroot` and `load_path`

  - Run `sh ./syn_bash/train_synplus.sh`

- BracketIRE task

  - Modify `dataroot` and `name`

  - Run `sh ./syn_bash/test_syn.sh`

- BracketIRE+ task

  - Modify `dataroot` and `name`

  - Run `sh ./syn_bash/test_synplus.sh`

TODO

- Note that the evaluation method is different from that of 'Bracketing Image Restoration and Enhancement Challenges on NTIRE 2024'.

- Here, we exclude invalid pixels around the image for evaluation, which is more reasonable.

- Related explanations can be found in Appendix B in our revised paper.

- We recommend that you use the new evaluation method in your future work.
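To illustrate the idea of excluding pixels around the image border, here is a minimal PSNR sketch. It is not the repository's evaluation code: the function name, the `border` width of 10 pixels, and the `[0, 1]` value range are all assumptions made for illustration, and the exact definition of invalid pixels is the one given in Appendix B of the paper.

```python
import numpy as np


def psnr_excluding_border(pred, target, border=10, max_val=1.0):
    """PSNR computed after cropping `border` pixels from every image side.

    `border` and `max_val` are illustrative defaults, not the paper's values.
    Inputs are arrays of shape (H, W) or (H, W, C).
    """
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    if pred.shape != target.shape:
        raise ValueError("pred and target must have the same shape")
    height, width = pred.shape[:2]
    if border < 0 or 2 * border >= min(height, width):
        raise ValueError("border is negative or too large for this image")
    if border > 0:
        # Drop the outer ring of pixels so they do not affect the score.
        pred = pred[border:height - border, border:width - border]
        target = target[border:height - border, border:width - border]
    mse = np.mean((pred - target) ** 2)
    if mse == 0:
        return float("inf")
    return float(10.0 * np.log10(max_val ** 2 / mse))
```

With this sketch, errors confined to the cropped ring no longer lower the score, which is the effect the notes above describe.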

- You can specify which GPU to use by `--gpu_ids`, e.g., `--gpu_ids 0,1`, `--gpu_ids 3`, `--gpu_ids -1` (for CPU mode). In the default setting, all GPUs are used.

- You can refer to options for more arguments.
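The `--gpu_ids` convention described above (a comma-separated list, with `-1` meaning CPU) can be sketched as follows. This is a hypothetical illustration of how such a string might be interpreted, not the repository's option parser; `parse_gpu_ids` and `primary_device` are names introduced here.

```python
def parse_gpu_ids(value):
    """Turn a string such as "0,1" into [0, 1]; "-1" yields [] (CPU mode)."""
    ids = [int(token) for token in value.split(",") if token.strip()]
    # Negative ids are treated as "no GPU", matching the -1 convention above.
    return [gpu_id for gpu_id in ids if gpu_id >= 0]


def primary_device(gpu_ids):
    """Return a device string for the first listed GPU, or "cpu" if none."""
    return f"cuda:{gpu_ids[0]}" if gpu_ids else "cpu"


if __name__ == "__main__":
    for arg in ("0,1", "3", "-1"):
        ids = parse_gpu_ids(arg)
        print(arg, "->", ids, primary_device(ids))
```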

If you find it useful in your research, please consider citing:

@article{BracketIRE,

title={Exposure Bracketing is All You Need for Unifying Image Restoration and Enhancement Tasks},

author={Zhang, Zhilu and Zhang, Shuohao and Wu, Renlong and Yan, Zifei and Zuo, Wangmeng},

journal={arXiv preprint arXiv:2401.00766},

year={2024}

}

@inproceedings{ntire2024bracketing,

title={{NTIRE} 2024 Challenge on Bracketing Image Restoration and Enhancement: Datasets, Methods and Results},

author={Zhang, Zhilu and Zhang, Shuohao and Wu, Renlong and Zuo, Wangmeng and Timofte, Radu and others},

booktitle={CVPR Workshops},

year={2024}

}

This work is also a continuation of our previous works, i.e., SelfIR (NeurIPS 2022) and SelfHDR (ICLR 2024). If you are interested in them, please consider citing:

@article{SelfIR,

title={Self-supervised Image Restoration with Blurry and Noisy Pairs},

author={Zhang, Zhilu and Xu, Rongjian and Liu, Ming and Yan, Zifei and Zuo, Wangmeng},

journal={NeurIPS},

year={2022}

}

@inproceedings{SelfHDR,

title={Self-Supervised High Dynamic Range Imaging with Multi-Exposure Images in Dynamic Scenes},

author={Zhang, Zhilu and Wang, Haoyu and Liu, Shuai and Wang, Xiaotao and Lei, Lei and Zuo, Wangmeng},

booktitle={ICLR},

year={2024}

}