Shijie Zhou, Haoran Chang*, Sicheng Jiang*, Zhiwen Fan, Zehao Zhu, Dejia Xu, Pradyumna Chari, Suya You, Zhangyang Wang, Achuta Kadambi (* indicates equal contribution)

| Webpage | Full Paper | Video | Viewer Pre-built for Windows

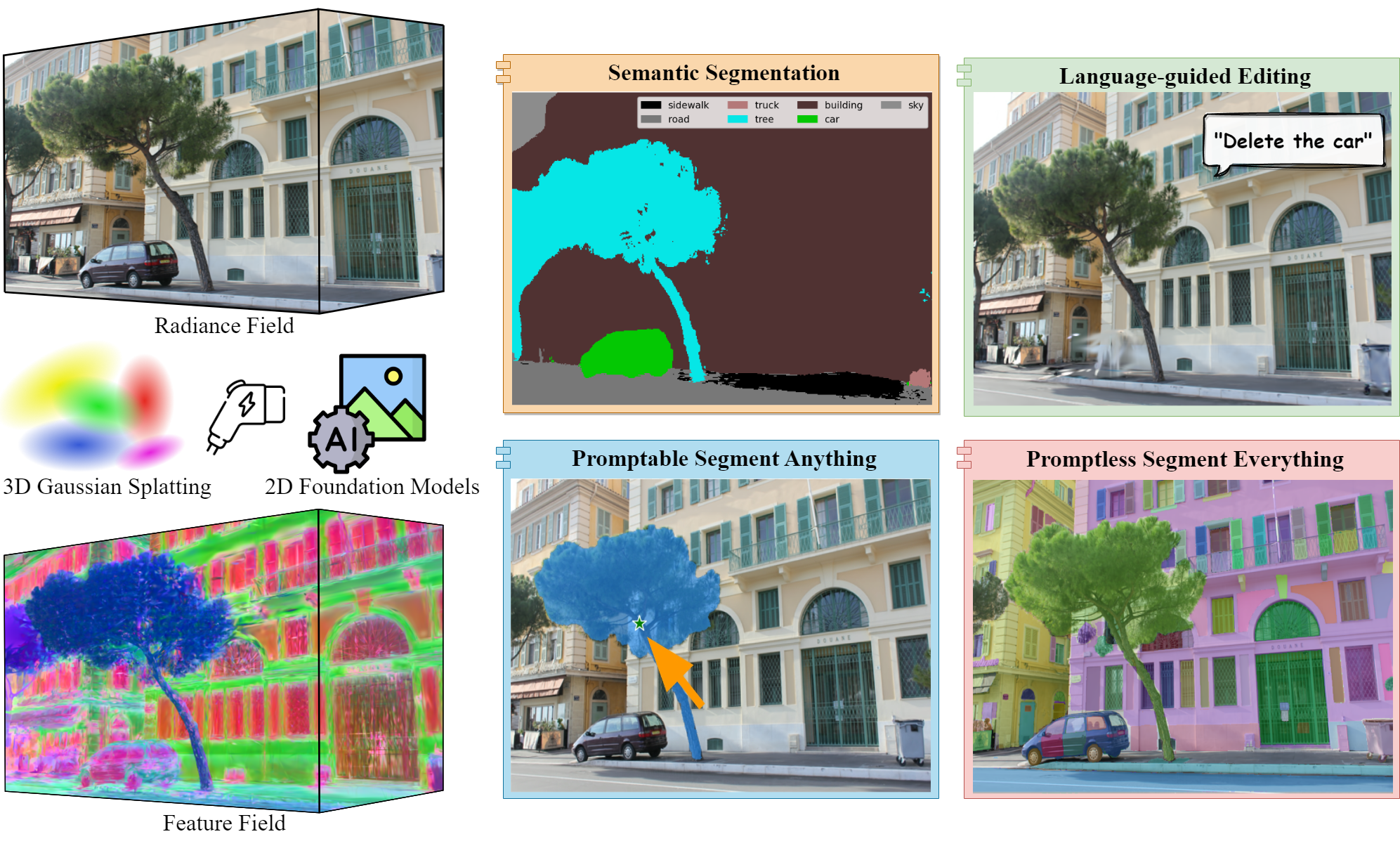

Abstract: 3D scene representations have gained immense popularity in recent years. Methods that use Neural Radiance fields are versatile for traditional tasks such as novel view synthesis. In recent times, some work has emerged that aims to extend the functionality of NeRF beyond view synthesis, for semantically aware tasks such as editing and segmentation using 3D feature field distillation from 2D foundation models. However, these methods have two major limitations: (a) they are limited by the rendering speed of NeRF pipelines, and (b) implicitly represented feature fields suffer from continuity artifacts reducing feature quality. Recently, 3D Gaussian Splatting has shown state-of-the-art performance on real-time radiance field rendering. In this work, we go one step further: in addition to radiance field rendering, we enable 3D Gaussian splatting on arbitrary-dimension semantic features via 2D foundation model distillation. This translation is not straightforward: naively incorporating feature fields in the 3DGS framework encounters significant challenges, notably the disparities in spatial resolution and channel consistency between RGB images and feature maps. We propose architectural and training changes to efficiently avert this problem. Our proposed method is general, and our experiments showcase novel view semantic segmentation, language-guided editing and segment anything through learning feature fields from state-of-the-art 2D foundation models such as SAM and CLIP-LSeg. Across experiments, our distillation method is able to provide comparable or better results, while being significantly faster to both train and render. Additionally, to the best of our knowledge, we are the first method to enable point and bounding-box prompting for radiance field manipulation, by leveraging the SAM model.

@article{zhou2023feature,
  title = {Feature 3DGS: Supercharging 3D Gaussian Splatting to Enable Distilled Feature Fields},
  author = {Zhou, Shijie and Chang, Haoran and Jiang, Sicheng and Fan, Zhiwen and Zhu, Zehao and Xu, Dejia and Chari, Pradyumna and You, Suya and Wang, Zhangyang and Kadambi, Achuta},
  journal = {arXiv preprint arXiv:2312.03203},
  year = {2023}
}

Our default, provided install method is based on Conda package and environment management:

conda env create --file environment.yml

conda activate feature_3dgs

We follow the same dataset logistics as 3D Gaussian Splatting. If you want to work with your own scene, put the images you want to use in a directory <location>/input.

<location>
|---input
    |---<image 0>
    |---<image 1>
    |---...
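As a minimal sketch of the staging step above, the following Python helper copies images into <location>/input. The function name stage_input_images and the set of accepted file extensions are assumptions made for illustration; they are not part of this repository.

```python
import shutil
from pathlib import Path

# Assumption: the image formats to pick up; adjust to your data.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def stage_input_images(image_dir, location):
    """Copy image files from image_dir into <location>/input.

    Returns the list of copied destination paths, sorted by file name.
    """
    src = Path(image_dir)
    dst = Path(location) / "input"
    dst.mkdir(parents=True, exist_ok=True)

    copied = []
    for path in sorted(src.iterdir()):
        # Skip sub-directories and files that do not look like images.
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES:
            copied.append(Path(shutil.copy2(path, dst / path.name)))
    return copied
```

Calling stage_input_images("my_photos", "data/my_scene") would leave the images in data/my_scene/input, which is the directory convert.py reads from.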

For rasterization, the camera models must be either a SIMPLE_PINHOLE or PINHOLE camera. We provide a converter script convert.py, to extract undistorted images and SfM information from input images. Optionally, you can use ImageMagick to resize the undistorted images. This rescaling is similar to MipNeRF360, i.e., it creates images with 1/2, 1/4 and 1/8 the original resolution in corresponding folders. To use them, please first install a recent version of COLMAP (ideally CUDA-powered) and ImageMagick.

If you have COLMAP and ImageMagick on your system path, you can simply run

python convert.py -s <location> [--resize] #If not resizing, ImageMagick is not needed

Our COLMAP loaders expect the following dataset structure in the source path location:

<location>
|---images
|   |---<image 0>
|   |---<image 1>
|   |---...
|---sparse
    |---0
        |---cameras.bin
        |---images.bin
        |---points3D.bin

Alternatively, you can use the optional parameters --colmap_executable and --magick_executable to point to the respective paths. Please note that on Windows, the executable should point to the COLMAP .bat file that takes care of setting the execution environment. Once done, <location> will contain the expected COLMAP data set structure with undistorted, resized input images, in addition to your original images and some temporary (distorted) data in the directory distorted.
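Before training, it can help to confirm that <location> matches the expected COLMAP layout. The sketch below is a hypothetical helper (missing_colmap_entries is not part of this repository) and it only checks for the entries named in the structure above.

```python
from pathlib import Path

# File names taken from the expected dataset structure.
EXPECTED_SPARSE_FILES = ("cameras.bin", "images.bin", "points3D.bin")


def missing_colmap_entries(location):
    """Return the expected entries that are missing under location.

    An empty list means the directory has the images folder and the
    three binary files under sparse/0.
    """
    root = Path(location)
    missing = []

    if not (root / "images").is_dir():
        missing.append("images")

    for name in EXPECTED_SPARSE_FILES:
        rel = Path("sparse") / "0" / name
        if not (root / rel).is_file():
            missing.append(rel.as_posix())

    return missing
```

An empty return value suggests the directory is ready to be passed as the source path; a non-empty one lists what convert.py (or your own COLMAP run) still needs to produce.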

If you have your own COLMAP dataset without undistortion (e.g., using OPENCV camera), you can try to just run the last part of the script: Put the images in input and the COLMAP info in a subdirectory distorted:

<location>
|---input
|   |---<image 0>
|   |---<image 1>
|   |---...
|---distorted
    |---database.db
    |---sparse
        |---0
            |---...

Then run

python convert.py -s <location> --skip_matching [--resize] #If not resizing, ImageMagick is not needed

Command Line Arguments for convert.py

Flag to avoid using GPU in COLMAP.

Flag to indicate that COLMAP info is available for images.

Location of the inputs.

Which camera model to use for the early matching steps, OPENCV by default.

Flag for creating resized versions of input images.

Path to the COLMAP executable (.bat on Windows).

Path to the ImageMagick executable.

Download the datasets to the data directory.

mkdir data

Download Mip-NeRF 360's dataset:

wget http://storage.googleapis.com/gresearch/refraw360/360_v2.zip

Download 3DGS's dataset:

wget https://repo-sam.inria.fr/fungraph/3d-gaussian-splatting/datasets/input/tandt_db.zip

Note: During this preprocessing step, SAM usually takes less time than LSeg. For the counter dataset from MipNeRF 360, SAM takes around 6 minutes and LSeg takes around 30 minutes using a single RTX A6000.

Setup LSeg

cd encoders/lseg_encoder

pip install -r requirements.txt

pip install git+https://github.com/zhanghang1989/PyTorch-Encoding/

Download the LSeg model file demo_e200.ckpt from Google Drive.

gdown 1ayk6NXURI_vIPlym16f_RG3ffxBWHxvb

python -u encode_images.py --backbone clip_vitl16_384 --weights demo_e200.ckpt --widehead --no-scaleinv --outdir ../../data/DATASET_NAME/rgb_feature_langseg --test-rgb-dir ../../data/DATASET_NAME/images --workers 0

This may produce large feature map files in --outdir (100-200MB per file).

Run train.py. If reconstruction fails, change --scale 4.0 to smaller or larger values, e.g., --scale 1.0 or --scale 16.0.

The code requires python>=3.8, as well as pytorch>=1.7 and torchvision>=0.8. Please follow the instructions here to install both PyTorch and TorchVision dependencies. Installing both PyTorch and TorchVision with CUDA support is strongly recommended.

SAM setup:

cd encoders/sam_encoder

pip install -e .

Pretrained model download:

Click the links below to download the checkpoint for the corresponding model type.

- default or vit_h: ViT-H SAM model.
- vit_l: ViT-L SAM model.
- vit_b: ViT-B SAM model.

Place it in the folder encoders/sam_encoder/checkpoints.

The following optional dependencies are necessary for mask post-processing and for saving masks in COCO format.

pip install opencv-python pycocotools matplotlib onnxruntime onnx

Run the following to export the image embeddings of an input image or directory of images.

python export_image_embeddings.py --checkpoint checkpoints/sam_vit_h_4b8939.pth --model-type vit_h --input ../../data/DATASET_NAME/images --output ../../data/OUTPUT_NAME/sam_embeddings

We are glad to introduce a brand new Multi-functional Interactive Viewer for the visualization of RGB, Depth, Edge, Normal, Curvature, and especially semantic features. The Pre-built Viewer for Windows can be found here. If you use Ubuntu or want to check the viewer usage, please refer to GS Monitor.


First, run the viewer:

<path to downloaded/compiled viewer>/bin/SIBR_remoteGaussian_app_rwdi

and then:

- If you want to monitor the training process, run train.py; see the Train section for more details.
- If you want to view the trained model, run view.py; see the View the Trained Model section for more details.

python train.py -s data/DATASET_NAME -m output/OUTPUT_NAME -f lseg -r 0 --speedup --iterations 7000

Command Line Arguments for train.py

Path to the source directory containing a COLMAP or Synthetic NeRF data set.

Path where the trained model should be stored (output/<random> by default).

Switch different foundation model encoders, lseg for LSeg and sam for SAM

Alternative subdirectory for COLMAP images (images by default).

Add this flag to use a MipNeRF360-style training/test split for evaluation.

Specifies resolution of the loaded images before training. If provided 1, 2, 4 or 8, uses original, 1/2, 1/4 or 1/8 resolution, respectively. If provided 0, uses the GT feature map's resolution. For all other values, rescales the width to the given number while maintaining image aspect. If provided -2, uses the customized resolution (utils/camera_utils.py L31). If not set and input image width exceeds 1.6K pixels, inputs are automatically rescaled to this target.

Optional speed-up module for reduced feature dimension initialization.

Specifies where to put the source image data, cuda by default. Using cpu is recommended when training on a large/high-resolution dataset; it reduces VRAM consumption but slightly slows down training. Thanks to HrsPythonix.

Add this flag to use white background instead of black (default), e.g., for evaluation of NeRF Synthetic dataset.

Order of spherical harmonics to be used (no larger than 3). 3 by default.

Flag to make pipeline compute forward and backward of SHs with PyTorch instead of ours.

Flag to make pipeline compute forward and backward of the 3D covariance with PyTorch instead of ours.

Enables debug mode if you experience errors. If the rasterizer fails, a dump file is created that you may forward to us in an issue so we can take a look.

Debugging is slow. You may specify an iteration (starting from 0) after which the above debugging becomes active.

Number of total iterations to train for, 30_000 by default.

IP to start GUI server on, 127.0.0.1 by default.

Port to use for GUI server, 6009 by default.

Space-separated iterations at which the training script computes L1 and PSNR over test set, 7000 30000 by default.

Space-separated iterations at which the training script saves the Gaussian model, 7000 30000 <iterations> by default.

Space-separated iterations at which to store a checkpoint for continuing later, saved in the model directory.

Path to a saved checkpoint to continue training from.

Flag to omit any text written to standard out pipe.

Spherical harmonics features learning rate, 0.0025 by default.

Opacity learning rate, 0.05 by default.

Scaling learning rate, 0.005 by default.

Rotation learning rate, 0.001 by default.

Number of steps (from 0) where position learning rate goes from initial to final. 30_000 by default.

Initial 3D position learning rate, 0.00016 by default.

Final 3D position learning rate, 0.0000016 by default.

Position learning rate multiplier (cf. Plenoxels), 0.01 by default.

Iteration where densification starts, 500 by default.

Iteration where densification stops, 15_000 by default.

Limit that decides if points should be densified based on 2D position gradient, 0.0002 by default.

How frequently to densify, 100 (every 100 iterations) by default.

How frequently to reset opacity, 3_000 by default.

Influence of SSIM on total loss from 0 to 1, 0.2 by default.

Percentage of scene extent (0--1) a point must exceed to be forcibly densified, 0.01 by default.
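The resolution argument (passed as -r in the training command above) has several modes, so a small sketch may make the rules easier to follow. The function below is an illustration written from the description in this list, not the repository's loader: the name target_size, the use of None for "not set", and the rounding are assumptions, and the 0 and -2 modes are deliberately left out because they depend on the feature maps and on utils/camera_utils.py.

```python
def target_size(width, height, resolution=None, auto_limit=1600):
    """Return the (width, height) an image would be loaded at.

    resolution follows the rules described in the argument list:
    1, 2, 4 or 8 divide the original size, any other positive value
    is treated as the target width, and None (not set) caps the
    width at auto_limit pixels.
    """
    if resolution in (0, -2):
        # These modes depend on the feature map or on repository code.
        raise ValueError("resolution 0 and -2 are not modeled in this sketch")

    if resolution is None:
        # Not set: only rescale images wider than the automatic limit.
        scale = width / auto_limit if width > auto_limit else 1.0
    elif resolution in (1, 2, 4, 8):
        scale = float(resolution)
    elif resolution > 0:
        # Any other positive value is the desired width in pixels.
        scale = width / resolution
    else:
        raise ValueError(f"unsupported resolution value: {resolution}")

    # Assumption: round to the nearest pixel, keeping the aspect ratio.
    return round(width / scale), round(height / scale)
```

For example, target_size(1920, 1080, 2) halves both dimensions, while target_size(1920, 1080, 640) rescales the width to 640 pixels and keeps the aspect ratio.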

You can customize NUM_SEMANTIC_CHANNELS in submodules/diff-gaussian-rasterization/cuda_rasterizer/config.h for any feature dimension that you want:

- Customize NUM_SEMANTIC_CHANNELS in config.h.

If you would like to use the optional CNN speed-up module, do the following accordingly:

- Customize NUMBER in semantic_feature_size/NUMBER in scene/gaussian_model.py in line 142.
- Customize NUMBER in feature_out_dim/NUMBER in train.py in line 51.
- Customize NUMBER in feature_out_dim/NUMBER in render.py in lines 116 and 246.

where feature_out_dim / NUMBER = NUM_SEMANTIC_CHANNELS. The feature_out_dim matches the ground truth foundation model dimensions, 512 for LSeg and 256 for SAM. The default NUMBER = 2. For your reference, here are 4 configurations of running train.py:

For language-guided editing:

-f lseg with NUM_SEMANTIC_CHANNELS 512*.

For segmentation tasks:

-f lseg --speedup with NUM_SEMANTIC_CHANNELS 256, NUMBER = 2*.

-f sam with NUM_SEMANTIC_CHANNELS 256.

-f sam --speedup with NUM_SEMANTIC_CHANNELS 128, NUMBER = 2*.

*: setup used in our experiments
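The relation feature_out_dim / NUMBER = NUM_SEMANTIC_CHANNELS can be checked with a few lines of Python before editing config.h. This is only a sketch of the arithmetic; the helper name num_semantic_channels and the dictionary are hypothetical and do not exist in the repository.

```python
# Ground-truth feature dimensions stated above: 512 for LSeg, 256 for SAM.
FEATURE_OUT_DIM = {"lseg": 512, "sam": 256}


def num_semantic_channels(foundation_model, speedup=False, number=2):
    """Return the NUM_SEMANTIC_CHANNELS value that matches a configuration.

    Without the speed-up module the rasterizer carries the full feature
    dimension; with it, the dimension is divided by NUMBER.
    """
    feature_out_dim = FEATURE_OUT_DIM[foundation_model]
    if not speedup:
        return feature_out_dim
    if number <= 0 or feature_out_dim % number != 0:
        raise ValueError("NUMBER must be a positive divisor of feature_out_dim")
    return feature_out_dim // number


# The four reference configurations listed above.
for model, speedup in [("lseg", False), ("lseg", True), ("sam", False), ("sam", True)]:
    print(model, "--speedup" if speedup else "", num_semantic_channels(model, speedup))
```

The value printed for your configuration is the one to set in config.h before recompiling the rasterizer.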

Make sure to recompile every time after modifying any CUDA code:

cd submodules/diff-gaussian-rasterization

pip install .

After training, you can view your trained model directly while keeping the viewer running:

python view.py -s <path to COLMAP or NeRF Synthetic dataset> -m <path to trained model> -f lseg

Important Command Line Arguments for view.py

Path to the source directory containing a COLMAP or Synthetic NeRF data set.

Path where the trained model should be stored (output/<random> by default).

Specifies which iteration to load.

sam or lseg

- Render from training and test views:

python render.py -s data/DATASET_NAME -m output/OUTPUT_NAME --iteration 3000

Command Line Arguments for render.py

Path to the trained model directory you want to create renderings for.

Flag to skip rendering the training set.

Flag to skip rendering the test set.

Flag to omit any text written to standard out pipe.

The below parameters will be read automatically from the model path, based on what was used for training. However, you may override them by providing them explicitly on the command line.

Path to the source directory containing a COLMAP or Synthetic NeRF data set.

Alternative subdirectory for COLMAP images (images by default).

Add this flag to use a MipNeRF360-style training/test split for evaluation.

Changes the resolution of the loaded images before training. If provided 1, 2, 4 or 8, uses original, 1/2, 1/4 or 1/8 resolution, respectively. For all other values, rescales the width to the given number while maintaining image aspect. 1 by default.

Add this flag to use white background instead of black (default), e.g., for evaluation of NeRF Synthetic dataset.

Flag to make pipeline render with computed SHs from PyTorch instead of ours.

Flag to make pipeline render with computed 3D covariance from PyTorch instead of ours.

- Render from novel views (add --novel_view):

python render.py -s data/DATASET_NAME -m output/OUTPUT_NAME -f lseg --iteration 3000 --novel_view

(Add a number after --num_views to change the number of views, e.g. --num_views 100; the default is 200.)

- Render from novel views using multiple interpolations (add --novel_view and --multi_interpolate):

python render.py -s data/DATASET_NAME -m output/OUTPUT_NAME -f lseg --iteration 3000 --novel_view --multi_interpolate

python render.py -s data/DATASET_NAME -m output/OUTPUT_NAME -f lseg --iteration 3000 --edit_config configs/XXX.yaml

Run the following to create videos (add --fps to change the FPS, e.g. --fps 20; the default is 10):

python videos.py --data output/OUTPUT_NAME --fps 10 -f lseg --iteration 10000
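As a rough guide for choosing --fps, the length of a video follows from the number of frames and the frame rate. The sketch below assumes that each rendered view becomes exactly one video frame, which is an assumption about videos.py and not something this README states; the function name is hypothetical.

```python
def video_duration_seconds(num_views=200, fps=10):
    """Estimate video length, assuming one frame per rendered view.

    The defaults mirror the documented defaults of --num_views (200)
    and --fps (10).
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    return num_views / fps


print(video_duration_seconds())         # defaults: 200 views at 10 FPS
print(video_duration_seconds(100, 20))  # fewer views at a higher frame rate
```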

- Run the following to segment with 150 labels (default is ADE20K):

python -u segmentation.py --data ../../output/DATASET_NAME/ --iteration 6000

- Run the following to segment with a self-defined label set (e.g. add --label_src car,building,tree):

python -u segmentation.py --data ../../output/DATASET_NAME/ --iteration 6000 --label_src car,building,tree

Calculate segmentation metric:

cd encoders/lseg_encoder

python -u segmentation_metric.py --backbone clip_vitl16_384 --weights demo_e200.ckpt --widehead --no-scaleinv --student-feature-dir ../../output/OUTPUT_NAME/test/ours_30000/saved_feature/ --teacher-feature-dir ../../data/DATASET_NAME/rgb_feature_langseg/ --test-rgb-dir ../../output/OUTPUT_NAME/test/ours_30000/renders/ --workers 0 --eval-mode test

Run with the following (add --image to encode features from images):

- Run with given input point coordinate (e.g. add --point 500 800):

python segment_prompt.py --checkpoint checkpoints/sam_vit_h_4b8939.pth --model-type vit_h --data ../../output/OUTPUT_NAME --iteration 7000 --point 500 800

- Run with given input box (e.g. add --box 100 100 1500 1200):

python segment_prompt.py --checkpoint checkpoints/sam_vit_h_4b8939.pth --model-type vit_h --data ../../output/OUTPUT_NAME --iteration 7000 --box 100 100 1500 1200

- Run with given input point (negative) and box (e.g. add --point 500 800 and --box 100 100 1500 1200):

python segment_prompt.py --checkpoint checkpoints/sam_vit_h_4b8939.pth --model-type vit_h --data ../../output/OUTPUT_NAME --iteration 7000 --box 100 100 1500 1200 --point 500 800

(Add --onnx_path to change the ONNX path.)
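To make the prompt formats above concrete, here is a minimal sketch of how --point and --box values could be parsed and sanity-checked. It is not the parser used by segment_prompt.py: the function names are hypothetical, and the box order x_min y_min x_max y_max is an assumption based on the XYXY box convention of SAM's predictor.

```python
import argparse


def parse_prompts(argv):
    """Parse --point X Y and --box X0 Y0 X1 Y1 into lists of ints."""
    parser = argparse.ArgumentParser(description="Prompt parsing sketch")
    parser.add_argument("--point", type=int, nargs=2, metavar=("X", "Y"))
    parser.add_argument("--box", type=int, nargs=4,
                        metavar=("X0", "Y0", "X1", "Y1"))
    args = parser.parse_args(argv)
    return args.point, args.box


def point_in_box(point, box):
    """Return True if the point lies inside the box (edges included).

    Assumes the box is given as x_min, y_min, x_max, y_max.
    """
    x, y = point
    x0, y0, x1, y1 = box
    return x0 <= x <= x1 and y0 <= y <= y1


point, box = parse_prompts(
    ["--box", "100", "100", "1500", "1200", "--point", "500", "800"]
)
print(point, box, point_in_box(point, box))
```

A check like point_in_box can be a quick way to confirm that a point prompt falls inside the region selected by the box before running the segmentation.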

Run with the following (add --image to encode features from images):

python segment.py --checkpoint checkpoints/sam_vit_h_4b8939.pth --model-type vit_h --data ../../output/OUTPUT_NAME --iteration 7000

Run with the following (remove --feature_path to encode features directly from images):

python segment_time.py --checkpoint checkpoints/sam_vit_h_4b8939.pth --model-type vit_h --image_path ../../output/OUTPUT_NAME/novel_views/ours_7000/renders/ --feature_path ../../output/OUTPUT_NAME/novel_views/ours_7000/saved_feature --output ../../output/OUTPUT_NAME

Our repo is developed based on 3D Gaussian Splatting, DFFs and Segment Anything. Many thanks to the authors for open-sourcing their codebases.