Effective Data Augmentation With Diffusion Models

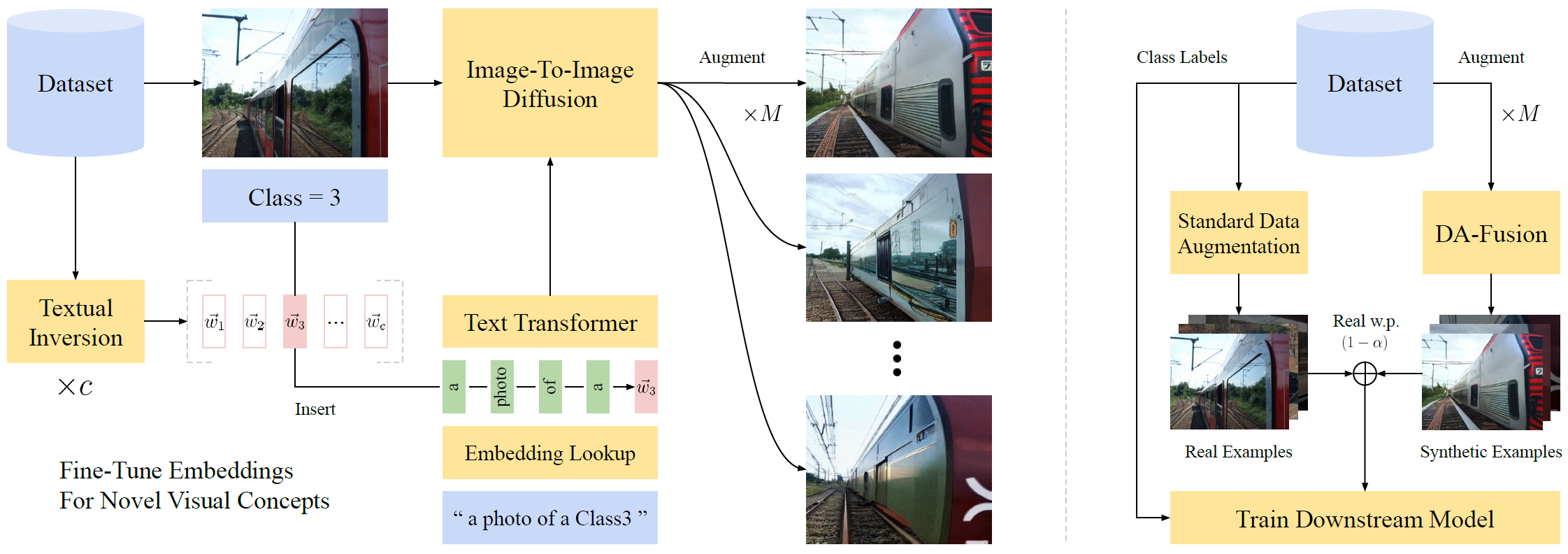

Existing data augmentations like rotations and re-colorizations provide diversity but preserve semantics. We explore how prompt-based generative models complement existing data augmentations by controlling image semantics via prompts. Our generative data augmentations build on Stable Diffusion and improve visual few-shot learning.

Installation

To install the package, first create a conda environment.

```bash
conda create -n da-fusion python=3.7 pytorch==1.12.1 torchvision==0.13.1 cudatoolkit=11.6 -c pytorch
conda activate da-fusion
pip install "diffusers[torch]" transformers pycocotools pandas matplotlib seaborn scipy
```

Then download and install the source code.

```bash
git clone git@github.com:brandontrabucco/da-fusion.git
pip install -e da-fusion
```

Datasets

We benchmark DA-Fusion on few-shot image classification problems, including a Leafy Spurge weed recognition task and classification tasks derived from COCO and PASCAL VOC. For the latter two, each image is labeled with the class of the largest object it contains.
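The largest-object labeling rule can be sketched for COCO-style instance annotations, where each annotation records an `image_id`, a `category_id`, and an `area`. This is a minimal sketch assuming `area` is the size measure; the helper `label_by_largest_object` is hypothetical, and the repository's dataset classes may measure object size differently (for PASCAL VOC, for example, from segmentation masks).

```python
from typing import Dict, Iterable, Mapping


def label_by_largest_object(annotations: Iterable[Mapping]) -> Dict[int, int]:
    """Map each image_id to the category_id of its largest annotated object.

    Hypothetical helper: assumes COCO-style annotation dicts with
    'image_id', 'category_id', and 'area' keys. Ties keep the first
    annotation seen for that image.
    """
    largest: Dict[int, Mapping] = {}
    for ann in annotations:
        current = largest.get(ann["image_id"])
        # Replace the stored annotation only if this object is strictly larger.
        if current is None or ann["area"] > current["area"]:
            largest[ann["image_id"]] = ann
    return {image_id: ann["category_id"] for image_id, ann in largest.items()}


if __name__ == "__main__":
    example = [
        {"image_id": 1, "category_id": 18, "area": 1200.0},  # smaller object (dog)
        {"image_id": 1, "category_id": 1, "area": 5400.0},   # larger object (person)
        {"image_id": 2, "category_id": 3, "area": 800.0},
    ]
    print(label_by_largest_object(example))  # {1: 1, 2: 3}
```

Keeping only the largest object turns a multi-label detection dataset into a single-label classification task, which is what the few-shot classifier expects.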

Custom datasets can be evaluated by subclassing the few-shot dataset class defined in semantic_aug/few_shot_dataset.py.
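As a rough sketch of what such a subclass involves, the code below defines a hypothetical stand-in base class and a custom dataset that keeps `examples_per_class` examples per class for a given seed, mirroring the `--examples-per-class` argument of train_classifier.py. The class names and the abstract methods (`get_image_by_idx`, `get_label_by_idx`) are assumptions for illustration; check semantic_aug/few_shot_dataset.py for the interface your subclass must actually implement.

```python
import random
from abc import ABC, abstractmethod
from collections import defaultdict
from typing import Any, Dict, List, Sequence, Tuple


class FewShotDatasetSketch(ABC):
    """Hypothetical stand-in for the base class in semantic_aug/few_shot_dataset.py."""

    @abstractmethod
    def __len__(self) -> int: ...

    @abstractmethod
    def get_image_by_idx(self, idx: int) -> Any: ...

    @abstractmethod
    def get_label_by_idx(self, idx: int) -> int: ...

    def __getitem__(self, idx: int) -> Tuple[Any, int]:
        return self.get_image_by_idx(idx), self.get_label_by_idx(idx)


class MyCustomDataset(FewShotDatasetSketch):
    """Keeps at most `examples_per_class` (image, label) pairs per class."""

    def __init__(self, samples: Sequence[Tuple[Any, int]],
                 examples_per_class: int, seed: int = 0):
        indices_by_label: Dict[int, List[int]] = defaultdict(list)
        for i, (_, label) in enumerate(samples):
            indices_by_label[label].append(i)

        # A seeded RNG makes each trial pick a reproducible few-shot subset.
        rng = random.Random(seed)
        kept: List[int] = []
        for label in sorted(indices_by_label):
            pool = indices_by_label[label]
            kept.extend(rng.sample(pool, min(examples_per_class, len(pool))))
        self.samples = [samples[i] for i in kept]

    def __len__(self) -> int:
        return len(self.samples)

    def get_image_by_idx(self, idx: int) -> Any:
        return self.samples[idx][0]

    def get_label_by_idx(self, idx: int) -> int:
        return self.samples[idx][1]


if __name__ == "__main__":
    data = [(f"img_{i}.jpg", i % 3) for i in range(30)]  # 3 classes, 10 images each
    ds = MyCustomDataset(data, examples_per_class=4, seed=0)
    print(len(ds))  # 12
```

Here images are plain file names to keep the sketch dependency-free; a real subclass would load and return image objects.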

Setting Up PASCAL VOC

Data for the PASCAL VOC task is adapted from the 2012 PASCAL VOC Challenge. Once this dataset has been downloaded and extracted, point the PASCAL dataset class in semantic_aug/datasets/pascal.py to it by setting the PASCAL_DIR config variable in that file.

Ensure that PASCAL_DIR points to a folder containing ImageSets, JPEGImages, SegmentationClass, and SegmentationObject subfolders.
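A quick way to catch a misconfigured path before training is to check for these subfolders. The sketch below uses only the standard library; `missing_pascal_subfolders` is a hypothetical helper, not part of the repository. For the standard VOC2012 archive, the folder holding these subfolders is typically `VOCdevkit/VOC2012`.

```python
from pathlib import Path
from typing import List, Sequence, Union

# Subfolders this README lists as required inside PASCAL_DIR.
PASCAL_SUBFOLDERS = ("ImageSets", "JPEGImages", "SegmentationClass", "SegmentationObject")


def missing_pascal_subfolders(pascal_dir: Union[str, Path],
                              required: Sequence[str] = PASCAL_SUBFOLDERS) -> List[str]:
    """Return the required subfolder names that are not directories under pascal_dir."""
    root = Path(pascal_dir)
    return [name for name in required if not (root / name).is_dir()]
```

For example, calling `missing_pascal_subfolders` on your PASCAL_DIR should return an empty list when the layout is correct; any names it returns are the subfolders still missing.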

Setting Up COCO

To set up COCO, first download the 2017 Training Images, the 2017 Validation Images, and the 2017 Train/Val Annotations. Unzip these files into the following directory structure:

```
coco2017/
    train2017/
    val2017/
    annotations/
```

Then update the COCO_DIR config variable to point to the location of coco2017 on your system.

Setting Up The Spurge Dataset

We are planning to release this dataset in the next few months. Check back for updates!

Fine-Tuning Tokens

We perform textual inversion (https://arxiv.org/abs/2208.01618) to adapt Stable Diffusion to the classes present in our few-shot datasets. The implementation in fine_tune.py is adapted from the Diffusers example.
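The core idea of textual inversion can be illustrated with a toy example: a new token embedding is appended to a frozen embedding table, initialized from an existing word, and only that new vector is updated by gradient descent. In the real method the objective is the Stable Diffusion denoising loss on the few-shot images; here a frozen linear map and a squared-error target are hypothetical stand-ins chosen so the gradient can be written by hand. All names below are illustrative and do not come from fine_tune.py.

```python
from typing import List

Vector = List[float]
Matrix = List[Vector]


def matvec(m: Matrix, v: Vector) -> Vector:
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def transpose(m: Matrix) -> Matrix:
    return [list(col) for col in zip(*m)]


def toy_loss(frozen_map: Matrix, emb: Vector, target: Vector) -> float:
    """0.5 * ||frozen_map @ emb - target||^2, a stand-in for the diffusion loss."""
    residual = [p - t for p, t in zip(matvec(frozen_map, emb), target)]
    return 0.5 * sum(r * r for r in residual)


def invert_new_token(embeddings: Matrix, initializer_idx: int, frozen_map: Matrix,
                     target: Vector, lr: float = 0.1, steps: int = 200) -> Matrix:
    """Append one learned embedding; existing rows and frozen_map are never updated."""
    new_emb = list(embeddings[initializer_idx])  # start from an existing word's embedding
    map_t = transpose(frozen_map)
    for _ in range(steps):
        residual = [p - t for p, t in zip(matvec(frozen_map, new_emb), target)]
        grad = matvec(map_t, residual)  # d(loss)/d(new_emb) = frozen_map^T @ residual
        new_emb = [e - lr * g for e, g in zip(new_emb, grad)]
    return [list(row) for row in embeddings] + [new_emb]


if __name__ == "__main__":
    vocab = [[1.0, 0.0], [0.0, 1.0]]
    identity = [[1.0, 0.0], [0.0, 1.0]]
    table = invert_new_token(vocab, 0, identity, target=[0.5, 2.0])
    print([round(x, 4) for x in table[-1]])  # [0.5, 2.0]
```

Freezing everything except one embedding is what lets textual inversion learn a class-specific token (the ClassX token used later) from only a handful of images without changing the generator itself.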

To distribute experiments on a Slurm cluster, we wrap this script in a set of sbatch scripts located at scripts/fine_tuning. These scripts run multiple instances of textual inversion in parallel, limited by the number of available nodes on your Slurm cluster.

If sbatch is not available on your system, you can run these scripts with bash and set SLURM_ARRAY_TASK_ID and SLURM_ARRAY_TASK_COUNT manually for each parallel job. Slurm normally sets these variables automatically to the index of the job and the total number of jobs, respectively; for a single job, set them to 0 and 1.
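Inside each job, these two variables are typically used to split a list of runs into disjoint shards. The sketch below shows one common scheme, a strided split where task `i` of `n` takes every `n`-th run starting at `i`; this is an assumption for illustration, and the scripts in scripts/fine_tuning may assign runs differently. The run grid (datasets, seeds, examples per class) and the helper names are hypothetical.

```python
import itertools
import os
from typing import List, Mapping, Sequence, Tuple

Run = Tuple[str, int, int]  # (dataset, seed, examples_per_class)


def all_runs(datasets: Sequence[str], seeds: Sequence[int],
             examples_per_class: Sequence[int]) -> List[Run]:
    """Enumerate every combination of experiment settings in a fixed order."""
    return list(itertools.product(datasets, seeds, examples_per_class))


def runs_for_this_task(runs: Sequence[Run],
                       env: Mapping[str, str] = os.environ) -> List[Run]:
    """Select this job's shard using the Slurm array variables.

    Defaults of 0 and 1 match the manual single-job setting,
    in which case this job receives every run.
    """
    task_id = int(env.get("SLURM_ARRAY_TASK_ID", "0"))
    task_count = int(env.get("SLURM_ARRAY_TASK_COUNT", "1"))
    if task_count < 1 or not 0 <= task_id < task_count:
        raise ValueError(f"invalid task {task_id} of {task_count}")
    return list(runs[task_id::task_count])
```

Without Slurm, you would then launch each shard yourself, for example `SLURM_ARRAY_TASK_ID=0 SLURM_ARRAY_TASK_COUNT=1 bash scripts/fine_tuning/<script>.sh`, where `<script>.sh` stands for whichever script in that folder you want to run.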

Few-Shot Classification

Code for training image classification models using augmented images from DA-Fusion is located in train_classifier.py. This script accepts a number of arguments that control how the classifier is trained:

```bash
python train_classifier.py --logdir pascal-baselines/textual-inversion-0.5 \
    --synthetic-dir "aug/textual-inversion-0.5/{dataset}-{seed}-{examples_per_class}" \
    --dataset pascal --prompt "a photo of a {name}" \
    --aug textual-inversion --guidance-scale 7.5 \
    --strength 0.5 --mask 0 --inverted 0 \
    --num-synthetic 10 --synthetic-probability 0.5 \
    --num-trials 1 --examples-per-class 4
```

This example trains a classifier on the PASCAL VOC task with 4 images per class, using the prompt "a photo of a ClassX", where the special token ClassX is fine-tuned from scratch with textual inversion. Slurm scripts that reproduce the paper are located in scripts/textual_inversion. Results are logged to .csv files under the directory given by --logdir.
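Two of the arguments above can be read concretely. The `--synthetic-dir` value is a template whose `{dataset}`, `{seed}`, and `{examples_per_class}` fields are filled in per trial, and `--synthetic-probability` together with `--num-synthetic` controls how often a real training image is swapped for one of its generated variants. The sketch below is a hedged reading of these flags, not code from train_classifier.py: it assumes Python `str.format`-style substitution and a per-sample swap with probability `synthetic_probability`, choosing uniformly among the stored variants. The helper names are hypothetical.

```python
import random
from typing import Sequence, TypeVar

T = TypeVar("T")

SYNTHETIC_DIR = "aug/textual-inversion-0.5/{dataset}-{seed}-{examples_per_class}"


def synthetic_dir_for(dataset: str, seed: int, examples_per_class: int) -> str:
    """Fill in the --synthetic-dir template for one trial (assumes str.format)."""
    return SYNTHETIC_DIR.format(dataset=dataset, seed=seed,
                                examples_per_class=examples_per_class)


def sample_training_image(real: T, synthetic_variants: Sequence[T],
                          synthetic_probability: float, rng: random.Random) -> T:
    """Return a synthetic variant with probability synthetic_probability, else the real image.

    synthetic_variants holds the (up to --num-synthetic) generated copies of `real`.
    """
    if synthetic_variants and rng.random() < synthetic_probability:
        return rng.choice(synthetic_variants)
    return real


if __name__ == "__main__":
    print(synthetic_dir_for("pascal", 0, 4))  # aug/textual-inversion-0.5/pascal-0-4
    rng = random.Random(0)
    variants = [f"synthetic_{k}.png" for k in range(10)]  # e.g. --num-synthetic 10
    draws = [sample_training_image("real.png", variants, 0.5, rng) for _ in range(1000)]
    print(sum(d != "real.png" for d in draws) / len(draws))  # roughly 0.5
```

Under this reading, a probability of 0.5 means that, on average, half of the images seen in each epoch are generated variants, while every real image still appears about half the time.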

We used a custom plotting script to generate the figures in the main paper.

Citation

If you find our method helpful, consider citing our preprint!

```bibtex
@misc{https://doi.org/10.48550/arxiv.2302.07944,
  doi = {10.48550/ARXIV.2302.07944},
  url = {https://arxiv.org/abs/2302.07944},
  author = {Trabucco, Brandon and Doherty, Kyle and Gurinas, Max and Salakhutdinov, Ruslan},
  keywords = {Computer Vision and Pattern Recognition (cs.CV), Artificial Intelligence (cs.AI), FOS: Computer and information sciences, FOS: Computer and information sciences},
  title = {Effective Data Augmentation With Diffusion Models},
  publisher = {arXiv},
  year = {2023},
  copyright = {arXiv.org perpetual, non-exclusive license}
}
```