This is an official implementation of ILA, a new temporal modeling method for video action recognition.

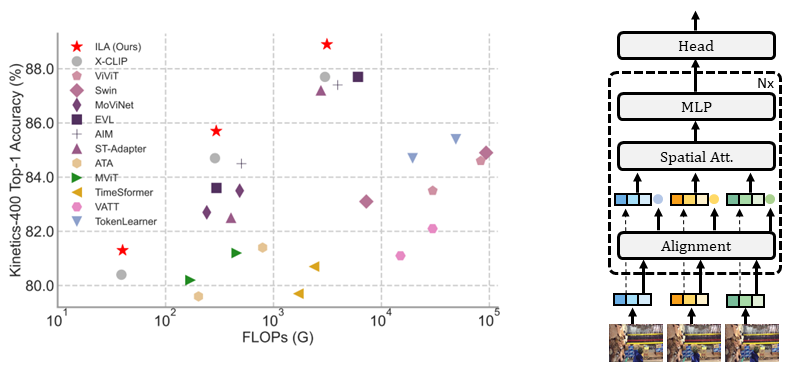

Implicit Temporal Modeling with Learnable Alignment for Video Recognition

Shuyuan Tu, Qi Dai, Zuxuan Wu, Zhi-Qi Cheng, Han Hu, Yu-Gang Jiang

To set up the environment, run the following commands:

pip install torch==1.11.0

pip install torchvision==0.12.0

pip install pathlib

pip install mmcv-full

pip install decord

pip install ftfy

pip install einops

pip install termcolor

pip install timm

pip install regex

Install Apex as follows:

git clone https://github.com/NVIDIA/apex

cd apex

pip install -v --disable-pip-version-check --no-cache-dir --global-option="--cpp_ext" --global-option="--cuda_ext" ./

To download the Kinetics datasets, refer to mmaction2 or CVDF. Something-Something v2 is available from the official website.

Due to limited storage, we decode the videos online using decord.
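As a rough illustration of online decoding, the sketch below picks a few frame indices and asks decord to decode only those frames. The uniform, segment-centre sampling and the helper names `uniform_frame_indices` and `load_clip` are assumptions made for this example; the sampling strategy used in this repository may differ.

```python
from typing import List


def uniform_frame_indices(total_frames: int, num_frames: int) -> List[int]:
    """Pick num_frames indices, one at the centre of each equal-length segment."""
    if total_frames <= 0 or num_frames <= 0:
        raise ValueError("total_frames and num_frames must be positive")
    segment = total_frames / num_frames
    return [min(total_frames - 1, int(segment * (i + 0.5))) for i in range(num_frames)]


def load_clip(video_path: str, num_frames: int = 8):
    """Decode only the sampled frames; returns an array of shape (T, H, W, 3)."""
    # Imported lazily so the index sampler can be used without decord installed.
    from decord import VideoReader, cpu

    reader = VideoReader(video_path, ctx=cpu(0))
    indices = uniform_frame_indices(len(reader), num_frames)
    return reader.get_batch(indices).asnumpy()


print(uniform_frame_indices(80, 8))  # [5, 15, 25, 35, 45, 55, 65, 75]
```

Decoding on the fly this way avoids storing extracted frames on disk, at the cost of extra CPU work per batch.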

We provide the following way to organize the dataset:

- Standard Folder: For the standard folder layout, put all videos in the `videos` folder, and prepare the annotation files as `train.txt` and `val.txt`. Please make sure the folder looks like this:

      $ ls /PATH/TO/videos | head -n 2
      a.mp4
      b.mp4

      $ head -n 2 /PATH/TO/train.txt
      a.mp4 0
      b.mp4 2

      $ head -n 2 /PATH/TO/val.txt
      c.mp4 1
      d.mp4 2
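Before training, it can help to check the annotation files. The sketch below parses lines of the form `<video file> <integer label>`, as in the listing above, and reports videos that are missing from the video folder. The helpers `parse_annotations` and `missing_videos` are hypothetical and not part of this repository.

```python
from pathlib import Path
from typing import List, Tuple


def parse_annotations(text: str) -> List[Tuple[str, int]]:
    """Parse annotation lines of the form '<video file> <integer label>'."""
    samples = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        line = line.strip()
        if not line:
            continue  # skip blank lines
        # Split on the last run of whitespace: the label is the final field.
        parts = line.rsplit(None, 1)
        if len(parts) != 2:
            raise ValueError(f"line {line_no}: expected '<video> <label>', got {line!r}")
        samples.append((parts[0], int(parts[1])))
    return samples


def missing_videos(samples: List[Tuple[str, int]], video_root: str) -> List[str]:
    """Return annotated file names that do not exist under video_root."""
    root = Path(video_root)
    return [name for name, _ in samples if not (root / name).is_file()]


samples = parse_annotations("a.mp4 0\nb.mp4 2\n")
print(samples)  # [('a.mp4', 0), ('b.mp4', 2)]
```

For a real file, pass `Path("/PATH/TO/train.txt").read_text()` to `parse_annotations`, then call `missing_videos(samples, "/PATH/TO/videos")`.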

The training configurations lie in configs. For example, you can run the following command to train ILA-ViT-B/16 with 8 frames on Something-Something v2.

python -m torch.distributed.launch --nproc_per_node=8 main.py -cfg configs/ssv2/16_8.yaml --output /PATH/TO/OUTPUT --accumulation-steps 8

Note:

- We recommend setting the total batch size to 256.

- Please specify the data path in the config file (`configs/*.yaml`). For the standard folder, set it to `/PATH/TO/videos`.

- The pretrained CLIP is specified by using `--pretrained /PATH/TO/PRETRAINED`.
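To relate the recommended total batch size to the launch command, the sketch below works out the per-GPU batch size. It assumes the usual convention that the total batch size equals per-GPU batch size × number of GPUs × accumulation steps. The helper `per_gpu_batch_size` is hypothetical, and the per-GPU value actually used should be checked in the config file.

```python
def per_gpu_batch_size(total_batch: int, num_gpus: int, accumulation_steps: int) -> int:
    """Per-GPU batch size that yields total_batch under the assumed convention."""
    denom = num_gpus * accumulation_steps
    if denom <= 0:
        raise ValueError("num_gpus and accumulation_steps must be positive")
    if total_batch % denom != 0:
        raise ValueError(f"{total_batch} is not divisible by {denom}")
    return total_batch // denom


# 8 GPUs (--nproc_per_node=8) and --accumulation-steps 8, as in the command above.
print(per_gpu_batch_size(256, num_gpus=8, accumulation_steps=8))  # 4
```

With fewer GPUs, the accumulation steps or the per-GPU batch size can be raised so that the product stays at 256.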

For example, you can run the following command to validate the ILA-ViT-B/16 with 8 frames on Something-Something v2.

python -m torch.distributed.launch --nproc_per_node=8 main.py -cfg configs/ssv2/16_8.yaml --output /PATH/TO/OUTPUT --only_test --resume /PATH/TO/CKPT --opts TEST.NUM_CLIP 4 TEST.NUM_CROP 3

Note:

- In our experience and sanity checks, accuracy varies slightly at random when testing on different machines.

- There are two parts in the provided logs. The first part is conventional training followed by validation per epoch with single-view. The second part refers to the multiview (3 crops x 4 clips) inference logs.
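Multi-view inference with 4 clips and 3 crops scores 12 views per video. A common way to combine them is to average the per-class scores over all views and take the arg-max. The sketch below illustrates that idea in plain Python. Averaging is an assumption here, and the aggregation used by this repository may differ. The names `average_views` and `predict` are hypothetical.

```python
from typing import List, Sequence


def average_views(view_scores: Sequence[Sequence[float]]) -> List[float]:
    """Average per-class scores over all views of one video."""
    num_views = len(view_scores)
    if num_views == 0:
        raise ValueError("at least one view is required")
    num_classes = len(view_scores[0])
    return [sum(view[c] for view in view_scores) / num_views for c in range(num_classes)]


def predict(view_scores: Sequence[Sequence[float]]) -> int:
    """Return the class index with the highest averaged score."""
    avg = average_views(view_scores)
    return max(range(len(avg)), key=avg.__getitem__)


NUM_CLIP, NUM_CROP = 4, 3  # matches TEST.NUM_CLIP 4 TEST.NUM_CROP 3
# Toy two-class scores: 10 views favour class 1, 2 views favour class 0.
views = [[0.2, 0.8]] * 10 + [[0.9, 0.1]] * 2
assert len(views) == NUM_CLIP * NUM_CROP
print(predict(views))  # 1
```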

This is the original implementation, released for open-source use. The following tables report the accuracy from the original paper.

- Fully-supervised on Kinetics-400:

| Model | Input | Top-1 Acc.(%) | Top-5 Acc.(%) | ckpt |
| --- | --- | --- | --- | --- |
| ILA-B/32 | 8x224 | 81.3 | 95.0 | GoogleDrive |
| ILA-B/32 | 16x224 | 82.4 | 95.8 | GoogleDrive |
| ILA-B/16 | 8x224 | 84.0 | 96.6 | GoogleDrive |
| ILA-B/16 | 16x224 | 85.7 | 97.2 | GoogleDrive |
| ILA-L/14 | 8x224 | 88.0 | 98.1 | GoogleDrive |
| ILA-L/14 | 16x336 | 88.7 | 97.8 | GoogleDrive |

- Fully-supervised on Something-Something v2:

| Model | Input | Top-1 Acc.(%) | Top-5 Acc.(%) | ckpt |
| --- | --- | --- | --- | --- |
| ILA-B/16 | 8x224 | 65.0 | 89.2 | GoogleDrive |
| ILA-B/16 | 16x224 | 66.8 | 90.3 | GoogleDrive |
| ILA-L/14 | 8x224 | 67.8 | 90.5 | GoogleDrive |
| ILA-L/14 | 16x336 | 70.2 | 91.8 | GoogleDrive |

If this project is useful for you, please consider citing our paper:

    @article{ILA,
      title={Implicit Temporal Modeling with Learnable Alignment for Video Recognition},
      author={Tu, Shuyuan and Dai, Qi and Wu, Zuxuan and Cheng, Zhi-Qi and Hu, Han and Jiang, Yu-Gang},
      journal={arXiv preprint arXiv:2304.10465},
      year={2023}
    }

Parts of the code are borrowed from mmaction2, Swin and X-CLIP. Sincere thanks to the authors for their wonderful work.