This repo contains the code for our paper *Convolutions Die Hard: Open-Vocabulary Panoptic Segmentation with Single Frozen Convolutional CLIP*.

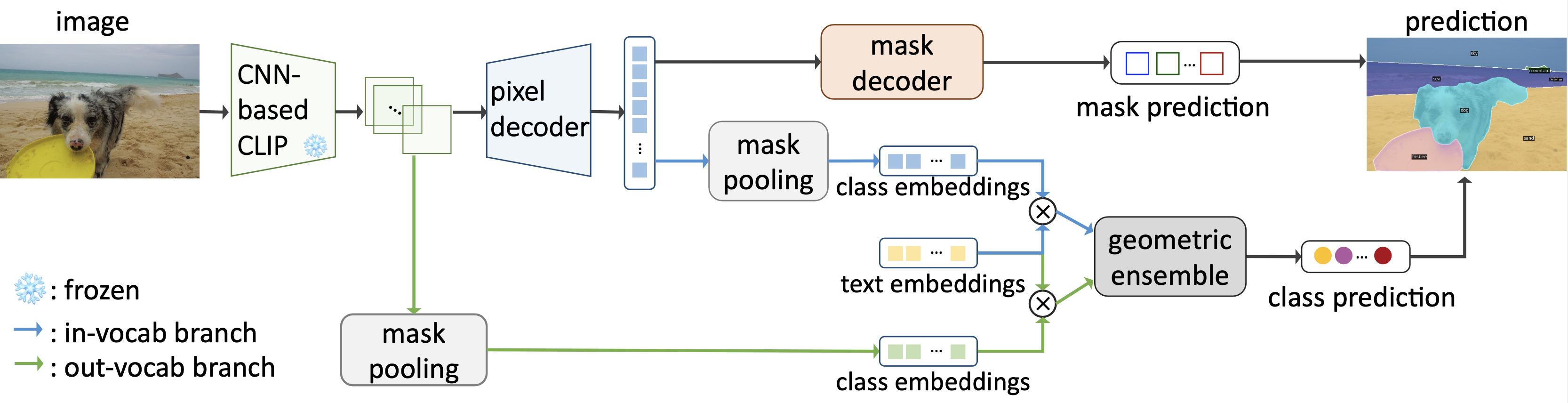

FC-CLIP is a universal model for open-vocabulary image segmentation problems, consisting of a class-agnostic segmenter, an in-vocabulary classifier, and an out-of-vocabulary classifier. With everything built upon a single shared frozen convolutional CLIP model, FC-CLIP not only achieves state-of-the-art performance on various open-vocabulary segmentation benchmarks, but also has much lower training (3.2 days with 8 V100 GPUs) and testing costs than prior work.
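To make the idea of classifying a class-agnostic mask against an open vocabulary more concrete, here is a minimal, dependency-free sketch. It is not the code in this repo: it assumes the classifier average-pools frozen backbone features inside a mask and compares the result with text embeddings by cosine similarity, which is a common recipe for CLIP-based classification. The function names (`mask_pool`, `classify_mask`), the temperature value, and the toy 2-dimensional features are all hypothetical.

```python
import math

def mask_pool(features, mask):
    """Average the per-pixel feature vectors over pixels where mask is 1."""
    selected = [f for f, m in zip(features, mask) if m]
    dim = len(features[0])
    return [sum(f[d] for f in selected) / len(selected) for d in range(dim)]

def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def softmax(scores, temperature=0.1):
    """Turn similarity scores into probabilities (temperature is an assumed value)."""
    exps = [math.exp(s / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def classify_mask(features, mask, text_embeddings):
    """Score one predicted mask against every category's text embedding."""
    pooled = mask_pool(features, mask)
    return softmax([cosine(pooled, t) for t in text_embeddings])

# Toy stand-ins: 4 pixels of frozen backbone features, one predicted mask,
# and text embeddings for two category names (all values are made up).
features = [[1.0, 0.1], [0.9, 0.0], [0.0, 1.0], [0.1, 0.9]]
mask = [1, 1, 0, 0]
text_embeddings = [[1.0, 0.0], [0.0, 1.0]]  # e.g. "cat", "road"

probs = classify_mask(features, mask, text_embeddings)
print(probs)  # the first category should receive the higher probability
```

Because the category list enters only through `text_embeddings`, the vocabulary can be swapped at test time without retraining anything, which is the property that makes this kind of classifier open-vocabulary.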

See installation instructions.

See Preparing Datasets for FC-CLIP.

See Getting Started with FC-CLIP.

We also support FC-CLIP with a HuggingFace 🤗 demo.

| | ADE20K (A-150) PQ | ADE20K (A-150) mAP | ADE20K (A-150) mIoU | Cityscapes PQ | Cityscapes mAP | Cityscapes mIoU | Mapillary Vistas PQ | Mapillary Vistas mIoU | ADE20K-Full (A-847) mIoU | Pascal Context 59 (PC-59) mIoU | Pascal Context 459 (PC-459) mIoU | Pascal VOC 21 (PAS-21) mIoU | Pascal VOC 20 (PAS-20) mIoU | COCO (training dataset) PQ | COCO (training dataset) mAP | COCO (training dataset) mIoU | download |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| FC-CLIP | 26.8 | 16.8 | 34.1 | 44.0 | 26.8 | 56.2 | 18.3 | 27.8 | 14.8 | 58.4 | 18.2 | 81.8 | 95.4 | 54.4 | 44.6 | 63.7 | checkpoint |

If you use FC-CLIP in your research, please use the following BibTeX entry.

@inproceedings{yu2023fcclip,
  title={Convolutions Die Hard: Open-Vocabulary Panoptic Segmentation with Single Frozen Convolutional CLIP},
  author={Qihang Yu and Ju He and Xueqing Deng and Xiaohui Shen and Liang-Chieh Chen},
  journal={arXiv},
  year={2023}
}

Mask2Former (https://github.com/facebookresearch/Mask2Former)

ODISE (https://github.com/NVlabs/ODISE)