
Ye Li<sup>1</sup>, Lingdong Kong<sup>2</sup>, Hanjiang Hu<sup>3</sup>, Xiaohao Xu<sup>1</sup>, Xiaonan Huang<sup>1</sup>

<sup>1</sup>University of Michigan, Ann Arbor

<sup>2</sup>National University of Singapore

<sup>3</sup>Carnegie Mellon University

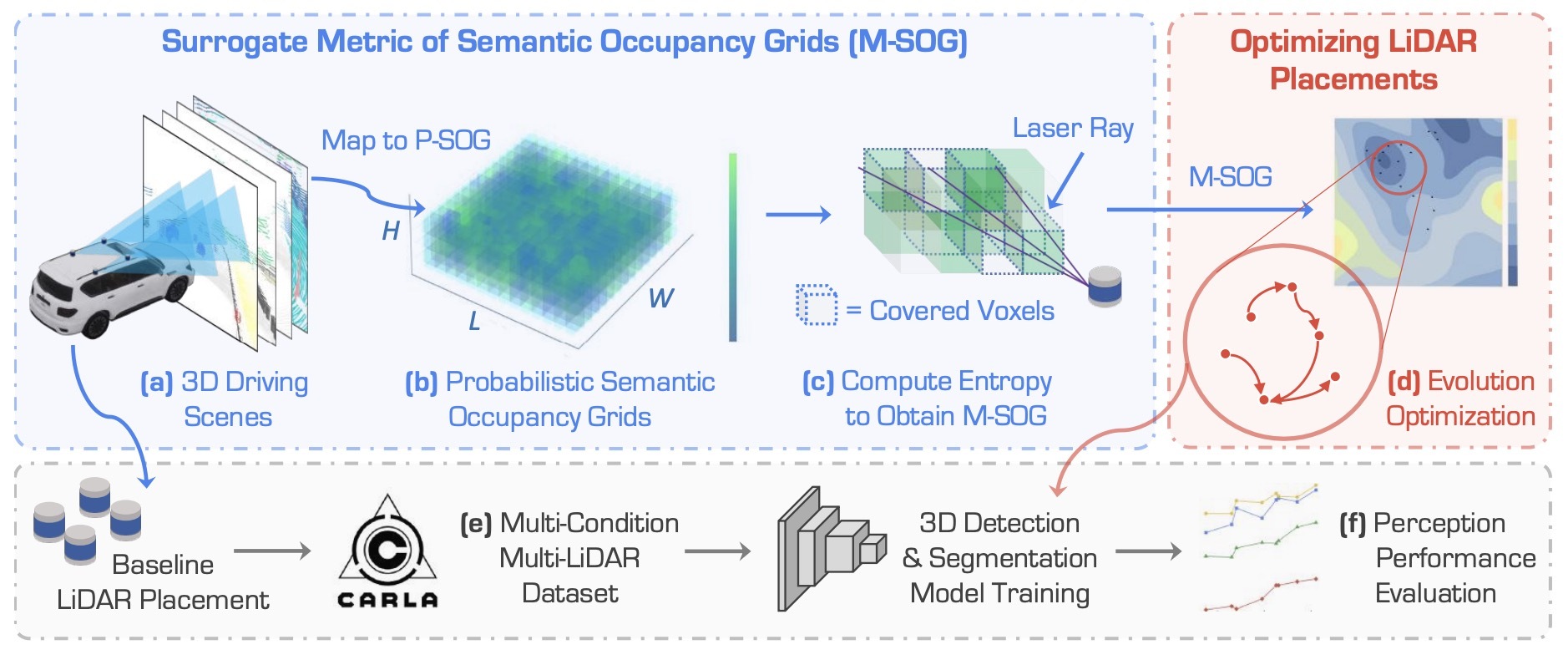

Place3D is a full-cycle pipeline that encompasses LiDAR placement optimization, data generation, and downstream evaluations.

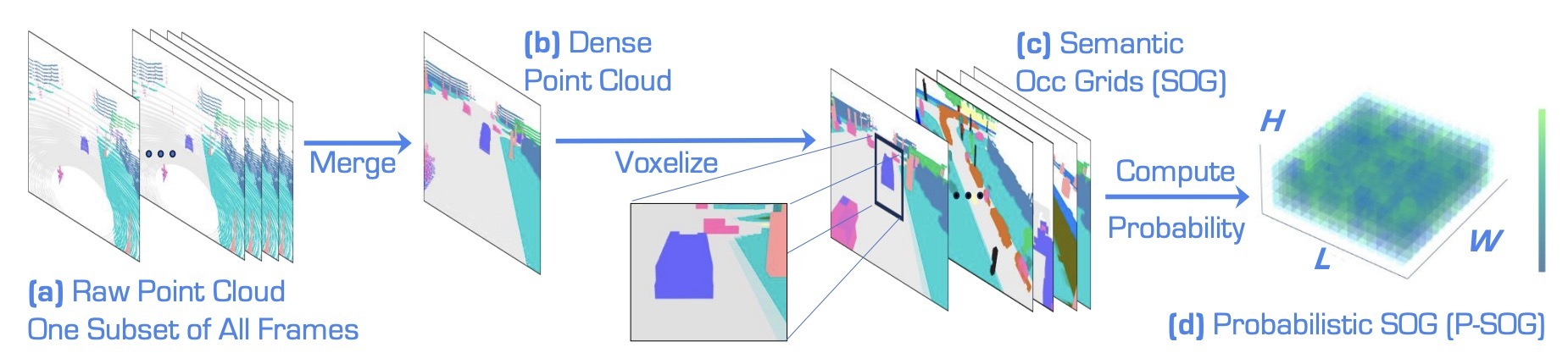

- To identify the most effective configurations for multi-LiDAR systems, we introduce the Surrogate Metric of the Semantic Occupancy Grids (M-SOG) to evaluate LiDAR placement quality.

- Leveraging the M-SOG metric, we propose a novel optimization strategy to refine multi-LiDAR placements.

- Centered around the theme of multi-condition multi-LiDAR perception, we collect a 364,000-frame dataset from both clean and adverse conditions.

Our optimized placements show exceptional robustness in both 3D object detection and LiDAR semantic segmentation under diverse adverse weather and sensor failure conditions.

| Waymo | Motional | Cruise | Pony.ai |
|---|---|---|---|
| Zoox | Toyota | Momenta | Ford |

Visit our project page to explore more examples. 🚙

- [2024.03] - Our paper is available on arXiv. The code has been made publicly accessible. 🚀

- Installation

- Data Preparation

- Sensor Placement

- Place3D Pipeline

- Getting Started

- SOG Generation

- Model Zoo

- Place3D Benchmark

- TODO List

- Citation

- License

- Acknowledgements

For details related to installation and environment setups, kindly refer to INSTALL.md.

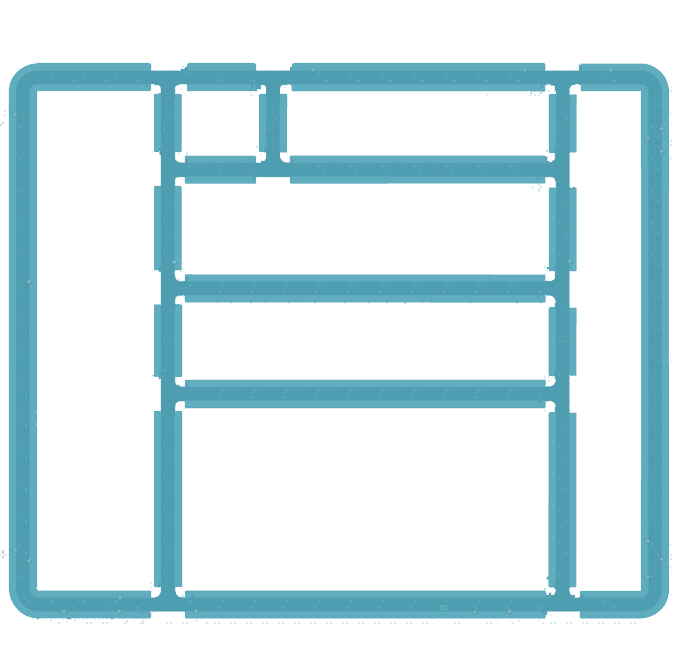

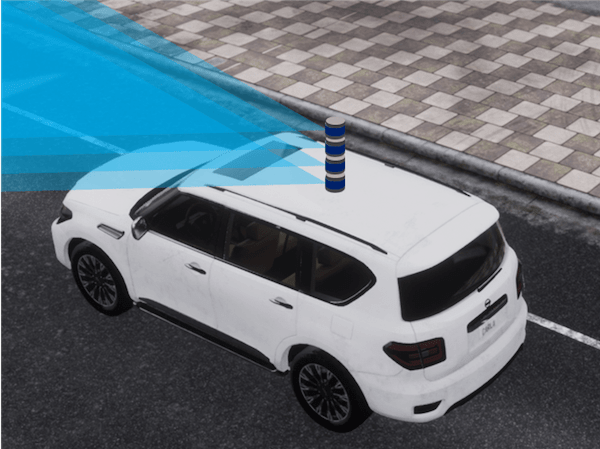

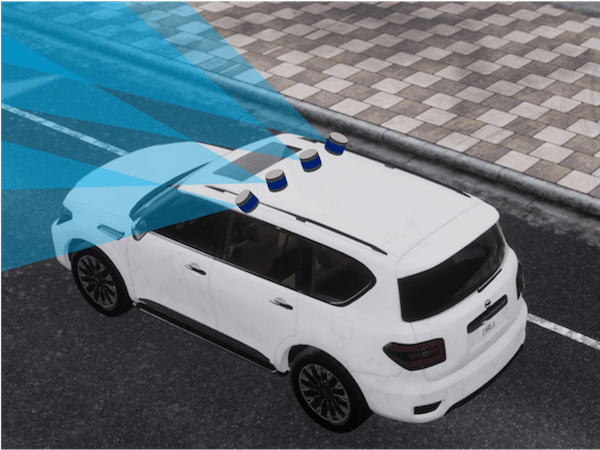

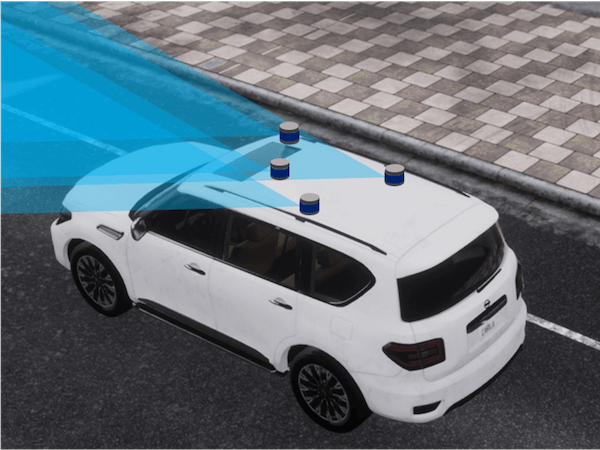

The Place3D dataset consists of eleven LiDAR placements, of which seven are baselines inspired by existing self-driving configurations from autonomous vehicle companies and four are obtained through sensor optimization.

Each LiDAR placement contains four LiDAR sensors. For each LiDAR configuration, the sub-dataset contains 13,600 frames: 11,200 for training and 2,400 for validation, following the split ratio used in nuScenes.
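The per-placement split can be checked with a few lines of arithmetic. The sketch below assumes that "the split ratio used in nuScenes" refers to its standard 700 training / 150 validation scenes (an assumption; the text above does not spell this out), and the variable names are illustrative only.

```python
from fractions import Fraction

# Per-placement frame counts stated above.
TRAIN_FRAMES = 11_200
VAL_FRAMES = 2_400
TOTAL_FRAMES = TRAIN_FRAMES + VAL_FRAMES

# Assumption: the nuScenes ratio means its standard scene split
# of 700 training scenes and 150 validation scenes.
NUSCENES_TRAIN_SCENES = 700
NUSCENES_VAL_SCENES = 150

# Exact train:val ratios, reduced to lowest terms.
place3d_ratio = Fraction(TRAIN_FRAMES, VAL_FRAMES)
nuscenes_ratio = Fraction(NUSCENES_TRAIN_SCENES, NUSCENES_VAL_SCENES)
train_fraction = TRAIN_FRAMES / TOTAL_FRAMES

print(f"frames per placement: {TOTAL_FRAMES}")
print(f"train:val = {place3d_ratio} (nuScenes scenes: {nuscenes_ratio})")
print(f"train fraction: {train_fraction:.3f}")
```

Under that assumption both ratios reduce to the same fraction, so roughly 82% of each sub-dataset is used for training.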

| Town 1 | Town 3 | Town 4 | Town 6 |
|---|---|---|---|

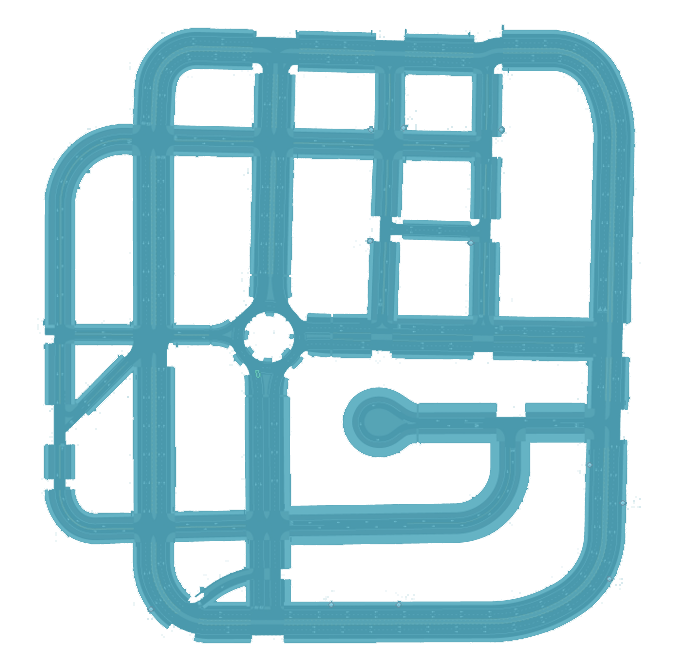

We choose four maps (Towns 1, 3, 4, and 6) in CARLA v0.9.10 to collect point cloud data and generate ground-truth information. For each map, we manually set six ego-vehicle routes that together cover all roads without overlap. The simulation runs at 20 Hz.
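A 20 Hz simulation corresponds to a fixed step of 0.05 s per frame. The sketch below derives that step and, in comments only, shows where it would typically be set through CARLA's world settings. Whether the data was collected in synchronous mode is an assumption, and the helper name `fixed_delta_seconds` is hypothetical.

```python
SIMULATION_HZ = 20  # simulation frequency stated above


def fixed_delta_seconds(frequency_hz: float) -> float:
    """Return the simulation time step (in seconds) for a given frequency."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return 1.0 / frequency_hz


step = fixed_delta_seconds(SIMULATION_HZ)
print(f"time step: {step} s")

# With a running CARLA server, the step would typically be applied like this
# (synchronous mode is an assumption, not confirmed by the text above):
#
#   settings = world.get_settings()
#   settings.synchronous_mode = True
#   settings.fixed_delta_seconds = step
#   world.apply_settings(settings)
```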

Our datasets are hosted by OpenDataLab.

OpenDataLab is a pioneering open data platform for the large AI model era that makes datasets accessible. Through OpenDataLab, researchers can obtain free, formatted datasets across various fields.

Kindly refer to DATA_PREPARE.md for details on preparing the Place3D dataset.

| Center | Line | Pyramid | Square |
|---|---|---|---|
| Trapezoid | Line-Roll | Ours (Det) | Ours (Seg) |

To learn more about using this codebase, kindly refer to GET_STARTED.md.

LiDAR Semantic Segmentation

3D Object Detection

- PointPillars, CVPR 2019. [Code]

- CenterPoint, CVPR 2021. [Code]

- BEVFusion, ICRA 2023. [Code]

- FSTR, TGRS 2023. [Code]

| Method | Center | | | Line | | | Pyramid | | | Square | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | mIoU | mAcc | ECE | mIoU | mAcc | ECE | mIoU | mAcc | ECE | mIoU | mAcc | ECE |
| MinkUNet | 65.7 | 72.4 | 0.041 | 59.7 | 67.7 | 0.037 | 62.7 | 70.6 | 0.072 | 60.7 | 68.4 | 0.043 |
| PolarNet | 71.0 | 76.0 | 0.033 | 67.7 | 74.1 | 0.034 | 67.7 | 73.0 | 0.032 | 69.3 | 74.7 | 0.033 |
| SPVCNN | 67.1 | 74.4 | 0.034 | 59.3 | 66.7 | 0.068 | 67.6 | 74.0 | 0.037 | 63.4 | 70.2 | 0.031 |
| Cylinder3D | 72.7 | 79.2 | 0.041 | 68.9 | 76.3 | 0.045 | 68.4 | 76.0 | 0.093 | 69.9 | 76.7 | 0.044 |
| Average | 69.1 | 75.5 | 0.037 | 63.9 | 71.2 | 0.046 | 66.6 | 73.4 | 0.059 | 65.8 | 72.5 | 0.038 |

| Method | Trapezoid | | | Line-Roll | | | Pyramid-Roll | | | Ours | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | mIoU | mAcc | ECE | mIoU | mAcc | ECE | mIoU | mAcc | ECE | mIoU | mAcc | ECE |
| MinkUNet | 59.0 | 66.2 | 0.040 | 58.5 | 66.4 | 0.047 | 62.2 | 69.6 | 0.051 | 66.5 | 73.2 | 0.031 |
| PolarNet | 66.8 | 72.3 | 0.034 | 67.2 | 72.8 | 0.037 | 70.9 | 75.9 | 0.035 | 76.7 | 81.5 | 0.033 |
| SPVCNN | 61.0 | 68.8 | 0.044 | 60.6 | 68.0 | 0.034 | 67.9 | 74.2 | 0.033 | 68.3 | 74.6 | 0.034 |
| Cylinder3D | 68.5 | 75.4 | 0.057 | 69.8 | 77.0 | 0.048 | 69.3 | 77.0 | 0.048 | 73.0 | 78.9 | 0.037 |
| Average | 63.8 | 70.7 | 0.044 | 64.0 | 71.1 | 0.042 | 67.6 | 74.2 | 0.042 | 71.1 | 77.1 | 0.034 |
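The ECE columns presumably report the expected calibration error: predictions are grouped into confidence bins, and the gap between accuracy and mean confidence in each bin is averaged with weights proportional to bin size. The sketch below implements that standard definition in plain Python. It is not necessarily the evaluation code used for this benchmark; the function name, the 10-bin default, and the toy inputs are all assumptions.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[bool],
    num_bins: int = 10,
) -> float:
    """Standard ECE: size-weighted mean of |accuracy - confidence| over bins."""
    if len(confidences) != len(correct):
        raise ValueError("inputs must have the same length")
    n = len(confidences)
    if n == 0:
        return 0.0

    # Accumulate per-bin sample counts, confidence sums, and correct hits.
    counts = [0] * num_bins
    conf_sums = [0.0] * num_bins
    hits = [0] * num_bins
    for conf, ok in zip(confidences, correct):
        # Equal-width bins on [0, 1]; a confidence of 1.0 goes to the last bin.
        idx = min(int(conf * num_bins), num_bins - 1)
        counts[idx] += 1
        conf_sums[idx] += conf
        hits[idx] += int(ok)

    ece = 0.0
    for count, conf_sum, hit in zip(counts, conf_sums, hits):
        if count == 0:
            continue  # empty bins contribute nothing
        accuracy = hit / count
        mean_conf = conf_sum / count
        ece += (count / n) * abs(accuracy - mean_conf)
    return ece


# Toy example (made-up values): per-point confidence and correctness.
toy_conf = [0.95, 0.95, 0.65, 0.65]
toy_correct = [True, True, True, False]
toy_ece = expected_calibration_error(toy_conf, toy_correct)
print(f"toy ECE: {toy_ece:.3f}")
```

Lower values indicate that a model's confidence tracks its accuracy more closely, which is why ECE is read alongside mIoU and mAcc rather than in place of them.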

| Method | Center | | | Line | | | Pyramid | | | Square | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | Car | Ped | Bicy | Car | Ped | Bicy | Car | Ped | Bicy | Car | Ped | Bicy |
| PointPillars | 46.5 | 19.4 | 27.1 | 43.4 | 22.0 | 27.7 | 46.1 | 24.4 | 29.0 | 43.8 | 20.8 | 27.1 |
| CenterPoint | 55.8 | 28.7 | 28.8 | 54.0 | 34.2 | 37.7 | 55.9 | 37.4 | 35.6 | 54.0 | 35.5 | 34.1 |
| BEVFusion-L | 52.5 | 31.9 | 32.2 | 49.3 | 29.0 | 29.5 | 51.0 | 21.7 | 27.9 | 49.2 | 27.0 | 26.7 |
| FSTR | 55.3 | 27.7 | 29.3 | 51.9 | 30.2 | 33.0 | 55.7 | 29.4 | 33.8 | 52.8 | 30.3 | 31.3 |
| Average | 52.5 | 26.9 | 29.4 | 49.7 | 28.9 | 32.0 | 52.2 | 28.2 | 31.6 | 50.0 | 28.4 | 29.8 |

| Method | Trapezoid | | | Line-Roll | | | Pyramid-Roll | | | Ours | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | Car | Ped | Bicy | Car | Ped | Bicy | Car | Ped | Bicy | Car | Ped | Bicy |
| PointPillars | 43.5 | 21.5 | 27.3 | 44.6 | 21.3 | 27.0 | 46.1 | 23.6 | 27.9 | 46.8 | 24.9 | 27.2 |
| CenterPoint | 55.4 | 35.6 | 37.5 | 55.2 | 32.7 | 37.2 | 56.2 | 36.5 | 35.9 | 57.1 | 34.4 | 37.3 |
| BEVFusion-L | 50.2 | 30.0 | 31.7 | 50.8 | 29.4 | 29.5 | 50.7 | 22.7 | 28.2 | 53.0 | 28.7 | 29.5 |
| FSTR | 54.6 | 30.0 | 33.3 | 53.5 | 29.8 | 32.4 | 55.5 | 29.9 | 32.0 | 56.6 | 31.9 | 34.1 |
| Average | 50.9 | 29.3 | 32.5 | 51.0 | 28.3 | 31.5 | 52.1 | 28.2 | 31.0 | 53.4 | 30.0 | 32.0 |
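The Average rows appear to be plain arithmetic means over the four detectors, rounded to one decimal. A quick check using the "Ours" columns copied from the detection table above (the dictionary layout is for illustration only):

```python
from statistics import mean

# "Ours" placement scores (Car, Ped, Bicy) copied from the table above.
ours_scores = {
    "PointPillars": (46.8, 24.9, 27.2),
    "CenterPoint": (57.1, 34.4, 37.3),
    "BEVFusion-L": (53.0, 28.7, 29.5),
    "FSTR": (56.6, 31.9, 34.1),
}

# Transpose rows into per-class columns, then average each column.
columns = zip(*ours_scores.values())
averages = [round(mean(col), 1) for col in columns]

print(dict(zip(("Car", "Ped", "Bicy"), averages)))
```

The same recipe applies to any other placement column, which is handy when adding a new model to the benchmark and updating the Average row.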

- Initial release. 🚀

- Add LiDAR placement benchmarks.

- Add LiDAR placement datasets.

- Add acknowledgments.

- Add citations.

- Add more 3D perception models.

If you find this work helpful for your research, please kindly consider citing our papers:

@article{li2024place3d,

author = {Ye Li and Lingdong Kong and Hanjiang Hu and Xiaohao Xu and Xiaonan Huang},

title = {Optimizing LiDAR Placements for Robust Driving Perception in Adverse Conditions},

journal = {arXiv preprint arXiv:2403.17009},

year = {2024},

}

@misc{mmdet3d,

title = {MMDetection3D: OpenMMLab Next-Generation Platform for General 3D Object Detection},

author = {MMDetection3D Contributors},

howpublished = {\url{https://github.com/open-mmlab/mmdetection3d}},

year = {2020}

}

This work is under the Apache License, Version 2.0, although some specific implementations in this codebase may be under other licenses. Kindly refer to LICENSE.md for a more careful check if you are using our code for commercial purposes.

This work is developed based on the MMDetection3D codebase.

MMDetection3D is an open-source toolbox based on PyTorch, towards the next-generation platform for general 3D perception. It is a part of the OpenMMLab project developed by MMLab.

We acknowledge the use of the following public resources during the course of this work: CARLA, nuScenes-devkit, SemanticKITTI-API, MinkowskiEngine, TorchSparse, Open3D-ML, and Robo3D.

We thank the above open-source repositories for their exceptional contributions! ❤️