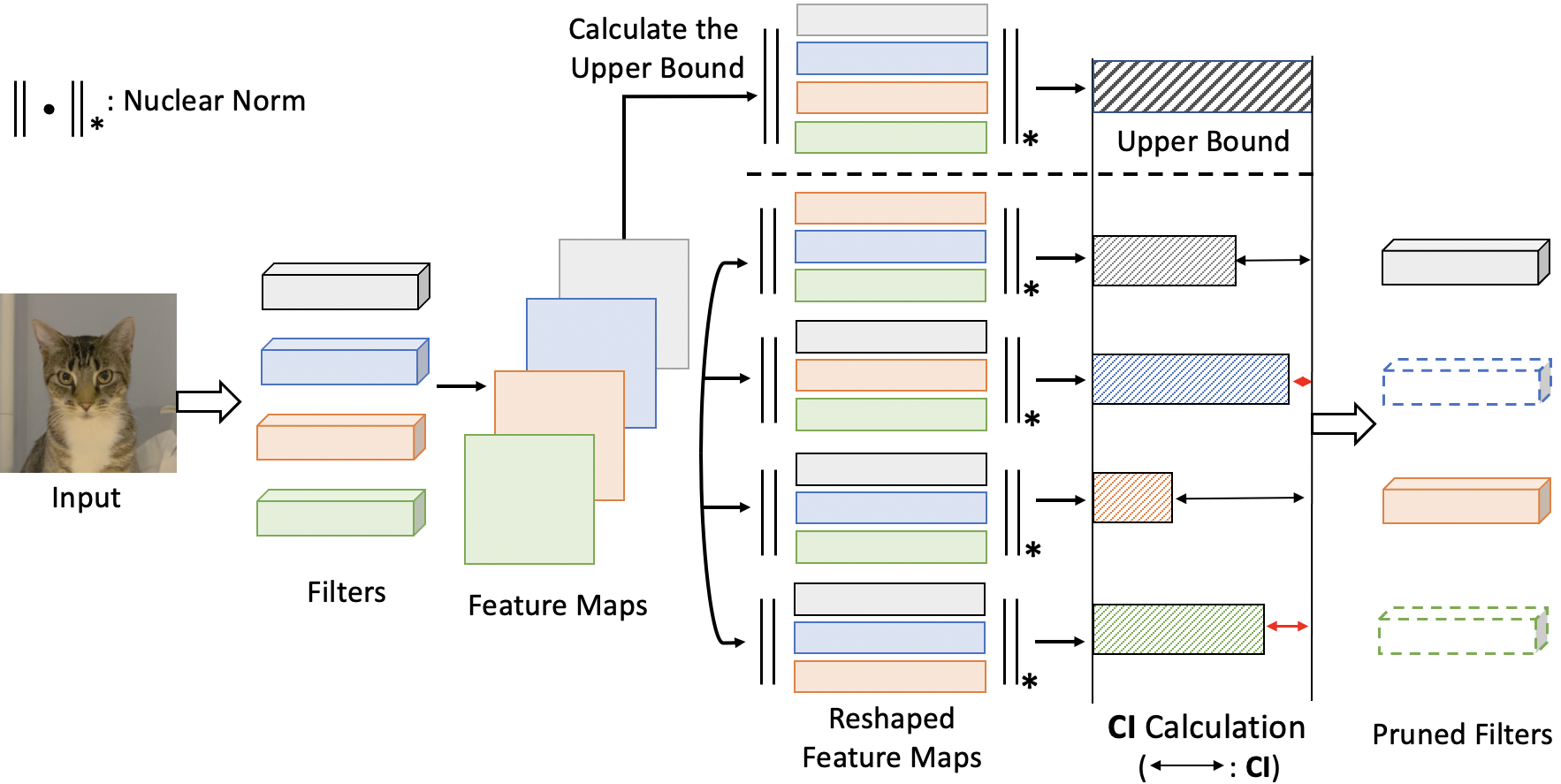

Code for our NeurIPS 2021 paper, "CHIP: CHannel Independence-based Pruning for Compact Neural Networks".

    @article{sui2021chip,
      title={CHIP: CHannel Independence-based Pruning for Compact Neural Networks},
      author={Sui, Yang and Yin, Miao and Xie, Yi and Phan, Huy and Aliari Zonouz, Saman and Yuan, Bo},
      journal={Advances in Neural Information Processing Systems},
      volume={34},
      year={2021}
    }

ResNet-56 on CIFAR-10:

    python calculate_feature_maps.py \
    --arch resnet_56 \
    --dataset cifar10 \
    --data_dir ./data \
    --pretrain_dir ./pretrained_models/resnet_56.pt \
    --gpu 0

ResNet-50 on ImageNet:

    python calculate_feature_maps.py \
    --arch resnet_50 \
    --dataset imagenet \
    --data_dir /raid/data/imagenet \
    --pretrain_dir ./pretrained_models/resnet50.pth \
    --gpu 0

This procedure is time-consuming, so please be patient.

ResNet-56:

    python calculate_ci.py \
    --arch resnet_56 \
    --repeat 5 \
    --num_layers 55

ResNet-50:

    python calculate_ci.py \
    --arch resnet_50 \
    --repeat 5 \
    --num_layers 53

ResNet-56 on CIFAR-10:

    python prune_finetune_cifar.py \
    --data_dir ./data \
    --result_dir ./result/resnet_56/1 \
    --arch resnet_56 \
    --ci_dir ./CI_resnet_56 \
    --batch_size 256 \
    --epochs 400 \
    --lr_type cos \
    --learning_rate 0.01 \
    --momentum 0.9 \
    --weight_decay 0.005 \
    --pretrain_dir ./pretrained_models/resnet_56.pt \
    --sparsity [0.]+[0.4]*2+[0.5]*9+[0.6]*9+[0.7]*9 \
    --gpu 0

ResNet-50 on ImageNet:

    python prune_finetune_imagenet.py \
    --data_dir /raid/data/imagenet \
    --result_dir ./result/resnet_50/1 \
    --arch resnet_50 \
    --ci_dir ./CI_resnet_50 \
    --batch_size 256 \
    --epochs 180 \
    --lr_type cos \
    --learning_rate 0.01 \
    --momentum 0.99 \
    --label_smooth 0.1 \
    --weight_decay 0.0001 \
    --pretrain_dir ./pretrained_models/resnet50.pth \
    --sparsity [0.]+[0.1]*3+[0.35]*16 \
    --gpu 0

- Pre-trained Models
  - CIFAR-10: VGG-16_BN, ResNet-56, ResNet-110.
  - ImageNet: ResNet-50.
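The `--sparsity` values in the pruning commands above read like Python list arithmetic: `[0.5]*9` repeats a rate nine times, and `+` concatenates the groups. The sketch below expands such a string into a flat list of rates. The helper `expand_sparsity` is hypothetical and not part of this repository, and how the pruning scripts actually parse the argument or assign each entry to a layer is an assumption not stated here.

```python
import ast


def expand_sparsity(spec):
    """Expand a spec such as "[0.]+[0.4]*2" into a flat list of floats.

    Hypothetical helper for illustration only; the repository's own
    parsing may differ.
    """
    rates = []
    for term in spec.split("+"):
        # Each term is "[rate]" optionally followed by "*count".
        list_part, _, count_part = term.partition("*")
        values = ast.literal_eval(list_part.strip())
        count = int(count_part) if count_part else 1
        rates.extend(float(v) for v in values * count)
    return rates


resnet56 = expand_sparsity("[0.]+[0.4]*2+[0.5]*9+[0.6]*9+[0.7]*9")
resnet50 = expand_sparsity("[0.]+[0.1]*3+[0.35]*16")
print(len(resnet56), len(resnet50))  # 30 20
```

Under this reading, the ResNet-56 string yields 30 rates and the ResNet-50 string yields 20. Because `[` and `*` are glob characters, some shells (zsh, for example) may reject the unquoted value; wrapping it in single quotes passes the same string to the script.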

We release training logs for the ResNet-56/110 models on CIFAR-10, trained for more epochs than in the paper, which achieve better results than those reported there. We also release training logs for the ResNet-50 model on ImageNet. Training logs can be found at link. Some results are better than those in the paper.

The code is based on link.

Since I rearranged my original code for simplicity, please feel free to open an issue if something goes wrong when you run it. (Please forgive any late responses; it may take me several days to reply.)