StudioGAN is a PyTorch library providing implementations of representative Generative Adversarial Networks (GANs) for conditional/unconditional image generation. StudioGAN aims to offer an identical playground for modern GANs so that machine learning researchers can readily compare and analyze new ideas.

- Extensive GAN implementations for PyTorch

- Comprehensive benchmark of GANs using CIFAR10, Tiny ImageNet, and ImageNet datasets

- Better performance and lower memory consumption than original implementations

- Pre-trained models that are fully compatible with up-to-date PyTorch environments

- Support for Multi-GPU (DP, DDP, and Multinode DistributedDataParallel), Mixed Precision, Synchronized Batch Normalization, LARS, TensorBoard Visualization, and other analysis methods

| Method | Venue | Architecture | GC | DC | Loss | EMA |
|---|---|---|---|---|---|---|
| DCGAN | arXiv'15 | CNN/ResNet[1] | N/A | N/A | Vanilla | False |
| LSGAN | ICCV'17 | CNN/ResNet[1] | N/A | N/A | Least Square | False |
| GGAN | arXiv'17 | CNN/ResNet[1] | N/A | N/A | Hinge | False |
| WGAN-WC | ICLR'17 | ResNet | N/A | N/A | Wasserstein | False |
| WGAN-GP | NIPS'17 | ResNet | N/A | N/A | Wasserstein | False |
| WGAN-DRA | arXiv'17 | ResNet | N/A | N/A | Wasserstein | False |
| ACGAN-Mod[2] | - | ResNet | cBN | AC | Hinge | False |
| ProjGAN | ICLR'18 | ResNet | cBN | PD | Hinge | False |
| SNGAN | ICLR'18 | ResNet | cBN | PD | Hinge | False |
| SAGAN | ICML'19 | ResNet | cBN | PD | Hinge | False |
| BigGAN | ICLR'19 | Big ResNet | cBN | PD | Hinge | True |
| BigGAN-Deep | ICLR'19 | Big ResNet Deep | cBN | PD | Hinge | True |
| BigGAN-Mod[3] | - | Big ResNet | cBN | PD | Hinge | True |
| CRGAN | ICLR'20 | Big ResNet | cBN | PD/CL | Hinge | True |
| ICRGAN | arXiv'20 | Big ResNet | cBN | PD/CL | Hinge | True |
| LOGAN | arXiv'19 | Big ResNet | cBN | PD | Hinge | True |
| DiffAugGAN | NeurIPS'20 | Big ResNet | cBN | PD/CL | Hinge | True |
| ADAGAN | NeurIPS'20 | Big ResNet | cBN | PD/CL | Hinge | True |
| ContraGAN | NeurIPS'20 | Big ResNet | cBN | CL | Hinge | True |
| FreezeD | CVPRW'20 | - | - | - | - | - |

GC/DC indicates how label information is injected into the Generator or Discriminator.

EMA: Exponential Moving Average update to the generator. cBN: conditional Batch Normalization. AC: Auxiliary Classifier. PD: Projection Discriminator. CL: Contrastive Learning.
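To make the EMA column concrete, here is a minimal sketch of an exponential moving average update over generator weights. Plain Python floats stand in for tensors, and the function name `ema_update` and the default decay of 0.9999 are assumptions chosen for illustration; they are not taken from StudioGAN's code.

```python
def ema_update(shadow, params, decay=0.9999):
    """Blend the current generator parameters into the shadow (EMA) copy in place.

    shadow and params are dicts mapping parameter names to floats
    (hypothetical stand-ins for weight tensors).
    """
    for name, value in params.items():
        shadow[name] = decay * shadow[name] + (1.0 - decay) * value
    return shadow


# Toy usage: the shadow weight drifts toward the live weight over a few steps.
shadow = {"w": 0.0}
for _ in range(3):
    live = {"w": 1.0}  # pretend this came from an optimizer step
    ema_update(shadow, live, decay=0.5)
print(shadow["w"])
```

In practice the shadow copy, not the live generator, would be the one used for evaluation when EMA is True.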

| Method | Venue | Architecture | GC | DC | Loss | EMA |
|---|---|---|---|---|---|---|
| StyleGAN2 | CVPR'20 | StyleNet | - | - | Vanilla | True |

Please refer to requirements.md for more information.

You can install the recommended environment as follows:

conda env create -f environment.yml -n studiogan

With Docker, you can use:

docker pull mgkang/studiogan:latest

The following command creates a container named "studioGAN". Port 6006 is published so that you can connect to TensorBoard.

docker run -it --gpus all --shm-size 128g -p 6006:6006 --name studioGAN -v /home/USER:/root/code --workdir /root/code mgkang/studiogan:latest /bin/bash

- Train (-t) and evaluate (-e) the model defined in CONFIG_PATH using GPU 0

CUDA_VISIBLE_DEVICES=0 python3 src/main.py -t -e -c CONFIG_PATH

- Train (-t) and evaluate (-e) the model defined in CONFIG_PATH using GPUs (0, 1, 2, 3) and DataParallel

CUDA_VISIBLE_DEVICES=0,1,2,3 python3 src/main.py -t -e -c CONFIG_PATH

Try python3 src/main.py to see available options.

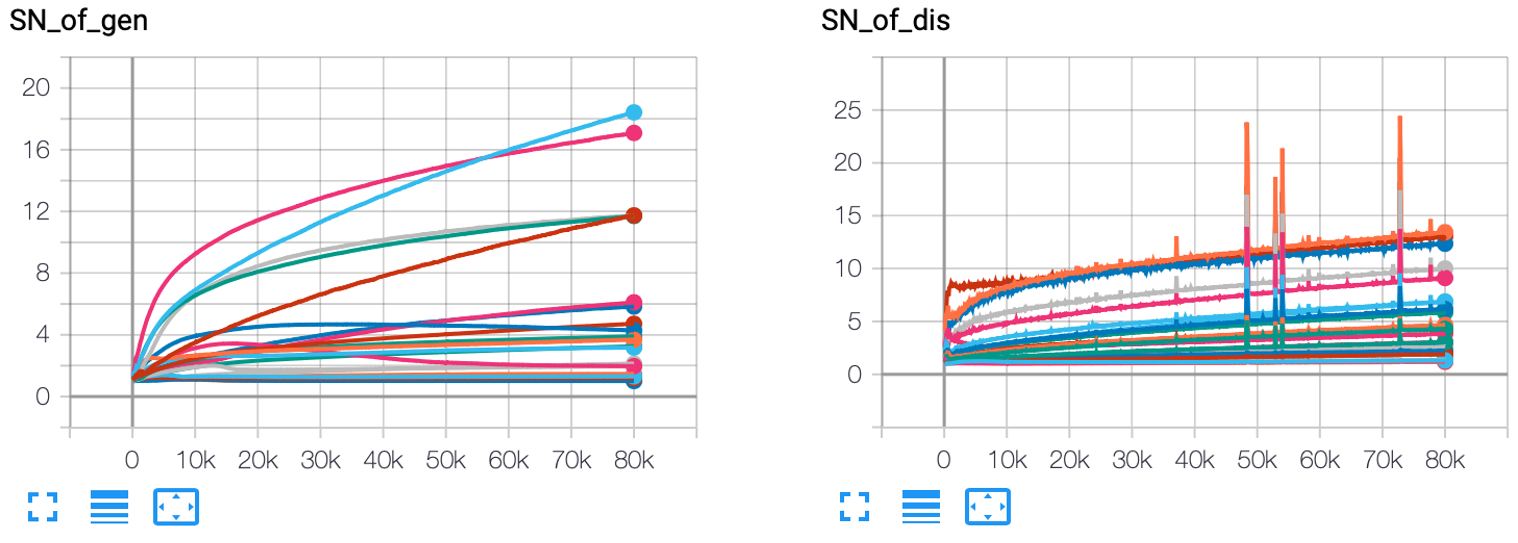

Via TensorBoard, you can monitor trends of IS, FID, F_beta, Authenticity Accuracies, and the largest singular values:

~ PyTorch-StudioGAN/logs/RUN_NAME>>> tensorboard --logdir=./ --port PORT

- CIFAR10: StudioGAN will automatically download the dataset once you execute main.py.

- Tiny ImageNet, ImageNet, or a custom dataset:

  - Download Tiny ImageNet and ImageNet, or prepare your own dataset.

  - Arrange the dataset folder as follows:

    ┌── docs
    ├── src
    └── data
        └── ILSVRC2012 or TINY_ILSVRC2012 or CUSTOM
            ├── train
            │   ├── cls0
            │   │   ├── train0.png
            │   │   ├── train1.png
            │   │   └── ...
            │   ├── cls1
            │   └── ...
            └── valid
                ├── cls0
                │   ├── valid0.png
                │   ├── valid1.png
                │   └── ...
                ├── cls1
                └── ...
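Before launching a run on a custom dataset, it can help to confirm that the folder layout matches the tree above. The following is a minimal sketch, not part of StudioGAN: the helper names `summarize_dataset` and `make_toy_dataset` are hypothetical, and the check only assumes one sub-folder per class under `train` and `valid`.

```python
import tempfile
from pathlib import Path


def summarize_dataset(root):
    """Return {split: {class_name: file_count}} for a train/valid folder layout."""
    root = Path(root)
    summary = {}
    for split in ("train", "valid"):
        split_dir = root / split
        if not split_dir.is_dir():
            raise FileNotFoundError(f"missing split folder: {split_dir}")
        summary[split] = {
            cls.name: sum(1 for f in cls.iterdir() if f.is_file())
            for cls in sorted(split_dir.iterdir())
            if cls.is_dir()
        }
    return summary


def make_toy_dataset(root, classes=("cls0", "cls1"), images_per_class=2):
    """Create empty placeholder files in the expected layout (demo only)."""
    root = Path(root)
    for split in ("train", "valid"):
        for cls in classes:
            cls_dir = root / split / cls
            cls_dir.mkdir(parents=True, exist_ok=True)
            for i in range(images_per_class):
                (cls_dir / f"{split}{i}.png").touch()
    return root


# Demo on a throwaway directory standing in for data/CUSTOM.
with tempfile.TemporaryDirectory() as tmp:
    toy_root = make_toy_dataset(Path(tmp) / "data" / "CUSTOM")
    print(summarize_dataset(toy_root))
```

Pointing `summarize_dataset` at your real dataset root gives a quick per-class file count for both splits.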

- DistributedDataParallel (Please refer to Here)

NODE_0, 4 GPUs, all ports are open to NODE_1:

docker run -it --gpus all --shm-size 128g --name studioGAN --network=host -v /home/USER:/root/code --workdir /root/code mgkang/studiogan:latest /bin/bash

~/code>>> export NCCL_SOCKET_IFNAME=^docker0,lo

~/code>>> export MASTER_ADDR=PUBLIC_IP_OF_NODE_0

~/code>>> export MASTER_PORT=AVAILABLE_PORT_OF_NODE_0

~/code/PyTorch-StudioGAN>>> CUDA_VISIBLE_DEVICES=0,1,2,3 python3 src/main.py -t -e -DDP -n 2 -nr 0 -c CONFIG_PATH

NODE_1, 4 GPUs, all ports are open to NODE_0:

docker run -it --gpus all --shm-size 128g --name studioGAN --network=host -v /home/USER:/root/code --workdir /root/code mgkang/studiogan:latest /bin/bash

~/code>>> export NCCL_SOCKET_IFNAME=^docker0,lo

~/code>>> export MASTER_ADDR=PUBLIC_IP_OF_NODE_0

~/code>>> export MASTER_PORT=AVAILABLE_PORT_OF_NODE_0

~/code/PyTorch-StudioGAN>>> CUDA_VISIBLE_DEVICES=0,1,2,3 python3 src/main.py -t -e -DDP -n 2 -nr 1 -c CONFIG_PATH

※ StudioGAN does not support DDP training for ContraGAN. This is because conducting contrastive learning requires a 'gather' operation to calculate the exact conditional contrastive loss.

- Mixed Precision Training

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -t -mpc -c CONFIG_PATH

- Standing Statistics

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -e -std_stat --standing_step STANDING_STEP -c CONFIG_PATH

- Synchronized BatchNorm

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -t -sync_bn -c CONFIG_PATH

- Load All Data in Main Memory

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -t -l -c CONFIG_PATH

- LARS

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -t -l -c CONFIG_PATH -LARS

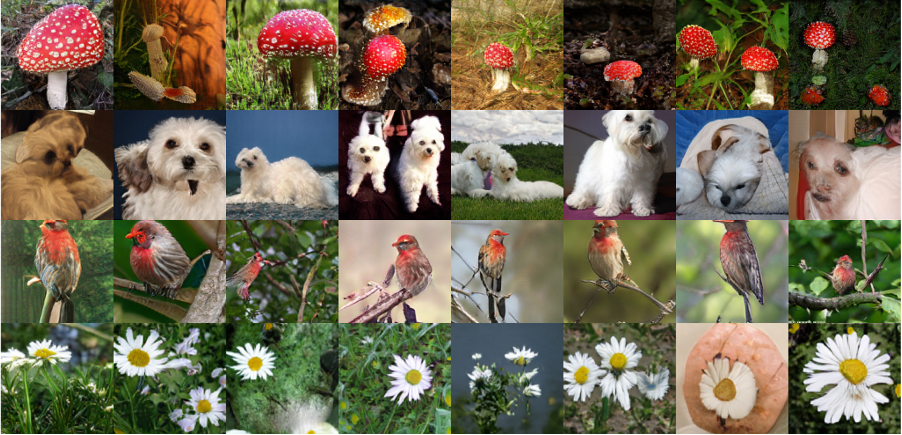

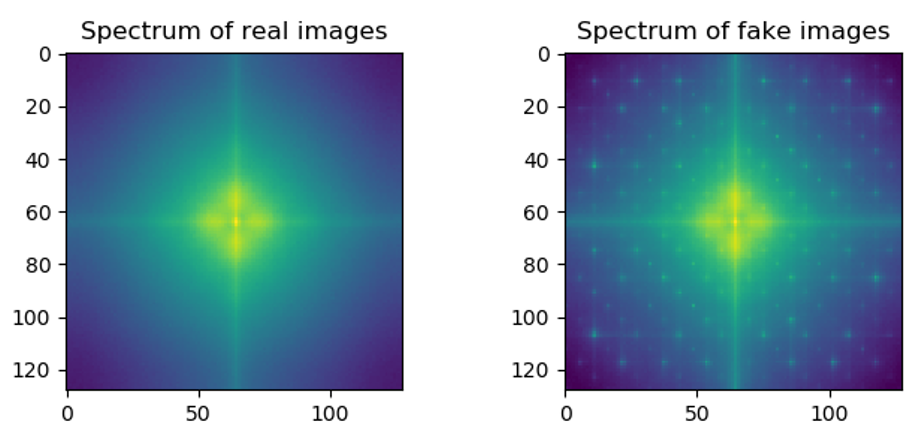

StudioGAN supports image visualization, K-nearest neighbor analysis, linear interpolation, and frequency analysis. All results will be saved in ./figures/RUN_NAME/*.png.

- Image Visualization

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -iv -std_stat --standing_step STANDING_STEP -c CONFIG_PATH --checkpoint_folder CHECKPOINT_FOLDER --log_output_path LOG_OUTPUT_PATH

- K-Nearest Neighbor Analysis (K is fixed to 7; the images in the first column are generated images)

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -knn -std_stat --standing_step STANDING_STEP -c CONFIG_PATH --checkpoint_folder CHECKPOINT_FOLDER --log_output_path LOG_OUTPUT_PATH

- Linear Interpolation (applicable only to conditional Big ResNet models)

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -itp -std_stat --standing_step STANDING_STEP -c CONFIG_PATH --checkpoint_folder CHECKPOINT_FOLDER --log_output_path LOG_OUTPUT_PATH

- Frequency Analysis

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -fa -std_stat --standing_step STANDING_STEP -c CONFIG_PATH --checkpoint_folder CHECKPOINT_FOLDER --log_output_path LOG_OUTPUT_PATH

- TSNE Analysis

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -tsne -std_stat --standing_step STANDING_STEP -c CONFIG_PATH --checkpoint_folder CHECKPOINT_FOLDER --log_output_path LOG_OUTPUT_PATH

Inception Score (IS) measures how well a GAN generates high-fidelity and diverse images. Calculating IS requires the pre-trained Inception-V3 network, and recent approaches utilize OpenAI's TensorFlow implementation.

To compute the official IS, you first have to create a "samples.npz" file using the command below:

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -s -c CONFIG_PATH --checkpoint_folder CHECKPOINT_FOLDER --log_output_path LOG_OUTPUT_PATH

This automatically creates the samples.npz file at ./samples/RUN_NAME/fake/npz/samples.npz.

After that, run the official TensorFlow IS implementation. Note that we do not split a dataset into ten folds to calculate IS ten times. We use the entire dataset to compute IS only once, which is the evaluation strategy used in the CompareGAN repository.

CUDA_VISIBLE_DEVICES=0,...,N python3 src/inception_tf13.py --run_name RUN_NAME --type "fake"

Keep in mind that you need to have TensorFlow 1.3 or an earlier version installed!

Note that StudioGAN logs the PyTorch-based IS during training.
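The single-pass strategy described above can be sketched in a few lines. This is an illustrative computation only, not the TensorFlow or StudioGAN implementation: it assumes the Inception-V3 class probabilities for every generated image are already available as rows of a list, and the function name `inception_score` is hypothetical. It computes IS as the exponential of the mean KL divergence between each conditional distribution p(y|x) and the marginal p(y), using all samples at once rather than averaging over ten folds.

```python
import math


def inception_score(probs):
    """Compute IS = exp(mean_x KL(p(y|x) || p(y))) over all samples in one pass.

    probs: list of per-image class-probability lists (each row sums to 1).
    """
    n = len(probs)
    num_classes = len(probs[0])
    # Marginal class distribution p(y), averaged over the whole sample set.
    marginal = [sum(row[c] for row in probs) / n for c in range(num_classes)]
    kl_total = 0.0
    for row in probs:
        # Terms with p(y|x) = 0 contribute nothing to the KL divergence.
        kl_total += sum(
            p * (math.log(p) - math.log(marginal[c]))
            for c, p in enumerate(row)
            if p > 0.0
        )
    return math.exp(kl_total / n)


# Confident and evenly spread predictions score higher than uninformative ones.
print(inception_score([[1.0, 0.0], [0.0, 1.0]]))
print(inception_score([[0.5, 0.5], [0.5, 0.5]]))
```

A ten-fold variant would call the same function on ten disjoint subsets and report the mean and standard deviation, which is the protocol this README says it does not follow.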

FID is a widely used metric to evaluate the performance of a GAN model. Calculating FID requires the pre-trained Inception-V3 network, and modern approaches use TensorFlow-based FID. StudioGAN utilizes the PyTorch-based FID to test GAN models in the same PyTorch environment. We show that the PyTorch-based FID implementation provides almost the same results as the TensorFlow implementation (see Appendix F of our paper).
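For reference, FID is commonly defined as the Fréchet distance between two Gaussians fitted to Inception-V3 features of real and generated images. The symbols below are standard notation introduced here for illustration, not taken from this README: $\mu_r, \Sigma_r$ are the feature mean and covariance of real images, and $\mu_g, \Sigma_g$ those of generated images.

```latex
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2
  + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2 \left( \Sigma_r \Sigma_g \right)^{1/2} \right)
```

Lower values indicate that the two feature distributions are closer, which is why the FID column in the result tables is marked with a downward arrow.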

Precision measures how accurately the generator can learn the target distribution. Recall measures how completely the generator covers the target distribution. Like IS and FID, calculating Precision and Recall requires the pre-trained Inception-V3 model. StudioGAN uses the same hyperparameter settings as the original Precision and Recall implementation, and StudioGAN calculates the F-beta score suggested by Sajjadi et al.
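As a small illustration of how an F-beta score combines the two quantities, the sketch below applies the standard F-beta formula to a single precision/recall pair. It is an assumption-laden simplification: the function name `f_beta` is hypothetical, and the code does not reproduce how StudioGAN or the original implementation derives precision and recall from Inception features.

```python
def f_beta(precision, recall, beta):
    """Standard F-beta score: beta > 1 emphasizes recall, beta < 1 emphasizes precision."""
    if precision == 0.0 and recall == 0.0:
        return 0.0
    b2 = beta * beta
    return (1.0 + b2) * precision * recall / (b2 * precision + recall)


# With precision 1.0 and recall 0.5, F_8 stays near the recall value
# while F_1/8 stays near the precision value.
print(f_beta(1.0, 0.5, beta=8.0))
print(f_beta(1.0, 0.5, beta=1.0 / 8.0))
```

This is why the result tables report both F_1/8 and F_8: read together, they indicate whether a model is limited more by fidelity or by coverage.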

※ We always welcome your contribution if you find any wrong implementation, bug, or misreported score.

We report the best IS, FID, and F_beta values of various GANs. B. S. means batch size for training.

CR, ICR, DiffAug, ADA, and LO refer to regularization or optimization techniques: CR (Consistency Regularization), ICR (Improved Consistency Regularization), DiffAug (Differentiable Augmentation), ADA (Adaptive Discriminator Augmentation), and LO (Latent Optimization).

When training, we used the command below.

With a single TITAN RTX GPU, training BigGAN takes about 13-15 hours.

CUDA_VISIBLE_DEVICES=0 python3 src/main.py -t -e -l -stat_otf -c CONFIG_PATH --eval_type "test"

| Method | Reference | IS(⭡) | FID(⭣) | F_1/8(⭡) | F_8(⭡) | Cfg | Log | Weights |
|---|---|---|---|---|---|---|---|---|
| DCGAN | StudioGAN | 6.638 | 49.030 | 0.833 | 0.795 | Cfg | Log | Link |
| LSGAN | StudioGAN | 5.577 | 66.686 | 0.757 | 0.720 | Cfg | Log | Link |
| GGAN | StudioGAN | 6.227 | 42.714 | 0.916 | 0.822 | Cfg | Log | Link |
| WGAN-WC | StudioGAN | 2.579 | 159.090 | 0.190 | 0.199 | Cfg | Log | Link |
| WGAN-GP | StudioGAN | 7.458 | 25.852 | 0.962 | 0.929 | Cfg | Log | Link |
| WGAN-DRA | StudioGAN | 6.432 | 41.586 | 0.922 | 0.863 | Cfg | Log | Link |
| ACGAN-Mod | StudioGAN | 6.629 | 45.571 | 0.857 | 0.847 | Cfg | Log | Link |
| ProjGAN | StudioGAN | 7.539 | 33.830 | 0.952 | 0.855 | Cfg | Log | Link |
| SNGAN | StudioGAN | 8.677 | 13.248 | 0.983 | 0.978 | Cfg | Log | Link |
| SAGAN | StudioGAN | 8.680 | 14.009 | 0.982 | 0.970 | Cfg | Log | Link |
| BigGAN | Paper | 9.22[4] | 14.73 | - | - | - | - | - |
| BigGAN + CR | Paper | - | 11.5 | - | - | - | - | - |
| BigGAN + ICR | Paper | - | 9.2 | - | - | - | - | - |
| BigGAN + DiffAug | Repo | 9.2[4] | 8.7 | - | - | - | - | - |
| BigGAN-Mod | StudioGAN | 9.746 | 8.034 | 0.995 | 0.994 | Cfg | Log | Link |
| BigGAN-Mod + CR | StudioGAN | 10.380 | 7.178 | 0.994 | 0.993 | Cfg | Log | Link |
| BigGAN-Mod + ICR | StudioGAN | 10.153 | 7.430 | 0.994 | 0.993 | Cfg | Log | Link |
| BigGAN-Mod + DiffAug | StudioGAN | 9.775 | 7.157 | 0.996 | 0.993 | Cfg | Log | Link |
| BigGAN-Mod + ADA | StudioGAN | 10.136 | 7.881 | 0.993 | 0.994 | Cfg | Log | Link |
| BigGAN-Mod + LO | StudioGAN | 9.701 | 8.369 | 0.992 | 0.989 | Cfg | Log | Link |
| ContraGAN | StudioGAN | 9.729 | 8.065 | 0.993 | 0.992 | Cfg | Log | Link |
| ContraGAN + CR | StudioGAN | 9.812 | 7.685 | 0.995 | 0.993 | Cfg | Log | Link |
| ContraGAN + ICR | StudioGAN | 10.117 | 7.547 | 0.996 | 0.993 | Cfg | Log | Link |
| ContraGAN + DiffAug | StudioGAN | 9.996 | 7.193 | 0.995 | 0.990 | Cfg | Log | Link |
| ContraGAN + ADA | StudioGAN | 9.411 | 10.830 | 0.990 | 0.964 | Cfg | Log | Link |

When evaluating, the statistics of batch normalization layers are calculated on the fly (statistics of a batch).

IS, FID, and F_beta values are computed using 10K test and 10K generated images.

CUDA_VISIBLE_DEVICES=0 python3 src/main.py -e -l -stat_otf -c CONFIG_PATH --checkpoint_folder CHECKPOINT_FOLDER --eval_type "test"

When training, we used the command below.

With 4 TITAN RTX GPUs, training BigGAN takes about 2 days.

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -t -e -l -stat_otf -c CONFIG_PATH --eval_type "valid"

| Method | Reference | IS(⭡) | FID(⭣) | F_1/8(⭡) | F_8(⭡) | Cfg | Log | Weights |
|---|---|---|---|---|---|---|---|---|
| DCGAN | StudioGAN | 5.640 | 91.625 | 0.606 | 0.391 | Cfg | Log | Link |
| LSGAN | StudioGAN | 5.381 | 90.008 | 0.638 | 0.390 | Cfg | Log | Link |
| GGAN | StudioGAN | 5.146 | 102.094 | 0.503 | 0.307 | Cfg | Log | Link |
| WGAN-WC | StudioGAN | 9.696 | 41.454 | 0.940 | 0.735 | Cfg | Log | Link |
| WGAN-GP | StudioGAN | 1.322 | 311.805 | 0.016 | 0.000 | Cfg | Log | Link |
| WGAN-DRA | StudioGAN | 9.564 | 40.655 | 0.938 | 0.724 | Cfg | Log | Link |
| ACGAN-Mod | StudioGAN | 6.342 | 78.513 | 0.668 | 0.518 | Cfg | Log | Link |
| ProjGAN | StudioGAN | 6.224 | 89.175 | 0.626 | 0.428 | Cfg | Log | Link |
| SNGAN | StudioGAN | 8.412 | 53.590 | 0.900 | 0.703 | Cfg | Log | Link |
| SAGAN | StudioGAN | 8.342 | 51.414 | 0.898 | 0.698 | Cfg | Log | Link |
| BigGAN-Mod | StudioGAN | 11.998 | 31.920 | 0.956 | 0.879 | Cfg | Log | Link |
| BigGAN-Mod + CR | StudioGAN | 14.887 | 21.488 | 0.969 | 0.936 | Cfg | Log | Link |
| BigGAN-Mod + ICR | StudioGAN | 5.605 | 91.326 | 0.525 | 0.399 | Cfg | Log | Link |
| BigGAN-Mod + DiffAug | StudioGAN | 17.075 | 16.338 | 0.979 | 0.971 | Cfg | Log | Link |
| BigGAN-Mod + ADA | StudioGAN | 15.158 | 24.121 | 0.953 | 0.942 | Cfg | Log | Link |
| BigGAN-Mod + LO | StudioGAN | 6.964 | 70.660 | 0.857 | 0.621 | Cfg | Log | Link |
| ContraGAN | StudioGAN | 13.494 | 27.027 | 0.975 | 0.902 | Cfg | Log | Link |
| ContraGAN + CR | StudioGAN | 15.623 | 19.716 | 0.983 | 0.941 | Cfg | Log | Link |
| ContraGAN + ICR | StudioGAN | 15.830 | 21.940 | 0.980 | 0.944 | Cfg | Log | Link |
| ContraGAN + DiffAug | StudioGAN | 17.303 | 15.755 | 0.984 | 0.962 | Cfg | Log | Link |
| ContraGAN + ADA | StudioGAN | 8.398 | 55.025 | 0.878 | 0.677 | Cfg | Log | Link |

When evaluating, the statistics of batch normalization layers are calculated on the fly (statistics of a batch).

IS, FID, and F_beta values are computed using 10K validation and 10K generated images.

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -e -l -stat_otf -c CONFIG_PATH --checkpoint_folder CHECKPOINT_FOLDER --eval_type "valid"

When training, we used the command below.

With 8 TESLA V100 GPUs, training BigGAN2048 takes about a month.

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -t -e -l -sync_bn -stat_otf -c CONFIG_PATH --eval_type "valid"

| Method | Reference | IS(⭡) | FID(⭣) | F_1/8(⭡) | F_8(⭡) | Cfg | Log | Weights |
|---|---|---|---|---|---|---|---|---|
| SNGAN | StudioGAN | 32.247 | 26.792 | 0.938 | 0.913 | Cfg | Log | Link |
| SAGAN | StudioGAN | 29.848 | 34.726 | 0.849 | 0.914 | Cfg | Log | Link |
| BigGAN | Paper | 98.8[4] | 8.7 | - | - | - | - | - |
| BigGAN | Paper | - | 21.072 | - | - | Cfg | - | - |
| BigGAN | StudioGAN | 28.633 | 24.684 | 0.941 | 0.921 | Cfg | Log | Link |
| BigGAN | StudioGAN | 99.705 | 7.893 | 0.985 | 0.989 | Cfg | Log | Link |
| ContraGAN | Paper | 31.101 | 19.693 | 0.951 | 0.927 | Cfg | Log | Link |
| ContraGAN | StudioGAN | 25.249 | 25.161 | 0.947 | 0.855 | Cfg | Log | Link |

When evaluating, the statistics of batch normalization layers are calculated in advance (moving average of the previous statistics).

IS, FID, and F_beta values are computed using 50K validation and 50K generated images.

CUDA_VISIBLE_DEVICES=0,...,N python3 src/main.py -e -l -sync_bn -c CONFIG_PATH --checkpoint_folder CHECKPOINT_FOLDER --eval_type "valid"

Exponential Moving Average: https://github.com/ajbrock/BigGAN-PyTorch

Synchronized BatchNorm: https://github.com/vacancy/Synchronized-BatchNorm-PyTorch

Self-Attention module: https://github.com/voletiv/self-attention-GAN-pytorch

Implementation Details: https://github.com/ajbrock/BigGAN-PyTorch

Architecture Details: https://github.com/google/compare_gan

DiffAugment: https://github.com/mit-han-lab/data-efficient-gans

Adaptive Discriminator Augmentation: https://github.com/rosinality/stylegan2-pytorch

TensorFlow IS: https://github.com/openai/improved-gan

TensorFlow FID: https://github.com/bioinf-jku/TTUR

PyTorch FID: https://github.com/mseitzer/pytorch-fid

TensorFlow Precision and Recall: https://github.com/msmsajjadi/precision-recall-distributions

torchlars: https://github.com/kakaobrain/torchlars

StudioGAN is established for the following research project. Please cite our work if you use StudioGAN.

    @inproceedings{kang2020ContraGAN,
      title = {{ContraGAN: Contrastive Learning for Conditional Image Generation}},
      author = {Minguk Kang and Jaesik Park},
      journal = {Conference on Neural Information Processing Systems (NeurIPS)},
      year = {2020}
    }

[1] Experiments on Tiny ImageNet are conducted using the ResNet architecture instead of CNN.

[2] Our re-implementation of ACGAN (ICML'17) with slight modifications, which bring a strong performance improvement for the experiment using CIFAR10.

[3] Our re-implementation of BigGAN/BigGAN-Deep (ICLR'19) with slight modifications, which bring a strong performance improvement for the experiment using CIFAR10.

[4] IS is computed using the official TensorFlow code.