Apache Liminal is an end-to-end platform for data engineers & scientists, allowing them to build, train and deploy machine learning models in a robust and agile way.

The platform provides the abstractions and declarative capabilities for data extraction & feature engineering followed by model training and serving. Liminal's goal is to operationalize the machine learning process, allowing data scientists to quickly transition from a successful experiment to an automated pipeline of model training, validation, deployment and inference in production, freeing them from engineering and non-functional tasks, and allowing them to focus on machine learning code and artifacts.

Using simple YAML configuration, create your own scheduled data pipelines (a sequence of tasks to perform), application servers, and more:

    name: MyPipeline
    owner: Bosco Albert Baracus
    pipelines:
      - pipeline: my_pipeline
        start_date: 1970-01-01
        timeout_minutes: 45
        schedule: 0 * 1 * *
        metrics:
          namespace: TestNamespace
          backends: [ 'cloudwatch' ]
        tasks:
          - task: my_static_input_task
            type: python
            description: static input task
            image: my_static_input_task_image
            source: helloworld
            env_vars:
              env1: "a"
              env2: "b"
            input_type: static
            input_path: '[ { "foo": "bar" }, { "foo": "baz" } ]'
            output_path: /output.json
            cmd: python -u hello_world.py
          - task: my_parallelized_static_input_task
            type: python
            description: parallelized static input task
            image: my_static_input_task_image
            env_vars:
              env1: "a"
              env2: "b"
            input_type: static
            input_path: '[ { "foo": "bar" }, { "foo": "baz" } ]'
            split_input: True
            executors: 2
            cmd: python -u helloworld.py
          - task: my_task_output_input_task
            type: python
            description: task with input from other task's output
            image: my_task_output_input_task_image
            source: helloworld
            env_vars:
              env1: "a"
              env2: "b"
            input_type: task
            input_path: my_static_input_task
            cmd: python -u hello_world.py
    services:
      - service:
        name: my_python_server
        type: python_server
        description: my python server
        image: my_server_image
        source: myserver
        endpoints:
          - endpoint: /myendpoint1
            module: myserver.my_server
            function: myendpoint1func

- Install this package

pip install liminal

- Optional: set LIMINAL_HOME to a path of your choice (if not set, it defaults to ~/liminal_home)

echo 'export LIMINAL_HOME=</path/to/some/folder>' >> ~/.bash_profile && source ~/.bash_profile

Authoring a pipeline involves, at minimum, creating a single file called liminal.yml, as in the example above.
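To make the split_input and executors fields of my_parallelized_static_input_task more concrete, here is a minimal sketch of one way a static JSON input list could be divided among executors. The round-robin strategy and the split_input helper are illustrative assumptions; this document does not describe how Liminal actually partitions input.

```python
import json


def split_input(input_path: str, executors: int) -> list[list]:
    """Divide a static JSON list into one chunk per executor (round-robin).

    Hypothetical helper: the partitioning strategy is an assumption,
    not Liminal's documented behavior.
    """
    if executors < 1:
        raise ValueError("executors must be at least 1")
    items = json.loads(input_path)
    # Executor i receives items i, i + executors, i + 2 * executors, ...
    return [items[i::executors] for i in range(executors)]


# Values copied from my_parallelized_static_input_task in the example above.
static_input = '[ { "foo": "bar" }, { "foo": "baz" } ]'
chunks = split_input(static_input, executors=2)
for index, chunk in enumerate(chunks):
    print(f"executor {index}: {json.dumps(chunk)}")
```

With two input items and two executors, each executor in this sketch receives exactly one item.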

If your pipeline requires custom Python code to implement tasks, that code should be organized like this

If your pipeline introduces imports of external packages which are not already a part of the liminal framework (i.e. you had to pip install them yourself), you need to also provide a requirements.txt in the root of your project.
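As a rough illustration of what a custom task script such as hello_world.py might contain, the sketch below reads the env1 and env2 values from the example configuration and writes a JSON result. It assumes that env_vars entries reach the task as ordinary process environment variables and that the task is responsible for writing its own output file; neither is confirmed by this document, and run_task is a hypothetical name.

```python
import json
import os
import tempfile


def run_task(output_path: str) -> dict:
    """Collect the task's environment variables and write them as JSON."""
    # env1 and env2 mirror the env_vars block in the example liminal.yml;
    # that they arrive as process environment variables is an assumption.
    result = {
        "env1": os.environ.get("env1", "<unset>"),
        "env2": os.environ.get("env2", "<unset>"),
    }
    with open(output_path, "w", encoding="utf-8") as handle:
        json.dump(result, handle)
    return result


if __name__ == "__main__":
    # A temp file stands in for the output_path (/output.json) in the example.
    target = os.path.join(tempfile.gettempdir(), "output.json")
    print(run_task(target))
```

This sketch uses only the standard library, so it would not need a requirements.txt entry.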

When your pipeline code is ready, you can test it by running it locally on your machine.

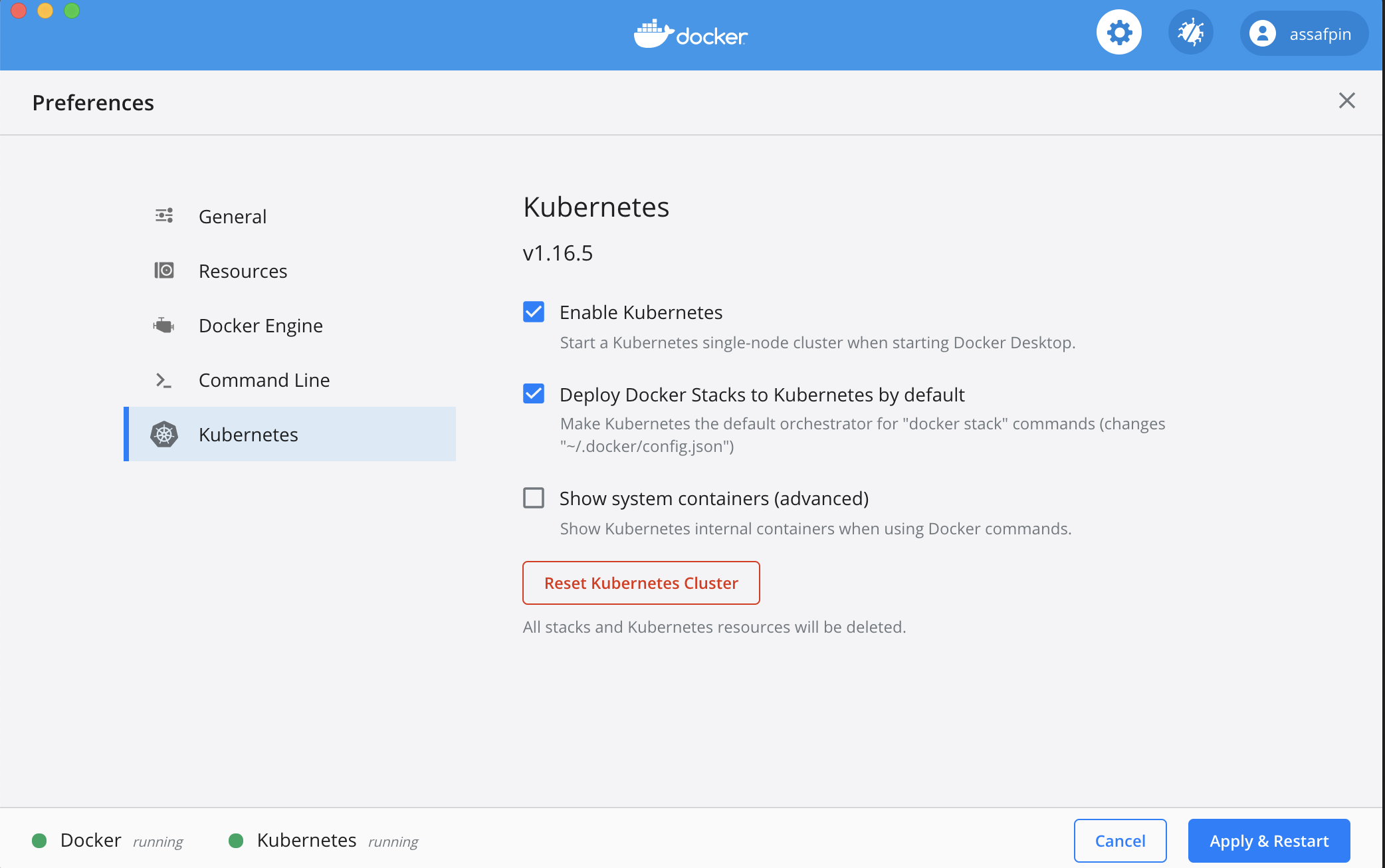

If you want to execute your pipeline on a remote Kubernetes cluster, make sure the cluster is configured using:

kubectl config set-context <your remote kubernetes cluster>

- Build the docker images used by your pipeline.

In the example pipeline above, you can see that tasks and services have an "image" field - such as "my_static_input_task_image". This means that the task is executed inside a docker container, and the docker container is created from a docker image where various code and libraries are installed.

You can take a look at what the build process looks like, e.g. here

In order for the images to be available for your pipeline, you'll need to build them locally:

cd </path/to/your/liminal/code>

liminal build

You'll see a number of outputs indicating the various docker images that were built.

- Deploy the pipeline:

cd </path/to/your/liminal/code>

liminal deploy

- Start the server

liminal start

- Navigate to http://localhost:8080/admin

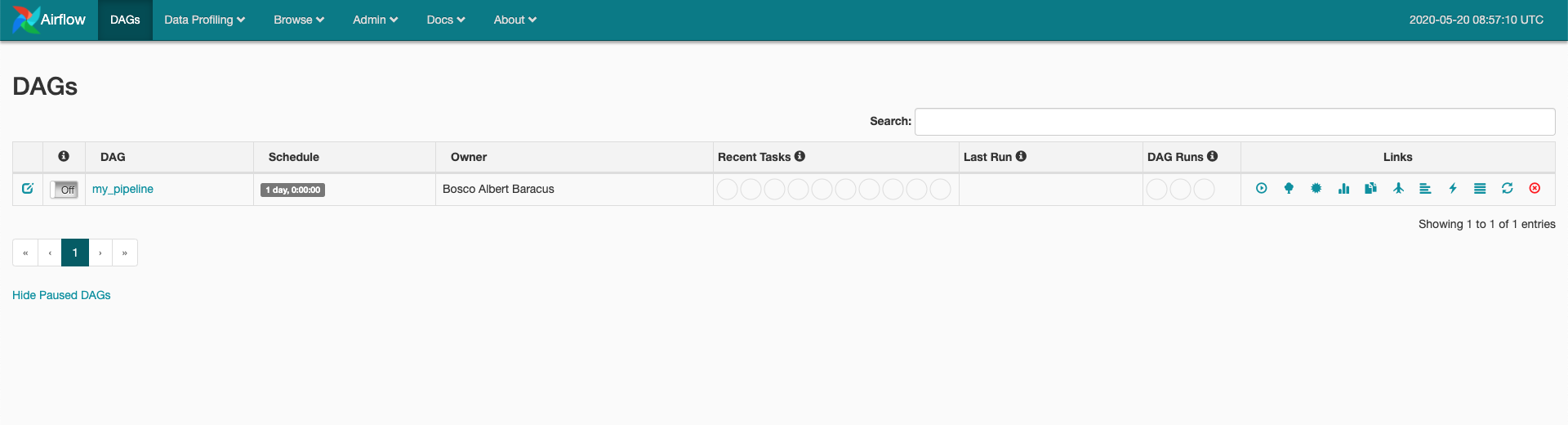

- You should see your pipeline.

The pipeline is scheduled to run according to the "schedule: 0 * 1 * *" field in the .yml file you provided.

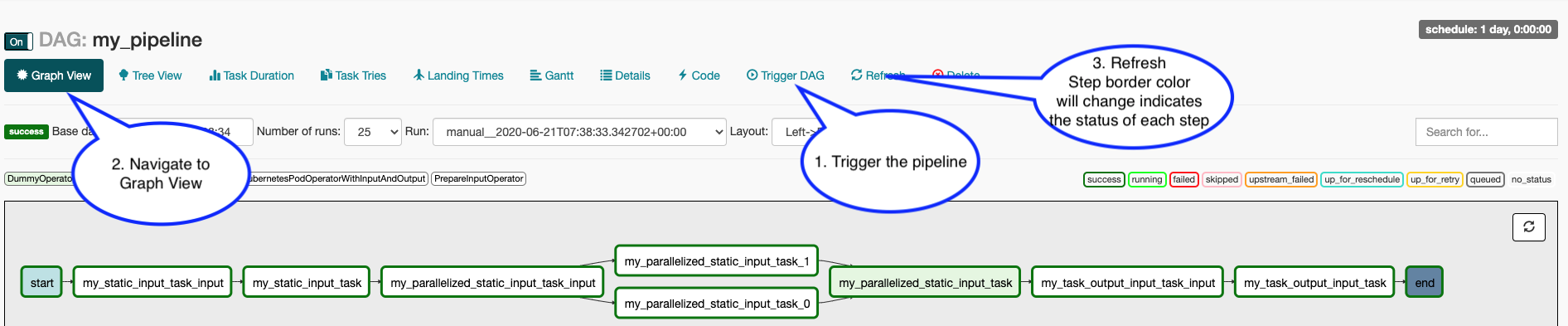

- To manually activate your pipeline:
  - Click your pipeline and then click "trigger DAG".
  - Click "Graph view".
  - You should see the steps in your pipeline being executed in "real time" by clicking "Refresh" periodically.
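The schedule value in the example, 0 * 1 * *, reads as a standard five-field cron expression: minute 0 of every hour on day 1 of every month. The sketch below only labels the five fields to make that reading explicit. It assumes the conventional cron field order and does not reflect how Liminal itself parses the value; describe_schedule is a hypothetical helper.

```python
CRON_FIELDS = ("minute", "hour", "day_of_month", "month", "day_of_week")


def describe_schedule(expression: str) -> dict[str, str]:
    """Map each part of a five-field cron expression to its field name."""
    parts = expression.split()
    if len(parts) != len(CRON_FIELDS):
        raise ValueError(f"expected 5 cron fields, got {len(parts)}")
    return dict(zip(CRON_FIELDS, parts))


# The schedule value from the example liminal.yml.
fields = describe_schedule("0 * 1 * *")
for name, value in fields.items():
    print(f"{name}: {value}")
```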

When doing local development and running Liminal unit-tests, make sure to set LIMINAL_STAND_ALONE_MODE=True
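One way to set the variable only for the duration of a test, without leaking it into the rest of the process, is to patch os.environ. This is a minimal sketch using the standard library; stand_alone_mode is a hypothetical helper, and how Liminal reads the variable is not covered here.

```python
import os
from unittest import mock


def stand_alone_mode():
    """Return a context manager that temporarily sets LIMINAL_STAND_ALONE_MODE=True."""
    return mock.patch.dict(os.environ, {"LIMINAL_STAND_ALONE_MODE": "True"})


with stand_alone_mode():
    # Code under test would run here and see the variable.
    print(os.environ["LIMINAL_STAND_ALONE_MODE"])
```

When the with block exits, mock.patch.dict restores the environment to its previous state.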