🔥🔥🔥 A Survey on Multimodal Large Language Models

Project Page | Paper

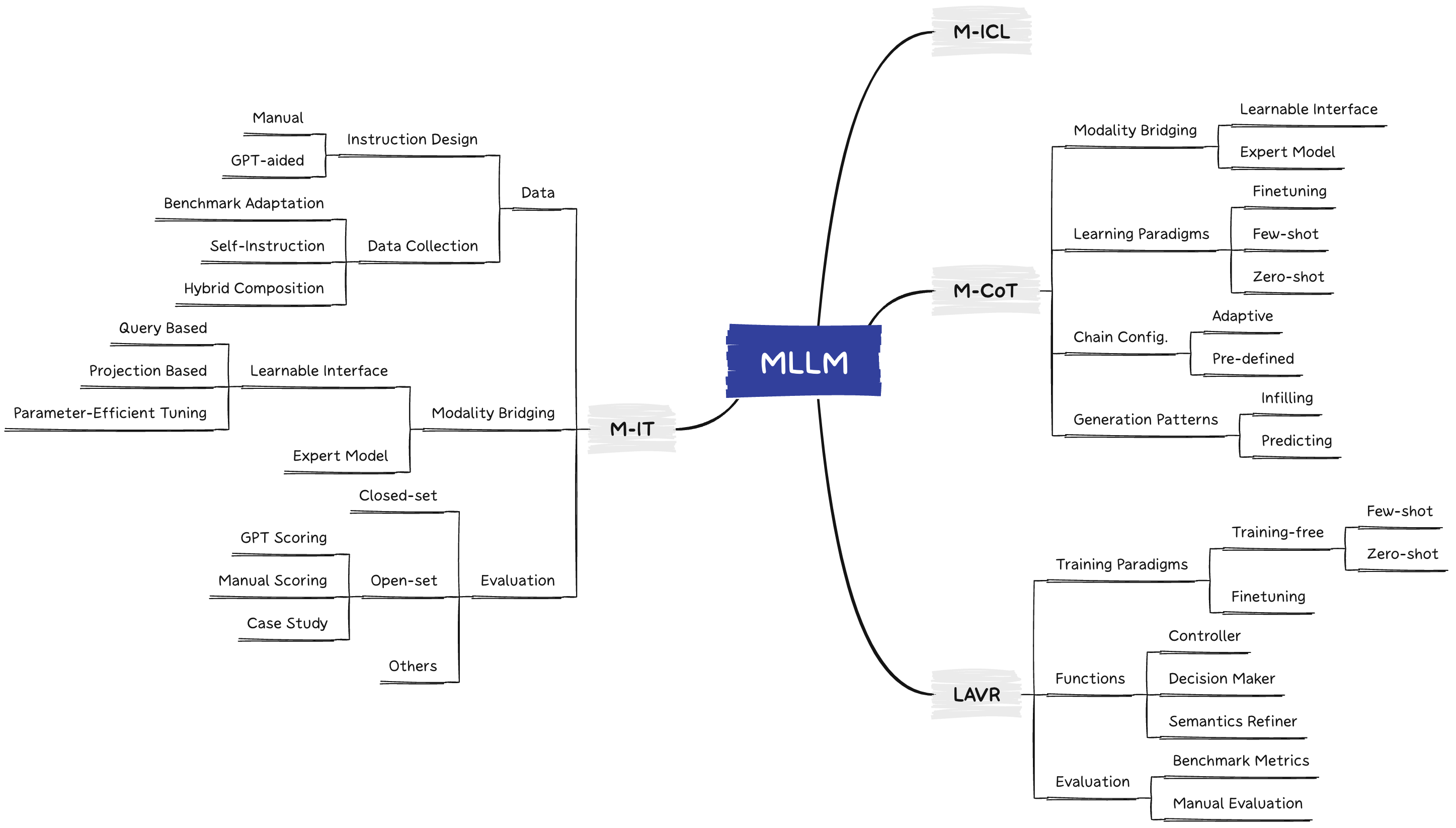

A curated list of Multimodal Large Language Models (MLLMs), including datasets, multimodal instruction tuning, multimodal in-context learning, multimodal chain-of-thought, LLM-aided visual reasoning, foundation models, and others. This list will be updated in real time. ✨

You are welcome to join our WeChat group for MLLM discussion!

Please add WeChat ID (wmd_rz_ustc) to join the group. 🌟

🔥🔥🔥 MME: A Comprehensive Evaluation Benchmark for Multimodal Large Language Models

Project Page [Leaderboards] | Paper

Please feel free to open an issue to add new evaluation results or to ask questions about the evaluation. We will update the leaderboards promptly. ✨

Download MME 🌟🌟

The benchmark dataset was collected by Xiamen University for academic research only. To obtain the dataset, email guilinli@stu.xmu.edu.cn, following the requirement below.

Requirement: Using your real name is encouraged for better academic communication. Your email suffix needs to match your affiliation, for example xx@stu.xmu.edu.cn for Xiamen University. Otherwise, you need to explain why. Please include the information below when sending your application email.

Name: (tell us who you are.)

Affiliation: (the name/url of your university or company)

Job Title: (e.g., professor, PhD, or researcher)

Email: (your email address)

How to use: (only for non-commercial use)

If you find our projects helpful to your research, please cite the following papers:

    @article{yin2023survey,
      title={A Survey on Multimodal Large Language Models},
      author={Yin, Shukang and Fu, Chaoyou and Zhao, Sirui and Li, Ke and Sun, Xing and Xu, Tong and Chen, Enhong},
      journal={arXiv preprint arXiv:2306.13549},
      year={2023}
    }

    @article{fu2023mme,
      title={MME: A Comprehensive Evaluation Benchmark for Multimodal Large Language Models},
      author={Fu, Chaoyou and Chen, Peixian and Shen, Yunhang and Qin, Yulei and Zhang, Mengdan and Lin, Xu and Qiu, Zhenyu and Lin, Wei and Yang, Jinrui and Zheng, Xiawu and Li, Ke and Sun, Xing and Ji, Rongrong},
      journal={arXiv preprint arXiv:2306.13394},
      year={2023}
    }

Table of Contents

| Title | Venue | Date | Code | Demo |
|---|---|---|---|---|
| MIMIC-IT: Multi-Modal In-Context Instruction Tuning | arXiv | 2023-06-08 | Github | Demo |
| Chameleon: Plug-and-Play Compositional Reasoning with Large Language Models | arXiv | 2023-04-19 | Github | Demo |
| HuggingGPT: Solving AI Tasks with ChatGPT and its Friends in HuggingFace | arXiv | 2023-03-30 | Github | Demo |
| MM-REACT: Prompting ChatGPT for Multimodal Reasoning and Action | arXiv | 2023-03-20 | Github | Demo |
| Prompting Large Language Models with Answer Heuristics for Knowledge-based Visual Question Answering | CVPR | 2023-03-03 | Github | - |
| Visual Programming: Compositional visual reasoning without training | CVPR | 2022-11-18 | Github | Local Demo |
| An Empirical Study of GPT-3 for Few-Shot Knowledge-Based VQA | AAAI | 2022-06-28 | Github | - |
| Flamingo: a Visual Language Model for Few-Shot Learning | NeurIPS | 2022-04-29 | Github | Demo |
| Multimodal Few-Shot Learning with Frozen Language Models | NeurIPS | 2021-06-25 | - | - |

| Title | Venue | Date | Code | Demo |
|---|---|---|---|---|
| Kosmos-2: Grounding Multimodal Large Language Models to the World | arXiv | 2023-06-26 | Github | - |
| Transfer Visual Prompt Generator across LLMs | arXiv | 2023-05-02 | Github | Demo |
| GPT-4 Technical Report | arXiv | 2023-03-15 | - | - |
| PaLM-E: An Embodied Multimodal Language Model | arXiv | 2023-03-06 | - | Demo |
| Prismer: A Vision-Language Model with An Ensemble of Experts | arXiv | 2023-03-04 | Github | Demo |
| Language Is Not All You Need: Aligning Perception with Language Models | arXiv | 2023-02-27 | Github | - |
| BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models | arXiv | 2023-01-30 | Github | Demo |
| VIMA: General Robot Manipulation with Multimodal Prompts | ICML | 2022-10-06 | Github | Local Demo |
| MineDojo: Building Open-Ended Embodied Agents with Internet-Scale Knowledge | NeurIPS | 2022-06-17 | Github | - |
| Language Models are General-Purpose Interfaces | arXiv | 2022-06-13 | Github | - |

| Title | Venue | Date | Page |
|---|---|---|---|
| MME: A Comprehensive Evaluation Benchmark for Multimodal Large Language Models | arXiv | 2023-06-23 | Github |
| LVLM-eHub: A Comprehensive Evaluation Benchmark for Large Vision-Language Models | arXiv | 2023-06-15 | Github |
| LAMM: Language-Assisted Multi-Modal Instruction-Tuning Dataset, Framework, and Benchmark | arXiv | 2023-06-11 | Github |
| M3Exam: A Multilingual, Multimodal, Multilevel Benchmark for Examining Large Language Models | arXiv | 2023-06-08 | Github |

| Title | Venue | Date | Code | Demo |
|---|---|---|---|---|
| Can Large Pre-trained Models Help Vision Models on Perception Tasks? | arXiv | 2023-06-01 | Coming soon | - |
| Contextual Object Detection with Multimodal Large Language Models | arXiv | 2023-05-29 | Github | Demo |
| Generating Images with Multimodal Language Models | arXiv | 2023-05-26 | Github | - |
| On Evaluating Adversarial Robustness of Large Vision-Language Models | arXiv | 2023-05-26 | Github | - |
| Evaluating Object Hallucination in Large Vision-Language Models | arXiv | 2023-05-17 | Github | - |
| Grounding Language Models to Images for Multimodal Inputs and Outputs | ICML | 2023-01-31 | Github | Demo |

| Name | Paper | Link | Notes |
|---|---|---|---|
| MIMIC-IT | MIMIC-IT: Multi-Modal In-Context Instruction Tuning | Coming soon | Multimodal in-context instruction dataset |

| Name | Paper | Link | Notes |
|---|---|---|---|
| EgoCOT | EmbodiedGPT: Vision-Language Pre-Training via Embodied Chain of Thought | Coming soon | Large-scale embodied planning dataset |
| VIP | Let’s Think Frame by Frame: Evaluating Video Chain of Thought with Video Infilling and Prediction | Coming soon | An inference-time dataset that can be used to evaluate VideoCOT |
| ScienceQA | Learn to Explain: Multimodal Reasoning via Thought Chains for Science Question Answering | Link | Large-scale multi-choice dataset, featuring multimodal science questions and diverse domains |

| Name | Paper | Link | Notes |
|---|---|---|---|
| MME | MME: A Comprehensive Evaluation Benchmark for Multimodal Large Language Models | Link | A comprehensive MLLM evaluation benchmark |
| LVLM-eHub | LVLM-eHub: A Comprehensive Evaluation Benchmark for Large Vision-Language Models | Link | An evaluation platform for MLLMs |
| LAMM-Benchmark | LAMM: Language-Assisted Multi-Modal Instruction-Tuning Dataset, Framework, and Benchmark | Link | A benchmark for evaluating the quantitative performance of MLLMs on various 2D/3D vision tasks |
| M3Exam | M3Exam: A Multilingual, Multimodal, Multilevel Benchmark for Examining Large Language Models | Link | A multilingual, multimodal, multilevel benchmark for evaluating MLLMs |
| OwlEval | mPLUG-Owl: Modularization Empowers Large Language Models with Multimodality | Link | Dataset for evaluation on multiple capabilities |

| Name | Paper | Link | Notes |
|---|---|---|---|
| IMAD | IMAD: IMage-Augmented multi-modal Dialogue | Link | Multimodal dialogue dataset |
| Video-ChatGPT | Video-ChatGPT: Towards Detailed Video Understanding via Large Vision and Language Models | Link | A quantitative evaluation framework for video-based dialogue models |
| CLEVR-ATVC | Accountable Textual-Visual Chat Learns to Reject Human Instructions in Image Re-creation | Link | A synthetic multimodal fine-tuning dataset for learning to reject instructions |
| Fruit-ATVC | Accountable Textual-Visual Chat Learns to Reject Human Instructions in Image Re-creation | Link | A manually pictured multimodal fine-tuning dataset for learning to reject instructions |
| InfoSeek | Can Pre-trained Vision and Language Models Answer Visual Information-Seeking Questions? | Coming soon | A VQA dataset that focuses on asking information-seeking questions |