We tackle the challenge of using machine learning models on iOS via Core ML and ML Kit (TensorFlow Lite).

- Machine Learning Framework for iOS

- Baseline Projects

- Application Projects

- Create ML Projects

- Performance

- See also

- Core ML

- ML Kit

- fritz

- etc. (TensorFlow Lite; TensorFlow Mobile, deprecated)

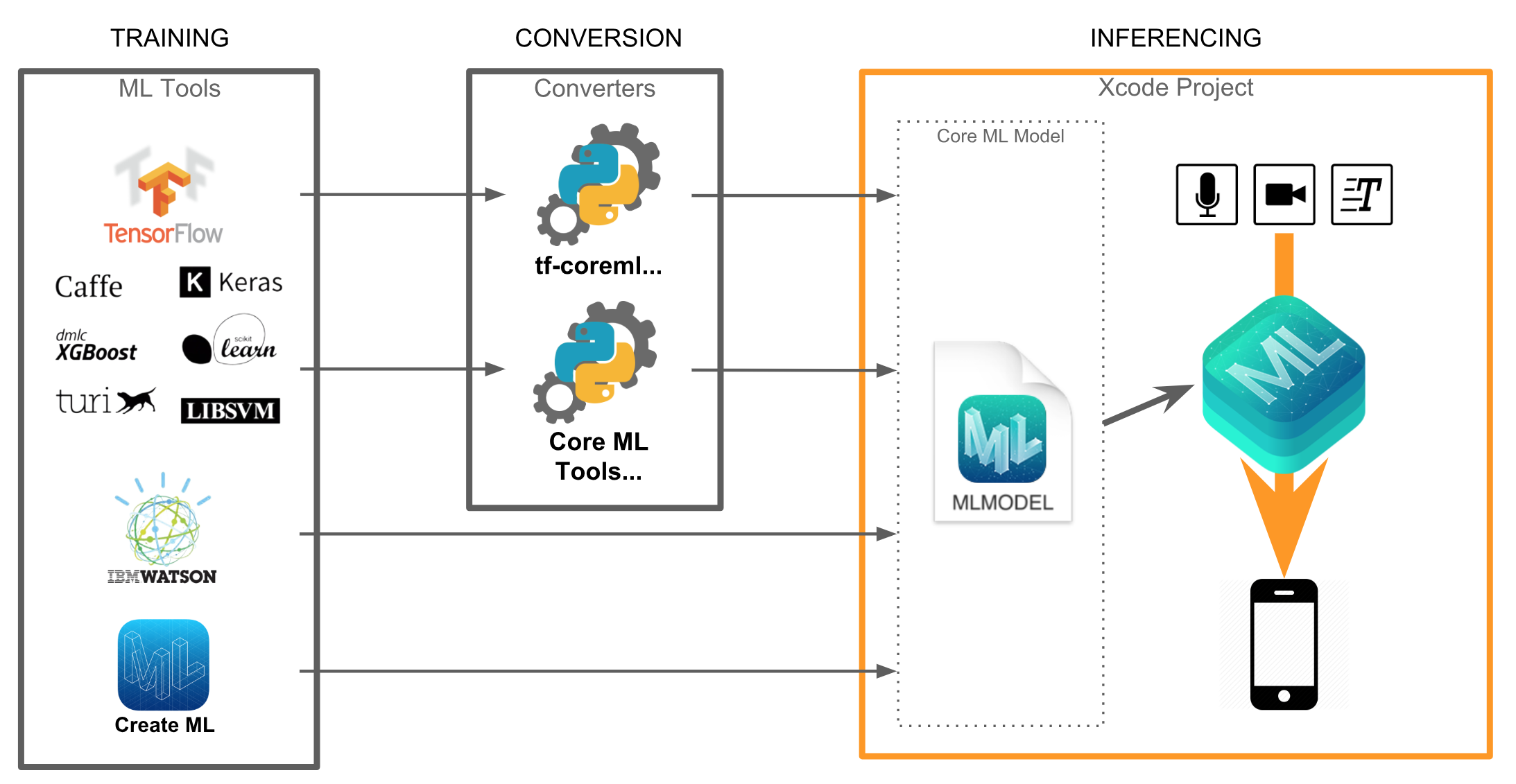

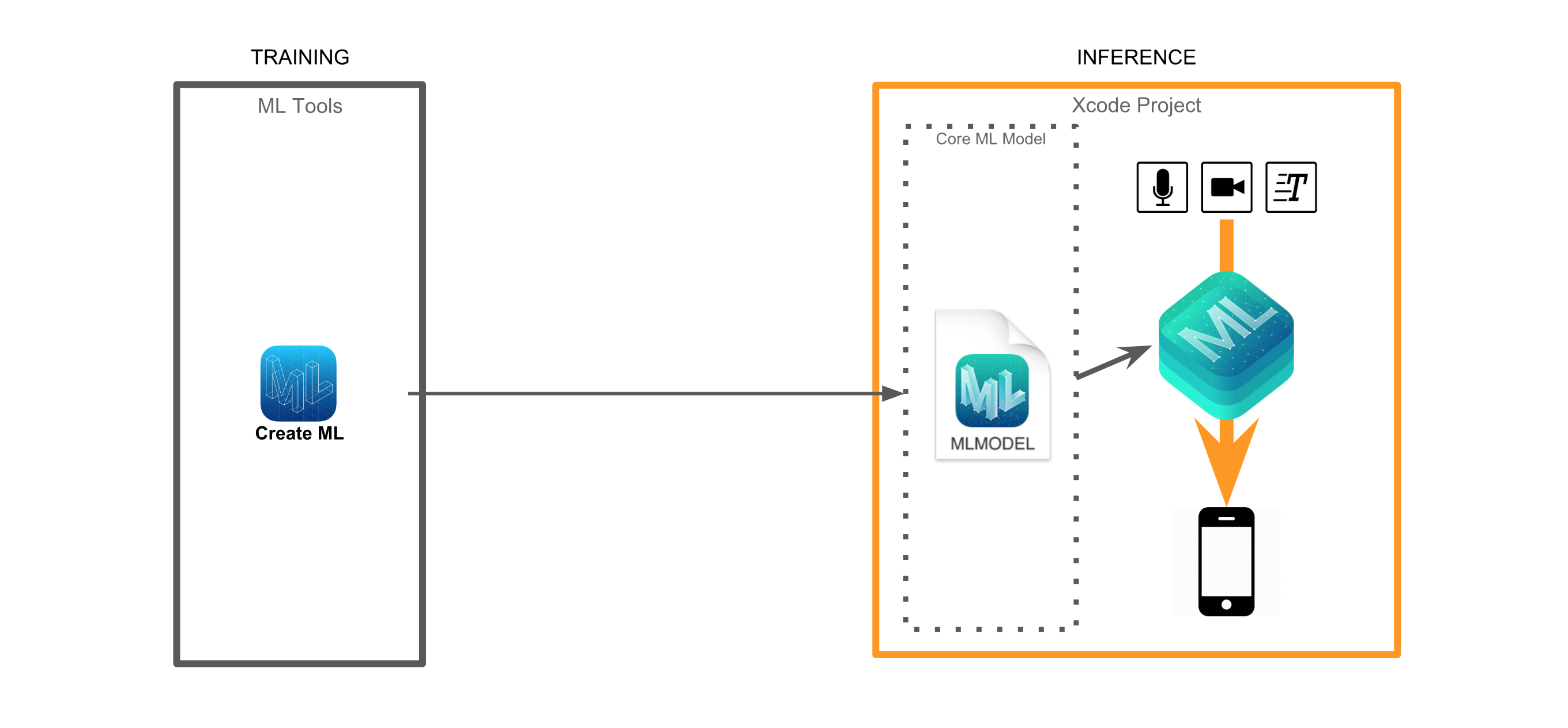

The overall flow is very similar across ML frameworks. Each framework has its own model format, so the model created in TensorFlow must be converted into the appropriate format for each mobile ML framework.

Once a compatible model is prepared, you can run inference with the ML framework. Note that you must implement preprocessing and postprocessing yourself.
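As a rough illustration of what manual postprocessing can look like for a classifier, here is a minimal sketch in plain Python. It is not taken from any of the demo projects: the `softmax` and `top_k` helpers, the labels, and the raw scores are all hypothetical, and an on-device version would be written in Swift against the framework's own output types.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_k(logits, labels, k=3):
    """Return the k most likely (label, probability) pairs, best first."""
    probs = softmax(logits)
    ranked = sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]

# Hypothetical raw output of a three-class model (assumed values).
labels = ["cat", "dog", "turtle"]
logits = [2.0, 0.5, 3.1]

for label, prob in top_k(logits, labels, k=2):
    print(f"{label}: {prob:.3f}")
```

Preprocessing is the mirror image of this step: resizing and normalizing the input so that it matches what the converted model expects.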

For a more detailed explanation, see this slide deck (in Korean).

- Using built-in model with Core ML

- Using built-in on-device model with ML Kit

- Using custom model for Vision with Core ML and ML Kit

- Object Detection with Core ML

- Object Detection with ML Kit

- Using built-in cloud model on ML Kit

- Landmark recognition

- Using custom model for NLP with Core ML and ML Kit

- Using custom model for Audio with Core ML and ML Kit

- Audio recognition

- Speech recognition

- TTS

| Name | DEMO | Note |
|---|---|---|
| MobileNet-CoreML | - | |
| MobileNet-MLKit | - | |

| Name | DEMO | Note |
|---|---|---|
| PoseEstimation-CoreML | - | |
| PoseEstimation-MLKit | - | |
| FingertipEstimation-CoreML | - | |

| Name | DEMO | Note |
|---|---|---|
| SSDMobileNet-CoreML | - | |
| TextDetection-CoreML | - | |
| TextRecognition-MLKit | - | |

| Name | DEMO | Note |
|---|---|---|
| dont-be-turtle-ios | - | |
| WordRecognition-CoreML-MLKit (preparing...) | | Detects characters, finds the word the user is pointing at, and then recognizes that word using Core ML and ML Kit. |

| Name | DEMO | Note |
|---|---|---|
| KeypointAnnotation | | Annotation tool for building your own custom estimation dataset |

| Name | Create ML DEMO | Core ML DEMO | Note |

|---|---|---|---|

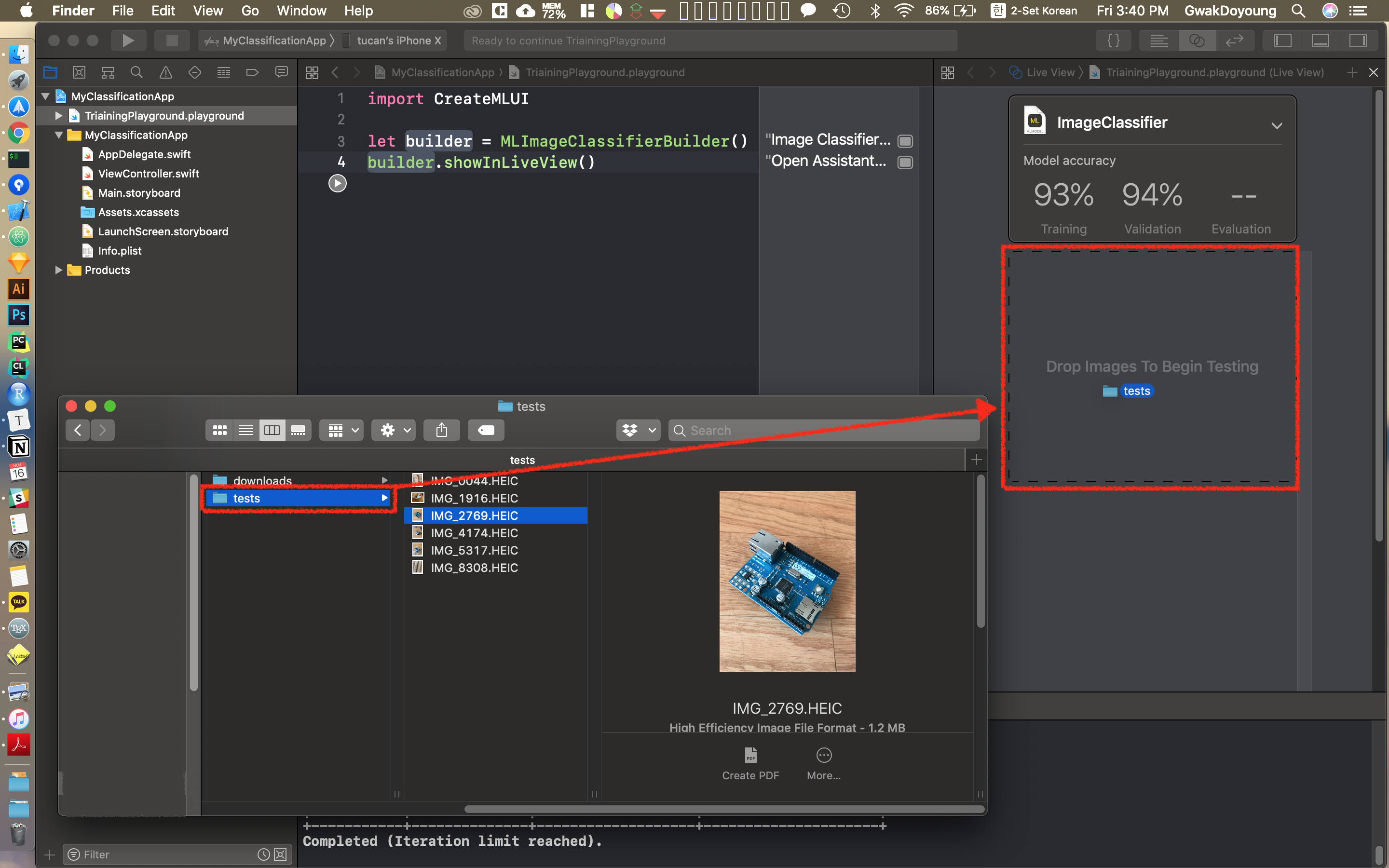

| SimpleClassification-CreateML-CoreML |  |

A Simple Classification Using Create ML and Core ML |

Execution Time: Inference Time + Postprocessing Time

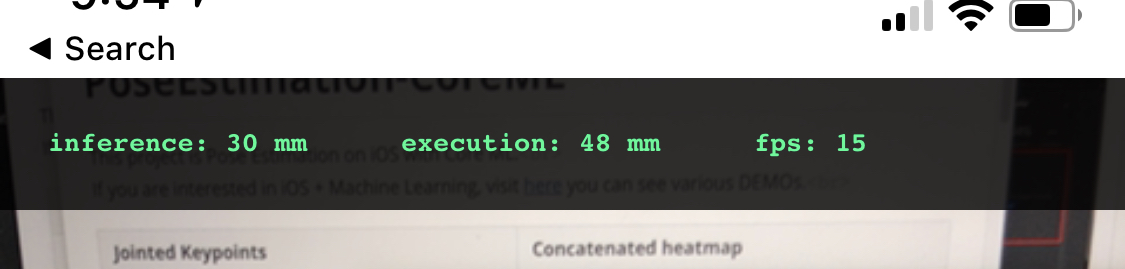

| (with iPhone X) | Inference Time (ms) | Execution Time (ms) | FPS |
|---|---|---|---|
| MobileNet-CoreML | 40 | 40 | 23 |
| MobileNet-MLKit | 120 | 130 | 6 |
| PoseEstimation-CoreML | 51 | 65 | 14 |
| PoseEstimation-MLKit | 200 | 217 | 3 |
| SSDMobileNet-CoreML | 100~120 | 110~130 | 5 |
| TextDetection-CoreML | 12 | 13 | 30 (max) |
| TextRecognition-MLKit | 35~200 | 40~200 | 5~20 |
| WordRecognition-CoreML-MLKit | 23 | 30 | 14 |

Each demo shows the measured inference time, execution time, and FPS at the top of the screen.

If you have a more elegant method for measuring performance, please suggest it in an issue!
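The definition above (Execution Time = Inference Time + Postprocessing Time) can be sketched as a small timing helper. This is only an illustration in plain Python, not the measurement code used in the demos: `measure`, `MovingAverage`, and the stand-in `infer` and `postprocess` functions are hypothetical names. FPS is left out on purpose, since it also depends on how quickly frames are delivered and drawn.

```python
import time

def measure(infer, postprocess, frame):
    """Run one frame and return (result, inference_ms, execution_ms)."""
    start = time.perf_counter()
    raw = infer(frame)
    after_inference = time.perf_counter()
    result = postprocess(raw)
    end = time.perf_counter()
    inference_ms = (after_inference - start) * 1000
    execution_ms = (end - start) * 1000  # inference + postprocessing
    return result, inference_ms, execution_ms

class MovingAverage:
    """Smooths noisy per-frame readings over the last `size` samples."""

    def __init__(self, size=30):
        self.size = size
        self.samples = []

    def add(self, value):
        """Record a sample and return the current average."""
        self.samples.append(value)
        if len(self.samples) > self.size:
            self.samples.pop(0)  # drop the oldest sample
        return sum(self.samples) / len(self.samples)

# Stand-in model and postprocessing steps (assumptions for the example).
def infer(frame):
    return [value * 2 for value in frame]

def postprocess(raw):
    return max(raw)

average_execution = MovingAverage(size=30)
result, inference_ms, execution_ms = measure(infer, postprocess, [1, 2, 3])
smoothed = average_execution.add(execution_ms)
print(f"inference {inference_ms:.3f} ms, execution {execution_ms:.3f} ms")
```

Averaging over a window of recent frames keeps the on-screen numbers readable, because single-frame timings tend to jump around.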

| | Measure📏 | Unit Test | Bunch Test |
|---|---|---|---|
| MobileNet-CoreML | O | X | X |
| MobileNet-MLKit | O | X | X |
| PoseEstimation-CoreML | O | O | X |
| PoseEstimation-MLKit | O | X | X |
| SSDMobileNet-CoreML | O | O | X |
| TextDetection-CoreML | O | X | X |
| TextRecognition-MLKit | O | X | X |