Tips on Kubernetes cluster management using the kubectl command. The goal of this repository is to let you look up kubectl commands in reverse, starting from what you want to do in Kubernetes cluster management.

- kubectl-tips

- Kubectl Version Manager

- Print Cluster Info

- Print the supported API resources

- Print the available API versions

- Display Resource (CPU/Memory) usage of nodes/pods

- Updating Kubernetes Deployments on a ConfigMap/Secrets Change

- Deploy and rollback app using kubectl

- Get all endpoints in the cluster

- Execute shell commands inside the cluster

- Access k8s API endpoint via local proxy

- Port forward a local port to a port on k8s resources

- Change the service type to LoadBalancer by patching

- Delete Kubernetes Resources

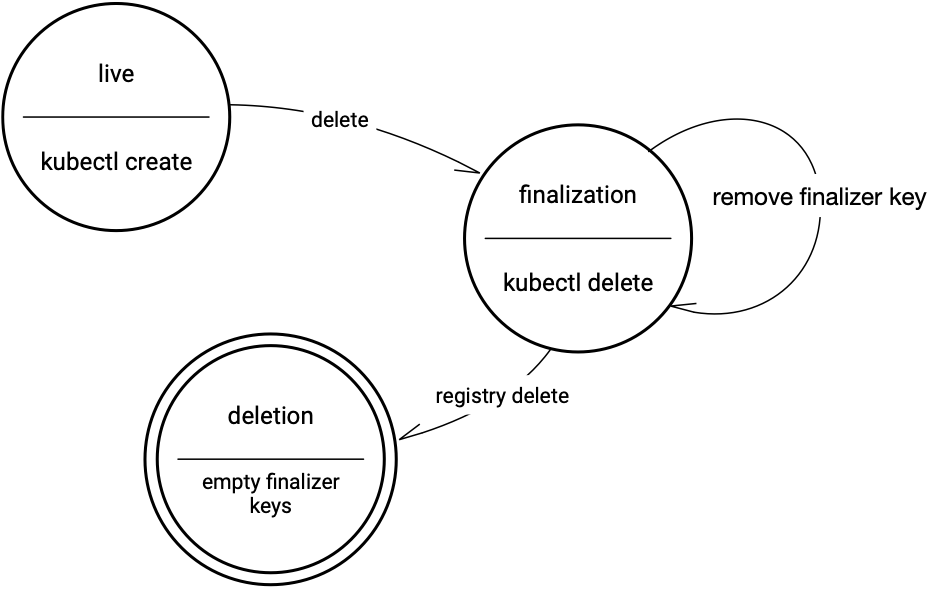

- Using finalizers to control deletion

- Delete a worker node in the cluster

- Evict all pods in a node for investigation

- Get Pods Logs

- Get Kubernetes events

- Get Kubernetes Raw Metrics - Prometheus metrics endpoint

- Get Kubernetes Raw Metrics - metrics API

You can manage multiple kubectl versions with asdf and asdf-kubectl.

Install asdf and asdf-kubectl

# Homebrew on macOS

# Install asdf

brew install asdf

# Add asdf.sh to your ~/.zshrc

echo -e "\n. $(brew --prefix asdf)/libexec/asdf.sh" >> ~/.zshrc

Install asdf-kubectl

# Install asdf-kubectl plugin

asdf plugin-add kubectl https://github.com/asdf-community/asdf-kubectl.git

# check installed plugin

asdf plugin list

# -> kubectl

Then, check the available versions

asdf list-all kubectl

Let's install 1.21.14

asdf install kubectl 1.21.14

# Check the installed kubectl version

asdf list kubectl

#-> *1.21.14

Finally, configure asdf to use kubectl version 1.21.14

asdf global kubectl 1.21.14

Let's see what the kubectl client version looks like

kubectl version --client

Client Version: version.Info{Major:"1", Minor:"21", GitVersion:"v1.21.14", GitCommit:"0f77da5bd4809927e15d1658fb4aa8f13ad890a5", GitTreeState:"clean", BuildDate:"2022-06-15T14:17:29Z", GoVersion:"go1.16.15", Compiler:"gc", Platform:"darwin/amd64"}

See also

- https://asdf-vm.com/

- https://asdf-vm.com/guide/getting-started.html

- https://github.com/asdf-community/asdf-kubectl

kubectl cluster-info

Kubernetes control plane is running at https://A8A4143BAA8FADD6BA355D6C2A12344.gr5.ap-northeast-1.eks.amazonaws.com

CoreDNS is running at https://A8A4143BAA8FADD6BA355D6C2A12345.gr7.ap-northeast-1.eks.amazonaws.com/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy

Metrics-server is running at https://A8A4143BAA8FADD6BA355D6C2A12345.gr7.ap-northeast-1.eks.amazonaws.com/api/v1/namespaces/kube-system/services/https:metrics-server:/proxy

kubectl api-resources

kubectl api-resources -o wide

sample output

| NAME | SHORTNAMES | APIGROUP | NAMESPACED | KIND |
|---|---|---|---|---|
| bindings | | | true | Binding |
| componentstatuses | cs | | false | ComponentStatus |
| configmaps | cm | | true | ConfigMap |
| endpoints | ep | | true | Endpoints |
| events | ev | | true | Event |
| limitranges | limits | | true | LimitRange |
| namespaces | ns | | false | Namespace |
| nodes | no | | false | Node |
| persistentvolumeclaims | pvc | | true | PersistentVolumeClaim |
| persistentvolumes | pv | | false | PersistentVolume |
| pods | po | | true | Pod |
| podtemplates | | | true | PodTemplate |
| replicationcontrollers | rc | | true | ReplicationController |
| resourcequotas | quota | | true | ResourceQuota |
| secrets | | | true | Secret |
| serviceaccounts | sa | | true | ServiceAccount |
| services | svc | | true | Service |
| mutatingwebhookconfigurations | | admissionregistration.k8s.io | false | MutatingWebhookConfiguration |
| validatingwebhookconfigurations | | admissionregistration.k8s.io | false | ValidatingWebhookConfiguration |
| customresourcedefinitions | crd,crds | apiextensions.k8s.io | false | CustomResourceDefinition |
| apiservices | | apiregistration.k8s.io | false | APIService |
| controllerrevisions | | apps | true | ControllerRevision |
| daemonsets | ds | apps | true | DaemonSet |
| deployments | deploy | apps | true | Deployment |
| replicasets | rs | apps | true | ReplicaSet |
| statefulsets | sts | apps | true | StatefulSet |
| applications | app,apps | argoproj.io | true | Application |
| appprojects | appproj,appprojs | argoproj.io | true | AppProject |
| tokenreviews | | authentication.k8s.io | false | TokenReview |
| localsubjectaccessreviews | | authorization.k8s.io | true | LocalSubjectAccessReview |
| selfsubjectaccessreviews | | authorization.k8s.io | false | SelfSubjectAccessReview |
| selfsubjectrulesreviews | | authorization.k8s.io | false | SelfSubjectRulesReview |
| subjectaccessreviews | | authorization.k8s.io | false | SubjectAccessReview |
| horizontalpodautoscalers | hpa | autoscaling | true | HorizontalPodAutoscaler |
| cronjobs | cj | batch | true | CronJob |
| jobs | | batch | true | Job |
| certificatesigningrequests | csr | certificates.k8s.io | false | CertificateSigningRequest |
| leases | | coordination.k8s.io | true | Lease |
| eniconfigs | | crd.k8s.amazonaws.com | false | ENIConfig |
| events | ev | events.k8s.io | true | Event |
| daemonsets | ds | extensions | true | DaemonSet |
| deployments | deploy | extensions | true | Deployment |
| ingresses | ing | extensions | true | Ingress |
| networkpolicies | netpol | extensions | true | NetworkPolicy |
| podsecuritypolicies | psp | extensions | false | PodSecurityPolicy |
| replicasets | rs | extensions | true | ReplicaSet |
| networkpolicies | netpol | networking.k8s.io | true | NetworkPolicy |
| poddisruptionbudgets | pdb | policy | true | PodDisruptionBudget |
| podsecuritypolicies | psp | policy | false | PodSecurityPolicy |
| clusterrolebindings | | rbac.authorization.k8s.io | false | ClusterRoleBinding |
| clusterroles | | rbac.authorization.k8s.io | false | ClusterRole |
| rolebindings | | rbac.authorization.k8s.io | true | RoleBinding |
| roles | | rbac.authorization.k8s.io | true | Role |
| priorityclasses | pc | scheduling.k8s.io | false | PriorityClass |
| storageclasses | sc | storage.k8s.io | false | StorageClass |
| volumeattachments | | storage.k8s.io | false | VolumeAttachment |
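The resource list printed by kubectl api-resources can also drive other commands. The following is a minimal sketch, not part of the original tips, that lists every namespaced resource in one namespace. The function name list_namespace_resources is hypothetical, and the sketch assumes your account is allowed to list each resource type.

```shell
# Hypothetical helper: list every listable, namespaced resource in a namespace.
list_namespace_resources() {
  ns="$1"
  # "-o name" prints one resource type per line, e.g. "deployments.apps".
  kubectl api-resources --verbs=list --namespaced=true -o name |
    while read -r resource; do
      # --ignore-not-found keeps the output quiet for empty resource types.
      kubectl get "$resource" -n "$ns" --ignore-not-found --show-kind
    done
}

# Usage (needs access to a cluster):
#   list_namespace_resources kube-system
```

This is slower than kubectl get all, but it also covers resource types such as ConfigMaps and custom resources.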

kubectl get apiservices

sample output

NAME SERVICE AVAILABLE AGE

v1. Local True 97d

v1.apps Local True 97d

v1.authentication.k8s.io Local True 97d

v1.authorization.k8s.io Local True 97d

v1.autoscaling Local True 97d

v1.batch Local True 97d

v1.networking.k8s.io Local True 97d

v1.rbac.authorization.k8s.io Local True 97d

v1.storage.k8s.io Local True 97d

v1alpha1.argoproj.io Local True 4d

v1alpha1.crd.k8s.amazonaws.com Local True 6d

v1beta1.admissionregistration.k8s.io Local True 97d

v1beta1.apiextensions.k8s.io Local True 97d

v1beta1.apps Local True 97d

v1beta1.authentication.k8s.io Local True 97d

v1beta1.authorization.k8s.io Local True 97d

v1beta1.batch Local True 97d

v1beta1.certificates.k8s.io Local True 97d

v1beta1.coordination.k8s.io Local True 97d

v1beta1.events.k8s.io Local True 97d

v1beta1.extensions Local True 97d

v1beta1.policy Local True 97d

v1beta1.rbac.authorization.k8s.io Local True 97d

v1beta1.scheduling.k8s.io Local True 97d

v1beta1.storage.k8s.io Local True 97d

v1beta2.apps Local True 97d

v2beta1.autoscaling Local True 97d

v2beta2.autoscaling Local True 97d
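When the list is long, you may only care about API services that are not available. A small sketch under the assumption that the third column is AVAILABLE, as in the sample output above; the function name unavailable_apiservices is hypothetical.

```shell
# Hypothetical helper: reads `kubectl get apiservices --no-headers` output on
# stdin and prints the names of API services whose AVAILABLE column is not "True".
unavailable_apiservices() {
  awk '$3 != "True" { print $1 }'
}

# Usage (needs access to a cluster):
#   kubectl get apiservices --no-headers | unavailable_apiservices
```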

Display Resource (CPU/Memory) usage of nodes

kubectl top node

sample output

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

aks-node-28537427-0 281m 1% 10989Mi 39%

aks-node-28537427-1 123m 0% 6795Mi 24%

aks-node-28537427-2 234m 1% 7963Mi 28%

Display Resource (CPU/Memory) usage of pods

kubectl top po -A

kubectl top po --all-namespaces

sample output

NAMESPACE NAME CPU(cores) MEMORY(bytes)

dd-agent dd-agent-2nffb 55m 235Mi

dd-agent dd-agent-kkxsq 26m 208Mi

dd-agent dd-agent-srnlt 29m 210Mi

kube-system azure-cni-networkmonitor-5k7ws 1m 22Mi

kube-system azure-cni-networkmonitor-72sxx 1m 20Mi

kube-system azure-cni-networkmonitor-wxqvm 1m 22Mi

kube-system azure-ip-masq-agent-gft8h 1m 11Mi

kube-system azure-ip-masq-agent-tc8jc 1m 10Mi

kube-system azure-ip-masq-agent-v54pm 1m 11Mi

kube-system coredns-6cb457974f-kth9q 4m 25Mi

kube-system coredns-6cb457974f-m9lth 4m 24Mi

kube-system coredns-autoscaler-66cdbfb8fc-9kklp 1m 11Mi

kube-system kube-proxy-b4x7q 5m 47Mi

kube-system kube-proxy-gm8df 6m 49Mi

kube-system kube-proxy-vsgbs 5m 50Mi

kube-system kubernetes-dashboard-686c6f85dc-n5xgg 1m 19Mi

kube-system metrics-server-5b9794db67-5rs25 1m 18Mi

kube-system tunnelfront-f586b8b5c-lrfkm 68m 52Mi

custom-app-00-dev1 custom-app-deployment-5db9d949dd-fnrqd 2m 1563Mi

custom-app-00-dev2 custom-app-deployment-c67497d95-rtggx 2m 1577Mi

custom-app-00-dev3 custom-app-deployment-958c59798-6vvwj 2m 1581Mi

custom-app-00-dev4 custom-app-deployment-5c7797bc85-mlppp 2m 1585Mi

custom-app-00-dev5 custom-app-deployment-bfd4596dd-d5q76 2m 1603Mi

custom-app-00-dev6 custom-app-deployment-6f9f56ffc6-tpngg 2m 1600Mi

custom-app-00-dev7 custom-app-deployment-7c6896ff98-4pmln 2m 1573Mi
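To find the heaviest pods in output like the above, you can post-process it. This is a rough sketch that assumes every memory value is reported in Mi, as in the sample output; the function name top_memory_pods is hypothetical.

```shell
# Hypothetical helper: reads `kubectl top po -A --no-headers` output on stdin
# and prints the N pods with the highest memory usage (default: 5).
# Output format: "<memory in Mi> <namespace>/<pod>".
top_memory_pods() {
  n="${1:-5}"
  # Column 4 is MEMORY(bytes), e.g. "1563Mi"; strip the unit and sort numerically.
  awk '{ mem = $4; sub(/Mi$/, "", mem); print mem, $1 "/" $2 }' |
    sort -rn |
    head -n "$n"
}

# Usage (needs access to a cluster with metrics-server):
#   kubectl top po -A --no-headers | top_memory_pods 3
```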

kubectl v1.15+ provides a rollout restart command that restarts the Pods in a Deployment so that they pick up changes to a referenced ConfigMap, Secret, or similar.

kubectl rollout restart deploy/<deployment-name>

kubectl rollout restart deploy <deployment-name>

kubectl run nginx --image nginx

kubectl run nginx --image=nginx --port=80 --restart=Never

kubectl expose deployment nginx --external-ip="10.0.47.10" --port=8000 --target-port=80

kubectl scale --replicas=3 deployment nginx

kubectl set image deployment nginx nginx=nginx:1.8

kubectl rollout status deploy nginx

kubectl set image deployment nginx nginx=nginx:1.9

kubectl rollout status deploy nginx

kubectl rollout history deploy nginx

Let's check the rollout history

kubectl rollout history deploy nginx

deployment.extensions/nginx

REVISION CHANGE-CAUSE

1 <none>

2 <none>

3 <none>

You can undo the rollout of nginx deploy like this:

kubectl rollout undo deploy nginx

kubectl describe deploy nginx |grep Image

Image: nginx:1.8

More specifically, you can undo the rollout to a given revision with the --to-revision option

kubectl rollout undo deploy nginx --to-revision=1

kubectl describe deploy nginx |grep Image

Image: nginx

kubectl get endpoints [-A|-n <namespace>]

kubectl get ep [-A|-n <namespace>]

You can execute shell commands in a newly created Pod

kubectl run --generator=run-pod/v1 -it busybox --image=busybox --rm --restart=Never -- sh

If you want to run curl from a pod (the busybox image above doesn't contain curl)

kubectl run --generator=run-pod/v1 -it --rm busybox --image=radial/busyboxplus:curl --restart=Never -- sh

If you want to debug database connections from a pod

kubectl run mysql -it --rm --image=mysql -- mysql -h <host/ip> -P <port> -u <user> -p<password>

You can also execute shell commands in an existing Pod

kubectl exec -n <namespace> -it <pod-name> -- /bin/sh

kubectl exec -n <namespace> -it <pod-name> -c <container-name> -- /bin/sh

You can change the kind of resource you're creating with an option in kubectl run

| Kind | Option |
|---|---|
| deployment | none |
| pod | --restart=Never |
| job | --restart=OnFailure |
| cronjob | --schedule='0/5 * * * ?' (cron format) |

NOTE: In Kubernetes 1.18+, kubectl run has removed the previously deprecated flags not related to generator and pod creation. The kubectl run command now only creates Pods. To create objects other than Pods, see the specific kubectl create subcommand. So run kubectl create deployment in order to create a Deployment like this:

kubectl create deployment nginx --image=nginx

kubectl create deployment nginx --image=nginx --dry-run=client -o yaml

See also the kubectl cheat sheet.
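The dry-run form is handy for generating a manifest that you can review and edit before applying it. A minimal sketch, assuming kubectl 1.18+ where --dry-run=client is available; the function name generate_deployment_manifest and the output file name are hypothetical.

```shell
# Hypothetical helper: write a Deployment manifest to a file without
# creating anything in the cluster.
generate_deployment_manifest() {
  name="$1"
  image="$2"
  out="$3"
  kubectl create deployment "$name" --image="$image" --dry-run=client -o yaml > "$out"
}

# Usage:
#   generate_deployment_manifest nginx nginx nginx-deployment.yaml
#   kubectl apply -f nginx-deployment.yaml
```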

You can access the k8s API via a local proxy

# Start a local proxy

kubectl proxy

Starting to serve on 127.0.0.1:8001

Access k8s API resources via the local proxy

# Get pods list in namespace foo

curl http://localhost:8001/api/v1/namespaces/foo/pods

# Access pod's endpoint

curl http://localhost:8001/api/v1/namespaces/foo/pods/<pod-name>/proxy/<podendpoint>

curl http://localhost:8001/api/v1/namespaces/foo/pods/foo-86c498d84c-xbkn9/proxy/healthcheck

# kubectl port-forward -n <namespace> <resource> LocalPort:TargetPort

kubectl port-forward -n <namespace> redis-master-765d459796-258hz 7000:6379

kubectl port-forward -n <namespace> pods/redis-master-765d459796-258hz 7000:6379

kubectl port-forward -n <namespace> deployment/redis-master 7000:6379

kubectl port-forward -n <namespace> rs/redis-master 7000:6379

kubectl port-forward -n <namespace> svc/redis-master 7000:6379

See also this for more detail

# kubectl patch svc SERVICE_NAME -p '{"spec": {"type": "LoadBalancer"}}'

kubectl patch svc argocd-server -n argocd -p '{"spec": {"type": "LoadBalancer"}}'

# Delete resources that have the name=<label> label

kubectl delete svc,cm,secrets,deploy -l name=<label> -n <namespace>

# Delete all resources of the given types from a namespace

# --all deletes every object of that resource type instead of selecting objects by name or label.

kubectl delete svc,cm,secrets,deploy --all -n <namespace>

# Delete all resources in the "all" category (pods, services, deployments, and so on; not CRDs, ConfigMaps, or Secrets) from a namespace

kubectl delete all --all -n <namespace>

# Delete pods in namespace <namespace>

for pod in $(kubectl get po -n <namespace> --no-headers=true | cut -d ' ' -f 1); do
  kubectl delete pod "$pod" -n <namespace>
done

# Delete pods with --grace-period=0 and --force option

# Add --grace-period=0 to delete the pod as quickly as possible

# Add --force in case the pod stays in the Terminating state and cannot be deleted

kubectl delete pod <pod> -n <namespace> --grace-period=0 --force

You can delete a k8s object that is blocked by finalizers by patching it to remove them. Simply patch it on the command line to remove the finalizers, and the object will be deleted.

For example, to delete configmap/mymap:

kubectl patch configmap/mymap \
  --type json \
  --patch='[ { "op": "remove", "path": "/metadata/finalizers" } ]'

Read Using Finalizers to Control Deletion to understand how the object will be deleted by using finalizers
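Before removing finalizers, it is usually worth checking which ones are set, since each finalizer stands for cleanup work that some controller still expects to do. A minimal sketch; the function name show_finalizers is hypothetical, and the namespace defaults to default as an assumption.

```shell
# Hypothetical helper: print the finalizers currently set on an object.
# $1 is the resource (e.g. configmap/mymap), $2 is the namespace (default: "default").
show_finalizers() {
  kubectl get "$1" -n "${2:-default}" -o jsonpath='{.metadata.finalizers}'
}

# Usage (needs access to a cluster):
#   show_finalizers configmap/mymap <namespace>
```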

The point is to cordon the node first, then evict the pods on the node with drain.

kubectl get nodes # to get nodename to delete

kubectl cordon <node-name>

kubectl drain --ignore-daemonsets <node-name>

kubectl delete node <node-name>

[NOTE] Add --ignore-daemonsets if you want to ignore DaemonSets for eviction

The point is to cordon the node first, then evict the pods on the node with drain, and uncordon the node after the investigation.

kubectl get nodes # to get the name of the node to investigate

kubectl cordon <node-name>

kubectl drain --ignore-daemonsets <node-name>

Now that you have evicted all pods from the node, you can investigate the node. After the investigation, you can uncordon the node with the following command.

kubectl uncordon <node-name>

[NOTE] Add --ignore-daemonsets if you want to ignore DaemonSets for eviction
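The cordon and drain steps above can be wrapped so that a failed step stops the sequence. This is only a sketch of that idea; the function name drain_for_investigation is hypothetical, and it uses only the flags shown in this section.

```shell
# Hypothetical helper: cordon and drain a node, stopping at the first failure.
drain_for_investigation() {
  node="$1"
  # Mark the node unschedulable so no new pods land on it.
  kubectl cordon "$node" || return 1
  # Evict the pods; DaemonSet-managed pods are left in place.
  kubectl drain --ignore-daemonsets "$node" || return 1
  echo "Drained $node. Run 'kubectl uncordon $node' when the investigation is done."
}

# Usage (needs access to a cluster):
#   drain_for_investigation <node-name>
```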

You can get Kubernetes pods logs by running the following commands

kubectl logs <pod-name> -n <namespace>

# Add -f option if the logs should be streamed

kubectl logs <pod-name> -n <namespace> -f

kubectl logs <pod-name> -n <namespace> -f --tail 0

Or you can use 3rd party OSS tools. For example, stern lets you aggregate the logs of all pods and filter them with a regular expression, like stern <expression>

stern <keyword> -n <namespace>

You can get a Pod's events with the following command, which shows events at the end of the output for the pod (relatively recent events will appear).

kubectl describe pod <podname> -n <namespace>

# Get recent events in a specific namespace

kubectl get events -n <namespace>

kubectl get events -n kube-system

# Get recent events for all resources in the system

# Get events from all namespaces with either --all-namespaces or -A

kubectl get event --all-namespaces

kubectl get event -A

kubectl get event -A -o wide

# No Pod events

kubectl get events --field-selector involvedObject.kind!=Pod

# Events from a specific Pod

kubectl get events --field-selector involvedObject.kind=Pod,involvedObject.name=<podname>

# Events from a specific Node

kubectl get events --field-selector involvedObject.kind=Node,involvedObject.name=<nodename>

# Warning events (from all namespaces)

kubectl get events --field-selector type=Warning -A

# NOT normal events (from all namespaces)

kubectl get events --field-selector type!=Normal -A

Many Kubernetes components expose their metrics via the /metrics endpoint, including the API server, etcd, and many other add-ons. These metrics are in Prometheus format and can be defined and exposed using the Prometheus client libraries.

kubectl get --raw /metrics

sample output

APIServiceOpenAPIAggregationControllerQueue1_adds 282663

APIServiceOpenAPIAggregationControllerQueue1_depth 0

APIServiceOpenAPIAggregationControllerQueue1_longest_running_processor_microseconds 0

APIServiceOpenAPIAggregationControllerQueue1_queue_latency{quantile="0.5"} 63

APIServiceOpenAPIAggregationControllerQueue1_queue_latency{quantile="0.9"} 105

APIServiceOpenAPIAggregationControllerQueue1_queue_latency{quantile="0.99"} 126

APIServiceOpenAPIAggregationControllerQueue1_queue_latency_sum 1.4331448e+07

APIServiceOpenAPIAggregationControllerQueue1_queue_latency_count 282663

APIServiceOpenAPIAggregationControllerQueue1_retries 282861

APIServiceOpenAPIAggregationControllerQueue1_unfinished_work_seconds 0

APIServiceOpenAPIAggregationControllerQueue1_work_duration{quantile="0.5"} 59

APIServiceOpenAPIAggregationControllerQueue1_work_duration{quantile="0.9"} 98

APIServiceOpenAPIAggregationControllerQueue1_work_duration{quantile="0.99"} 2003

APIServiceOpenAPIAggregationControllerQueue1_work_duration_sum 2.1373689e+07

APIServiceOpenAPIAggregationControllerQueue1_work_duration_count 282663

...

workqueue_work_duration_seconds_bucket{name="non_structural_schema_condition_controller",le="1e-08"} 0

workqueue_work_duration_seconds_bucket{name="non_structural_schema_condition_controller",le="1e-07"} 0

workqueue_work_duration_seconds_bucket{name="non_structural_schema_condition_controller",le="1e-06"} 0

workqueue_work_duration_seconds_bucket{name="non_structural_schema_condition_controller",le="9.999999999999999e-06"} 459

workqueue_work_duration_seconds_bucket{name="non_structural_schema_condition_controller",le="9.999999999999999e-05"} 473

workqueue_work_duration_seconds_bucket{name="non_structural_schema_condition_controller",le="0.001"} 924

workqueue_work_duration_seconds_bucket{name="non_structural_schema_condition_controller",le="0.01"} 927

workqueue_work_duration_seconds_bucket{name="non_structural_schema_condition_controller",le="0.1"} 928

workqueue_work_duration_seconds_bucket{name="non_structural_schema_condition_controller",le="1"} 928

workqueue_work_duration_seconds_bucket{name="non_structural_schema_condition_controller",le="10"} 928

workqueue_work_duration_seconds_bucket{name="non_structural_schema_condition_controller",le="+Inf"} 928

workqueue_work_duration_seconds_sum{name="non_structural_schema_condition_controller"} 0.09712353499999991

...
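The raw output is long, so it helps to narrow it down to one metric family. A minimal sketch that filters the Prometheus text format by metric name prefix; the function name metrics_with_prefix is hypothetical.

```shell
# Hypothetical helper: reads Prometheus text-format metrics on stdin and prints
# only the samples whose metric name starts with the given prefix.
metrics_with_prefix() {
  # Lines starting with "#" are HELP/TYPE comments, not samples.
  # index(...) == 1 is a literal prefix match, so no regex escaping is needed.
  awk -v p="$1" '!/^#/ && index($0, p) == 1'
}

# Usage (needs access to a cluster):
#   kubectl get --raw /metrics | metrics_with_prefix workqueue_work_duration_seconds
```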

You can access the Metrics API with kubectl like this:

kubectl get --raw /apis/metrics.k8s.io/v1beta1/nodes

kubectl get --raw /apis/metrics.k8s.io/v1beta1/pods

kubectl get --raw /apis/metrics.k8s.io/v1beta1/nodes/<node-name>

kubectl get --raw /apis/metrics.k8s.io/v1beta1/namespaces/<namespace-name>/pods/<pod-name>

(ref: feiskyer/kubernetes-handbook)

The output is unformatted JSON, so it helps to pipe it through jq.

sample output - /apis/metrics.k8s.io/v1beta1/nodes

kubectl get --raw /apis/metrics.k8s.io/v1beta1/nodes | jq

{
  "kind": "NodeMetricsList",
  "apiVersion": "metrics.k8s.io/v1beta1",
  "metadata": {
    "selfLink": "/apis/metrics.k8s.io/v1beta1/nodes"
  },
  "items": [
    {
      "metadata": {
        "name": "ip-xxxxxxxxxx.ap-northeast-1.compute.internal",
        "selfLink": "/apis/metrics.k8s.io/v1beta1/nodes/ip-xxxxxxxxxx.ap-northeast-1.compute.internal",
        "creationTimestamp": "2020-05-24T01:29:05Z"
      },
      "timestamp": "2020-05-24T01:28:58Z",
      "window": "30s",
      "usage": {
        "cpu": "105698348n",
        "memory": "819184Ki"
      }
    },
    {
      "metadata": {
        "name": "ip-yyyyyyyyyy.ap-northeast-1.compute.internal",
        "selfLink": "/apis/metrics.k8s.io/v1beta1/nodes/ip-yyyyyyyyyy.ap-northeast-1.compute.internal",
        "creationTimestamp": "2020-05-24T01:29:05Z"
      },
      "timestamp": "2020-05-24T01:29:01Z",
      "window": "30s",
      "usage": {
        "cpu": "71606060n",
        "memory": "678944Ki"
      }
    }
  ]
}
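With jq you can also reduce the node metrics to one line per node. The sketch below assumes the CPU value always carries the n (nanocores) suffix, as in the sample output above, and converts it to millicores; the function name node_usage_summary is hypothetical.

```shell
# Hypothetical helper: reads a NodeMetricsList JSON document on stdin and prints
# "<node name><TAB><cpu in millicores>m<TAB><memory>" for each node.
node_usage_summary() {
  jq -r '.items[]
    | [ .metadata.name,
        ((.usage.cpu | rtrimstr("n") | tonumber / 1000000 | floor | tostring) + "m"),
        .usage.memory ]
    | @tsv'
}

# Usage (needs access to a cluster with metrics-server):
#   kubectl get --raw /apis/metrics.k8s.io/v1beta1/nodes | node_usage_summary
```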

sample output - /apis/metrics.k8s.io/v1beta1/namespaces/NAMESPACE/pods/PODNAME

kubectl get --raw /apis/metrics.k8s.io/v1beta1/namespaces/kube-system/pods/cluster-autoscaler-7d8d69668c-5rcmt | jq

{
  "kind": "PodMetrics",
  "apiVersion": "metrics.k8s.io/v1beta1",
  "metadata": {
    "name": "cluster-autoscaler-7d8d69668c-5rcmt",
    "namespace": "kube-system",
    "selfLink": "/apis/metrics.k8s.io/v1beta1/namespaces/kube-system/pods/cluster-autoscaler-7d8d69668c-5rcmt",
    "creationTimestamp": "2020-05-24T01:33:17Z"
  },
  "timestamp": "2020-05-24T01:32:58Z",
  "window": "30s",
  "containers": [
    {
      "name": "cluster-autoscaler",
      "usage": {
        "cpu": "1030416n",
        "memory": "27784Ki"
      }
    }
  ]
}