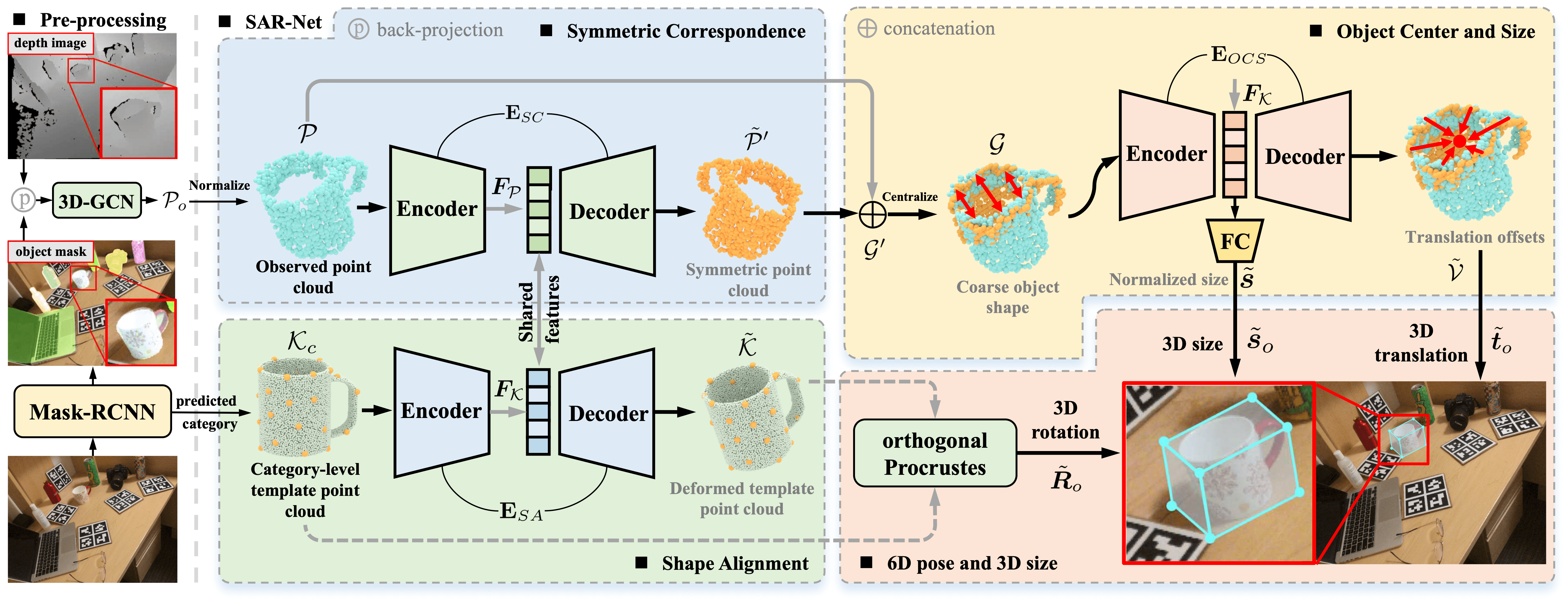

This repository contains the PyTorch implementation of the paper "SAR-Net: Shape Alignment and Recovery Network for Category-level 6D Object Pose and Size Estimation" [pdf] [supp] [arXiv]. Our approach recovers the 6-DoF pose and 3D size of category-level objects from a cropped depth image.

For more results and robotic demos, please refer to our Webpage.

- Python >= 3.6

- PyTorch >= 1.4.0

- CUDA >= 10.1
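The minimum versions listed above can be checked from Python before training. The sketch below is a hypothetical helper (`meets_minimum` and `check_environment` are not part of this repository); it assumes version strings follow the usual dotted form, optionally with a local suffix such as `+cu101`.

```python
import re
import sys


def meets_minimum(version, minimum):
    """Return True if a dotted version string is >= the minimum tuple.

    Hypothetical helper, not part of this repository. Suffixes such as
    '+cu101' or 'a0' are ignored; only the leading numeric fields count.
    """
    fields = []
    for part in version.split("+")[0].split("."):
        match = re.match(r"\d+", part)
        if match is None:
            break
        fields.append(int(match.group()))
    # Pad so that '1.4' compares equal to (1, 4, 0).
    fields += [0] * (len(minimum) - len(fields))
    return tuple(fields) >= tuple(minimum)


def check_environment():
    """Print whether Python, PyTorch and CUDA meet the listed minimums."""
    py = ".".join(str(n) for n in sys.version_info[:3])
    print("Python", py, "ok" if meets_minimum(py, (3, 6)) else "too old")
    try:
        import torch
    except ImportError:
        print("PyTorch is not installed")
        return
    print("PyTorch", torch.__version__,
          "ok" if meets_minimum(torch.__version__, (1, 4, 0)) else "too old")
    cuda = torch.version.cuda  # None for CPU-only builds
    if cuda is None:
        print("CUDA: CPU-only PyTorch build")
    else:
        print("CUDA", cuda, "ok" if meets_minimum(cuda, (10, 1)) else "too old")


if __name__ == "__main__":
    check_environment()
```

Note that `torch.version.cuda` reports the CUDA version PyTorch was built against, which may differ from the toolkit installed system-wide.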

    conda create -n sarnet python=3.6
    conda activate sarnet
    pip install -r requirements.txt

- Download [camera_train_processed] that we have preprocessed.

- Download [CAMERA/val], [Real/test], [gts], [obj_models] and [nocs_results] provided by NOCS.

- Download [mrcnn_mask_results] provided by DualPoseNet.

Unzip and organize these files in ./data/NOCS and ./results/NOCS as follows:

    data
    └── NOCS
        ├── camera_train_processed
        ├── template_FPS
        ├── CAMERA
        │   ├── val
        │   └── val_list.txt
        ├── Real
        │   ├── test
        │   └── test_list.txt
        ├── gts
        │   ├── cam_val
        │   └── real_test
        └── obj_models
            ├── val
            └── real_test
    results
    └── NOCS
        ├── mrcnn_mask_results
        │   ├── cam_val
        │   └── real_test
        └── nocs_results
            ├── val
            └── real_test
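Before running the preprocessing scripts, it can help to confirm that the layout above is in place. The following is a minimal sketch, not part of this repository: `EXPECTED_PATHS` and `missing_paths` are hypothetical names, and the path list is simply transcribed from the tree above.

```python
from pathlib import Path

# Relative paths transcribed from the directory tree above.
EXPECTED_PATHS = [
    "data/NOCS/camera_train_processed",
    "data/NOCS/template_FPS",
    "data/NOCS/CAMERA/val",
    "data/NOCS/CAMERA/val_list.txt",
    "data/NOCS/Real/test",
    "data/NOCS/Real/test_list.txt",
    "data/NOCS/gts/cam_val",
    "data/NOCS/gts/real_test",
    "data/NOCS/obj_models/val",
    "data/NOCS/obj_models/real_test",
    "results/NOCS/mrcnn_mask_results/cam_val",
    "results/NOCS/mrcnn_mask_results/real_test",
    "results/NOCS/nocs_results/val",
    "results/NOCS/nocs_results/real_test",
]


def missing_paths(root="."):
    """Return the expected paths that do not exist under root."""
    root = Path(root)
    return [p for p in EXPECTED_PATHS if not (root / p).exists()]


if __name__ == "__main__":
    missing = missing_paths()
    if missing:
        print("Missing entries:")
        for path in missing:
            print("  " + path)
    else:
        print("All expected files and folders are present.")
```

Run it from the repository root; any entry it prints still needs to be downloaded or moved into place.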

    python preprocess/shape_data.py
    python preprocess/pose_data.py
    python generate_json.py

Note that there is a small bug in the original NOCS evaluation code w.r.t. IoU. We fixed this bug in our evaluation code and re-evaluated our method. We also thank Peng et al. for further confirming this bug.
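For readers unfamiliar with the metric, the sketch below illustrates what a 3D IoU measures, using the simplified case of axis-aligned boxes. It is not the NOCS evaluation code, and it reproduces neither the bug nor the fix mentioned above; the function names and the `(min_xyz, max_xyz)` box representation are assumptions made for illustration only.

```python
def box_volume(box):
    """Volume of an axis-aligned box given as (min_xyz, max_xyz)."""
    lo, hi = box
    volume = 1.0
    for a, b in zip(lo, hi):
        # A negative extent means the box is empty along that axis.
        volume *= max(0.0, b - a)
    return volume


def iou_3d_axis_aligned(box_a, box_b):
    """IoU of two axis-aligned 3D boxes, each given as (min_xyz, max_xyz)."""
    # The intersection box spans the overlap of the two boxes on each axis.
    lo = tuple(max(a, b) for a, b in zip(box_a[0], box_b[0]))
    hi = tuple(min(a, b) for a, b in zip(box_a[1], box_b[1]))
    intersection = box_volume((lo, hi))
    union = box_volume(box_a) + box_volume(box_b) - intersection
    return intersection / union if union > 0 else 0.0


if __name__ == "__main__":
    unit = ((0.0, 0.0, 0.0), (1.0, 1.0, 1.0))
    shifted = ((0.5, 0.0, 0.0), (1.5, 1.0, 1.0))
    # Overlap volume is 0.5 and union volume is 1.5, so the IoU is 1/3.
    print(iou_3d_axis_aligned(unit, shifted))
```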

Set gpu_id in config_sarnet.py:

    # using a single GPU, e.g.
    gpu_id = '0'

    # using multiple GPUs, e.g.
    gpu_id = '0,1,2,3'
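How config_sarnet.py consumes gpu_id is not shown here. One common pattern, assumed in the sketch below, is to parse the comma-separated string and export it as `CUDA_VISIBLE_DEVICES` before CUDA is initialised. `parse_gpu_ids` and `select_gpus` are hypothetical helpers, not functions from this repository.

```python
import os


def parse_gpu_ids(gpu_id):
    """Turn a string such as '0,1,2,3' into a list of integer device ids."""
    ids = [int(tok) for tok in gpu_id.split(",") if tok.strip()]
    if len(set(ids)) != len(ids):
        raise ValueError("duplicate GPU id in %r" % gpu_id)
    return ids


def select_gpus(gpu_id):
    """Restrict CUDA to the listed devices; call before CUDA is initialised."""
    ids = parse_gpu_ids(gpu_id)
    os.environ["CUDA_VISIBLE_DEVICES"] = ",".join(str(i) for i in ids)
    return ids


if __name__ == "__main__":
    print(select_gpus("0,1,2,3"))
    print(os.environ["CUDA_VISIBLE_DEVICES"])
```

Once `CUDA_VISIBLE_DEVICES` is set, the selected devices are renumbered from 0 inside the process, so code that runs afterwards should index GPUs by their position in the list rather than by their physical id.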

python train_sarnet.py

python evaluate.py --config ./config_evaluate/nocs_real_mrcnn_mask.txt

We also provide the results reported in our paper for comparison.

If you find our work helpful, please consider citing:

    @InProceedings{Lin_2022_CVPR,
        author    = {Lin, Haitao and Liu, Zichang and Cheang, Chilam and Fu, Yanwei and Guo, Guodong and Xue, Xiangyang},
        title     = {SAR-Net: Shape Alignment and Recovery Network for Category-Level 6D Object Pose and Size Estimation},
        booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
        month     = {June},
        year      = {2022},
        pages     = {6707-6717}
    }

Our implementation leverages code from NOCS, SPD and 3DGCN. We thank the authors for their work.