DataPulse: Platform For Big Data & AI

Features

- Spark Application Deployment

- Jar Application Submission

- PySpark Application Submission

- Jupyter Notebook

- Customized Integration with PySpark

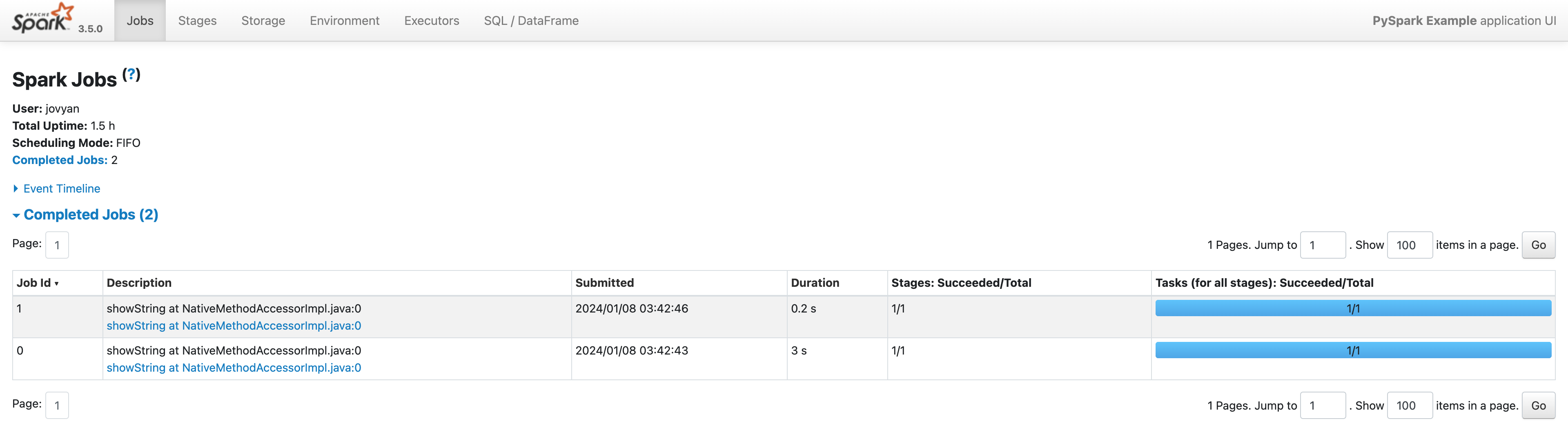

- Monitoring

- Spark UI

- History Server

Supported Versions

- Apache Spark: 3.5.0

- Scala: 2.12

- Python: 3.11

- GCS Connector: hadoop3-2.2.0

Prerequisites

- GCP account

  - Kubernetes Engine

  - Cloud Storage

- gcloud SDK

- kubectl

- helm

- docker

- python3
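Before starting the quickstart, it can help to confirm that the command-line tools listed above are on your `PATH`. The following is a minimal sketch, not part of DataPulse itself: the helper names `find_missing_tools` and `report` are hypothetical, and `gcloud` is assumed to be the executable that the gcloud SDK installs.

```python
import shutil

# Command names taken from the prerequisites list above.
# "gcloud" is assumed to be the executable provided by the gcloud SDK.
REQUIRED_TOOLS = ["gcloud", "kubectl", "helm", "docker", "python3"]


def find_missing_tools(tools=REQUIRED_TOOLS, which=shutil.which):
    """Return the tools from `tools` that cannot be found on PATH."""
    return [tool for tool in tools if which(tool) is None]


def report(missing):
    """Build a one-line, human-readable summary of the check."""
    if not missing:
        return "All required tools were found."
    return "Missing tools: " + ", ".join(missing)


if __name__ == "__main__":
    print(report(find_missing_tools()))
```

The check only verifies that each executable exists. It does not verify versions, GCP credentials, or that the Kubernetes Engine and Cloud Storage APIs are enabled for your project.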

Quickstart

Notebook

Step1: Setup Configuration

cp bin/env_template.yaml bin/env.yaml

Fill in the env.yaml file with your own configurations.
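To catch values that were left blank after copying the template, a small check like the one below may be useful. It is only a sketch under a strong assumption: that `bin/env.yaml` is a flat file of `key: value` lines. The actual layout of the file is not described here, the helper `unfilled_keys` is hypothetical, and a nested YAML file would need a real YAML parser instead.

```python
from pathlib import Path


def unfilled_keys(text):
    """Return keys whose value is empty in simple `key: value` lines.

    Assumes a flat file; parent keys of nested mappings would be
    reported as unfilled, so this is not a general YAML check.
    """
    missing = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].rstrip()  # drop trailing comments
        if not line.strip() or ":" not in line:
            continue
        key, value = line.split(":", 1)
        if value.strip() in ("", '""', "''"):
            missing.append(key.strip())
    return missing


if __name__ == "__main__":
    path = Path("bin/env.yaml")
    if path.exists():
        print("Unfilled keys:", unfilled_keys(path.read_text()))
    else:
        print("bin/env.yaml not found; copy the template first.")
```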

Step2: Create a Kubernetes cluster on GCP

source bin/setup.sh

Step3: Create a Jupyter Notebook

A notebook service will be created on the Kubernetes cluster.

Step4: Check Spark Integration

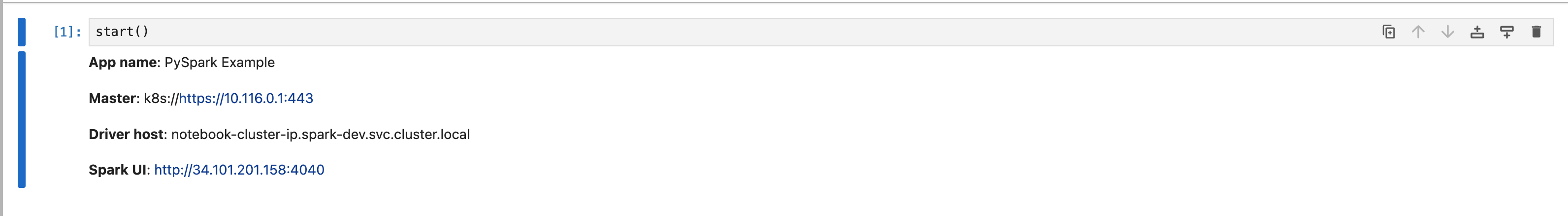

Check Spark information by running the following code in a notebook cell:

start()

Step5: Check Spark UI

Check Spark UI by clicking the link in the notebook cell output.
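If the link is not visible in the cell output, the Spark UI address can usually be read from the session itself. The sketch below assumes that a SparkSession is active in the notebook kernel after the previous step, which is not confirmed here. The function names are hypothetical, while `SparkSession.getActiveSession()`, `version`, `master`, `appName`, and `uiWebUrl` are standard PySpark attributes.

```python
def summarize_session(spark):
    """Collect a few facts about a SparkSession-like object."""
    context = spark.sparkContext
    return {
        "spark_version": spark.version,
        "master": context.master,
        "app_name": context.appName,
        "ui_url": context.uiWebUrl,
    }


def summarize_active_session():
    """Summarize the active session, or return None if there is none."""
    # Imported lazily so that this module loads even without PySpark installed.
    from pyspark.sql import SparkSession

    spark = SparkSession.getActiveSession()
    return None if spark is None else summarize_session(spark)
```

Calling `summarize_active_session()` in a notebook cell returns a dictionary whose `ui_url` entry is the address reported by the driver. Inside a Kubernetes cluster that address may not be reachable from your browser without port forwarding or an ingress.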

License

This project is licensed under the terms of the MIT license.