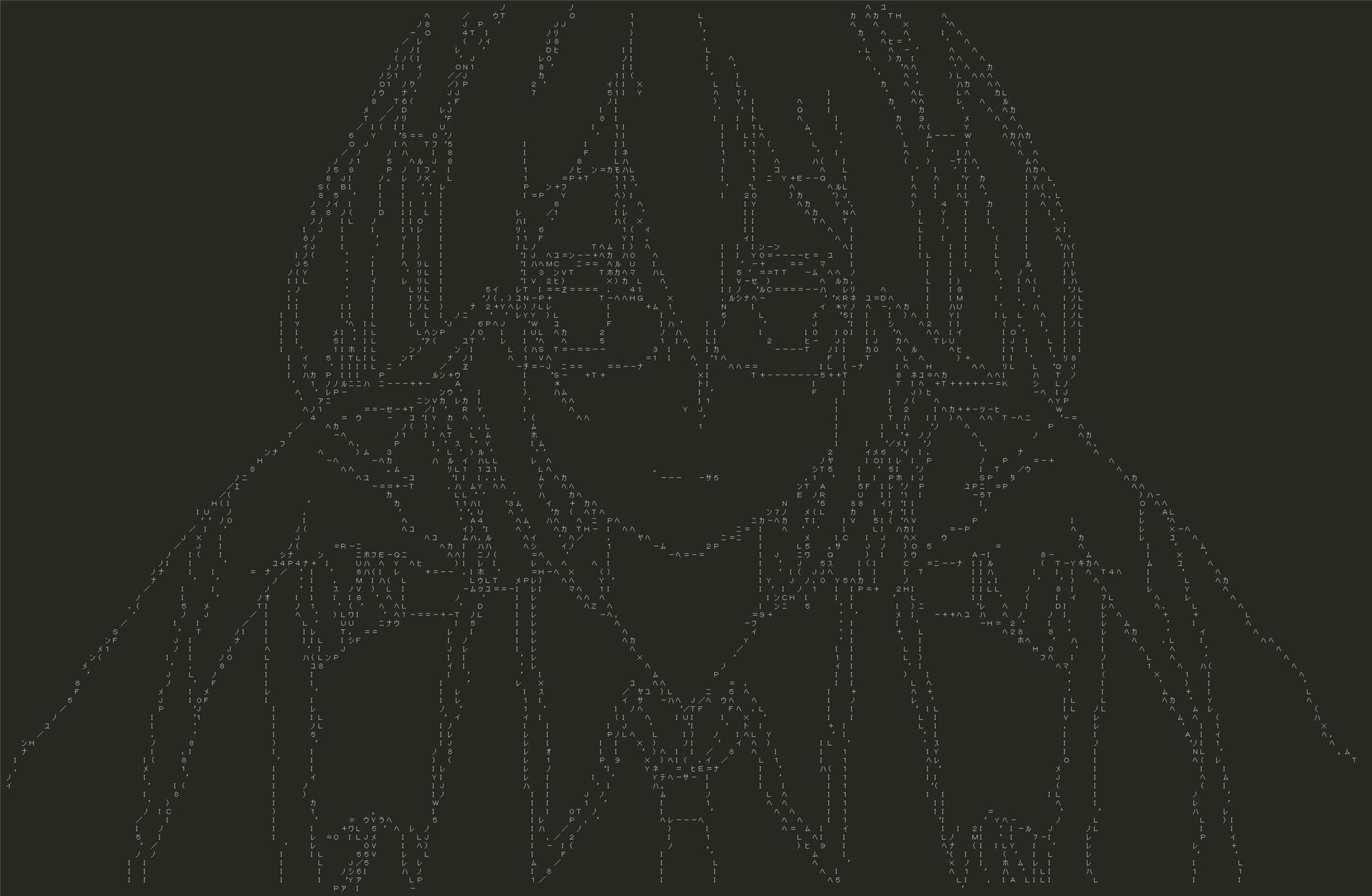

A deep ASCII art generator. Work done during the MSR Montreal hackathon 2018.

- Python 2/3

- Install PyTorch, following the official installation instructions.

- Install OpenCV2; `conda install opencv` should work.

- Ask for permission to use ETL (a Japanese handwritten character recognition dataset), then unzip it and put ETL1 or ETL6 inside `asciiko/`. We recommend ETL6, which contains more punctuation marks that may help when generating ASCII art.

The first run takes some time: asciiko preprocesses and parses the ETL datasets, then saves the useful parts into `.npy` files.
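Later runs are faster because the parsed data is read back from disk instead of being rebuilt. The cache-on-first-run pattern can be sketched as follows. This is not asciiko's actual code: `load_or_build`, the cache file name, and `build_fn` (a stand-in for the slow ETL parsing) are hypothetical.

```python
import os

import numpy as np


def load_or_build(cache_path, build_fn):
    """Return the array cached at cache_path, building and saving it on first use.

    build_fn is a hypothetical zero-argument callable doing the expensive work
    (in asciiko's case, parsing the raw ETL files). cache_path should end in
    ".npy", because np.save appends that suffix otherwise.
    """
    if os.path.exists(cache_path):
        # Later runs: load the preprocessed array straight from disk.
        return np.load(cache_path)
    arr = build_fn()
    # First run: persist the result so the parsing is not repeated.
    np.save(cache_path, arr)
    return arr
```

If the cached files get out of sync with the raw data, for example after you switch from ETL1 to ETL6, deleting them forces a rebuild.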

- In `config/config.yaml`, enable CUDA if you have access to it, and specify which split of the ETL data you are using.

- To train a model, run `python train.py -c config/`.

- To generate ASCII strings from an image, modify and run `python img2charid.py`; it will generate a `.json` file.

- To generate ASCII strings from a video, run `python utils/get_video_frames.py` to extract all frames of the video into a folder, then modify and run `python img2charid.py`; it will generate a `.json` file.

- To render the ASCII art, run `python char_classifier/renderer.py` with your `.json` file specified inside.

- A pretrained model (on ETL6) is provided in `saved_models/`.
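The image-to-text steps above (edge image → character ids → `.json` → rendered text) can be illustrated with a toy pipeline. This is a sketch under stated assumptions, not asciiko's implementation. Nearest-template matching stands in for the trained character classifier. The 2x2 glyphs, the `GLYPHS` table, the function names, and the JSON layout are all hypothetical.

```python
import json

import numpy as np

# Hypothetical 2x2 binary glyph templates, standing in for the ETL character
# classes that the trained classifier would predict.
GLYPHS = {
    " ": np.array([[0, 0], [0, 0]]),
    "|": np.array([[1, 0], [1, 0]]),
    "-": np.array([[1, 1], [0, 0]]),
    "/": np.array([[0, 1], [1, 0]]),
}
CHARS = list(GLYPHS)
CELL_H, CELL_W = GLYPHS[" "].shape


def image_to_char_ids(edges):
    """Split a binary edge map into cells; give each cell its closest glyph's id.

    Assumes the edge map's height and width are multiples of the cell size.
    """
    rows = []
    for y in range(0, edges.shape[0], CELL_H):
        row = []
        for x in range(0, edges.shape[1], CELL_W):
            cell = edges[y:y + CELL_H, x:x + CELL_W]
            # Squared L2 distance to every template; the smallest one wins.
            dists = [np.sum((cell - GLYPHS[c]) ** 2) for c in CHARS]
            row.append(int(np.argmin(dists)))
        rows.append(row)
    return rows


def char_ids_to_json(char_ids):
    """Serialize ids plus the id-to-character table (hypothetical layout)."""
    return json.dumps({"chars": CHARS, "ids": char_ids})


def render(json_str):
    """Turn the JSON back into a multi-line ASCII string."""
    data = json.loads(json_str)
    return "\n".join("".join(data["chars"][i] for i in row) for row in data["ids"])
```

For example, a 4x4 edge map produces a 2x2 grid of characters. Frames extracted from a video would go through the same function one at a time. The real classifier sees much larger, grayscale cells and chooses among the ETL character classes, but the tile-classify-serialize-render structure is the same idea.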

Image processing is an important step before generating ASCII art. The quality of the later steps relies heavily on how well the images are processed, i.e., how well the edges are extracted. To our knowledge there is no 'panacea' that works for all input images and videos, so depending on the specific image or video, you may need to tune your edge detector carefully. Thanks to OpenCV, many standard image processing tricks take a single line of Python. We provide code in `utils/` to help tweak your edge detector.
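To illustrate what tuning an edge detector means, here is a numpy-only sketch with a single threshold knob. It is a simplified stand-in, not the code in `utils/`. With OpenCV you would more likely call `cv2.Canny(image, threshold1, threshold2)`, which has two hysteresis thresholds to tune instead of one. The function name `edge_map` is hypothetical.

```python
import numpy as np


def edge_map(gray, threshold):
    """Return a binary edge map from the image's gradient magnitude.

    gray is a 2-D grayscale array; threshold is the per-image knob to tune.
    np.gradient uses central differences inside the image and one-sided
    differences at the borders.
    """
    gy, gx = np.gradient(gray.astype(float))  # axis 0 is y (rows), axis 1 is x
    magnitude = np.hypot(gx, gy)
    return (magnitude > threshold).astype(np.uint8)
```

Lowering the threshold keeps weaker edges, which adds texture but also noise. Raising it leaves only strong contours. Sweeping a few values and inspecting the resulting edge maps is usually quicker than guessing.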

- Example outputs corresponding to the DeepAA sample images

- Nichijou OP, picture in picture

- Nichijou OP, up and down