Not all individual features are listed here; check the ChangeLog for the full list of changes

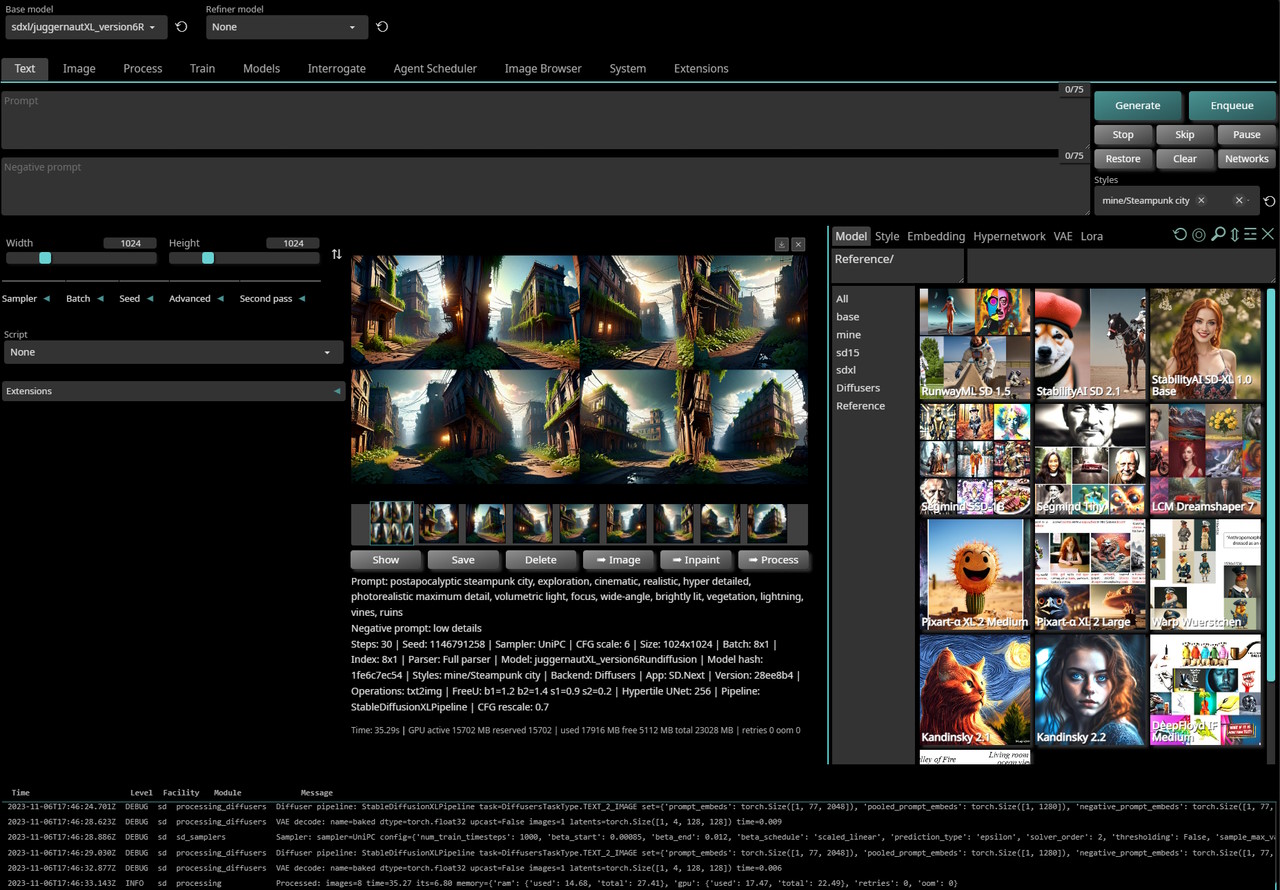

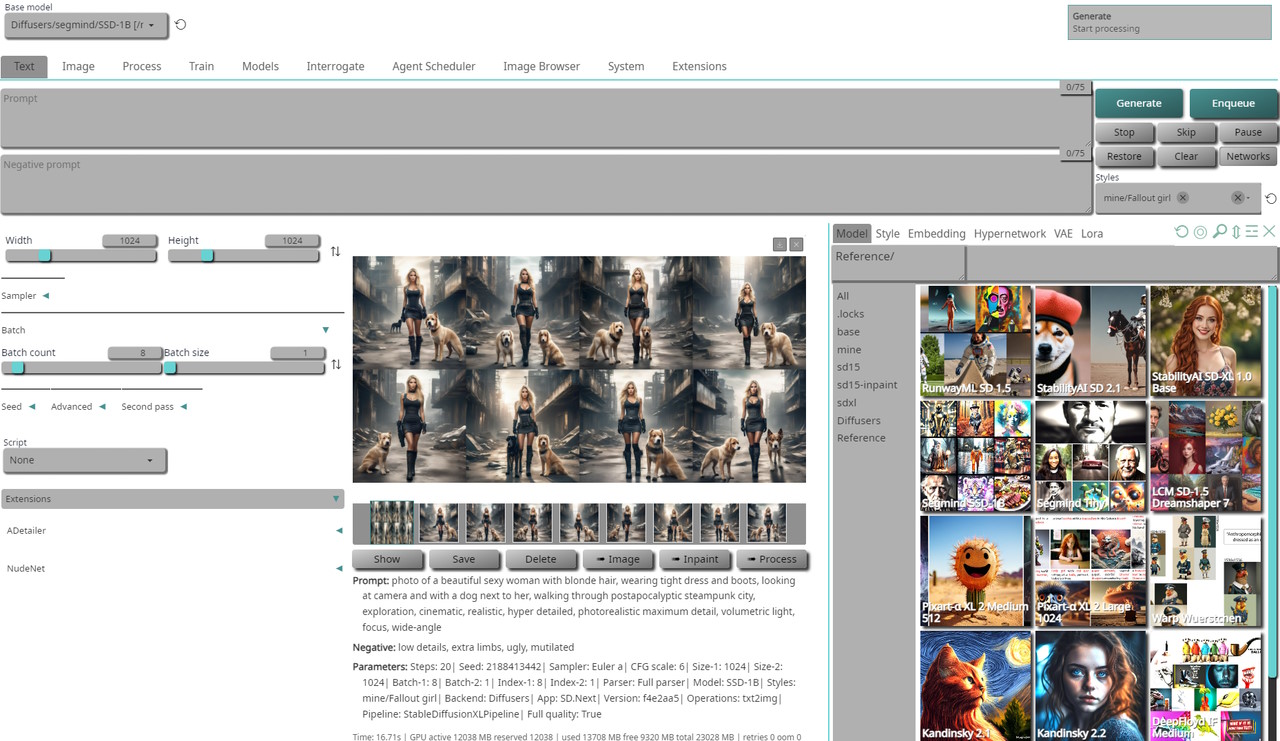

- Multiple backends!

  ▹ Original | Diffusers

- Multiple diffusion models!

  ▹ Stable Diffusion | SD-XL | LCM | Segmind | Kandinsky | Pixart-α | Würstchen | DeepFloyd IF | UniDiffusion | SD-Distilled | etc.

- Multiplatform!

  ▹ Windows | Linux | MacOS with CPU | nVidia | AMD | IntelArc | DirectML | OpenVINO | ONNX+Olive

- Platform specific autodetection and tuning performed on install

- Optimized processing with latest `torch` developments with built-in support for `torch.compile` and multiple compile backends

- Improved prompt parser

- Enhanced Lora/LoCon/Lyco code supporting latest trends in training

- Built-in queue management

- Advanced metadata caching and handling to speed up operations

- Enterprise level logging and hardened API

- Modern localization and hints engine

- Broad compatibility with existing extensions ecosystem and new extensions manager

- Built-in installer with automatic updates and dependency management

- Modernized UI with theme support and a number of built-in themes (dark and light)

SD.Next supports two main backends, Original and Diffusers:

- Original: Based on LDM reference implementation and significantly expanded on by A1111

  This is the default backend and it is fully compatible with all existing functionality and extensions

  Supports SD 1.x and SD 2.x models

  All other model types such as SD-XL, LCM, PixArt, Segmind, Kandinsky, etc. require backend Diffusers

- Diffusers: Based on new Huggingface Diffusers implementation

  Supports original SD models as well as all models listed below

  See wiki article for more information

Additional models will be added as they become available and there is public interest in them

- RunwayML Stable Diffusion 1.x and 2.x (all variants)

- StabilityAI Stable Diffusion XL

- Segmind SSD-1B

- LCM: Latent Consistency Models

- Kandinsky 2.1 and 2.2

- PixArt-α XL 2 Medium and Large

- Warp Wuerstchen

- Tsinghua UniDiffusion

- DeepFloyd IF Medium and Large

- Segmind SD Distilled (all variants)

Important

- Loading any model other than standard SD 1.x / SD 2.x requires use of backend Diffusers

- Loading any other models using Original backend is not supported

- Loading manually downloaded model `.safetensors` files is supported for SD 1.x / SD 2.x / SD-XL models only

- For all other model types, use backend Diffusers and either use the built-in Model downloader or select a model from the Networks -> Models -> Reference list, in which case it will be auto-downloaded and loaded
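The loading rules above can be summarized as a small lookup. The sketch below is illustrative only: `required_backend` and `can_load_safetensors` are hypothetical helper names and are not part of SD.Next, and the family labels are simply the names used in this document.

```python
# Hypothetical helpers (not part of SD.Next) that encode the rules above.

# Model families the Original backend can load, per the notes above.
ORIGINAL_COMPATIBLE = {"sd 1.x", "sd 2.x"}

# Families for which manually downloaded .safetensors files are supported.
SAFETENSORS_COMPATIBLE = {"sd 1.x", "sd 2.x", "sd-xl"}


def required_backend(model_family: str) -> str:
    """Return a --backend value able to load the given model family."""
    family = model_family.strip().lower()
    # Original is the default backend and covers SD 1.x / SD 2.x;
    # every other family needs Diffusers.
    return "original" if family in ORIGINAL_COMPATIBLE else "diffusers"


def can_load_safetensors(model_family: str) -> bool:
    """True if a manually downloaded .safetensors file is supported."""
    return model_family.strip().lower() in SAFETENSORS_COMPATIBLE


if __name__ == "__main__":
    for name in ("SD 1.x", "SD-XL", "Kandinsky"):
        print(name, required_backend(name), can_load_safetensors(name))
```

Note that SD 1.x / SD 2.x models also load under Diffusers; the sketch returns `original` for them only because it is the default backend.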

- nVidia GPUs using CUDA libraries on both Windows and Linux

- AMD GPUs using ROCm libraries on Linux

  Support will be extended to Windows once AMD releases ROCm for Windows

- Intel Arc GPUs using OneAPI with IPEX XPU libraries on both Windows and Linux

- Any GPU compatible with DirectX on Windows using DirectML libraries

  This includes support for AMD GPUs that are not supported by native ROCm libraries

- Any GPU or device compatible with OpenVINO libraries on both Windows and Linux

- Apple M1/M2 on OSX using built-in support in Torch with MPS optimizations

- ONNX/Olive (experimental)

- Step-by-step install guide

- Advanced install notes

- Common installation errors

- FAQ

- If you can't run us locally, try our friends at RunDiffusion!

Tip

- Server can run without a virtual environment, but using `VENV` is recommended to avoid library version conflicts with other applications

- nVidia/CUDA / AMD/ROCm / Intel/OneAPI are auto-detected if present and available

  For any other use case such as DirectML, ONNX/Olive, OpenVINO, specify the required parameter explicitly, or wrong packages may be installed as the installer will assume a CPU-only environment

- Full startup sequence is logged in `sdnext.log`, so if you encounter any issues, please check it first
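As a convenience for the last tip, here is a minimal sketch that prints the end of the startup log. It assumes `sdnext.log` is a plain-text file in the current working directory; the `tail_log` helper is hypothetical and not something SD.Next provides.

```python
from collections import deque
from pathlib import Path


def tail_log(path="sdnext.log", lines=20):
    """Return the last `lines` lines of the log, or [] if the file is missing."""
    log = Path(path)
    if not log.exists():
        return []
    with log.open("r", encoding="utf-8", errors="replace") as handle:
        # deque with maxlen keeps only the most recent lines while reading
        return [line.rstrip("\n") for line in deque(handle, maxlen=lines)]


if __name__ == "__main__":
    for entry in tail_log():
        print(entry)
```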

Once SD.Next is installed, simply run webui.ps1 or webui.bat (Windows) or webui.sh (Linux or MacOS)

Below is a partial list of available parameters; run `webui --help` for the full list:

Server options:

--config CONFIG Use specific server configuration file, default: config.json

--ui-config UI_CONFIG Use specific UI configuration file, default: ui-config.json

--medvram Split model stages and keep only active part in VRAM, default: False

--lowvram Split model components and keep only active part in VRAM, default: False

--ckpt CKPT Path to model checkpoint to load immediately, default: None

--vae VAE Path to VAE checkpoint to load immediately, default: None

--data-dir DATA_DIR Base path where all user data is stored, default:

--models-dir MODELS_DIR Base path where all models are stored, default: models

--share Enable UI accessible through Gradio site, default: False

--insecure Enable extensions tab regardless of other options, default: False

--listen Launch web server using public IP address, default: False

--auth AUTH Set access authentication like "user:pwd,user:pwd"

--autolaunch Open the UI URL in the system's default browser upon launch

--docs Mount Gradio docs at /docs, default: False

--no-hashing Disable hashing of checkpoints, default: False

--no-metadata Disable reading of metadata from models, default: False

--no-download Disable download of default model, default: False

--backend {original,diffusers} force model pipeline type

Setup options:

--debug Run installer with debug logging, default: False

--reset Reset main repository to latest version, default: False

--upgrade Upgrade main repository to latest version, default: False

--requirements Force re-check of requirements, default: False

--quick Run with startup sequence only, default: False

--use-directml Use DirectML if no compatible GPU is detected, default: False

--use-openvino Use Intel OpenVINO backend, default: False

--use-ipex Force use Intel OneAPI XPU backend, default: False

--use-cuda Force use nVidia CUDA backend, default: False

--use-rocm Force use AMD ROCm backend, default: False

--use-xformers Force use xFormers cross-optimization, default: False

--skip-requirements Skips checking and installing requirements, default: False

--skip-extensions Skips running individual extension installers, default: False

--skip-git Skips running all GIT operations, default: False

--skip-torch Skips running Torch checks, default: False

--skip-all Skips running all checks, default: False

--experimental Allow unsupported versions of libraries, default: False

--reinstall Force reinstallation of all requirements, default: False

--safe Run in safe mode with no user extensions
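To show how the launcher scripts and parameters above fit together, the sketch below assembles a command line as an argument list. The `build_command` helper and its arguments are hypothetical; only the script names and flags come from the list above, and the `./` prefix for `webui.sh` assumes the command is run from the install folder.

```python
import platform

# Flags copied from the setup options above, keyed by a short label.
COMPUTE_FLAGS = {
    "directml": "--use-directml",
    "openvino": "--use-openvino",
    "ipex": "--use-ipex",
    "cuda": "--use-cuda",
    "rocm": "--use-rocm",
}


def build_command(system=None, backend=None, compute=None, extra=()):
    """Build an argument list for launching the server (illustrative only)."""
    system = system or platform.system()
    # webui.bat on Windows (webui.ps1 is the PowerShell alternative),
    # webui.sh on Linux or MacOS.
    args = ["webui.bat" if system == "Windows" else "./webui.sh"]
    if backend is not None:
        if backend not in ("original", "diffusers"):
            raise ValueError(f"unknown backend: {backend}")
        args += ["--backend", backend]
    if compute is not None:
        # Raises KeyError for labels not listed in COMPUTE_FLAGS
        args.append(COMPUTE_FLAGS[compute])
    args.extend(extra)
    return args


if __name__ == "__main__":
    print(" ".join(build_command(backend="diffusers", extra=["--debug"])))
```

The resulting list can be passed to `subprocess.run` if the server is started from another script.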

SD.Next comes with several extensions pre-installed.

- We'd love to have additional maintainers with full admin rights. If you're interested, ping us!

- In addition to general cross-platform code, we'd like to have a lead for each of the main platforms. The project should be fully cross-platform, but we'd really love to have additional contributors and/or maintainers join and help lead the efforts on different platforms.

This project started as a fork of Automatic1111 WebUI and has grown significantly since then. Although it has diverged considerably, any substantial features added to the original work are ported to this repository as well.

The idea behind the fork is to enable latest technologies and advances in text-to-image generation.

Sometimes this is not the same as "as simple as possible to use".

General goals:

- Multi-model

  - Enable usage of as many txt2img and img2img generative models as possible

- Cross-platform

  - Create uniform experience while automatically managing any platform specific differences

- Performance

  - Enable best possible performance on all platforms

- Ease-of-Use

  - Automatically handle all requirements, dependencies, flags regardless of platform

  - Integrate all best options for uniform out-of-the-box experience without the need to tweak anything manually

- Look-and-Feel

  - Create modern, intuitive and clean UI

- Up-to-Date

  - Keep code up to date with latest advances in text-to-image generation

- Main credit goes to Automatic1111 WebUI

- Additional credits are listed in Credits

- Licenses for modules are listed in Licenses