Tao Huang, Xiaohuan Pei, Shan You, Fei Wang, Chen Qian, Chang Xu

ArXiv Preprint (arXiv 2403.09338)

- 21 Mar: We released the detection code of LocalVim.
- 20 Mar: We released the classification code of LocalVMamba (py). Since we rewrote the code related to Mamba operations, we need to retrain the models; the checkpoints and logs of the remaining models will be uploaded later. We are preparing the detection and segmentation code now.
- 19 Mar: We released the classification code of LocalVim (py). The checkpoint and training log of LocalVim-T are uploaded.
- 15 Mar: We are working hard on releasing the code; it will be public in several days.

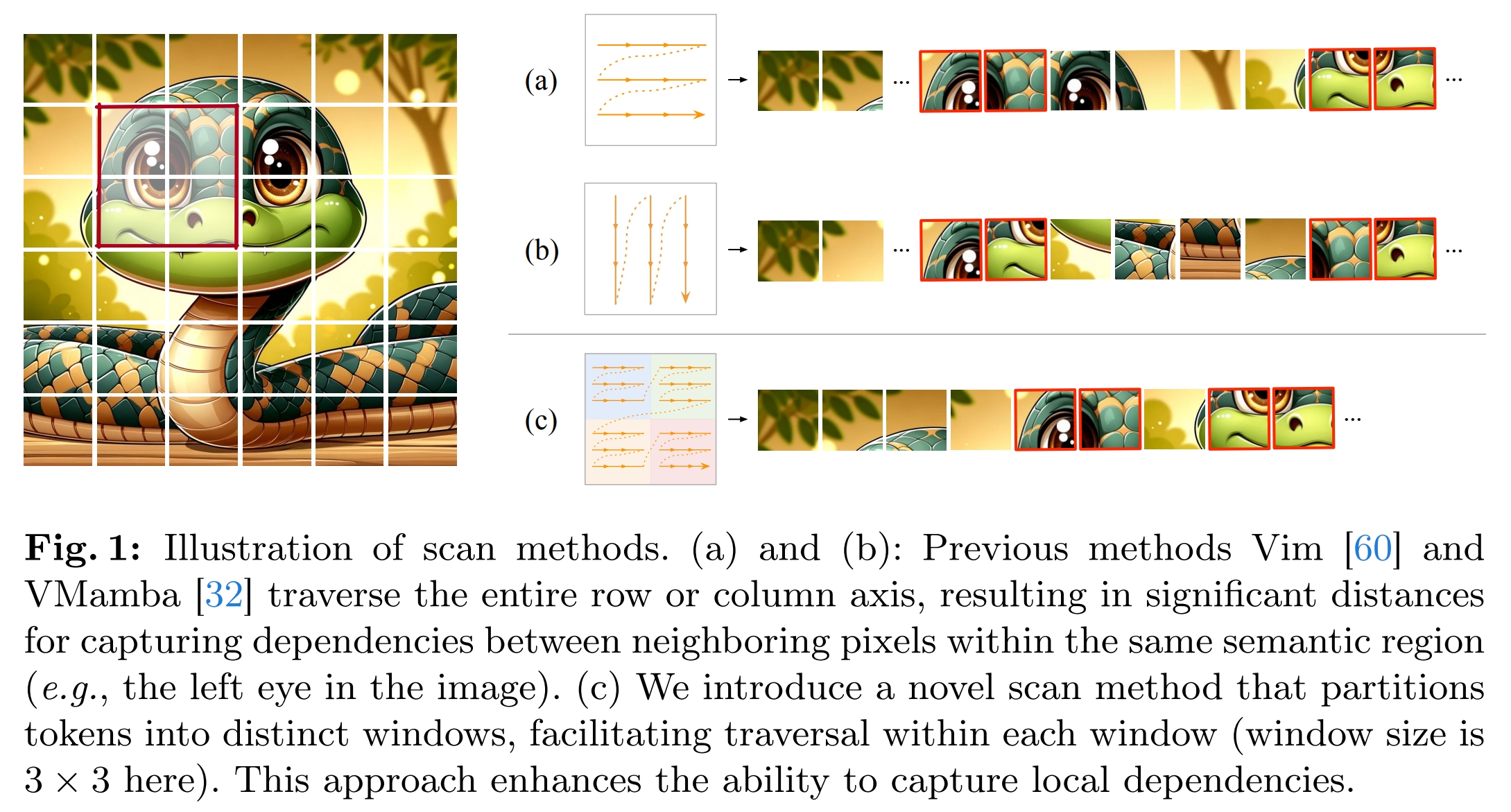

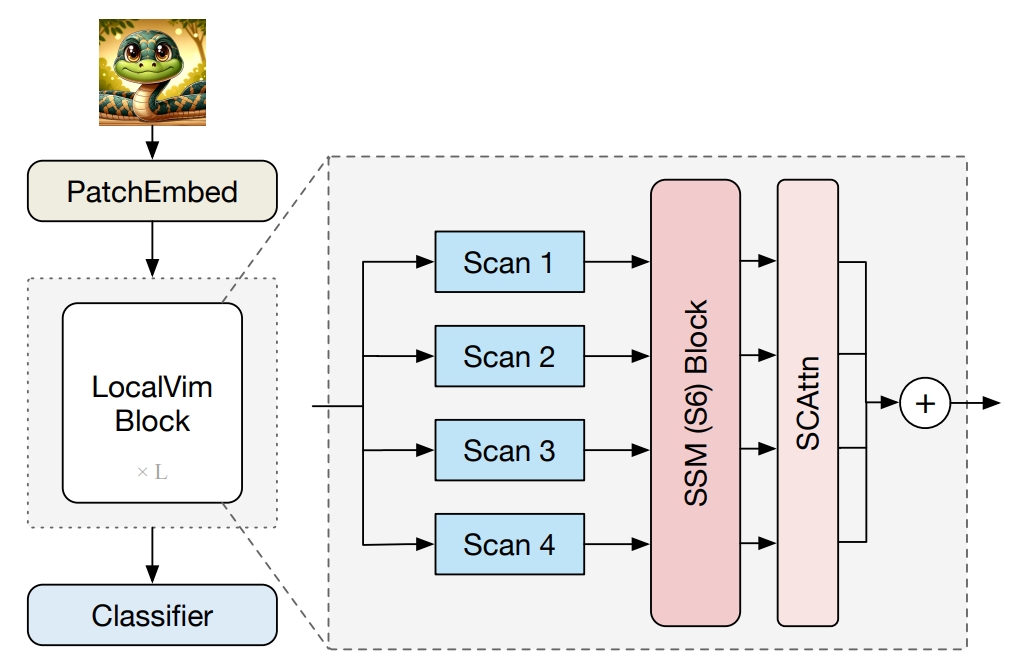

Recent advancements in state space models, notably Mamba, have demonstrated significant progress in modeling long sequences for tasks like language understanding. Yet, their application in vision tasks has not markedly surpassed the performance of traditional Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs). This paper posits that the key to enhancing Vision Mamba (ViM) lies in optimizing scan directions for sequence modeling. Traditional ViM approaches, which flatten spatial tokens, overlook the preservation of local 2D dependencies, thereby elongating the distance between adjacent tokens. We introduce a novel local scanning strategy that divides images into distinct windows, effectively capturing local dependencies while maintaining a global perspective. Additionally, acknowledging the varying preferences for scan patterns across different network layers, we propose a dynamic method to independently search for the optimal scan choices for each layer, substantially improving performance. Extensive experiments across both plain and hierarchical models underscore our approach's superiority in effectively capturing image representations. For example, our model significantly outperforms Vim-Ti by 3.1% on ImageNet with the same 1.5G FLOPs.
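The difference between flattening tokens row by row and scanning them window by window can be illustrated with a small index-ordering sketch. This is only an illustration of the idea described above, not the repository's implementation; the function names `local_scan_order` and `scan_distance` are hypothetical, and the sketch assumes the token grid divides evenly into windows.

```python
def local_scan_order(height, width, window):
    """Return flat token indices visited window by window.

    Tokens inside each window are visited in row-major order, and the
    windows themselves are also visited in row-major order.
    Assumption: height and width are multiples of `window`.
    """
    if height % window or width % window:
        raise ValueError("grid size must be divisible by the window size")
    order = []
    for win_row in range(0, height, window):
        for win_col in range(0, width, window):
            for r in range(win_row, win_row + window):
                for c in range(win_col, win_col + window):
                    order.append(r * width + c)
    return order


def scan_distance(order, token_a, token_b):
    """Number of sequence steps separating two tokens under a scan order."""
    return abs(order.index(token_a) - order.index(token_b))


# Compare a plain row-major flatten with a 2x2 local scan on a 4x4 grid.
row_major = list(range(4 * 4))
local = local_scan_order(4, 4, window=2)

# Tokens 0 and 4 are vertical neighbours in the 2D grid.
print("row-major distance:", scan_distance(row_major, 0, 4))
print("local-scan distance:", scan_distance(local, 0, 4))
```

In the row-major flatten, vertically adjacent tokens are separated by a full row of other tokens, whereas the windowed order keeps them within the same short run of the sequence.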

| Model | Dataset | Resolution | ACC@1 (%) | #Params | FLOPs | ckpts/logs |
|---|---|---|---|---|---|---|
| Vim-Ti | ImageNet-1K | 224x224 | 73.1 | 7M | 1.5G | - |
| Vim-S | ImageNet-1K | 224x224 | 80.3 | 26M | 5.1G | - |
| LocalVim-T | ImageNet-1K | 224x224 | 76.2 | 8M | 1.5G | ckpt/log |
| LocalVim-S | ImageNet-1K | 224x224 | 81.2 | 28M | 4.8G | retraining... |
| VMamba-T | ImageNet-1K | 224x224 | 82.2 | 22M | 5.6G | - |
| VMamba-S | ImageNet-1K | 224x224 | 83.5 | 44M | 11.2G | - |
| LocalVMamba-T | ImageNet-1K | 224x224 | 82.7 | 26M | 5.7G | retraining... |
| LocalVMamba-S | ImageNet-1K | 224x224 | 83.7 | 50M | 11.4G | retraining... |

See detection folder.

git clone https://github.com/hunto/LocalMamba.git

We tested our code on torch==1.13.1 and torch==2.0.2.
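Before building the kernels, it may be worth checking which torch version is installed in the current environment. The sketch below uses only the Python standard library; the helper names `installed_version` and `matches_tested` are hypothetical, and the list of tested versions is copied from the sentence above.

```python
from importlib.metadata import PackageNotFoundError, version

# Versions the README reports as tested.
TESTED_TORCH = ("1.13.1", "2.0.2")


def installed_version(package):
    """Return the installed version string of a package, or None if absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


def matches_tested(installed, tested=TESTED_TORCH):
    """True if the installed version equals one of the tested versions.

    A local build tag such as "+cu117" is ignored for the comparison.
    """
    if installed is None:
        return False
    base = installed.split("+", 1)[0]
    return base in tested


found = installed_version("torch")
print(f"torch: {found or 'not installed'}; "
      f"matches a tested version: {matches_tested(found)}")
```

A mismatch does not necessarily mean the code will fail; it only means the combination is outside what the authors report having tested.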

Install Mamba kernels:

cd causual-conv1d && pip install .

cd ..

cd mamba-1p1p1 && pip install .

Other dependencies:

timm==0.9.12

fvcore==0.1.5.post20221221

We use the ImageNet-1K dataset for training and validation. It is recommended to put the dataset files into the ./data folder; the directory structure should then look like this:

classification

├── lib

├── tools

├── configs

├── data

│ ├── imagenet

│ │ ├── meta

│ │ ├── train

│ │ ├── val

│ ├── cifar

│ │ ├── cifar-10-batches-py

│ │ ├── cifar-100-python
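To confirm the layout above before launching a job, a small check like the following may help. It is a sketch rather than part of the repository: the helper name `missing_dataset_dirs` is hypothetical, and the expected subfolders are taken directly from the tree above. Pass a shorter list if only some of the datasets are used.

```python
from pathlib import Path

# Subfolders of ./data listed in the directory tree above.
EXPECTED_DIRS = [
    "imagenet/meta",
    "imagenet/train",
    "imagenet/val",
    "cifar/cifar-10-batches-py",
    "cifar/cifar-100-python",
]


def missing_dataset_dirs(data_root="./data", expected=EXPECTED_DIRS):
    """Return the expected subfolders that do not exist under data_root."""
    root = Path(data_root)
    return [rel for rel in expected if not (root / rel).is_dir()]


# Run from the classification folder so that ./data resolves correctly.
missing = missing_dataset_dirs()
if missing:
    print("Missing dataset folders:", ", ".join(missing))
else:
    print("Dataset layout looks complete.")
```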

sh tools/dist_run.sh tools/test.py ${NUM_GPUS} configs/strategies/local_vmamba/config.yaml timm_local_vim_tiny --drop-path-rate 0.1 --experiment lightvit_tiny_test --resume ${ckpt_file_path}

- LocalVim-T

sh tools/dist_train.sh 8 configs/strategies/local_mamba/config.yaml timm_local_vim_tiny -b 128 --drop-path-rate 0.1 --experiment local_vim_tiny

Other training options:

- --amp: enable torch Automatic Mixed Precision (AMP) training. It can speed up training on large models. We enable it for LocalVMamba models.
- --clip-grad-norm: enable gradient clipping.
- --clip-grad-max-norm 1: the maximum gradient norm used for clipping.
- --model-ema: enable model exponential moving average (EMA). It can improve accuracy on large models.
- --model-ema-decay 0.9999: decay rate of the model EMA.
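For readers unfamiliar with the last four options, the sketch below shows what gradient clipping by norm and an EMA of the weights generally compute, using plain Python lists in place of tensors. It is a generic illustration under the usual definitions, not the repository's code; the function names are hypothetical, and the defaults mirror the values shown in the options above.

```python
import math


def clip_by_global_norm(grads, max_norm=1.0, eps=1e-6):
    """Rescale gradients so that their global L2 norm is at most max_norm.

    Returns the (possibly rescaled) gradients and the norm before clipping.
    """
    total_norm = math.sqrt(sum(g * g for g in grads))
    # Only shrink gradients; never scale them up.
    scale = min(1.0, max_norm / (total_norm + eps))
    return [g * scale for g in grads], total_norm


def ema_update(ema_weights, weights, decay=0.9999):
    """One EMA step: keep `decay` of the old average, blend in the rest."""
    return [decay * e + (1.0 - decay) * w
            for e, w in zip(ema_weights, weights)]


# Toy example: a gradient with norm 5 is scaled down to roughly norm 1.
clipped, norm_before = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
print("norm before clipping:", norm_before)
print("clipped gradient:", clipped)

# With decay close to 1, the averaged weights move very slowly.
print("EMA after one step:", ema_update([0.0, 0.0], [1.0, 2.0]))
```

With a decay of 0.9999 each step contributes only 0.01% of the current weights, which is why the EMA copy changes slowly and tends to smooth out noise late in training.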

sh tools/dist_train.sh 8 configs/strategies/local_mamba/config.yaml timm_local_vim_tiny_search -b 128 --drop-path-rate 0.1 --experiment local_vim_tiny --epochs 100

After training, run tools/vis_search_prob.py to get the searched directions.
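As a rough picture of what reading off searched directions can involve, the sketch below turns one layer's learned scores into probabilities and keeps the highest-ranked candidates. Everything here is an assumption made for illustration: the candidate labels, the example scores, the parameter `k`, and the function names are hypothetical, and the actual behaviour and output format of tools/vis_search_prob.py may differ.

```python
import math

# Placeholder labels for candidate scan directions (not the repo's identifiers).
CANDIDATES = ["horizontal", "vertical", "local_small_window", "local_large_window"]


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_directions(scores, names, k):
    """Return the k candidates with the highest probability, best first."""
    probs = softmax(scores)
    ranked = sorted(range(len(names)), key=lambda i: probs[i], reverse=True)
    return [(names[i], probs[i]) for i in ranked[:k]]


# Made-up scores for a single layer, purely for demonstration.
layer_scores = [0.1, 2.0, -1.0, 1.5]
for name, prob in top_directions(layer_scores, CANDIDATES, k=2):
    print(f"{name}: {prob:.3f}")
```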

This project is released under the Apache 2.0 license.

This project is based on Mamba (paper, code), Vim (paper, code), and VMamba (paper, code). Thanks for the excellent work.

If our paper helps your research, please consider citing us:

@article{huang2024localmamba,

title={LocalMamba: Visual State Space Model with Windowed Selective Scan},

author={Huang, Tao and Pei, Xiaohuan and You, Shan and Wang, Fei and Qian, Chen and Xu, Chang},

journal={arXiv preprint arXiv:2403.09338},

year={2024}

}