Talk @ Elasticsearch User Group Vienna: Log management with Logstash, Elasticsearch, and Kibana

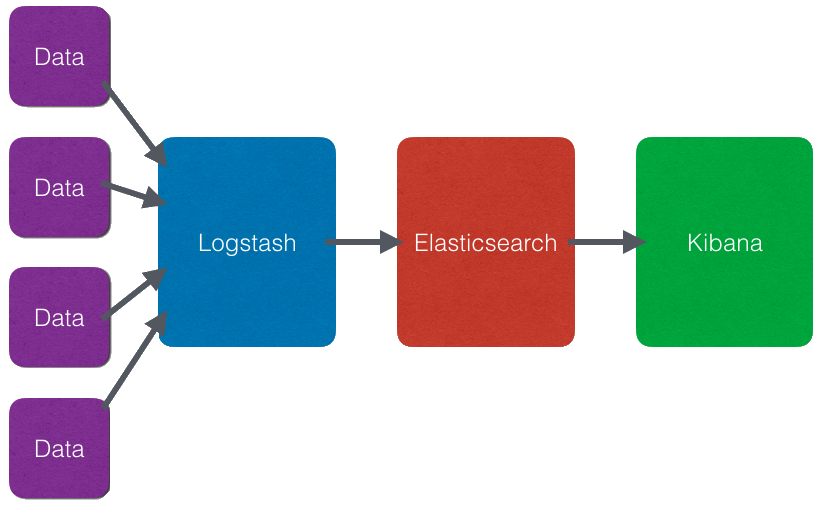

- Elasticsearch - Search and analyze data

- Logstash - Collect, enrich, and transport data

- Kibana - Explore and visualise

Data is collected and enriched by Logstash and indexed in Elasticsearch. Kibana reads the data stored in Elasticsearch and visualises it in a single-page web app.
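To see where the indexed data ends up: assuming the default settings of the Logstash `elasticsearch` output in 6.x (`index => "logstash-%{+YYYY.MM.dd}"`), each event goes into a daily index whose date is taken from the event's `@timestamp` in UTC, and Kibana then reads these indices through an index pattern such as `logstash-*`. The sketch below uses a hypothetical helper, `logstash_index_for`, and assumes GNU `date`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the daily index the Logstash elasticsearch output
# would write an event to, assuming its default index => "logstash-%{+YYYY.MM.dd}".
# Requires GNU date (-d).
logstash_index_for() {
  # The date in the index name comes from @timestamp, which is in UTC
  date -u -d "$1" '+logstash-%Y.%m.%d'
}

# A local time just after midnight (UTC+2) still lands in the previous day's index
logstash_index_for "2018-09-02 01:30:00 +0200"   # prints logstash-2018.09.01
```

On the server, `curl -s 'http://127.0.0.1:9200/_cat/indices/logstash-*?v'` lists the indices created so far.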

- Docker: high-level API for lightweight Linux containers

- Package format that bundles all dependencies

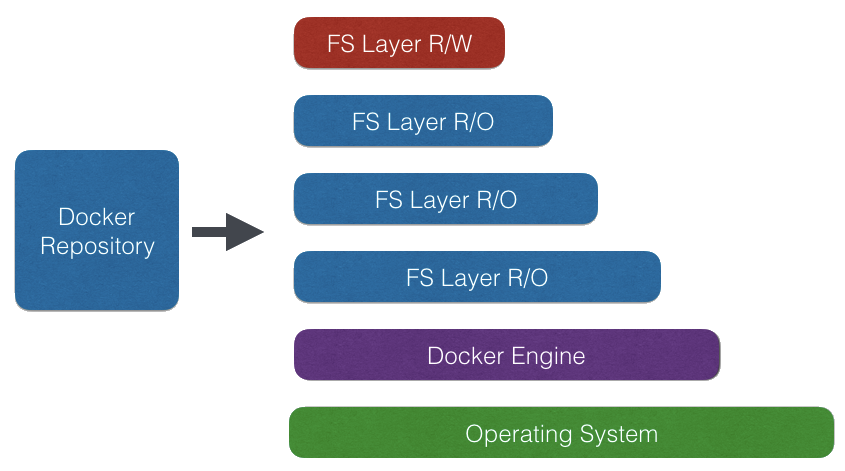

- Layered file system

Docker consists of the following building blocks:

- Docker Engine - server process

- Docker CLI - command-line interface to control the server

- Docker Repository - stores prepared Docker images

Within the layered file system, all layers are read-only except the top one, which receives the container's changes. Layers can be stored in the repository and cached locally.
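A toy model can make this read/write rule concrete. It is not how Docker's storage drivers (such as overlay2) are implemented, and it ignores deletions (whiteouts): here each layer is a plain directory, a read returns the file from the topmost layer that has it, and a write always goes to the top layer. The functions `layer_read` and `layer_write` are hypothetical.

```shell
#!/usr/bin/env bash
# Toy model of a layered file system. Layers are directories, passed
# top-most first; a read returns the first (top-most) copy of the file.
layer_read() {
  local file="$1"; shift
  local layer
  for layer in "$@"; do
    if [[ -f "$layer/$file" ]]; then
      cat "$layer/$file"
      return 0
    fi
  done
  return 1   # not found in any layer
}

# Writes always land in the top (writable) layer; lower layers stay untouched.
layer_write() {
  local file="$1" content="$2" top="$3"
  mkdir -p "$(dirname "$top/$file")"
  printf '%s\n' "$content" > "$top/$file"
}
```

After `layer_write etc/motd changed top`, `layer_read etc/motd top base` returns the new content while the copy in `base` is unchanged. On a real host, `docker history docker.elastic.co/elasticsearch/elasticsearch:6.4.0` lists the layers of an image.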

Phoenix Server pattern (Martin Fowler): http://martinfowler.com/bliki/PhoenixServer.html

- Install Java (Elasticsearch and Logstash run on the JVM)

- Download the ELK binaries

- Configure

- Set up services

But what about updates?

- Use the official Docker images

- Configure

Updates are simple: throw away the server and run the new version from the repository.
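A minimal sketch of such an update for the Kibana container, assuming the container names and the `isolated-elk` network used in the setup later in this talk, and a newer image tag such as `6.4.1`. The helper `update_kibana` and the `DOCKER` variable are hypothetical; `DOCKER=echo` turns the function into a dry run that only prints the commands.

```shell
#!/usr/bin/env bash
# Hypothetical helper: replace the Kibana container with a new image version.
# Kibana keeps its saved objects in Elasticsearch, so the container itself
# can be thrown away. Set DOCKER=echo for a dry run.
update_kibana() {
  local version="$1"
  local docker="${DOCKER:-docker}"
  local image="docker.elastic.co/kibana/kibana:${version}"

  "$docker" pull "$image" || return 1
  "$docker" rm -f kibana                 # throw away the old container
  "$docker" run -d --restart=always --link elasticsearch --net=isolated-elk \
    -p 127.0.0.1:5601:5601 --name kibana "$image"
}
```

Kibana generally expects an Elasticsearch of the same version, so in practice Elasticsearch is replaced first; its data survives because it lives in the mounted `/var/docker/elasticsearch` directory, not in the container.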

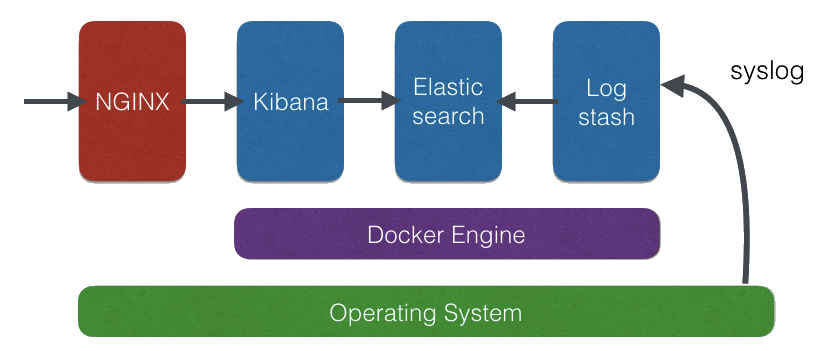

- Grab an Ubuntu 16.04 box from https://cloud.digitalocean.com

- Install a firewall and an NGINX front-end proxy

- Install Docker

- Install ELK on Docker from the official repository

- Feed syslog to ELK

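```shell
apt-get -y update
apt-get -y upgrade

# Firewall: allow only incoming SSH and HTTP (port 80)
ufw status
ufw default deny incoming
ufw default allow outgoing
ufw allow ssh
ufw allow 80/tcp
ufw --force enable

# Elasticsearch needs a higher mmap count limit (applies until the next reboot)
sysctl -w vm.max_map_count=262144
```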
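`sysctl -w` only changes the running kernel, so the setting is lost on reboot. A minimal sketch for making it persistent with a drop-in file under `/etc/sysctl.d/`; the helper `persist_max_map_count` and the file name `99-elasticsearch.conf` are hypothetical, and the target directory is a parameter so the function can be tried outside `/etc`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write the Elasticsearch mmap limit to a sysctl
# drop-in file so that it is applied again at boot.
persist_max_map_count() {
  local dir="${1:-/etc/sysctl.d}"
  printf 'vm.max_map_count=262144\n' > "$dir/99-elasticsearch.conf"
}

# On the server (as root):
#   persist_max_map_count
#   sysctl --system              # reload all sysctl configuration files
#   sysctl -n vm.max_map_count   # show the current value
```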

https://docs.docker.com/installation/ubuntulinux/

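```shell
wget -qO- https://get.docker.com/ | sh

# Host directories for the Elasticsearch data and the Logstash pipeline config,
# writable for the non-root users inside the containers
mkdir -p /var/docker/elasticsearch
mkdir -p /var/docker/logstash
chmod -R uga+rwX /var/docker
```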

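```shell
cat >/var/docker/logstash/syslog.conf <<'EOL'
input {
  tcp {
    port => 25826
    type => syslog
  }
  udp {
    port => 25826
    type => syslog
  }
}

filter {
  if [type] == "syslog" {
    grok {
      match => { "message" => "<%{POSINT:syslog_pri}>%{SYSLOGTIMESTAMP:syslog_timestamp} %{SYSLOGHOST:syslog_hostname} %{DATA:syslog_program}(?:\[%{POSINT:syslog_pid}\])?: %{GREEDYDATA:syslog_message}" }
    }
  }

  # Messages from the Docker syslog log driver: move the container ID out of
  # the program name (extracted by grok as syslog_program) into its own field.
  # Separate mutate blocks make the steps run in this order; within a single
  # mutate, Logstash applies its operations in a fixed internal order.
  if "docker/" in [syslog_program] {
    mutate {
      add_field => { "container_id" => "%{syslog_program}" }
    }
    mutate {
      gsub => [ "container_id", "docker/", "" ]
    }
    mutate {
      update => { "syslog_program" => "docker" }
    }
  }
}

output {
  elasticsearch {
    # "db" is the alias given to the elasticsearch container via --link elasticsearch:db
    hosts => ["db"]
  }
}
EOL
```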
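To see which fields the filter produces, here is a rough bash approximation of the grok pattern and the Docker branch. It is only an illustration: the regex is a simplified, hand-written stand-in for the grok patterns (`SYSLOGTIMESTAMP`, `SYSLOGHOST`, `DATA`, ...), not Logstash's own definitions, and `parse_syslog` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Rough stand-in for the grok pattern, as a bash ERE:
#   <PRI>Mmm dd hh:mm:ss host program[pid]: message
SYSLOG_RE='^<([0-9]+)>([A-Z][a-z]{2} +[0-9]{1,2} [0-9]{2}:[0-9]{2}:[0-9]{2}) ([^ ]+) ([^:[]*)(\[([0-9]+)])?: (.*)$'

parse_syslog() {
  local line="$1"
  # Logstash would tag a non-matching event with _grokparsefailure
  [[ $line =~ $SYSLOG_RE ]] || { echo "_grokparsefailure"; return 1; }

  local program="${BASH_REMATCH[4]}" container_id=""
  # Same idea as the mutate blocks: split "docker/<id>" into two fields
  if [[ $program == *docker/* ]]; then
    container_id="${program//docker\//}"
    program="docker"
  fi

  echo "syslog_pri=${BASH_REMATCH[1]}"
  echo "syslog_timestamp=${BASH_REMATCH[2]}"
  echo "syslog_hostname=${BASH_REMATCH[3]}"
  echo "syslog_program=${program}"
  echo "syslog_pid=${BASH_REMATCH[6]}"
  echo "container_id=${container_id}"
  echo "syslog_message=${BASH_REMATCH[7]}"
}
```

For example, `parse_syslog '<30>Sep  1 12:00:00 docker-elk docker/4f2a9c1b3d5e[812]: Test'` yields `syslog_program=docker` and `container_id=4f2a9c1b3d5e`.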

```shell
# User-defined bridge network for the three containers
docker network create --driver bridge isolated-elk

docker run -d --restart=always -v /var/docker/elasticsearch:/usr/share/elasticsearch/data --net=isolated-elk -p 127.0.0.1:9200:9200 -p 127.0.0.1:9300:9300 --name elasticsearch docker.elastic.co/elasticsearch/elasticsearch:6.4.0

docker run -d --restart=always --link elasticsearch --net=isolated-elk -p 127.0.0.1:5601:5601 --name kibana docker.elastic.co/kibana/kibana:6.4.0

# Publish port 25826 for both TCP and UDP, matching the two Logstash inputs
docker run -d --restart=always --link elasticsearch:db -v /var/docker/logstash:/usr/share/logstash/pipeline/ --net=isolated-elk -p 127.0.0.1:25826:25826 -p 127.0.0.1:25826:25826/udp --name logstash docker.elastic.co/logstash/logstash:6.4.0

docker network inspect isolated-elk
docker ps
```
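Elasticsearch can take a while to start. When scripting this setup, it can help to wait until it answers HTTP requests before moving on; checking an HTTP response rather than just the TCP port matters here, because Docker's userland proxy may accept connections on a published port before the service inside the container is ready. A minimal sketch; the helper `wait_for_http` and the `CURL` override (which lets the function be tried without a server) are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: poll a URL once per second until it answers with a
# non-error HTTP status, giving up after the given number of tries.
wait_for_http() {
  local url="$1" tries="${2:-30}"
  local curl="${CURL:-curl}"
  local i
  for ((i = 1; i <= tries; i++)); do
    # -s: silent; -f: non-zero exit status on HTTP errors (>= 400)
    if "$curl" -sf -o /dev/null "$url"; then
      return 0
    fi
    if ((i < tries)); then
      sleep 1
    fi
  done
  return 1
}

# On the server:
#   wait_for_http http://127.0.0.1:9200/ 60 && echo "Elasticsearch is up"
```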

```shell
apt-get -y install nginx
apt-get -y install apache2-utils
# Create the basic-auth user "ops" (prompts for a password)
htpasswd -c /etc/nginx/.htpasswd ops
```

```shell
cat >/etc/nginx/sites-available/default <<'EOL'
server {
    listen 80 default_server;
    listen [::]:80 default_server ipv6only=on;

    server_name docker-elk;

    # Every request requires basic auth and is proxied to Kibana,
    # which listens only on 127.0.0.1:5601
    location / {
        auth_basic "Restricted";
        auth_basic_user_file /etc/nginx/.htpasswd;
        proxy_pass http://127.0.0.1:5601;
        proxy_set_header Host $host;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
    }
}
EOL

service nginx restart
```

```shell
# Forward all messages to Logstash; "@@" uses TCP ("@" would use UDP)
cat >/etc/rsyslog.d/10-logstash.conf <<'EOL'
*.* @@127.0.0.1:25826
EOL

service rsyslog restart
```
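To test the Logstash TCP input without going through rsyslog, a line in the traditional syslog format that rsyslog forwards by default (and that the grok pattern expects) can be written straight to port 25826. A minimal sketch; the helper `syslog_line` and the tag `manual-test` are hypothetical, and `/dev/tcp` is a bash-only feature.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build a message in traditional (RFC 3164 style)
# syslog format: <PRI>Mmm dd hh:mm:ss host tag: message
syslog_line() {
  local pri="$1" tag="$2" msg="$3"
  # %e pads the day with a space ("Sep  1") like syslog timestamps;
  # LC_ALL=C keeps the month name in English
  printf '<%s>%s %s %s: %s\n' "$pri" "$(LC_ALL=C date '+%b %e %H:%M:%S')" \
    "${HOSTNAME:-localhost}" "$tag" "$msg"
}

# On the server: send one event (PRI 14 = user.info) straight to Logstash
#   syslog_line 14 manual-test "hello logstash" > /dev/tcp/127.0.0.1/25826
```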

```shell
# Write three test messages with severity 1 (alert); -s also echoes them to stderr
logger -s -p 1 "This is fake error..."
logger -s -p 1 "This is another fake error..."
logger -s -p 1 "This is one more fake error..."
```
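The `syslog_pri` field extracted by the grok pattern packs two values into one number: PRI = facility × 8 + severity (RFC 5424, section 6.2.1). Severity 1, as requested with `-p 1` above, is *alert*. A small sketch for decoding the field; `decode_pri` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: split a syslog PRI value into facility and severity.
# PRI = facility * 8 + severity
decode_pri() {
  local pri="$1"
  local -a severities=(emerg alert crit err warning notice info debug)
  local facility=$(( pri / 8 )) severity=$(( pri % 8 ))
  echo "facility=${facility} severity=${severity} (${severities[severity]})"
}

decode_pri 9    # prints: facility=1 severity=1 (alert); facility 1 is "user"
```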

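```shell
# Route a container's output through the syslog log driver; the tag produces the
# "docker/<container ID>" program name that the Logstash filter looks for
docker run --rm --log-driver syslog --log-opt tag="docker/{{.ID}}" ubuntu echo "Test"
```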