Authors: Ge-Peng Ji, Deng-Ping Fan, Yu-Cheng Chou, Dengxin Dai, Alexander Liniger, & Luc Van Gool.

This official repository contains the source code, prediction results, and evaluation toolbox of the Deep Gradient Network (DGNet), accepted by Machine Intelligence Research (MIR) 2023. The technical report can be found on arXiv.

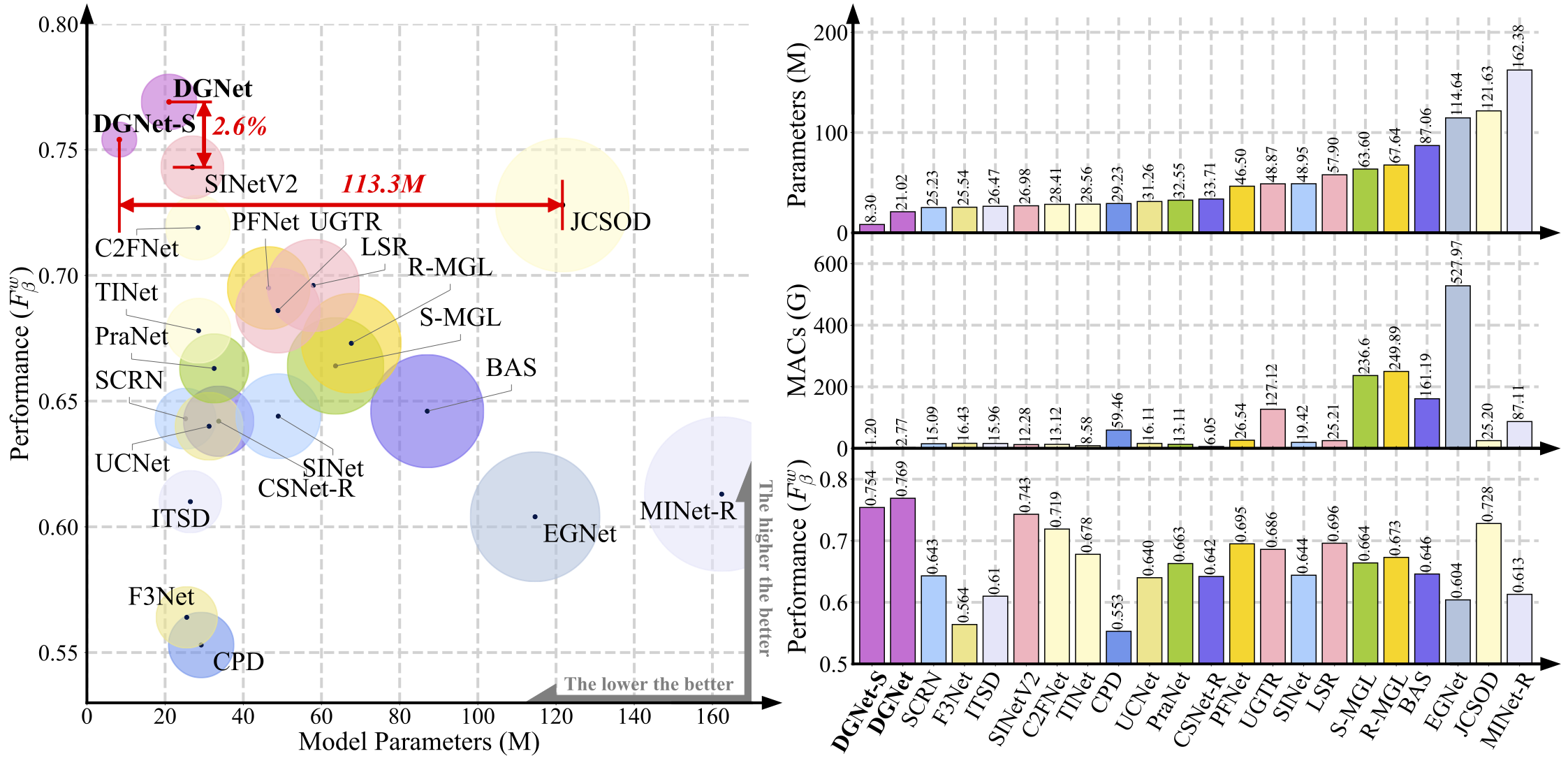

Figure 1: (Left) Scatter plot of the weighted F-measure versus the number of parameters for all competitors on CAMO-Test. The points are drawn in different colors for easier visual distinction, and the colors match those in the histogram (Right).

The larger the point, the more parameters the model has. (Right) Parallel histogram comparing the models' parameters, MACs, and performance.
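Parameter counts and MACs (multiply-accumulate operations) are the two efficiency axes in Figure 1. As a rough illustration of how they scale, the sketch below computes both for a single 2D convolution layer. The function name `conv2d_cost` and the layer sizes are hypothetical examples, not DGNet's configuration, and real profiling tools may count bias terms or other operations slightly differently.

```python
def conv2d_cost(c_in, c_out, k, h_out, w_out, bias=True, groups=1):
    """Return (params, MACs) for one k x k 2D convolution layer.

    Each output element needs (c_in // groups) * k * k multiply-accumulates,
    and there are c_out * h_out * w_out output elements.
    """
    weights = c_out * (c_in // groups) * k * k
    params = weights + (c_out if bias else 0)
    macs = weights * h_out * w_out  # bias additions are not counted as MACs here
    return params, macs


# Hypothetical layer: 3x3 conv, 64 -> 64 channels, on a 44x44 output map.
params, macs = conv2d_cost(64, 64, 3, 44, 44)
print(params, macs)  # 36928 71368704
```

Grouping the convolution (`groups > 1`) divides both numbers by the group count, which is one common way lightweight models keep MACs low.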

- Novel supervision. We propose to excavate texture information by learning object-level gradients, rather than relying on boundary-supervised or uncertainty-aware modeling.

- Simple but efficient. We strip away heavy designs as far as possible, yielding a simple but efficient framework. We hope it can serve as a baseline learning paradigm for the camouflaged object detection (COD) field.

- Best trade-off. We aim to achieve new state-of-the-art (SOTA) results with the best performance-efficiency trade-off on existing cutting-edge COD benchmarks.
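The object-level gradient supervision can be pictured as an image-gradient map restricted to the object region. The sketch below assumes Sobel filtering of a grayscale image, masking with the binary ground-truth mask, and normalization to [0, 1]; the helper names are hypothetical, and the exact gradient operator and post-processing used by the authors may differ (see the paper and lib_pytorch).

```python
import numpy as np


def sobel_magnitude(gray):
    """Gradient magnitude of a 2D float image using 3x3 Sobel kernels."""
    kx = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]], dtype=np.float64)
    ky = kx.T  # vertical Sobel kernel
    padded = np.pad(gray, 1, mode="edge")
    h, w = gray.shape
    gx = np.zeros((h, w))
    gy = np.zeros((h, w))
    # Accumulate the 3x3 correlation one kernel tap at a time.
    for i in range(3):
        for j in range(3):
            window = padded[i:i + h, j:j + w]
            gx += kx[i, j] * window
            gy += ky[i, j] * window
    return np.hypot(gx, gy)


def object_level_gradient(gray, mask):
    """Keep gradients inside the object mask and rescale to [0, 1] (assumed recipe)."""
    grad = sobel_magnitude(gray) * (mask > 0)
    peak = grad.max()
    return grad / peak if peak > 0 else grad


# Toy example: a bright square, with a mask covering only the left half.
img = np.zeros((8, 8))
img[2:6, 2:6] = 1.0
mask = np.zeros((8, 8))
mask[:, :4] = 1
target = object_level_gradient(img, mask)
```

Masking is what makes the target object-level: edges and texture outside the ground-truth region contribute nothing, so the texture branch is steered toward the camouflaged object rather than the background.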

- [2022/11/18] The segmentation results on the CHAMELEON dataset are available here: DGNet (CHAMELEON) and DGNet-S (CHAMELEON).

- [2022/11/14] We converted the PyTorch model to an ONNX model, which makes hardware optimizations easier to access, and to a Huawei-OM model, which supports Huawei Ascend series AI processors. More details can be found in lib_ascend.

- [2022/11/03] We added support for the PVTv2 Transformer backbone, which again achieves exciting performance on COD benchmarks. Please enjoy it -> (link)

- [2022/08/06] Our paper has been accepted by Machine Intelligence Research (MIR).

- [2022/05/30] 🔥 We released implementations of DGNet for different AI frameworks: PyTorch-based and Jittor-based.

- [2022/05/30] Thanks to @Katsuya Hyodo for adding our model to PINTO, a repository of models inter-converted between various frameworks (e.g., TensorFlow, PyTorch, ONNX).

- [2022/05/25] Releasing the codebase of DGNet (PyTorch) and the complete COD benchmarking results (20 models).

- [2022/05/23] Creating repository.

This project is still a work in progress, and we invite everyone to help make it more accessible and useful. If you have any questions about our paper, feel free to contact us by e-mail (gepengai.ji@gmail.com, johnson111788@gmail.com, and dengpfan@gmail.com). If you use our code or evaluation toolbox in your research, please cite this paper (BibTeX).

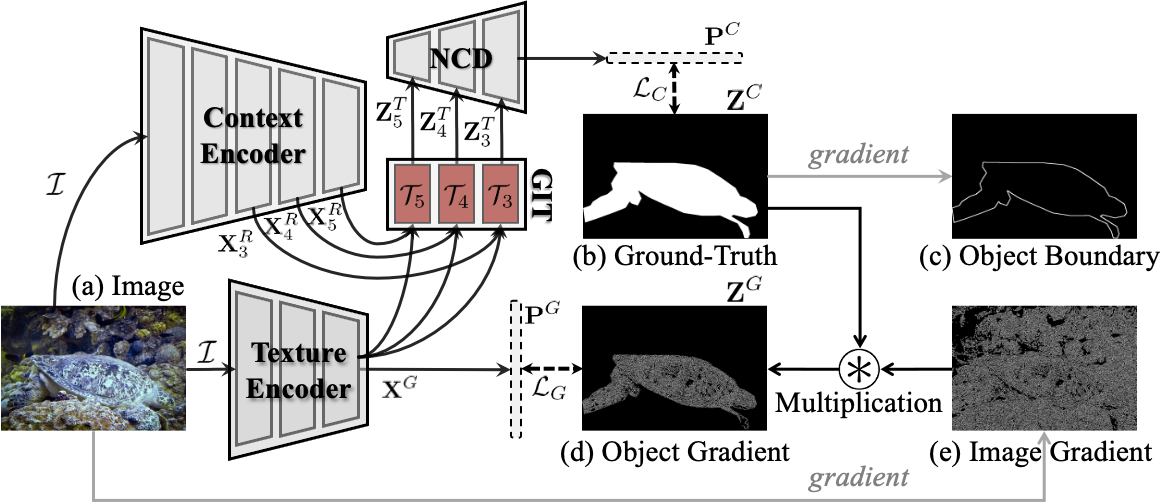

Figure 2: Overall pipeline of the proposed DGNet. It consists of two connected learning branches: a context encoder and a texture encoder.

Then, a gradient-induced transition (GIT) collaboratively aggregates the features derived from these two encoders. Finally, a neighbor connected decoder (NCD [1]) generates the prediction.
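The data flow described above can be summarized with a shape-level toy in NumPy. Every component below is a hypothetical stand-in (random 1x1 projections and nearest-neighbour resizing), not a layer from the repository; the point is only how the context branch, the texture branch, GIT-style fusion, and a decoder connect. The feature scales (1/8, 1/16, 1/32 for context and 1/8 for texture), channel widths, and 352x352 input are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)


def linear(x, c_out):
    """Hypothetical 1x1 conv with freshly drawn random weights: (C,H,W) -> (c_out,H,W)."""
    w = rng.standard_normal((c_out, x.shape[0])) / np.sqrt(x.shape[0])
    return np.einsum("oc,chw->ohw", w, x)


def project(x, c_out):
    """1x1 conv followed by ReLU."""
    return np.maximum(linear(x, c_out), 0.0)


def resize(x, h, w):
    """Nearest-neighbour resize of a (C, H, W) array to (C, h, w)."""
    rows = np.arange(h) * x.shape[1] // h
    cols = np.arange(w) * x.shape[2] // w
    return x[:, rows][:, :, cols]


def context_encoder(img):
    """Stand-in for the context branch: features at 1/8, 1/16 and 1/32 scale."""
    return [project(img[:, ::s, ::s], 64) for s in (8, 16, 32)]


def texture_encoder(img):
    """Stand-in for the texture branch: a single 1/8-scale feature map."""
    return project(img[:, ::8, ::8], 32)


def git_fuse(ctx, tex):
    """Stand-in for GIT: align the texture map to the context map, then fuse."""
    tex = resize(tex, ctx.shape[1], ctx.shape[2])
    return project(np.concatenate([ctx, tex], axis=0), 32)


def decoder(feats, h, w):
    """Stand-in for NCD: merge fused maps at the finest scale, predict a mask."""
    fh, fw = feats[0].shape[1:]
    merged = sum(resize(f, fh, fw) for f in feats)
    logits = linear(merged, 1)
    return 1.0 / (1.0 + np.exp(-resize(logits, h, w)))  # sigmoid probabilities


img = rng.random((3, 352, 352))  # toy RGB image in (C, H, W) layout
ctx_feats = context_encoder(img)
tex_feat = texture_encoder(img)
fused = [git_fuse(c, tex_feat) for c in ctx_feats]
pred = decoder(fused, 352, 352)
print([f.shape for f in fused], pred.shape)
# [(32, 44, 44), (32, 22, 22), (32, 11, 11)] (1, 352, 352)
```

Because the texture branch produces only one map, it is resized and fused with each context level separately, so every decoder input carries both semantic context and texture cues.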

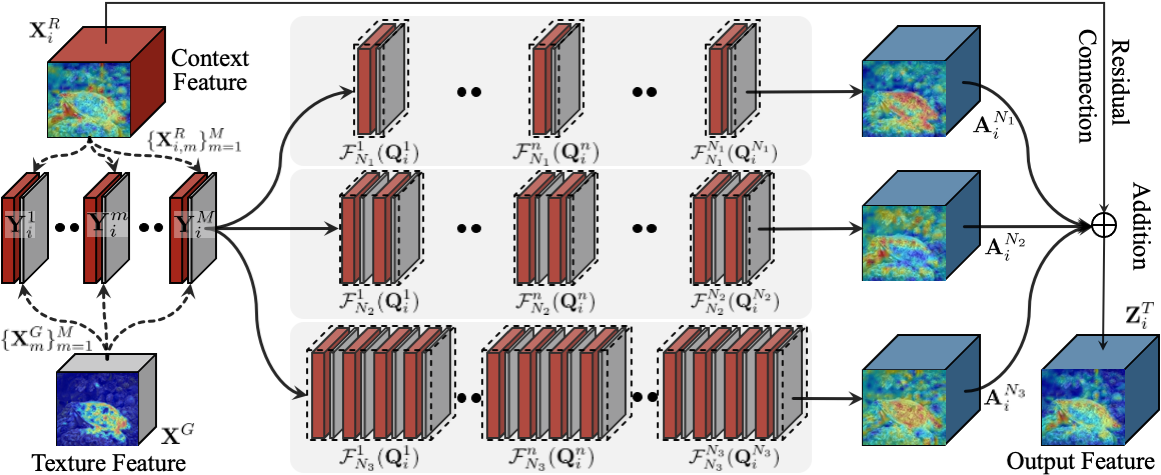

Figure 3: Illustration of the proposed gradient-induced transition (GIT).

It uses a soft grouping strategy to provide parallel nonlinear projections in multiple fine-grained sub-spaces, which enables the network to probe multi-source representations jointly.
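One way to picture the soft grouping strategy is to split the context feature into G channel groups, pair every group with the texture feature, and give each pair its own nonlinear projection, which is what a grouped 1x1 convolution does. The sketch assumes this split, concatenate, and project recipe with random weights; `soft_group_fuse`, the group count, the channel sizes, and the ReLU are illustrative assumptions rather than the published configuration.

```python
import numpy as np


def soft_group_fuse(context, texture, groups, weights):
    """Grouped fusion of context (C, H, W) and texture (T, H, W) features.

    context is split into `groups` chunks of C // groups channels. Each chunk
    is concatenated with the full texture map and projected by its own weight
    matrix weights[g] of shape (out_per_group, C // groups + T), then ReLU.
    """
    chunks = np.split(context, groups, axis=0)
    outputs = []
    for chunk, w in zip(chunks, weights):
        paired = np.concatenate([chunk, texture], axis=0)  # one sub-space
        outputs.append(np.maximum(np.einsum("oc,chw->ohw", w, paired), 0.0))
    return np.concatenate(outputs, axis=0)  # re-join the group outputs


rng = np.random.default_rng(0)
C, T, H, W, G, OUT = 64, 16, 22, 22, 4, 8
ctx = rng.standard_normal((C, H, W))
tex = rng.standard_normal((T, H, W))
ws = [rng.standard_normal((OUT, C // G + T)) for _ in range(G)]
fused = soft_group_fuse(ctx, tex, G, ws)
print(fused.shape)  # (32, 22, 22)
```

Each group sees only its own slice of the context channels plus the shared texture cue, so the G projections run in parallel and cost G * OUT * (C / G + T) weights instead of the G * OUT * (C + T) a dense layer over the full concatenation would need.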

Reference for the neighbor connected decoder (NCD):

[1] Concealed Object Detection. TPAMI, 2023.

We provide implementations for different deep learning platforms, including PyTorch and Jittor. Note that our manuscript reports only the results of the PyTorch-based DGNet.

- For PyTorch users, please refer to our lib_pytorch.

- For Jittor users, please refer to our lib_jittor.

- For Huawei Ascend users, please refer to our lib_ascend.

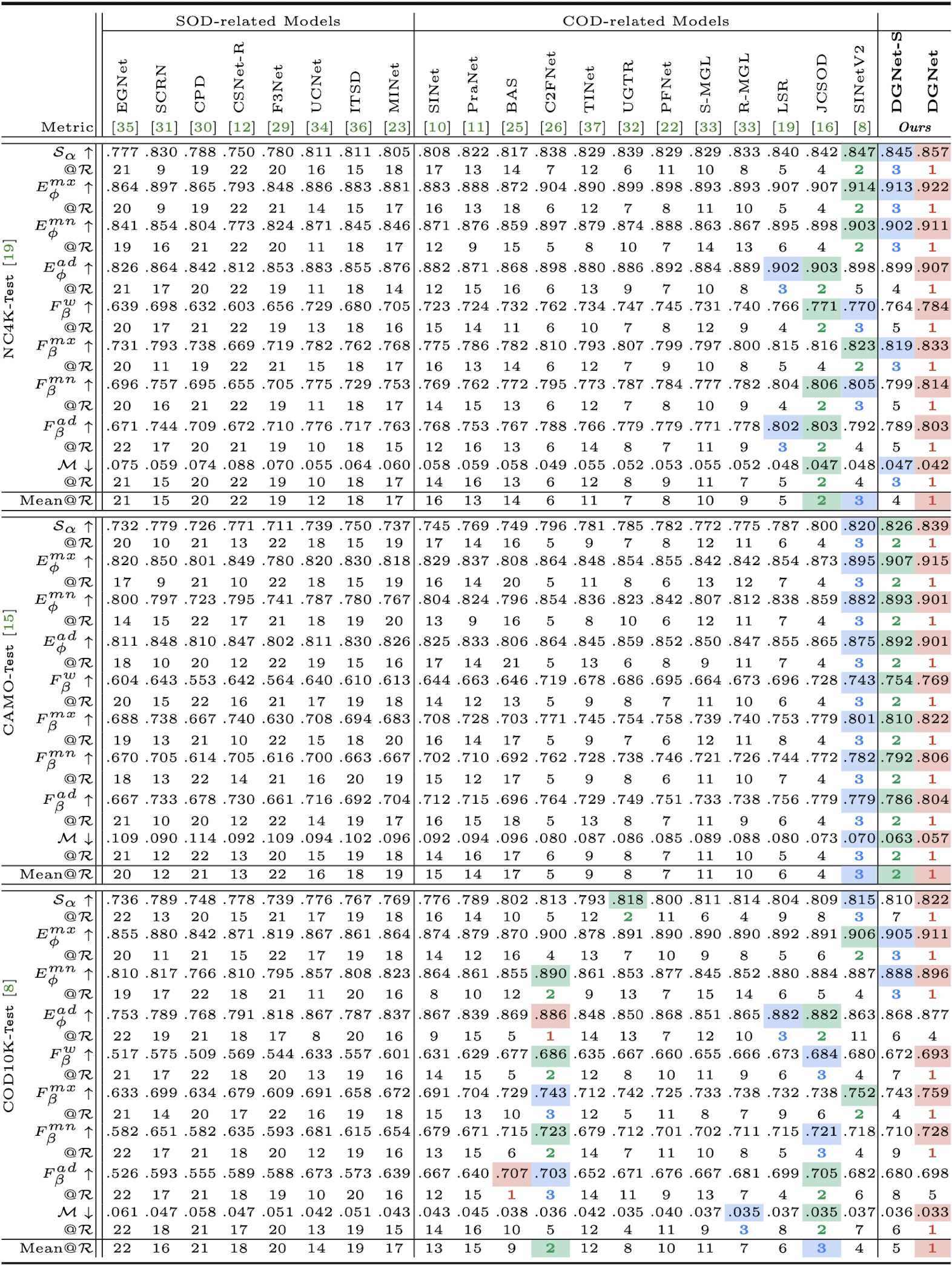

The prediction of our DGNet and DGNet-S can be found in Pytorch Results / Jitror Results. The whole benchmark results can be found at OneDrive. Here are quantitative performance comparison from three perspectives. Note that we used the matlab-based toolbox to generate the reported metrics.

Figure 4: Quantitative results in terms of full metrics for cutting-edge competitors, including 8 SOD-related and 12 COD-related, on three test datasets: NC4K-Test, CAMO-Test, and COD10K-Test. @R means the ranking of the current metric, and Mean@R indicates the mean ranking of all metrics.
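The @R and Mean@R columns can be derived from a score table once each metric's direction (higher or lower is better) is known. The sketch below ranks models per metric (rank 1 = best) and averages the ranks. The function name, model names, metric keys, and numbers are placeholders, not results from the paper, and ties are broken by input order here, which may differ from the authors' convention.

```python
def mean_rank(scores, higher_is_better):
    """Per-metric ranks (1 = best) and their mean for every model.

    scores: {model: {metric: value}}
    higher_is_better: {metric: bool}
    """
    ranks = {model: {} for model in scores}
    for metric, higher in higher_is_better.items():
        # sorted() is stable, so tied models keep their input order.
        ordered = sorted(scores, key=lambda m: scores[m][metric], reverse=higher)
        for position, model in enumerate(ordered, start=1):
            ranks[model][metric] = position
    mean = {m: sum(r.values()) / len(r) for m, r in ranks.items()}
    return ranks, mean


# Placeholder numbers for illustration only.
scores = {
    "model_a": {"s_alpha": 0.80, "f_w": 0.70, "mae": 0.060},
    "model_b": {"s_alpha": 0.82, "f_w": 0.68, "mae": 0.055},
    "model_c": {"s_alpha": 0.78, "f_w": 0.72, "mae": 0.070},
}
directions = {"s_alpha": True, "f_w": True, "mae": False}  # MAE: lower is better
ranks, mean = mean_rank(scores, directions)
print(ranks["model_b"], mean["model_a"])  # {'s_alpha': 1, 'f_w': 3, 'mae': 1} 2.0
```

Averaging ranks rather than raw scores puts metrics with different scales and directions on an equal footing, at the cost of ignoring how large the gap between neighbouring models is.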

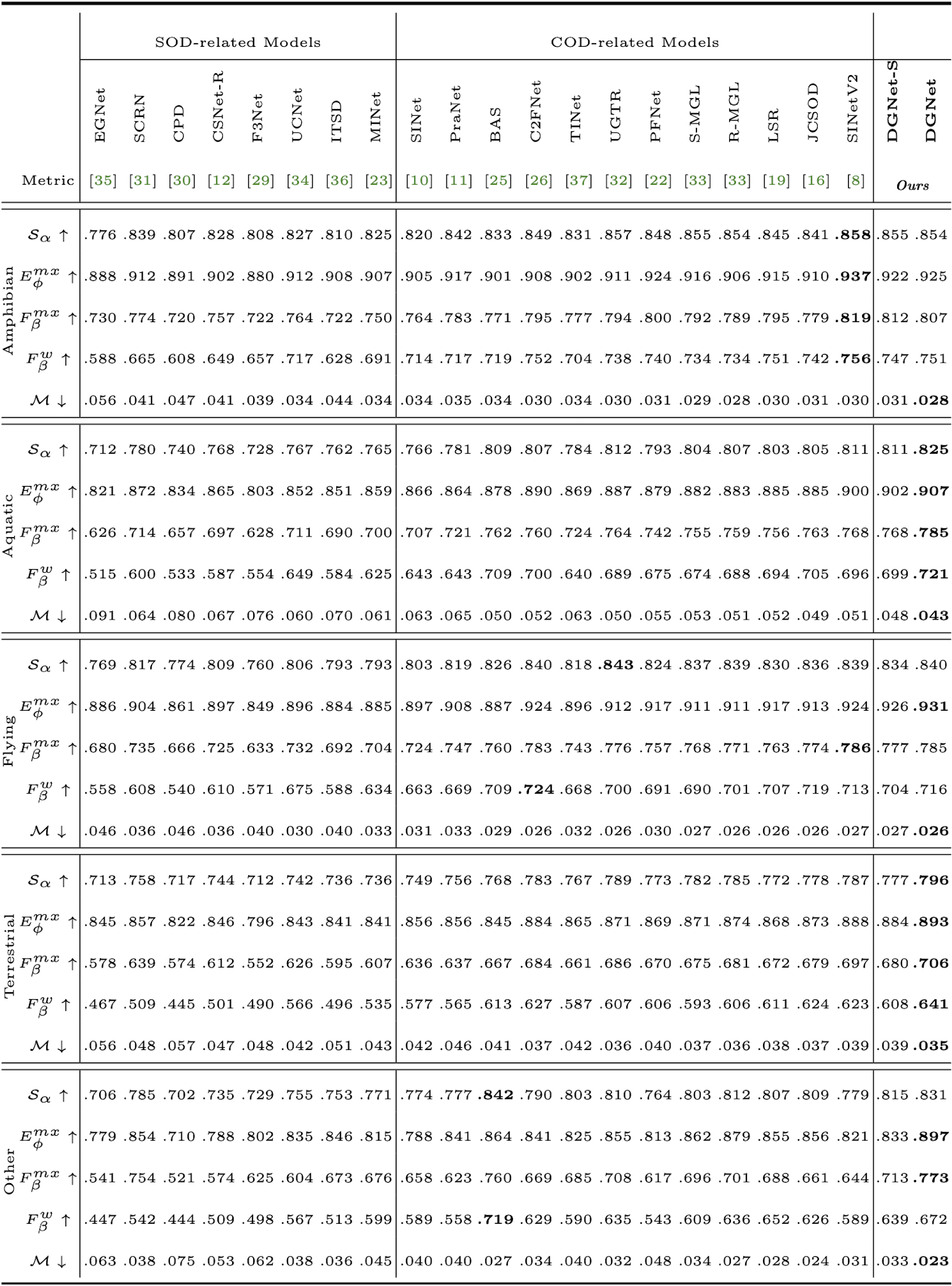

Figure 5: Super-class results (Amphibian, Aquatic, Flying, Terrestrial, and Other) on COD10K-Test for the proposed methods (DGNet and DGNet-S) and 20 other competitors. ↑ indicates that higher is better, and ↓ indicates that lower is better. The best scores are marked in bold.

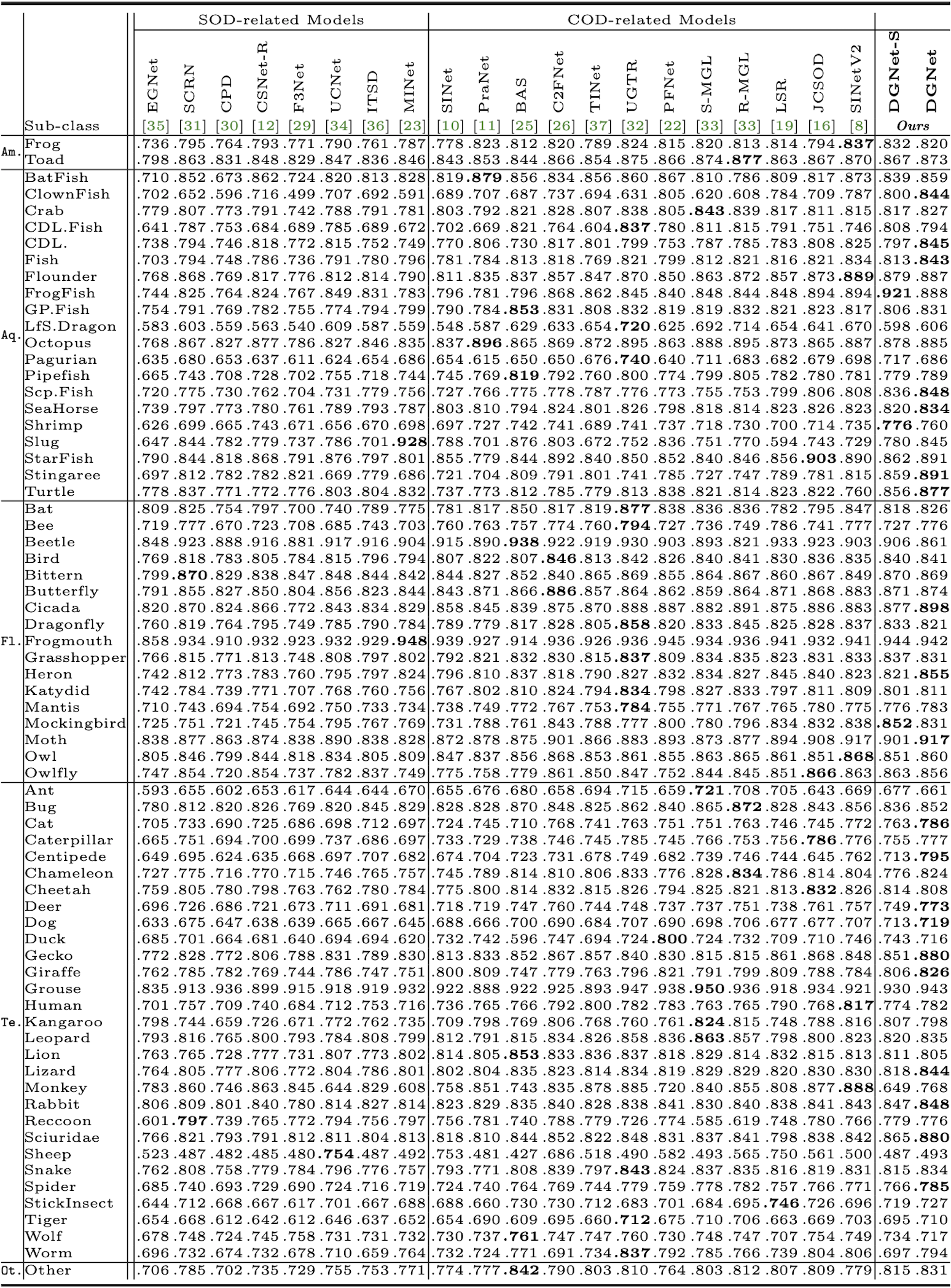

Figure 6: Sub-class results on COD10K-Test for 12 COD-related and 8 SOD-related baselines in terms of the structure measure ($\mathcal{S}_\alpha$), where Am., Aq., Fl., Te., and Ot. represent Amphibian, Aquatic, Flying, Terrestrial, and Other, respectively. CDL., GP.Fish, and LS.Dragon denote Crocodile, GhostPipeFish, and LeafySeaDragon, respectively. The best scores are marked in bold.
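Per-class tables such as Figures 5 and 6 are obtained by averaging per-image scores within each category. The sketch assumes COD10K-style file names of the form `COD10K-CAM-<super-id>-<Super-class>-<sub-id>-<Sub-class>-<image-id>.jpg` and groups placeholder per-image scores by super-class or sub-class; `per_class_mean`, the file names, and the scores are hypothetical, so adapt the pattern if your copy of the dataset is named differently. The per-image metric itself (e.g., $\mathcal{S}_\alpha$) is assumed to come from the evaluation toolbox and is not reimplemented here.

```python
import re
from collections import defaultdict

# Assumed naming pattern: COD10K-CAM-<sid>-<Super>-<cid>-<Sub>-<idx>.<ext>
NAME_RE = re.compile(
    r"COD10K-CAM-\d+-(?P<superclass>[^-]+)-\d+-(?P<subclass>[^-]+)-\d+\.\w+$"
)


def per_class_mean(image_scores, level="superclass"):
    """Average per-image scores by 'superclass' or 'subclass' parsed from names."""
    buckets = defaultdict(list)
    for name, score in image_scores.items():
        match = NAME_RE.search(name)
        if match is None:
            continue  # skip files that do not follow the assumed pattern
        buckets[match.group(level)].append(score)
    return {cls: sum(vals) / len(vals) for cls, vals in buckets.items()}


# Hypothetical file names and placeholder scores, for illustration only.
scores = {
    "COD10K-CAM-1-Aquatic-1-BatFish-2.jpg": 0.75,
    "COD10K-CAM-1-Aquatic-6-Crab-10.jpg": 0.25,
    "COD10K-CAM-3-Flying-53-Bird-3.jpg": 0.875,
}
print(per_class_mean(scores))                    # {'Aquatic': 0.5, 'Flying': 0.875}
print(per_class_mean(scores, level="subclass"))  # {'BatFish': 0.75, 'Crab': 0.25, 'Bird': 0.875}
```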

Please cite our paper if you find the work useful:

@article{ji2023gradient,
  title={Deep Gradient Learning for Efficient Camouflaged Object Detection},
  author={Ji, Ge-Peng and Fan, Deng-Ping and Chou, Yu-Cheng and Dai, Dengxin and Liniger, Alexander and Van Gool, Luc},
  journal={Machine Intelligence Research},
  pages={92--108},
  volume={20},
  number={1},
  year={2023}
}