This is the official implementation of the CoRL 2022 paper "CoBEVT: Cooperative Bird's Eye View Semantic Segmentation with Sparse Transformers".

Runsheng Xu, Zhengzhong Tu, Hao Xiang, Wei Shao, Bolei Zhou, Jiaqi Ma

UCLA, UT-Austin

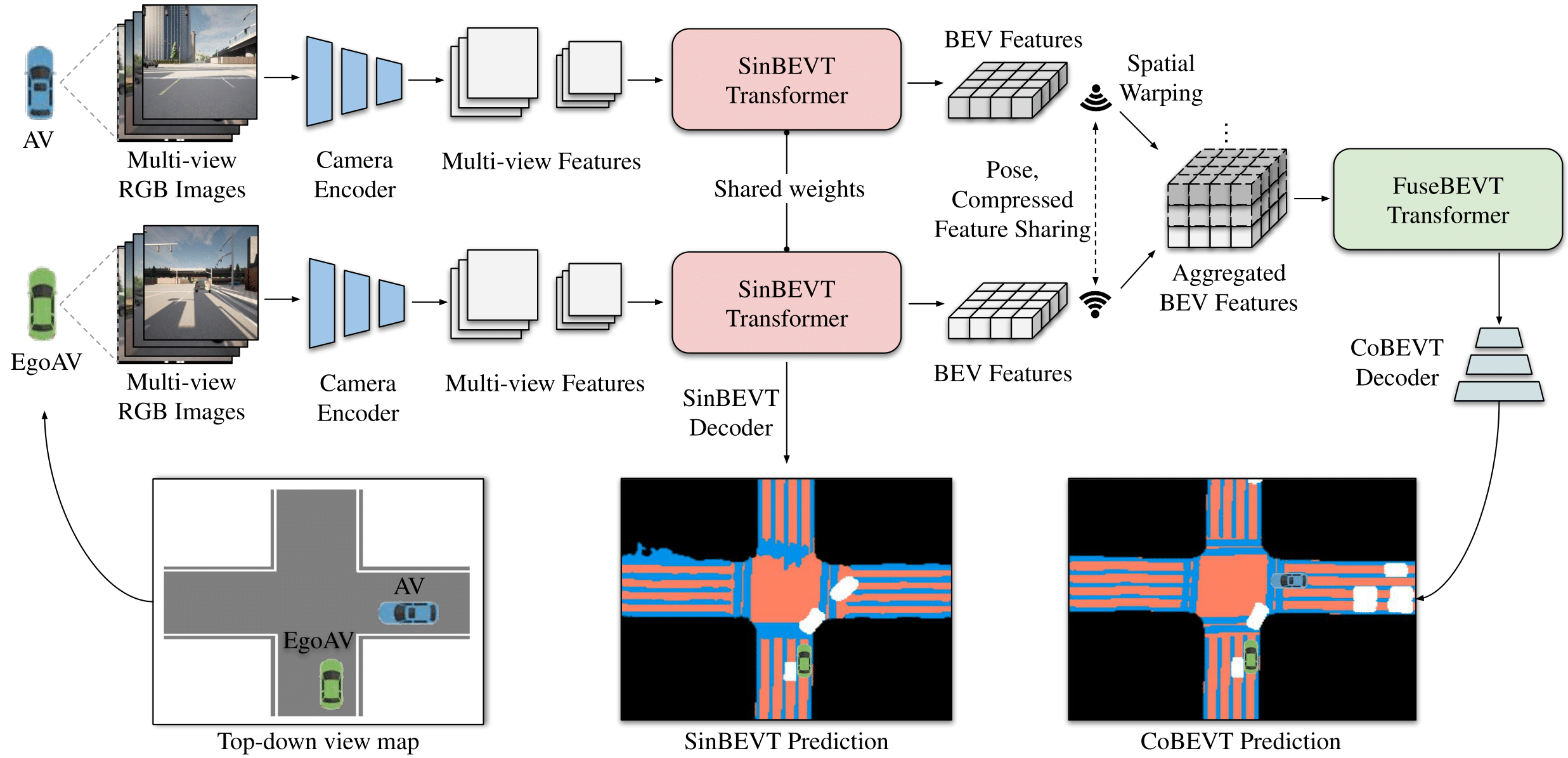

CoBEVT is the first generic multi-agent, multi-camera perception framework that can cooperatively generate BEV map predictions. Its core component, the fused axial attention (FAX) module, sparsely captures both local and global spatial interactions across views and agents. CoBEVT achieves state-of-the-art performance on both the OPV2V and nuScenes datasets while running in real time.
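To make the "local and global" distinction concrete, here is a minimal sketch in plain Python. It is not the repository's FAX implementation. It assumes that local interactions are restricted to non-overlapping windows of a BEV grid and that sparse global interactions are restricted to cells sampled at a fixed stride. The function names `window_partition` and `grid_partition` are hypothetical and chosen for illustration only.

```python
def window_partition(height, width, size):
    """Group cell coordinates into non-overlapping size x size local windows.

    Assumes height and width are divisible by size.
    """
    groups = {}
    for row in range(height):
        for col in range(width):
            key = (row // size, col // size)  # index of the enclosing window
            groups.setdefault(key, []).append((row, col))
    return list(groups.values())


def grid_partition(height, width, size):
    """Group cells sampled at a fixed stride, so each group spans the whole map.

    Assumes height and width are divisible by size.
    """
    stride_r, stride_c = height // size, width // size
    groups = {}
    for row in range(height):
        for col in range(width):
            key = (row % stride_r, col % stride_c)  # offset within the stride
            groups.setdefault(key, []).append((row, col))
    return list(groups.values())


if __name__ == "__main__":
    print("local window:", window_partition(4, 4, 2)[0])
    print("sparse grid: ", grid_partition(4, 4, 2)[0])
```

Under these assumptions, attention would be computed only among the cells of each group, so every group holds `size * size` cells regardless of the map size. Window groups cover neighbouring cells, while grid groups cover distant cells spread over the whole map.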

The pipelines for the nuScenes and OPV2V datasets are different. Please refer to the corresponding folder for details, depending on your research purpose.

👉 nuScenes Users

👉 OPV2V Users

@inproceedings{xu2022cobevt,
  author = {Runsheng Xu and Zhengzhong Tu and Hao Xiang and Wei Shao and Bolei Zhou and Jiaqi Ma},
  title = {CoBEVT: Cooperative Bird's Eye View Semantic Segmentation with Sparse Transformers},
  booktitle = {Conference on Robot Learning (CoRL)},
  year = {2022}
}

CoBEVT is built upon OpenCOOD, the first Open Cooperative Detection framework for autonomous driving.

Our nuScenes experiments used the training pipeline from CVT (CVPR 2022).