This project sets up automatic benchmark experiments in CI, triggered by GitHub comments.

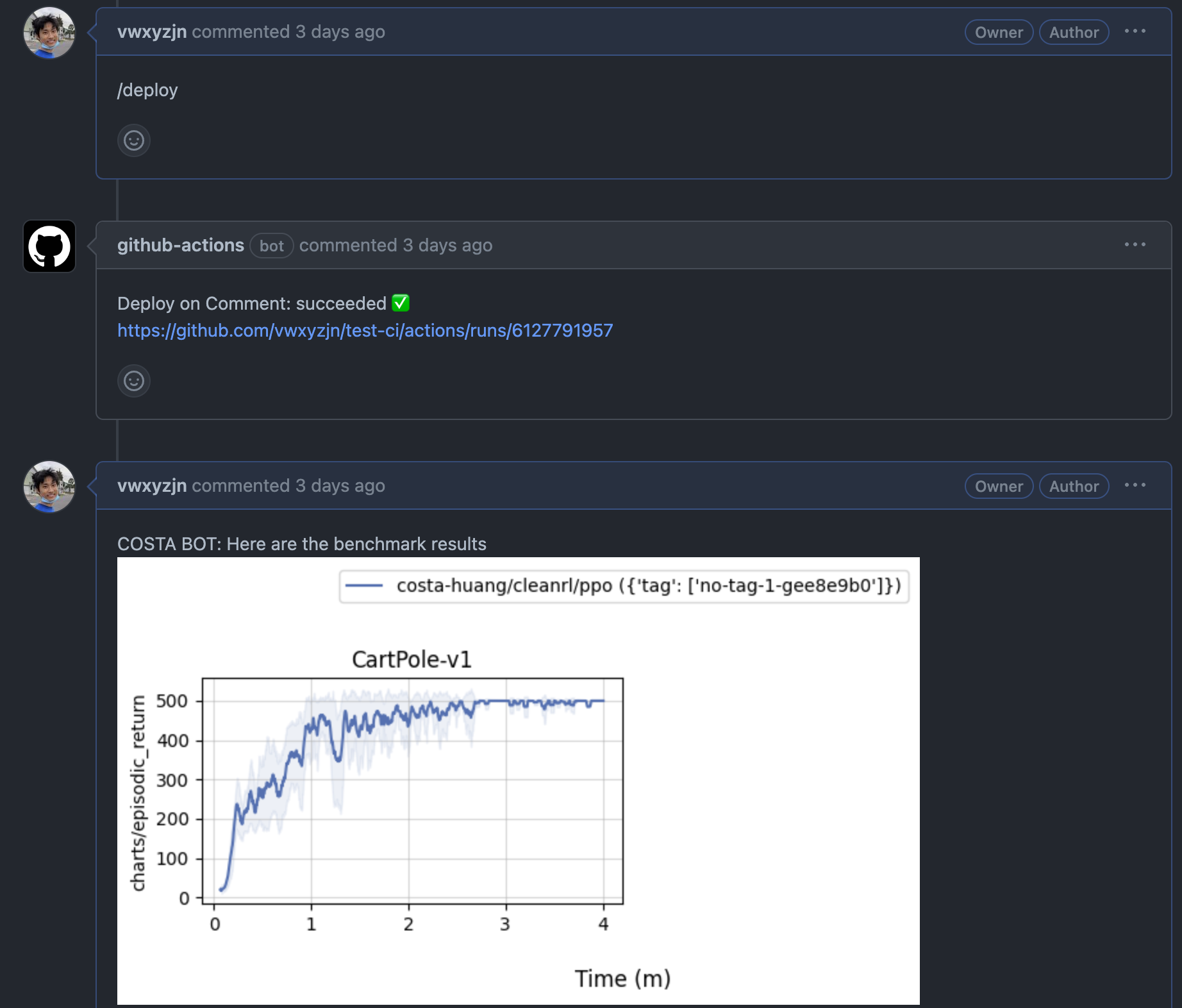

Basically, we want to type a command such as `/deploy` in an issue comment so that our CI triggers the benchmark experiments and reports the results back to the issue, as shown above.
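The trigger condition can be sketched in Python. This is a minimal illustration, assuming the standard `issue_comment` event payload that GitHub Actions writes to the file named by `GITHUB_EVENT_PATH`; the helpers `should_deploy` and `load_event` are hypothetical, and the actual workflow in `.github/workflows/blank.yml` may express the same check directly in YAML.

```python
import json
import os
from typing import Any, Dict


def should_deploy(event: Dict[str, Any], command: str = "/deploy") -> bool:
    """Return True if the comment in an `issue_comment` payload starts with `command`.

    Hypothetical helper: it only reads the `comment.body` field and compares the
    first whitespace-separated token, so "/deployment" does not match "/deploy".
    """
    body = event.get("comment", {}).get("body", "")
    tokens = body.split()
    return bool(tokens) and tokens[0] == command


def load_event() -> Dict[str, Any]:
    """Load the event payload that GitHub Actions writes to GITHUB_EVENT_PATH."""
    with open(os.environ["GITHUB_EVENT_PATH"], encoding="utf-8") as f:
        return json.load(f)


if __name__ == "__main__":
    # Example payloads containing only the fields this sketch reads.
    print(should_deploy({"comment": {"body": "/deploy"}}))
    print(should_deploy({"comment": {"body": "looks good to me"}}))
```

Matching on the first token rather than using `str.startswith` keeps similar-looking commands from accidentally starting a cluster run.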

The process is as follows:

- In `.github/workflows/blank.yml`, we trigger the CI when there is a `/deploy` comment in the issue.
- The CI runs `benchmark/benchmark_and_report.sh`.
  - The script will run `benchmark/benchmark.sh` to run the benchmark experiments on a Slurm cluster.
  - The script then parses the job IDs and runs a dependency job, `sbatch --dependency=afterany:$job_ids benchmark/post_github_comment.sbatch`, which invokes the script `benchmark/post_github_comment.py` to post the benchmark results to the issue once all the experiments have finished.
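The job-ID step in the process above can be sketched in Python. This assumes `benchmark/benchmark.sh` prints sbatch's default `Submitted batch job <id>` line for each submission; `parse_job_ids` and `dependency_command` are hypothetical helpers, not the project's actual shell code.

```python
import re
import shlex
from typing import List

# Matches sbatch's default confirmation line, e.g. "Submitted batch job 12345".
_SUBMITTED = re.compile(r"Submitted batch job (\d+)")


def parse_job_ids(sbatch_output: str) -> List[str]:
    """Extract Slurm job IDs from the combined output of several sbatch calls."""
    return _SUBMITTED.findall(sbatch_output)


def dependency_command(
    job_ids: List[str],
    script: str = "benchmark/post_github_comment.sbatch",
) -> List[str]:
    """Build the sbatch call that runs `script` after every job in `job_ids` ends.

    `afterany` lets the dependent job start once all listed jobs have terminated,
    whether they succeeded or failed; multiple IDs are joined with ':'.
    """
    if not job_ids:
        raise ValueError("no job IDs to depend on")
    return ["sbatch", f"--dependency=afterany:{':'.join(job_ids)}", script]


if __name__ == "__main__":
    output = "Submitted batch job 101\nSubmitted batch job 102\n"
    ids = parse_job_ids(output)
    print(shlex.join(dependency_command(ids)))
    # sbatch --dependency=afterany:101:102 benchmark/post_github_comment.sbatch
```

On the head node, the returned list could be passed to `subprocess.run(..., check=True)`. Using `afterany` rather than `afterok` means the results comment is still posted when some experiments fail.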

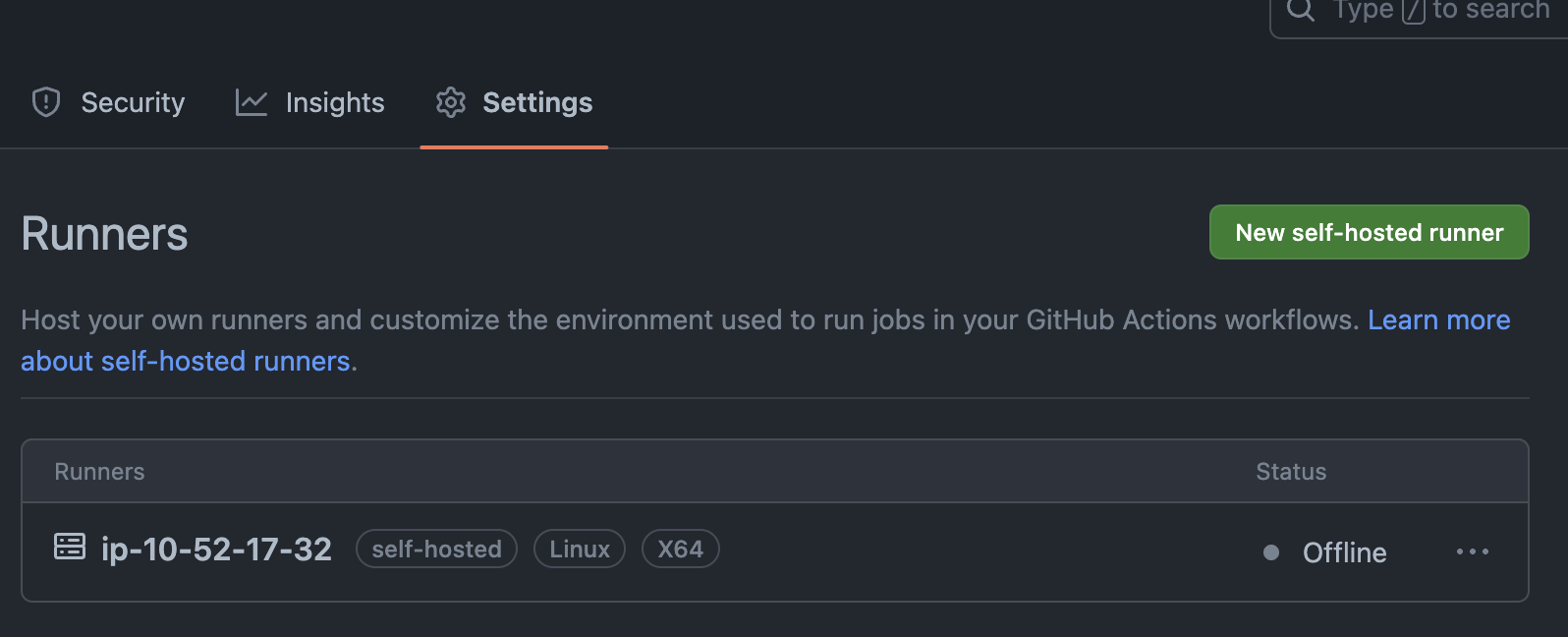

- You need a Slurm cluster, and you use its head node to set up a GitHub self-hosted runner that has access to the `sbatch` command.
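A quick preflight check could run on the self-hosted runner before any benchmark is submitted. This sketch only assumes the runner process sees the same `PATH` as a login shell on the head node; `sbatch_available` and `slurm_version` are hypothetical names.

```python
import shutil
import subprocess
from typing import Optional


def sbatch_available() -> bool:
    """Return True if an `sbatch` executable is on this runner's PATH."""
    return shutil.which("sbatch") is not None


def slurm_version() -> Optional[str]:
    """Return the output of `sbatch --version`, or None if sbatch is unavailable."""
    if not sbatch_available():
        return None
    result = subprocess.run(
        ["sbatch", "--version"], capture_output=True, text=True, check=False
    )
    return result.stdout.strip() if result.returncode == 0 else None


if __name__ == "__main__":
    print("sbatch on PATH:", sbatch_available())
    print("Slurm version:", slurm_version())
```

Failing early here gives a clearer error than a benchmark script that dies on a missing `sbatch` halfway through.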

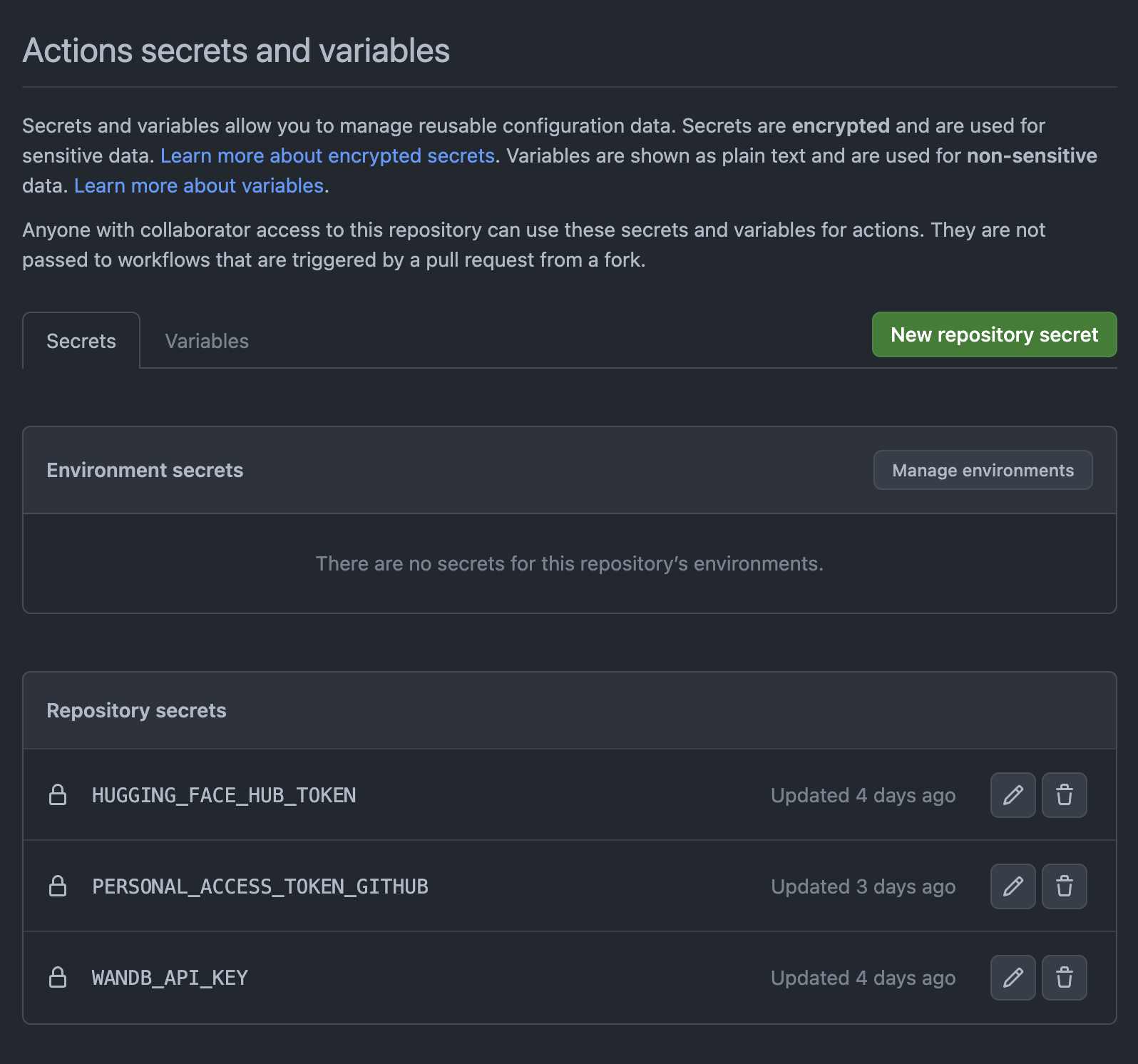

- You need to set up the required secrets, which are:
  - `HUGGING_FACE_HUB_TOKEN`: a token to access the Hugging Face Hub.
  - `PERSONAL_ACCESS_TOKEN_GITHUB`: a token to access the GitHub API. This is required because the GitHub Actions token only lasts for the duration of the workflow, which is not long enough for the benchmark experiments to finish.
  - `WANDB_API_KEY`: a token to access the Weights & Biases API.
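The sketch below shows how these secrets might be checked and how the personal access token could be used to post the results comment. It assumes the secrets are exposed as environment variables with the names listed above and uses GitHub's REST endpoint `POST /repos/{owner}/{repo}/issues/{issue_number}/comments`; `missing_secrets` and `build_comment_request` are hypothetical helpers, and the real `benchmark/post_github_comment.py` may be structured differently.

```python
import json
import os
import urllib.request
from typing import List, Mapping, Optional

# Secret names taken from the list above; assumed to be exported as env vars.
REQUIRED_SECRETS = (
    "HUGGING_FACE_HUB_TOKEN",
    "PERSONAL_ACCESS_TOKEN_GITHUB",
    "WANDB_API_KEY",
)


def missing_secrets(env: Optional[Mapping[str, str]] = None) -> List[str]:
    """Return the names of required secrets that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_SECRETS if not env.get(name)]


def build_comment_request(
    repo: str, issue_number: int, body: str, token: str
) -> urllib.request.Request:
    """Build (but do not send) a request that posts `body` as an issue comment.

    `repo` is "owner/name"; `token` should be the long-lived personal access
    token, since the workflow's own token expires when the job ends.
    """
    url = f"https://api.github.com/repos/{repo}/issues/{issue_number}/comments"
    return urllib.request.Request(
        url,
        data=json.dumps({"body": body}).encode("utf-8"),
        headers={
            "Accept": "application/vnd.github+json",
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


if __name__ == "__main__":
    print("missing secrets:", missing_secrets())
```

Sending the request is then a matter of `urllib.request.urlopen(req)` from the dependency job, long after the triggering workflow has finished.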