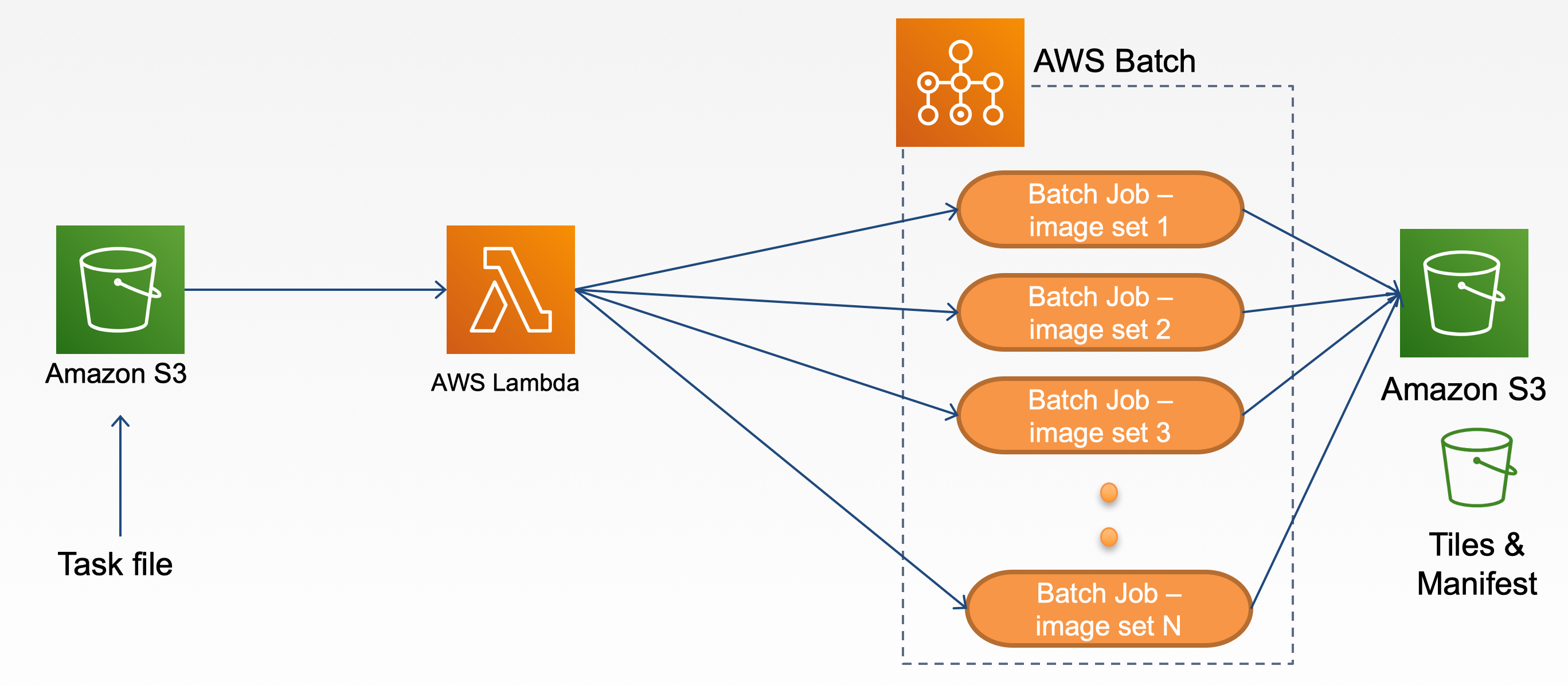

- Upload a task file to the batch bucket

- The batch bucket triggers a Lambda function

- The Lambda function reads the content of the task file and submits a batch job

- Each batch job generates tiles and manifests from the original image and uploads them to the target S3 bucket
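The trigger-and-submit steps above can be sketched in Python. This is a minimal illustration, not the project's actual `index.py`: it assumes the task file is a flat JSON object whose non-job keys are passed to the container as environment variables, and the helper names `extract_s3_object` and `build_submit_job_kwargs` are hypothetical.

```python
import json
from urllib.parse import unquote_plus

# Keys treated as top-level Batch job settings. Every other key in the task
# file is forwarded as a container environment variable (an assumption).
JOB_KEYS = ("jobName", "jobQueue", "jobDefinition", "command")


def extract_s3_object(event):
    """Return (bucket, key) for the first record of an S3 event notification."""
    record = event["Records"][0]["s3"]
    # Object keys arrive URL-encoded in S3 event notifications.
    return record["bucket"]["name"], unquote_plus(record["object"]["key"])


def build_submit_job_kwargs(task):
    """Translate a flat task dict into keyword arguments for Batch submit_job."""
    environment = [
        {"name": name, "value": str(value)}
        for name, value in task.items()
        if name not in JOB_KEYS
    ]
    return {
        "jobName": task["jobName"],
        "jobQueue": task["jobQueue"],
        "jobDefinition": task["jobDefinition"],
        "containerOverrides": {
            "command": [task["command"]],
            "environment": environment,
        },
    }


def lambda_handler(event, context):
    """Read the uploaded task file and submit one Batch job for it."""
    import boto3  # imported lazily so the helpers above run without boto3

    bucket, key = extract_s3_object(event)
    body = boto3.client("s3").get_object(Bucket=bucket, Key=key)["Body"].read()
    task = json.loads(body)
    response = boto3.client("batch").submit_job(**build_submit_job_kwargs(task))
    return response["jobId"]
```

Keeping the event parsing and the argument building in separate pure functions makes them easy to check locally before deploying the handler.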

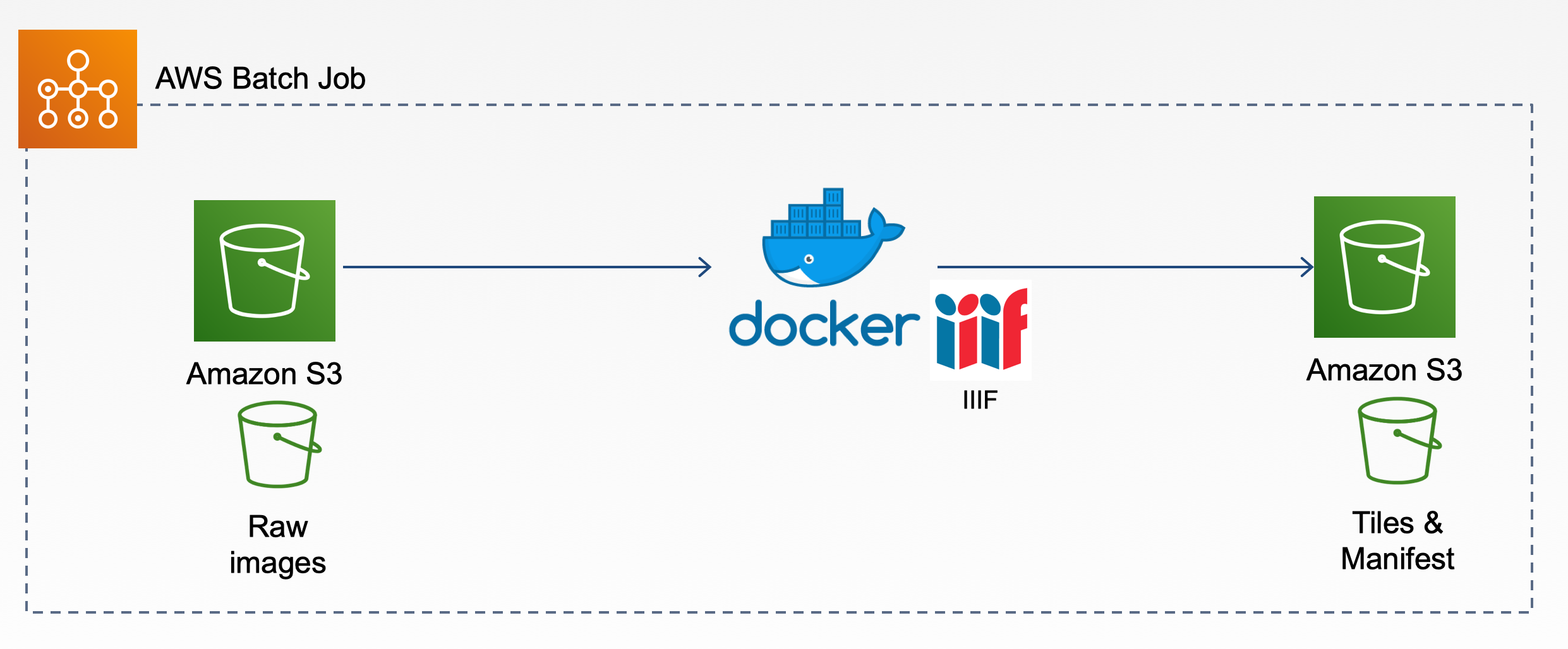

- Pull raw original files from the S3 bucket

- Generate tiles and manifests

- Upload to target S3 bucket

Click Next to continue

| Name | Description |
|---|---|
| Stack name | any valid name |
| BatchRepositoryName | any valid name for Batch process repository |
| DockerImage | any valid Docker image. E.g. yinlinchen/vtl:iiifs3_v3 |
| JDName | any valid name for Job definition |
| JQName | any valid name for Job queue |
| LambdaFunctionName | any valid name for Lambda function |
| LambdaRoleName | any valid name for Lambda role |
| S3BucketName | any valid name for S3 bucket |

Leave it as is and click Next

Make sure all checkboxes under the Capabilities section are checked

Click Create stack

Run the following in your shell to deploy the application to AWS:

`aws cloudformation create-stack --stack-name awsiiifs3batch --template-body file://awsiiifs3batch.template --capabilities CAPABILITY_NAMED_IAM`

See "Cloudformation: create stack" for the `--parameters` option.
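To override the template parameters from the command line, the values can be written to a JSON file in CloudFormation's `ParameterKey`/`ParameterValue` format. The sketch below generates such a file. The parameter names come from the table above, every value except the Docker image is a placeholder, and the helper name `to_cloudformation_parameters` and the file name `parameters.json` are assumptions.

```python
import json


def to_cloudformation_parameters(values):
    """Convert {name: value} into CloudFormation's ParameterKey/ParameterValue list."""
    return [
        {"ParameterKey": key, "ParameterValue": str(value)}
        for key, value in values.items()
    ]


# Placeholder values; replace them with names that suit your account.
parameters = to_cloudformation_parameters({
    "BatchRepositoryName": "batch-job-iiif",
    "DockerImage": "yinlinchen/vtl:iiifs3_v3",
    "JDName": "iiif-job-definition",
    "JQName": "iiif-job-queue",
    "LambdaFunctionName": "iiif-submit-job",
    "LambdaRoleName": "iiif-lambda-role",
    "S3BucketName": "my-iiif-batch-bucket",
})

# Write the list to a file that the AWS CLI can read.
with open("parameters.json", "w") as handle:
    json.dump(parameters, handle, indent=2)
```

The resulting file can then be passed to the `create-stack` command by appending `--parameters file://parameters.json`.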

- Prepare task.json

- Prepare the dataset and upload it to the S3 `SRC_BUCKET` bucket

- Upload task.json to the S3 bucket created after the deployment.

- Go to `AWS_BUCKET_NAME` to see the end results for generated IIIF tiles and manifests.

- Test manifests in Mirador (Note: you need to configure S3 access permission and CORS settings)

- See our Live Demo
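For the CORS settings mentioned in the Mirador step, a configuration along the following lines is one possible starting point. It is a hedged sketch, not this project's configuration: the allowed methods, headers, and max age are assumptions, the helper name `build_cors_configuration` is hypothetical, and the wildcard origin should be narrowed for anything beyond testing.

```python
import json


def build_cors_configuration(allowed_origins):
    """Build a read-only S3 CORS configuration for the given origins."""
    return {
        "CORSRules": [
            {
                "AllowedOrigins": list(allowed_origins),
                "AllowedMethods": ["GET", "HEAD"],  # viewers only need to read
                "AllowedHeaders": ["*"],
                "MaxAgeSeconds": 3000,
            }
        ]
    }


cors = build_cors_configuration(["*"])
print(json.dumps(cors, indent=2))

# With boto3 installed and credentials configured, the dict could be applied as:
#   boto3.client("s3").put_bucket_cors(
#       Bucket="AWS_BUCKET_NAME", CORSConfiguration=cors)
```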

To delete the sample application that you created, use the AWS CLI. Assuming you used your project name for the stack name, you can run the following:

`aws cloudformation delete-stack --stack-name stackname`

- Compute Environment: Type: `EC2`, MinvCpus: `0`, MaxvCpus: `128`, InstanceTypes: `optimal`

- Job Definition: Type: `container`, Image: `DockerImage`, Vcpus: `2`, Memory: `2000`

- Job Queue: Priority: `10`

- SRC_BUCKET: For raw images and CSV files to be processed

- Raw image files

- CSV files

- AWS_BUCKET_NAME: For saving tile and manifest files

- index.py: Submits a batch job when a task file is uploaded to an S3 bucket

- example: task.json

| Name | Description |
|---|---|
| jobName | Batch job name |
| jobQueue | Batch job queue name |
| jobDefinition | Batch job definition name |
| command | "./createiiif.sh" |
| AWS_REGION | AWS region, e.g. us-east-1 |
| SRC_BUCKET | S3 bucket that stores the images to be processed (source S3 bucket) |
| AWS_BUCKET_NAME | S3 bucket that stores the generated tile images and manifest files (target S3 bucket) |
| ACCESS_DIR | Path to the image folder under the SRC_BUCKET |
| CSV_NAME | A CSV file with the titles and descriptions of the images |
| CSV_PATH | Path to the CSV folder under the SRC_BUCKET |
| DEST_BUCKET | Folder inside AWS_BUCKET_NAME that stores the generated tile images and manifest files |
| DEST_URL | Root URL for accessing the manifests, e.g. https://s3.amazonaws.com/AWS_BUCKET_NAME |
| UPLOAD_BOOL | Default is false. Set it to true if you want to use the upload_to_s3 iiifS3 feature with your customized Docker image. |
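As an illustration of the fields in the table, the following sketch writes a `task.json` with placeholder values. The exact layout of the real task file (a flat object versus a nested environment list) is not shown in this section, so the flat layout is an assumption, as are all bucket, folder, and job names and the hypothetical helper `missing_keys`.

```python
import json

# Every field listed in the table above.
REQUIRED_KEYS = [
    "jobName", "jobQueue", "jobDefinition", "command", "AWS_REGION",
    "SRC_BUCKET", "AWS_BUCKET_NAME", "ACCESS_DIR", "CSV_NAME", "CSV_PATH",
    "DEST_BUCKET", "DEST_URL", "UPLOAD_BOOL",
]


def missing_keys(task):
    """Return the table fields that are absent from the task dict."""
    return [key for key in REQUIRED_KEYS if key not in task]


target_bucket = "my-target-bucket"  # placeholder for AWS_BUCKET_NAME

task = {
    "jobName": "iiif-sample-job",            # placeholder
    "jobQueue": "iiif-job-queue",            # must match the deployed JQName
    "jobDefinition": "iiif-job-definition",  # must match the deployed JDName
    "command": "./createiiif.sh",
    "AWS_REGION": "us-east-1",
    "SRC_BUCKET": "my-source-bucket",        # placeholder
    "AWS_BUCKET_NAME": target_bucket,
    "ACCESS_DIR": "images/sample",           # placeholder folder in SRC_BUCKET
    "CSV_NAME": "sample.csv",                # placeholder
    "CSV_PATH": "csv",                       # placeholder folder in SRC_BUCKET
    "DEST_BUCKET": "iiif",                   # placeholder folder in the target
    "DEST_URL": f"https://s3.amazonaws.com/{target_bucket}",
    "UPLOAD_BOOL": "false",
}

# Fail early if a field from the table was forgotten.
if missing_keys(task):
    raise ValueError(f"task is missing: {missing_keys(task)}")

with open("task.json", "w") as handle:
    json.dump(task, handle, indent=2)
```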

- iiif_s3_docker

- Image at Docker Hub: yinlinchen/vtl:iiifs3_v3