Code for reproducing results in the paper

The code was tested with Python 3.6, PyTorch 0.4.1, and torchvision 0.2.1.

To install the dependencies, run:

pip install -r requirements.txt

First, go to the experiments directory:

cd experiments

Then run one of the following commands.

CelebA, 256x256, uniform dequantization:

python -u celeba.py --config configs/celebA256-conv.json --batch-size 40 --batch-steps 10 --image-size 256 --n_bits 5 --dequant uniform --data_path '<data_path>' --model_path '<model_path>'

CelebA, 256x256, variational dequantization:

python -u celeba.py --config configs/celebA256-vdq.json --batch-size 40 --batch-steps 10 --image-size 256 --n_bits 5 --dequant variational --data_path '<data_path>' --model_path '<model_path>' --train_k 2

LSUN, 128x128 (category church_outdoor, tower, or bedroom), uniform dequantization:

python -u lsun.py --config configs/lsun128-vdq.json --category [church_outdoor|tower|bedroom] --image-size 128 --batch-size 160 --batch-steps 16 --data_path '<data_path>' --model_path '<model_path>' --dequant uniform --n_bits 5

LSUN, 128x128 (category church_outdoor, tower, or bedroom), variational dequantization:

python -u lsun.py --config configs/lsun128-vdq.json --category [church_outdoor|tower|bedroom] --image-size 128 --batch-size 160 --batch-steps 16 --data_path '<data_path>' --model_path '<model_path>' --dequant variational --n_bits 5 --train_k 3

Remember to set up the paths for data and models with the arguments --data_path and --model_path.
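All of the commands above pass --n_bits 5 and choose a --dequant mode. The sketch below illustrates what 5-bit inputs with uniform dequantization usually mean in flow models, following the convention popularized by Glow: keep the 5 most significant bits of each 8-bit pixel, then add U[0, 1) noise so each discrete bin becomes a continuous interval. It is an assumption that celeba.py and lsun.py preprocess exactly this way (some implementations also shift the result to [-0.5, 0.5)), and the helper names reduce_bits and uniform_dequantize are hypothetical. Variational dequantization replaces the uniform noise with samples from a learned distribution and is not sketched here.

```python
import random
from typing import Optional


def reduce_bits(pixel: int, n_bits: int = 5) -> int:
    """Hypothetical helper: keep the n_bits most significant bits of an 8-bit pixel (0..255)."""
    if not 0 <= pixel <= 255:
        raise ValueError("expected an 8-bit pixel value in 0..255")
    # Equivalent to floor(pixel / 2 ** (8 - n_bits)); result lies in 0..2**n_bits - 1.
    return pixel >> (8 - n_bits)


def uniform_dequantize(pixel: int, n_bits: int = 5, rng: Optional[random.Random] = None) -> float:
    """Hypothetical helper: map a pixel to a continuous value in [0, 1) (assumed convention)."""
    if rng is None:
        rng = random.Random()
    n_bins = 2 ** n_bits
    q = reduce_bits(pixel, n_bits)
    # Uniform noise in [0, 1) spreads the discrete bin q over [q, q + 1),
    # which is then rescaled to [q / n_bins, (q + 1) / n_bins).
    return (q + rng.random()) / n_bins


rng = random.Random(0)
print(reduce_bits(255))                   # 31, the largest 5-bit value
print(uniform_dequantize(255, rng=rng))   # a value in [31/32, 1)
```

Because every 8-bit value maps to one of 32 bins, the model only has to assign density within 32 intervals per channel, which is the usual motivation for training on 5-bit images.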

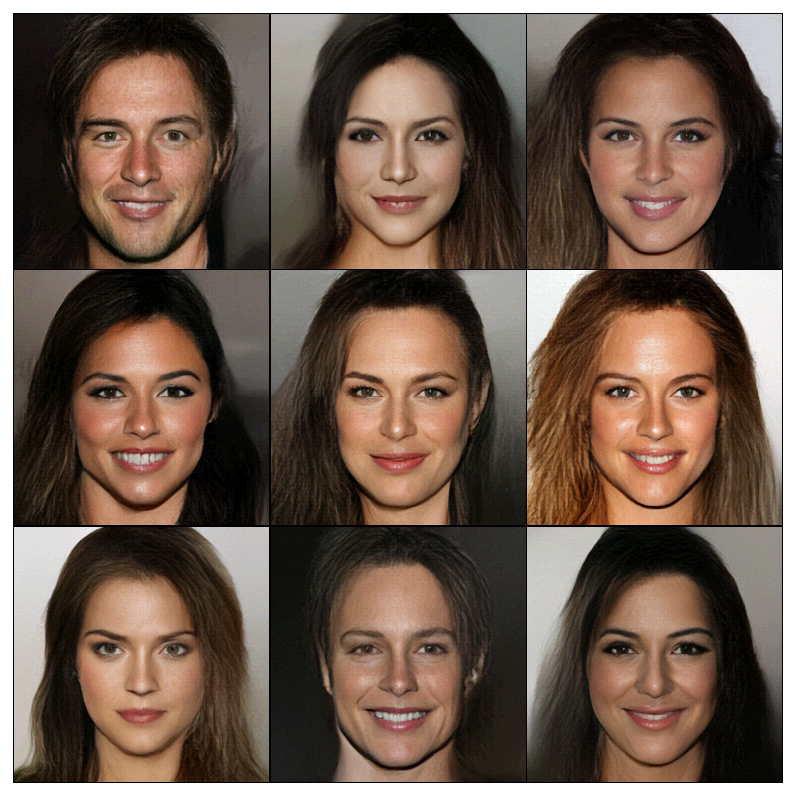

Images sampled after each epoch are stored in the directory <model_path>/images.

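The --batch-steps argument enables gradient accumulation, trading speed for memory: each batch is processed as several smaller segments whose gradients are accumulated before a single parameter update.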
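As a minimal sketch of why gradient accumulation saves memory without changing the update, the toy example below fits y = w * x + b by gradient descent and computes the full-batch gradient one segment at a time. It uses plain Python instead of PyTorch to stay self-contained; the function names are hypothetical and this is not the repository's training loop. In PyTorch the same pattern is usually written by calling loss.backward() on each segment's loss scaled by its share of the batch (gradients add into .grad) and calling optimizer.step() once per batch.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y) pair for a toy 1-D regression


def mse_grad(w: float, b: float, batch: List[Sample]) -> Tuple[float, float]:
    """Gradient of the mean squared error of y_hat = w * x + b over `batch`."""
    n = len(batch)
    gw = sum(2.0 * (w * x + b - y) * x for x, y in batch) / n
    gb = sum(2.0 * (w * x + b - y) for x, y in batch) / n
    return gw, gb


def accumulated_grad(w: float, b: float, batch: List[Sample], batch_steps: int) -> Tuple[float, float]:
    """Same gradient as mse_grad(w, b, batch), computed one segment at a time."""
    if len(batch) % batch_steps != 0:
        raise ValueError("batch size must be divisible by batch_steps")
    seg = len(batch) // batch_steps
    gw_acc, gb_acc = 0.0, 0.0
    for step in range(batch_steps):
        # Only this slice needs to be processed at once, which is where memory is saved.
        segment = batch[step * seg:(step + 1) * seg]
        gw, gb = mse_grad(w, b, segment)
        # Weight each segment's mean-loss gradient by its share of the full batch.
        gw_acc += gw * len(segment) / len(batch)
        gb_acc += gb * len(segment) / len(batch)
    return gw_acc, gb_acc


def sgd_step(w: float, b: float, batch: List[Sample], batch_steps: int, lr: float = 1e-4) -> Tuple[float, float]:
    """One parameter update per full batch, regardless of batch_steps."""
    gw, gb = accumulated_grad(w, b, batch, batch_steps)
    return w - lr * gw, b - lr * gb


batch = [(float(x), 3.0 * x + 1.0) for x in range(40)]
print(mse_grad(0.5, 0.0, batch))
print(accumulated_grad(0.5, 0.0, batch, batch_steps=10))  # same values up to rounding
```

The price is speed: ten small passes replace one large pass, so the hardware is used less efficiently, but peak memory scales with the segment rather than the whole batch.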

The size of each segment of a data batch is batch-size / (num_gpus * batch-steps).
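The formula can be checked against the settings used in the commands above. The helper below is hypothetical; it assumes the batch must split evenly across GPUs and accumulation steps and raises an error otherwise, which may not match how the training scripts handle uneven splits.

```python
def segment_size(batch_size: int, num_gpus: int, batch_steps: int) -> int:
    """Images per forward/backward pass on each GPU: batch_size / (num_gpus * batch_steps)."""
    parts = num_gpus * batch_steps
    if batch_size % parts != 0:
        # Assumption: require an exact split rather than guessing how remainders are handled.
        raise ValueError(f"batch size {batch_size} is not divisible by {parts}")
    return batch_size // parts


print(segment_size(40, 1, 10))   # CelebA commands on 1 GPU: 4 images per segment
print(segment_size(160, 2, 16))  # LSUN commands on 2 GPUs: 5 images per segment
```

With the LSUN settings on 4 GPUs, 160 / 64 is not an integer, so --batch-steps (or --batch-size) would need adjusting in that case.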