This repository contains an implementation of TR3D, a 3D object detection method introduced in the paper:

TR3D: Towards Real-Time Indoor 3D Object Detection

Danila Rukhovich, Anna Vorontsova, Anton Konushin

Samsung Research

https://arxiv.org/abs/2302.02858

This implementation of TR3D accounts for rotation about all three axes, i.e. yaw, pitch, and roll.
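
To make the three rotation angles concrete, here is a minimal sketch of how yaw, pitch, and roll can be combined into one rotation matrix. It assumes the common Z-Y-X convention (yaw about z, then pitch about y, then roll about x) with angles in radians; the convention actually used in this repository may differ, and the function names `rotation_matrix` and `rotate` are hypothetical, not part of the codebase.

```python
import math


def rotation_matrix(yaw, pitch, roll):
    """Return the 3x3 matrix R = Rz(yaw) * Ry(pitch) * Rx(roll).

    Assumption: Z-Y-X (yaw-pitch-roll) convention, angles in radians.
    """
    cy, sy = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cr, sr = math.cos(roll), math.sin(roll)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]


def rotate(R, point):
    """Apply the 3x3 rotation matrix R to a 3D point given as a list."""
    return [sum(R[i][j] * point[j] for j in range(3)) for i in range(3)]


if __name__ == "__main__":
    # A 90 degree yaw maps the x axis onto the y axis (up to rounding).
    R = rotation_matrix(math.pi / 2, 0.0, 0.0)
    print(rotate(R, [1.0, 0.0, 0.0]))
```

With pitch and roll set to zero this reduces to the yaw-only rotation that indoor detectors commonly assume.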

You can install all required packages manually. This implementation is based on the mmdetection3d framework.

Please refer to the original installation guide getting_started.md, including MinkowskiEngine installation, replacing open-mmlab/mmdetection3d with samsunglabs/tr3d.

Most of the TR3D-related code is located in the following files:

detectors/mink_single_stage.py,

detectors/tr3d_ff.py,

dense_heads/tr3d_head.py,

necks/tr3d_neck.py.

Installation trials

| Software | Version | Status |
|---|---|---|
| CUDA | 11.3 | Cannot install: driver version mismatch |
| CUDA | 11.7 | Cannot install: driver version mismatch |
| CUDA | 12.3 | Working |
| PyTorch | 1.12.1+cu113 | Working |
| cudatoolkit | 11.7 | Working |
| cudatoolkit | 11.3 | Cannot install: unmet dependencies |
| Minkowski Engine | 0.5.3 | Working |
| gcc and g++ (important) | 9.5.0 | Working |
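
Since the compiler version is flagged as important, a quick check before building MinkowskiEngine may save a failed build. The sketch below reads the version reported by `gcc -dumpversion` and `g++ -dumpversion` and tests whether the major version falls in the 9 to 10 range; treating 9 as the lower bound is an assumption taken from the table, and the helper names are hypothetical.

```python
import shutil
import subprocess


def major_version(dumpversion_output):
    """Parse the major version from `gcc -dumpversion` output such as '9' or '9.5.0'."""
    text = dumpversion_output.strip()
    if not text:
        return None
    head = text.split(".")[0]
    return int(head) if head.isdigit() else None


def compiler_is_supported(dumpversion_output, min_major=9, max_major=10):
    """True if the major version is within [min_major, max_major] (assumed range)."""
    major = major_version(dumpversion_output)
    return major is not None and min_major <= major <= max_major


def dump_version(tool):
    """Run `<tool> -dumpversion`; return its output, or None if the tool is not on PATH."""
    if shutil.which(tool) is None:
        return None
    result = subprocess.run([tool, "-dumpversion"], capture_output=True, text=True)
    return result.stdout


if __name__ == "__main__":
    for tool in ("gcc", "g++"):
        output = dump_version(tool)
        if output is None:
            print(f"{tool}: not found on PATH")
        else:
            status = "ok" if compiler_is_supported(output) else "outside the 9-10 range"
            print(f"{tool} {output.strip()}: {status}")
```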

Create a conda env with Python 3.8:

conda create -n tr3d python=3.8

Install the package versions listed above. You may refer to https://github.com/SamsungLabs/tr3d/blob/main/docker/Dockerfile for the versions of all the packages.

Set gcc and g++ to version 9.5.0 (or up to 10).

Make sure nvcc is installed:

nvcc --version

Clone the Minkowski Engine repository and follow the instructions below:

export CUDA_HOME=/usr/local/cuda-11.x

pip install -U git+https://github.com/NVIDIA/MinkowskiEngine -v --no-deps --install-option="--blas_include_dirs=${CONDA_PREFIX}/include" --install-option="--blas=openblas"

# Or if you want local MinkowskiEngine

git clone https://github.com/NVIDIA/MinkowskiEngine.git

cd MinkowskiEngine

python setup.py install --blas_include_dirs=${CONDA_PREFIX}/include --blas=openblas

The command that worked for my system (Ubuntu 20.04 with CUDA 12.1):

pip install -U git+https://github.com/NVIDIA/MinkowskiEngine -v --no-deps

Please see getting_started.md for basic usage examples. We follow the mmdetection3d data preparation protocol described in scannet, sunrgbd, and s3dis.

For Physion data preparation, refer to physion.

Setting up config files

One can refer to configs/tr3d/tr3d_physion_fly_config.py as a template.

Training

To start training, run train with TR3D configs:

python tools/train.py configs/tr3d/tr3d_physion_fly_config.py

Note: To control how often evaluation runs, change interval at this line.

To train without validation:

python tools/train.py configs/tr3d/tr3d_physion_fly_config.py --no-validate

Testing

Test a pre-trained model using test with TR3D configs:

python tools/test.py configs/tr3d/tr3d_physion_fly_config.py \
    work_dirs/<location of trained model>.pth --eval mAP

Visualization

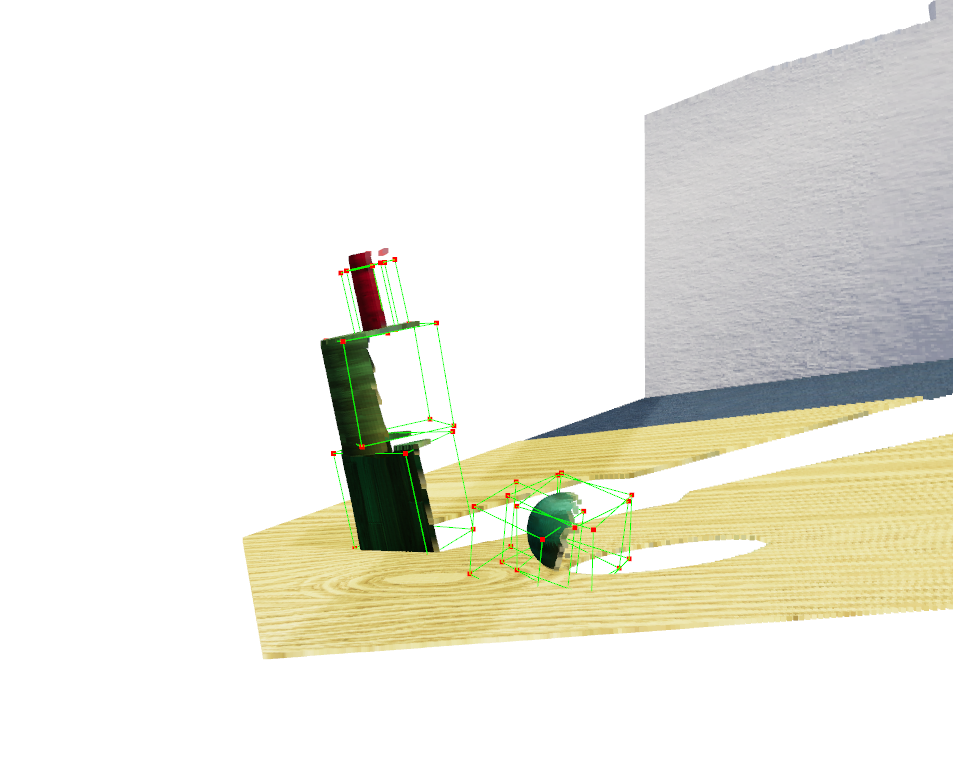

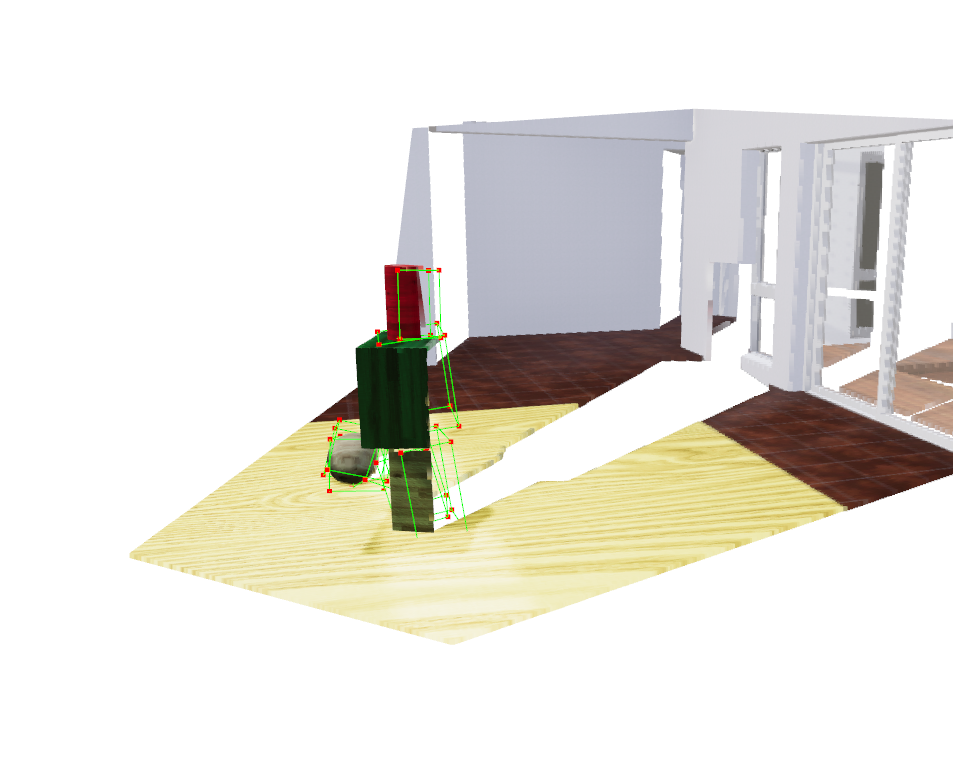

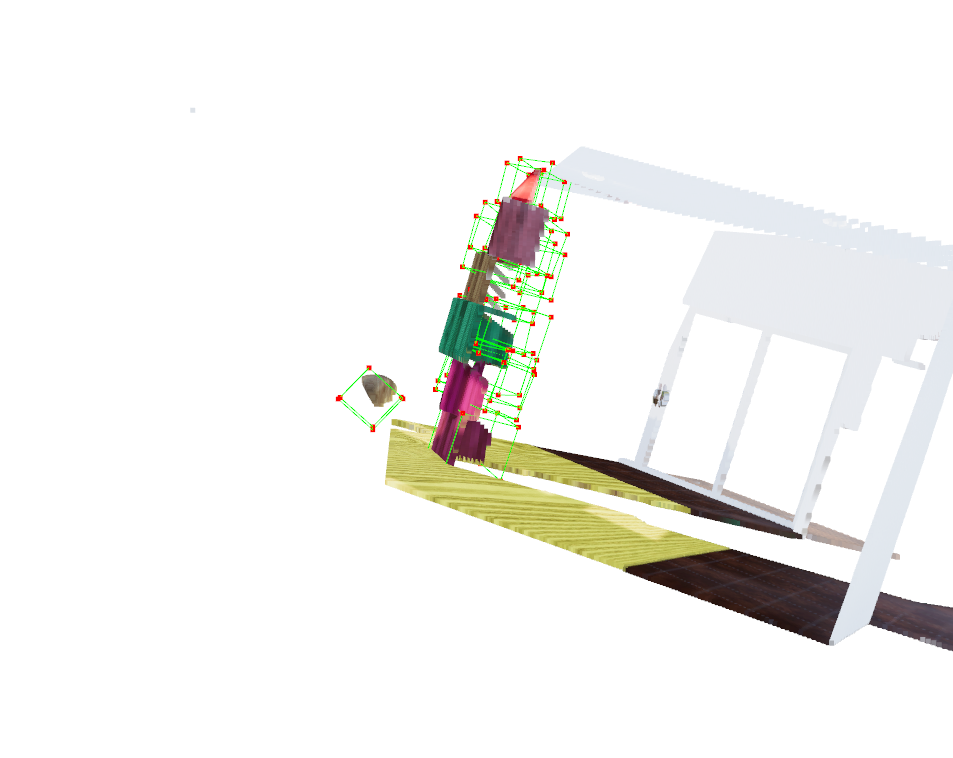

Visualizations can be created with the test script.

For better visualizations, you may set score_thr in configs to 0.3:

python tools/test.py configs/tr3d/tr3d_scannet-3d-18class.py \
    work_dirs/tr3d_scannet-3d-18class/latest.pth --show \
    --show-dir work_dirs/<location to store output visualizations>

The above command stores the output in the location provided.

To view the output visualization:

python physion/output_visualizer.py pilot_towers_nb2_fr015_SJ010_mono0_dis0_occ0_tdwroom_unstable_0014<(location of file)>

The metrics are obtained from 5 training runs that use the support type of videos.

All runs used a single NVIDIA RTX 3080 Ti (12 GB) GPU. The following table shows the results of the validation runs.

The mAP and mAR calculations depend on the exact 3D IoU, which has been slightly modified to handle certain edge cases.
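
To illustrate how an IoU value relates to the 0.25 and 0.50 thresholds used in the table, here is a deliberately simplified sketch for axis-aligned boxes. It is not the exact rotated-box IoU used in this repository, and the single edge case it guards against (boxes with zero volume) is an assumption chosen for illustration; the function names are hypothetical.

```python
def box_volume(box):
    """Volume of an axis-aligned box given as (xmin, ymin, zmin, xmax, ymax, zmax)."""
    return (
        max(box[3] - box[0], 0.0)
        * max(box[4] - box[1], 0.0)
        * max(box[5] - box[2], 0.0)
    )


def aabb_iou_3d(box_a, box_b, eps=1e-9):
    """Intersection over union of two axis-aligned 3D boxes.

    Simplification: rotation is ignored entirely.
    """
    intersection = 1.0
    for axis in range(3):
        lo = max(box_a[axis], box_b[axis])
        hi = min(box_a[axis + 3], box_b[axis + 3])
        if hi <= lo:
            return 0.0  # no overlap along this axis
        intersection *= hi - lo
    union = box_volume(box_a) + box_volume(box_b) - intersection
    # Guard against degenerate (zero-volume) boxes instead of dividing by zero.
    return intersection / union if union > eps else 0.0


if __name__ == "__main__":
    predicted = (0.0, 0.0, 0.0, 1.0, 1.0, 1.0)
    ground_truth = (0.5, 0.0, 0.0, 1.5, 1.0, 1.0)
    iou = aabb_iou_3d(predicted, ground_truth)
    print(iou, iou >= 0.25, iou >= 0.50)
```

In this example the two unit cubes overlap by half along x, so the prediction would count as a match at the 0.25 threshold but not at 0.50.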

Note: Access to the models will be updated shortly. Results for dominoes will also be updated.

TR3D 3D Detection

| Dataset | Loss | Class | AP@0.25 | AR@0.25 | AP@0.50 | AR@0.50 | Download |
|---|---|---|---|---|---|---|---|
| Physion Support | Corners + Huber loss | object | 0.1608 | 0.5322 | 0.0803 | 0.3606 | TBA |
| Physion Support | Corners + Chamfer loss | object | 0.7278 | 0.8733 | 0.4813 | 0.7212 | TBA |
| Physion Support | 12 direct + Huber loss | object | 0.6609 | 0.9669 | 0.2871 | 0.6472 | TBA |

corners refers to applying the loss to the 8 corners obtained from the learned 12d values. 12d direct refers to applying the loss directly to those values.
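
The difference between the two corner-based losses can be sketched as follows. The README does not spell out the 12d parameterization, so this example assumes a box is already described by a center, a size, and a 3x3 rotation matrix; all function names are hypothetical, and the losses are plain-Python illustrations rather than the implementations used for the results above.

```python
import itertools


def box_corners(center, size, R):
    """Return the 8 corners of a box from its center, size and 3x3 rotation matrix."""
    corners = []
    for signs in itertools.product((-0.5, 0.5), repeat=3):
        local = [s * d for s, d in zip(signs, size)]
        corners.append(
            [center[i] + sum(R[i][j] * local[j] for j in range(3)) for i in range(3)]
        )
    return corners


def huber(x, delta=1.0):
    """Huber penalty: quadratic for |x| <= delta, linear beyond it."""
    a = abs(x)
    return 0.5 * a * a if a <= delta else delta * (a - 0.5 * delta)


def corner_huber_loss(pred, target, delta=1.0):
    """Mean Huber penalty over corresponding corner coordinates (corner order matters)."""
    total = sum(
        huber(p - t, delta) for pc, tc in zip(pred, target) for p, t in zip(pc, tc)
    )
    return total / (len(pred) * 3)


def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def chamfer_loss(pred, target):
    """Symmetric Chamfer distance: mean nearest-corner squared distance in both directions."""
    def one_way(a, b):
        return sum(min(squared_distance(p, q) for q in b) for p in a) / len(a)
    return one_way(pred, target) + one_way(target, pred)


if __name__ == "__main__":
    identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
    half_turn = [[-1, 0, 0], [0, -1, 0], [0, 0, 1]]  # 180 degree yaw
    target = box_corners([0.0, 0.0, 0.0], [1.0, 1.0, 1.0], identity)
    pred = box_corners([0.0, 0.0, 0.0], [1.0, 1.0, 1.0], half_turn)
    print(corner_huber_loss(pred, target), chamfer_loss(pred, target))
```

In this sketch a box rotated by a half turn occupies the same space as the target, so the Chamfer distance is zero, while the ordered Huber loss is not because the corners are listed in a different order.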