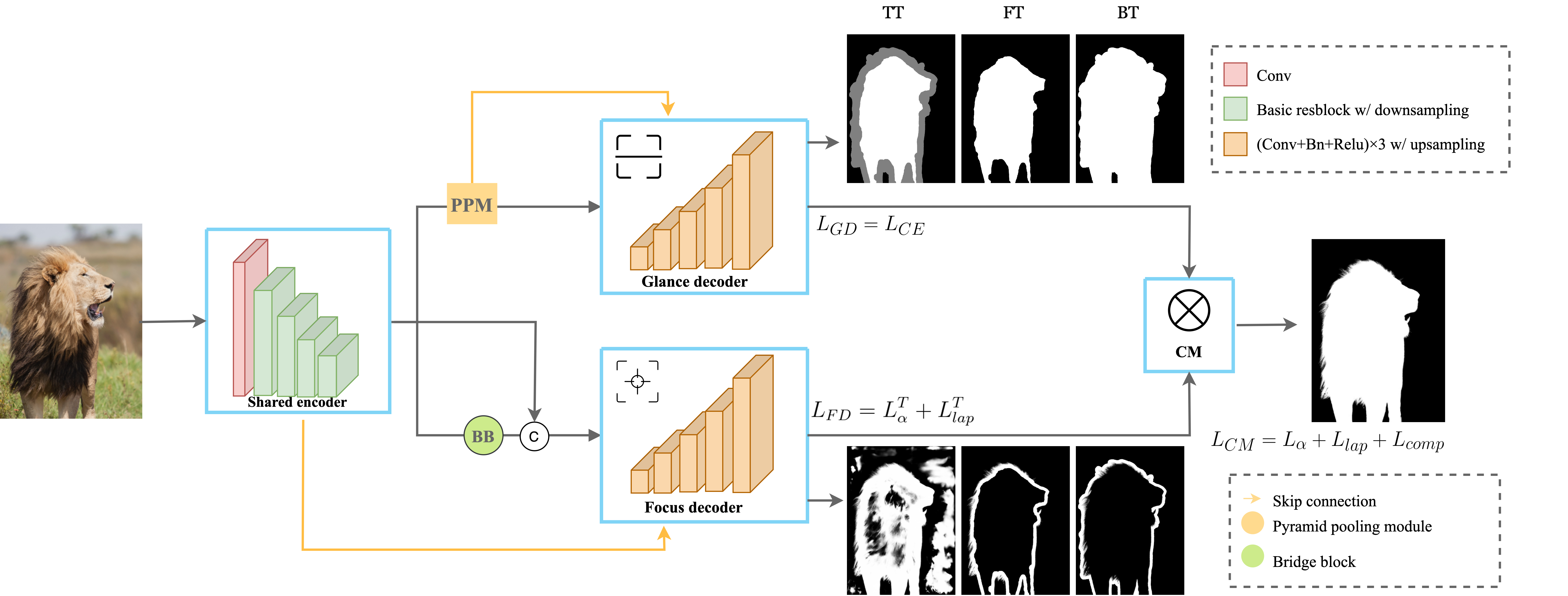

This repository contains the code, datasets, models, test results and a video demo for the paper End-to-end Animal Image Matting. We propose a novel Glance and Focus Matting network (GFM), which employs a shared encoder and two separate decoders to learn two sub-tasks, high-level semantic segmentation and low-level detail matting, in a collaborative manner for end-to-end animal matting. We also establish a novel Animal Matting dataset (AM-2k) for the end-to-end matting task. Furthermore, we systematically investigate the domain gap between composite images and natural images, and propose a carefully designed composition route, RSSN, together with a large-scale, high-resolution background dataset (BG-20k) that provides better background candidates for composition.
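Composition itself rests on the standard alpha compositing equation I = αF + (1 - α)B, where F is the foreground, B a background (for example, an image from BG-20k) and α the alpha matte. The sketch below applies that equation per pixel using plain Python lists. It is a minimal illustration under that assumption only: it does not reproduce the additional steps of the RSSN route, and the function name `composite` is hypothetical.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # RGB channels in [0, 255]
Image = List[List[Pixel]]
Matte = List[List[float]]           # alpha values in [0, 1]


def composite(fg: Image, bg: Image, alpha: Matte) -> Image:
    """Blend a foreground onto a background: I = alpha * F + (1 - alpha) * B."""
    if not (len(fg) == len(bg) == len(alpha)):
        raise ValueError("foreground, background and matte must have the same height")
    out: Image = []
    for fg_row, bg_row, a_row in zip(fg, bg, alpha):
        if not (len(fg_row) == len(bg_row) == len(a_row)):
            raise ValueError("rows must have the same width")
        # Each output channel is a convex combination of foreground and background.
        out.append([
            tuple(a * f + (1.0 - a) * b for f, b in zip(f_px, b_px))
            for f_px, b_px, a in zip(fg_row, bg_row, a_row)
        ])
    return out


# 1x2 example: an opaque pixel and a half-transparent pixel.
fg = [[(200.0, 100.0, 50.0), (200.0, 100.0, 50.0)]]
bg = [[(0.0, 0.0, 0.0), (100.0, 100.0, 100.0)]]
alpha = [[1.0, 0.5]]
print(composite(fg, bg, alpha))
# → [[(200.0, 100.0, 50.0), (150.0, 100.0, 75.0)]]
```

Where α is 1 the background is fully hidden, which is why the quality and realism of the background mainly matter in the semi-transparent transition regions.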

Here is a video demo to illustrate the motivation, the network, the datasets, and the test results on an animal video.

We have released the inference code and a pretrained model, which can be found in the following sections. Since the paper is currently under review, the two datasets (AM-2k and BG-20k), the training code and the remaining pretrained models will be made public after the review.

The architecture of our proposed end-to-end method GFM is illustrated below. Within our method, we adopt three kinds of Representation of Semantic and Transition Areas (RoSTa): -TT, -FT and -BT.
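The data flow of GFM can be sketched as follows: a single shared encoder feeds both decoders, the Glance decoder produces a coarse semantic map, the Focus decoder produces alpha values for fine details, and the two outputs are merged into one matte. This sketch covers only the -TT representation and rests on stated assumptions: the Glance output is taken to be a per-pixel label (0 = background, 1 = transition, 2 = foreground), and the merge keeps the Focus alpha inside the transition area and uses the Glance label elsewhere. The label encoding, the toy stand-in callables and the names `merge_tt` and `gfm_tt_forward` are hypothetical; in the real model the encoder and decoders are neural networks.

```python
from typing import Callable, List

Map = List[List[float]]
Labels = List[List[int]]

BACKGROUND, TRANSITION, FOREGROUND = 0, 1, 2  # assumed label encoding


def merge_tt(glance: Labels, focus: Map) -> Map:
    """Combine a three-class glance map with focus alpha values into one matte."""
    merged: Map = []
    for g_row, f_row in zip(glance, focus):
        row = []
        for label, alpha in zip(g_row, f_row):
            if label == TRANSITION:
                row.append(alpha)   # fine details come from the Focus branch
            elif label == FOREGROUND:
                row.append(1.0)     # confident foreground
            else:
                row.append(0.0)     # confident background
        merged.append(row)
    return merged


def gfm_tt_forward(image, encoder: Callable, glance_decoder: Callable,
                   focus_decoder: Callable) -> Map:
    """Run the shared encoder once and feed its features to both decoders."""
    features = encoder(image)            # shared encoder
    glance = glance_decoder(features)    # semantic branch
    focus = focus_decoder(features)      # detail branch
    return merge_tt(glance, focus)


# Toy stand-ins for the networks, operating on a 1x3 "image".
encoder = lambda img: img
glance_decoder = lambda feats: [[BACKGROUND, TRANSITION, FOREGROUND]]
focus_decoder = lambda feats: [[0.3, 0.6, 0.9]]
print(gfm_tt_forward([[0.0, 0.0, 0.0]], encoder, glance_decoder, focus_decoder))
# → [[0.0, 0.6, 1.0]]
```

Sharing the encoder means the features are computed once for both branches, so each decoder can specialize (semantics versus detail) without duplicating the backbone.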

We trained GFM with three backbones: -(d) (DenseNet-121), -(r) (ResNet-34) and -(r2b) (ResNet-34 with two extra blocks). The trained model for each backbone can be downloaded via the links in the table below; models not yet released are marked "coming soon".

| GFM(d)-TT | GFM(r)-TT | GFM(r2b)-TT |
|---|---|---|
| coming soon | coming soon | model |

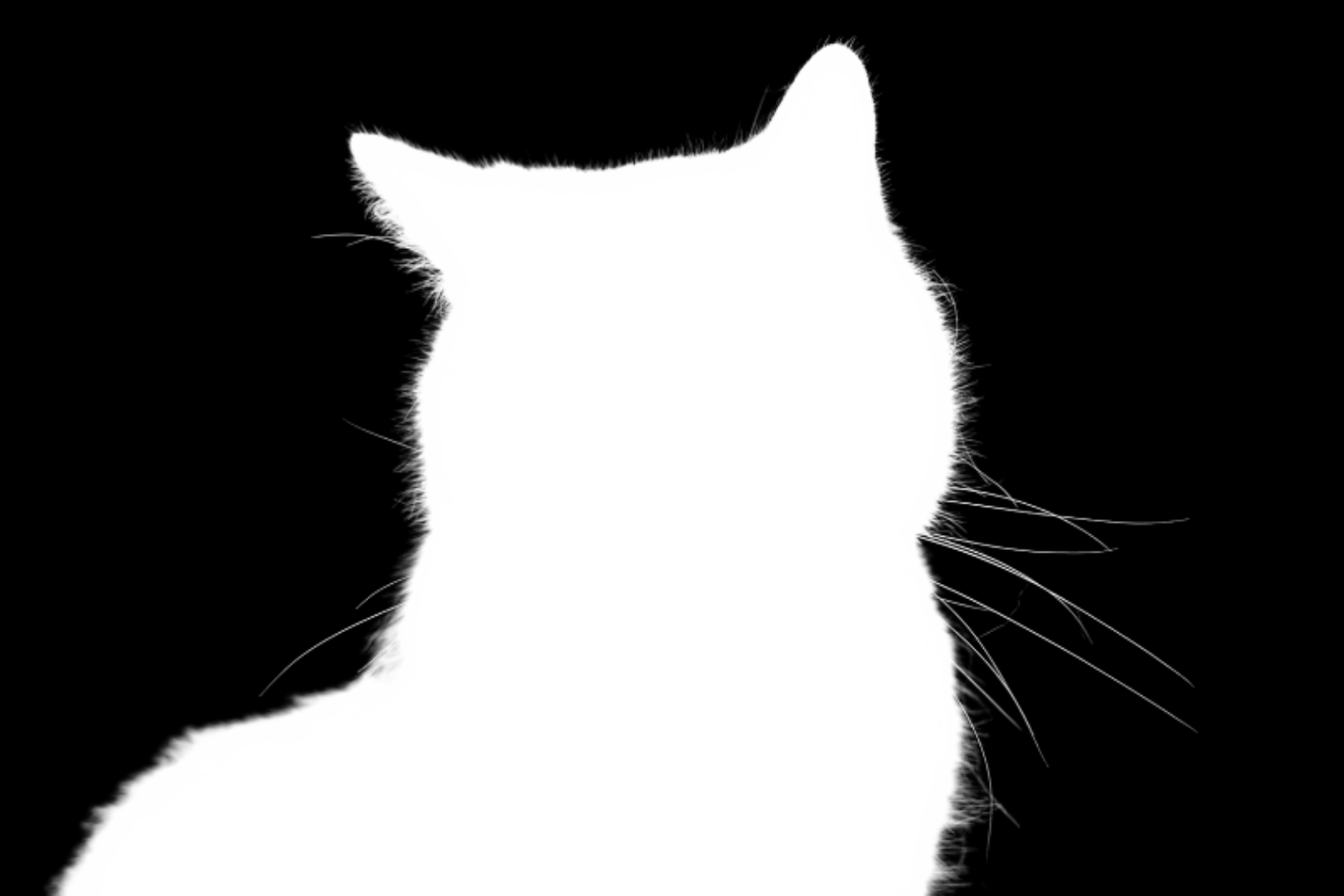

Our proposed AM-2k contains 2,000 high-resolution natural animal images from 20 categories, along with manually labeled alpha mattes. Some examples are shown below; more can be viewed in the video demo.

Our proposed BG-20k contains 20,000 high-resolution background images that are free of salient objects. Some examples are shown below; more can be viewed in the video demo.

We test GFM on the AM-2k test set and show the results below. More results on the AM-2k test set can be found here.

Requirements:

- Python 3.6.5+ with NumPy and scikit-image
- PyTorch (version 1.4.0)
- Torchvision (version 0.5.0)

- Clone this repository:

  ```
  git clone https://github.com/JizhiziLi/animal-matting.git
  ```

- Go into the repository:

  ```
  cd animal-matting
  ```

- Create a conda environment and activate it:

  ```
  conda create -n animalmatting python=3.6.5
  conda activate animalmatting
  ```

- Install the dependencies (install PyTorch and Torchvision separately if needed):

  ```
  pip install -r requirements.txt
  conda install pytorch==1.4.0 torchvision==0.5.0 cudatoolkit=10.1 -c pytorch
  ```
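After installation, a quick check that the interpreter and packages match the tested versions can save some debugging. The script below is a minimal sketch and is not part of the repository: it imports `torch` and `torchvision` if they are present, strips local build suffixes such as `+cu101` from their `__version__` strings, and compares the numeric parts against the versions listed in the requirements. The helper names (`parse_version`, `installed_version`, `check_environment`) are hypothetical.

```python
import importlib
import re
import sys
from typing import Dict, Optional, Tuple

# Versions listed in the requirements; this mapping is illustrative.
REQUIRED = {"torch": "1.4.0", "torchvision": "0.5.0"}


def parse_version(version: str) -> Tuple[int, ...]:
    """Turn '1.4.0+cu101' into (1, 4, 0), dropping build suffixes."""
    numbers = []
    for piece in version.split("+")[0].split("."):
        match = re.match(r"\d+", piece)   # leading digits only, e.g. '0rc1' -> 0
        if match is None:
            break
        numbers.append(int(match.group()))
    return tuple(numbers)


def installed_version(module_name: str) -> Optional[str]:
    """Return the module's __version__, or None if it cannot be imported."""
    try:
        module = importlib.import_module(module_name)
    except Exception:  # broken CUDA installs can raise more than ImportError
        return None
    return getattr(module, "__version__", None)


def check_environment() -> Dict[str, bool]:
    """Map each requirement to True if the current environment satisfies it."""
    report = {"python>=3.6.5": sys.version_info[:3] >= (3, 6, 5)}
    for name, wanted in REQUIRED.items():
        found = installed_version(name)
        # Exact match on the numeric part, since these are the tested versions.
        report[name + "==" + wanted] = (
            found is not None and parse_version(found) == parse_version(wanted)
        )
    return report


if __name__ == "__main__":
    for requirement, ok in check_environment().items():
        print(("OK       " if ok else "MISMATCH ") + requirement)
```

An exact-version comparison is deliberately strict; newer PyTorch releases may well work, but they are outside the tested configuration.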

Our code has been tested with Python 3.6.5, PyTorch 1.4.0, Torchvision 0.5.0 and CUDA 10.1 on Ubuntu 18.04.

Here we provide the procedure for testing our pretrained models on sample images:

- Download the pretrained models listed in the model table above and unzip them to the folder `models/`.

- Save your sample images in the folder `samples/original/`.

- Set up the parameters in `scripts/deploy_samples.sh` and run it:

  ```
  chmod +x scripts/*
  ./scripts/deploy_samples.sh
  ```

- The predicted alpha mattes and transparent color images will be saved in the folders `samples/result_alpha/` and `samples/result_color/`, respectively.
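A transparent color image can be understood as the original RGB image with the predicted alpha matte attached as a fourth channel. The sketch below assumes alpha values in [0, 1] and 8-bit RGB pixels, and builds RGBA pixels with plain Python lists. `to_rgba` is a hypothetical helper; the repository's deploy script may produce its color results differently (for example, through an image library).

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
RGBA = Tuple[int, int, int, int]


def to_rgba(image: List[List[RGB]], alpha: List[List[float]]) -> List[List[RGBA]]:
    """Attach an alpha matte (values in [0, 1]) to an RGB image as an 8-bit channel."""
    if len(image) != len(alpha) or any(len(r) != len(a) for r, a in zip(image, alpha)):
        raise ValueError("image and alpha matte must have the same size")
    result = []
    for rgb_row, a_row in zip(image, alpha):
        row = []
        for (r, g, b), a in zip(rgb_row, a_row):
            a = min(max(a, 0.0), 1.0)             # clamp predictions to [0, 1]
            row.append((r, g, b, int(round(a * 255))))
        result.append(row)
    return result


image = [[(255, 0, 0), (0, 255, 0), (0, 0, 255)]]
alpha = [[1.0, 0.5, 0.0]]
print(to_rgba(image, alpha))
# → [[(255, 0, 0, 255), (0, 255, 0, 128), (0, 0, 255, 0)]]
```

Keeping the color channels untouched and storing transparency separately lets any viewer or editor composite the animal onto a new background later.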

Below we show some sample images from the internet, their predicted alpha mattes, and the transparent results. (We adopt `arch='e2e_resnet34_2b_gfm_tt'` and use the hybrid testing strategy.)

This project is for research purposes only; please contact us for a licence for commercial use. For any other questions, please contact jili8515@uni.sydney.edu.au.