Kaggle PS4E02 - Multi-Class Prediction of Obesity Risk

- Team name: I'm unemployed, please hire me (Ranked 484/3587 - top 14%)

- Public test: 0.91112

- Private test: 0.90823

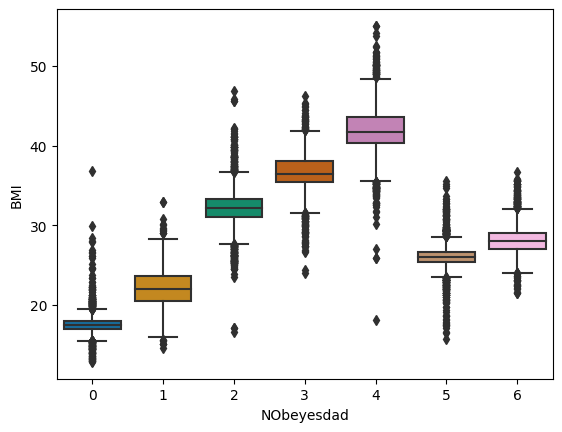

Common sense says that a person's obesity risk is reflected by their BMI. However, this contest uses a generated dataset, which breaks that common sense really, really badly. For example, inspecting the BMIs quickly turns up outliers where a sample with obesity risk label 5 has the same BMI as a sample with label 1.

As a result, no submission on the public test could pass the threshold of 0.93.

Therefore, combining original data with generated data seems to be a good approach.
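A minimal sketch of that combination step, assuming both datasets are loaded as pandas DataFrames. The tiny frames and the helper name `combine_datasets` are hypothetical stand-ins, and the column names (`id`, `Height`, `Weight`, `NObeyesdad`) are assumptions about the CSV layout rather than something stated here.

```python
import pandas as pd


def combine_datasets(generated: pd.DataFrame, original: pd.DataFrame) -> pd.DataFrame:
    """Append the original rows to the generated ones, keeping shared columns only."""
    # Columns such as a row id may exist in only one of the two files.
    shared = [col for col in generated.columns if col in original.columns]
    return pd.concat([generated[shared], original[shared]], ignore_index=True)


# Hypothetical miniature frames standing in for the real CSV files.
generated = pd.DataFrame({
    "id": [0, 1],
    "Height": [1.70, 1.60],
    "Weight": [80.0, 50.0],
    "NObeyesdad": ["Overweight_Level_II", "Normal_Weight"],
})
original = pd.DataFrame({
    "Height": [1.80, 1.75],
    "Weight": [120.0, 70.0],
    "NObeyesdad": ["Obesity_Type_II", "Normal_Weight"],
})

combined = combine_datasets(generated, original)
print(combined.shape)
```

Keeping only the shared columns is one possible design choice; it avoids NaN-filled columns when one file carries an extra identifier.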

- Replace `Frequently` with `Always`: by common sense, these are equivalent (and no `Frequently` could be found in the test set).

- Adding BMI, BMI_prime (BMI/25)
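The two preprocessing steps above could look roughly like this. It is a sketch under stated assumptions: `Weight` is in kilograms and `Height` in meters, BMI is the usual weight / height², and the column receiving the category swap (`CALC` here) is a guess, since the affected column is not named in this write-up. The helper `add_features` is hypothetical.

```python
import pandas as pd


def add_features(df: pd.DataFrame, column: str = "CALC") -> pd.DataFrame:
    """Merge two categories and add BMI-based features on a copy of df."""
    out = df.copy()
    # Assumption: `column` is the categorical column containing "Frequently".
    out[column] = out[column].replace("Frequently", "Always")
    # Standard BMI definition: weight (kg) divided by height (m) squared.
    out["BMI"] = out["Weight"] / out["Height"] ** 2
    # BMI prime as described above: BMI divided by 25.
    out["BMI_prime"] = out["BMI"] / 25
    return out


# Hypothetical sample rows.
sample = pd.DataFrame({
    "Height": [2.0, 1.6],
    "Weight": [100.0, 64.0],
    "CALC": ["Frequently", "no"],
})
features = add_features(sample)
print(features[["CALC", "BMI", "BMI_prime"]])
```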

Still, this contest highlighted the importance of stacking & voting.

- lazy-classifier shows that the best classifiers for this problem are Extra Tree, Random Forest, Decision Tree, Bagging Classifier, SVC, Logistic Regression, XGB and LGBM.

- Using Optuna to find the best parameters for each of them.

Stacking these results gave us our highest score, reported above. This contest also highlighted a newer approach, AutoML with AutoGluon: we achieved a near-highest score with it (0.90787 and 0.90751 on the public and private tests, respectively).
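For readers unfamiliar with stacking, the idea can be sketched with scikit-learn's `StackingClassifier`. This is not the exact ensemble behind the reported score: it uses only three scikit-learn base models on synthetic data, and the logistic-regression meta-learner is an assumption.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import (
    ExtraTreesClassifier,
    RandomForestClassifier,
    StackingClassifier,
)
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Synthetic multi-class data standing in for the competition features.
X, y = make_classification(
    n_samples=400, n_features=10, n_informative=6, n_classes=3, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Base models whose out-of-fold predictions feed the meta-learner.
base_models = [
    ("extra_trees", ExtraTreesClassifier(n_estimators=50, random_state=0)),
    ("random_forest", RandomForestClassifier(n_estimators=50, random_state=0)),
    ("log_reg", LogisticRegression(max_iter=1000)),
]

stack = StackingClassifier(
    estimators=base_models,
    final_estimator=LogisticRegression(max_iter=1000),  # assumed meta-learner
    cv=3,
)
stack.fit(X_train, y_train)
stack_accuracy = accuracy_score(y_test, stack.predict(X_test))
print(f"stacked accuracy: {stack_accuracy:.3f}")
```

Tuned XGB or LGBM models could be added to `base_models` in the same way, since both libraries ship scikit-learn compatible classifiers.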

Submissions are evaluated using the accuracy score.
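Accuracy is simply the fraction of predictions that match the true label. A plain-Python sketch, with a hypothetical helper name and made-up labels:

```python
def accuracy(y_true, y_pred):
    """Fraction of positions where the predicted label equals the true label."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if len(y_true) == 0:
        raise ValueError("cannot compute accuracy on empty input")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


# Hypothetical labels: three of the four predictions are correct.
truth = ["Normal_Weight", "Obesity_Type_I", "Overweight_Level_I", "Normal_Weight"]
preds = ["Normal_Weight", "Obesity_Type_I", "Normal_Weight", "Normal_Weight"]
score = accuracy(truth, preds)
print(score)  # 0.75
```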

This project is licensed under The GNU GPL v3.0