This is a TypeScript version of the karpathy/micrograd repo.

A tiny scalar-valued autograd engine and a neural net on top of it (~200 lines of TS code).

This repo might be useful for those who want to get a basic understanding of how neural networks work, using a TypeScript environment for experimentation.

- micrograd/ - this folder is the core/purpose of the repo
  - engine.ts - the scalar Value class that supports basic math operations like add, sub, div, mul, pow, exp, tanh and has a backward() method that calculates a derivative of the expression, which is required for back-propagation flow.
  - nn.ts - the Neuron, Layer, and MLP (multi-layer perceptron) classes that implement a neural network on top of the differentiable scalar Values.
- demo/ - demo React application to experiment with the micrograd code
  - src/demos/ - several playgrounds where you can experiment with the Neuron, Layer, and MLP classes.

See the 🎬 "The spelled-out intro to neural networks and back-propagation: building micrograd" YouTube video for a detailed explanation of how neural networks and back-propagation work. The video also explains in detail what the Neuron, Layer, MLP, and Value classes do.

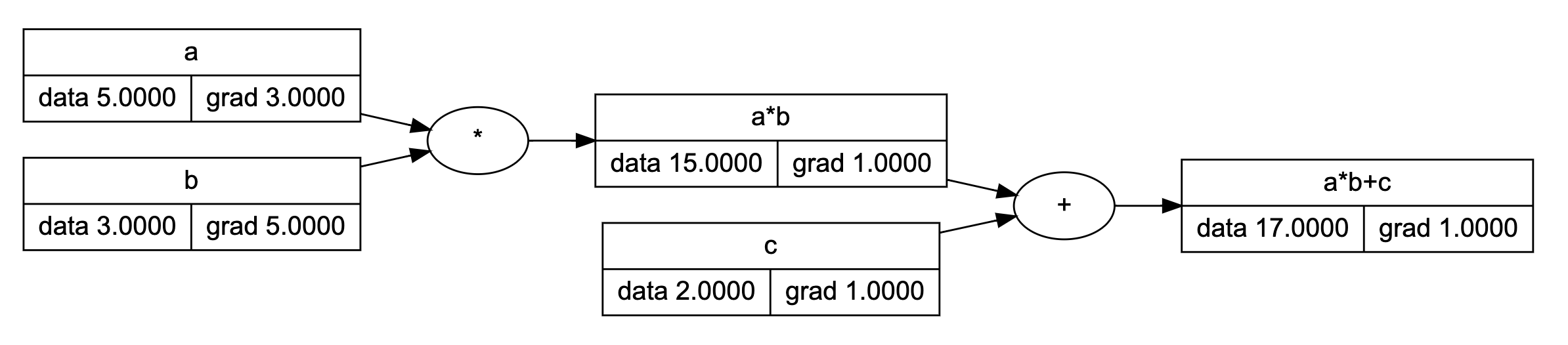

Briefly, the Value class allows you to build a computation graph for some expression that consists of scalar values.

Here is an example of what the computation graph for the a * b + c expression looks like:

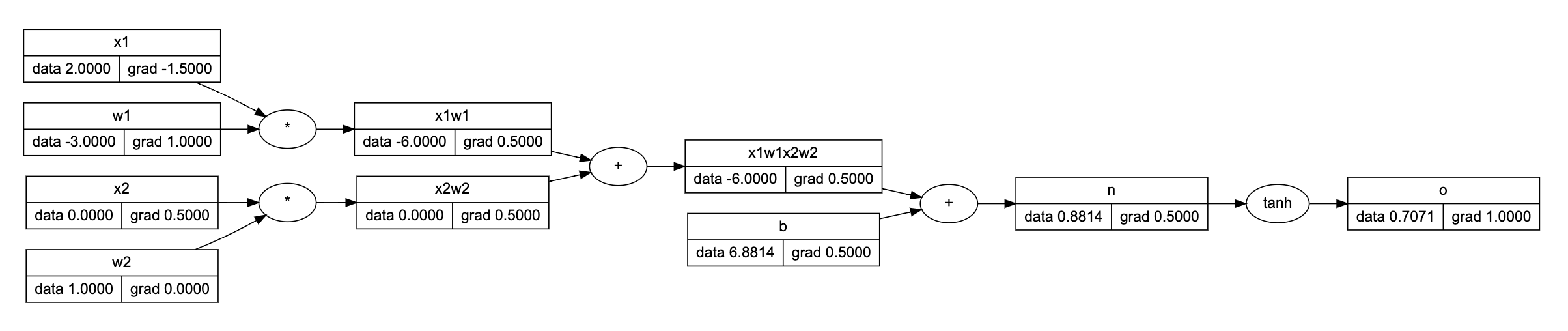
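To make the graph idea concrete, here is a minimal sketch of a scalar value that records its inputs and can back-propagate through a * b + c. The class below is illustrative only: it covers just add and mul, and its internals (the children list, the backwardStep closure) are assumptions for this example, not the actual code in engine.ts.

```typescript
// Minimal sketch of a scalar autograd value; names are illustrative,
// not the repo's actual engine.ts API.
class Value {
  grad = 0;
  // Local backward step; a no-op for leaf values.
  private backwardStep: () => void = () => {};

  constructor(
    public data: number,
    private readonly children: Value[] = [],
  ) {}

  add(other: Value): Value {
    const out = new Value(this.data + other.data, [this, other]);
    out.backwardStep = () => {
      // d(a + b)/da = 1, d(a + b)/db = 1
      this.grad += out.grad;
      other.grad += out.grad;
    };
    return out;
  }

  mul(other: Value): Value {
    const out = new Value(this.data * other.data, [this, other]);
    out.backwardStep = () => {
      // d(a * b)/da = b, d(a * b)/db = a
      this.grad += other.data * out.grad;
      other.grad += this.data * out.grad;
    };
    return out;
  }

  backward(): void {
    // Topologically sort the graph so each node is processed
    // after every node that depends on it.
    const order: Value[] = [];
    const visited = new Set<Value>();
    const visit = (v: Value): void => {
      if (visited.has(v)) return;
      visited.add(v);
      v.children.forEach(visit);
      order.push(v);
    };
    visit(this);
    this.grad = 1;
    for (const v of order.reverse()) v.backwardStep();
  }
}

const a = new Value(2);
const b = new Value(-3);
const c = new Value(10);
const d = a.mul(b).add(c); // d = a * b + c

d.backward();
console.log(d.data, a.grad, b.grad, c.grad); // 4 -3 2 1
```

The gradients match the hand-derived ones: the derivative of d with respect to a is b, with respect to b is a, and with respect to c is 1.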

Based on the Value class, we can build a Neuron expression X * W + b. Here we're simulating a dot product of the input features X and the neuron weights W:

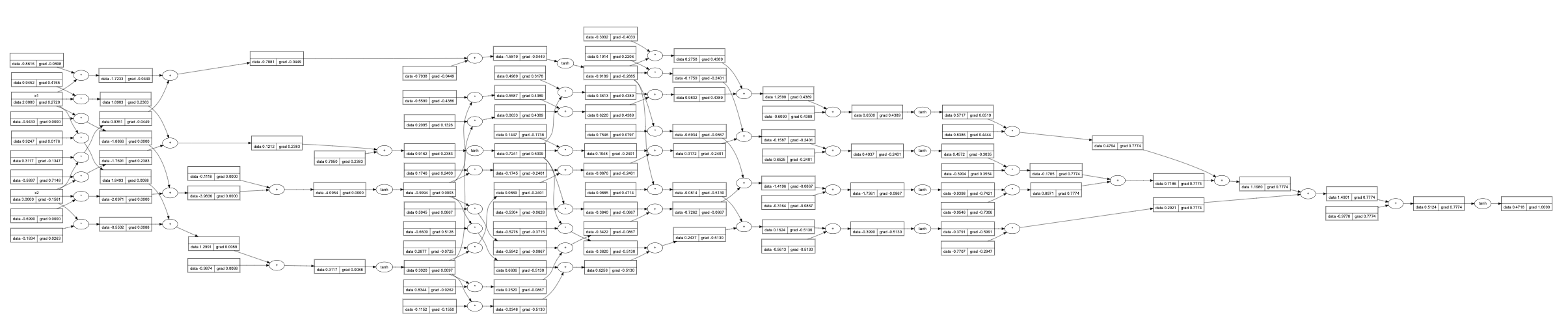
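The neuron expression can also be worked through with plain numbers. The sketch below computes X * W + b for one neuron and derives the gradients by hand with the chain rule, which is what the Value-based graph would produce automatically. The neuronForward helper is hypothetical, and using tanh as the activation is an assumption for this example.

```typescript
// Hypothetical helper: forward pass of one neuron plus hand-derived gradients.
// tanh as the activation is an assumption, not confirmed by the text above.
function neuronForward(x: number[], w: number[], b: number) {
  if (x.length !== w.length) {
    throw new Error("x and w must have the same length");
  }
  // Dot product of x and w, plus the bias b.
  const z = x.reduce((sum, xi, i) => sum + xi * w[i], b);
  const out = Math.tanh(z);
  // Chain rule: d(out)/dz = 1 - tanh(z)^2
  const dz = 1 - out * out;
  return {
    out,
    gradW: x.map((xi) => dz * xi), // d(out)/dw_i = dz * x_i
    gradB: dz, // d(out)/db = dz
  };
}

// z = 2 * -3 + 0 * 1 + 6 = 0, so out = tanh(0) = 0 and dz = 1.
const result = neuronForward([2, 0], [-3, 1], 6);
console.log(result.out, result.gradW, result.gradB); // 0 [ 2, 0 ] 1
```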

Out of Neurons, we can build the MLP network class that consists of several Layers of Neurons. The computation graph in this case may be too complex to display here, but a simplified version might look like this:
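In code, the layering can be sketched as nested arrays of neuron parameters, where each layer feeds its outputs into the next. The type names, the weights, and the tanh activation below are all assumptions made for illustration; the repo's Neuron, Layer, and MLP classes operate on Values and may be structured differently.

```typescript
// Illustrative types only; the repo's Neuron, Layer, and MLP classes may differ.
type NeuronParams = { w: number[]; b: number };
type LayerParams = NeuronParams[];

// One neuron: tanh(x . w + b). The tanh activation is an assumption.
const neuronOut = (n: NeuronParams, x: number[]): number =>
  Math.tanh(x.reduce((sum, xi, i) => sum + xi * n.w[i], n.b));

// A layer applies each of its neurons to the same input vector.
const layerOut = (layer: LayerParams, x: number[]): number[] =>
  layer.map((n) => neuronOut(n, x));

// An MLP feeds the output of each layer into the next one.
const mlpOut = (layers: LayerParams[], x: number[]): number[] =>
  layers.reduce((activations, layer) => layerOut(layer, activations), x);

// 2 inputs -> hidden layer of 3 neurons -> 1 output neuron.
// The weights are arbitrary numbers chosen for this example.
const mlp: LayerParams[] = [
  [
    { w: [0.5, -0.2], b: 0.1 },
    { w: [-0.3, 0.8], b: 0.0 },
    { w: [0.7, 0.4], b: -0.5 },
  ],
  [{ w: [1.0, -1.0, 0.5], b: 0.2 }],
];

const y = mlpOut(mlp, [1.0, 2.0]);
console.log(y); // a single output value between -1 and 1
```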

The main idea is that the computation graphs above "know" how to do automatic back propagation (in other words, how to calculate derivatives). This allows us to train the MLP network for several epochs and adjust the network weights in a way that reduces the ultimate loss:
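The training idea can be sketched without any library. The toy loop below fits a single linear "neuron" w * x + b to four points by gradient descent. The gradients are derived by hand here, whereas the Value graph would compute them via backward(). The data, learning rate, and epoch count are hypothetical values chosen for this example.

```typescript
// Toy data for y = 2x + 1; the model is a single linear "neuron" w * x + b.
const xs = [-1, 0, 1, 2];
const ys = xs.map((x) => 2 * x + 1);

let w = 0;
let b = 0;
const learningRate = 0.1; // hypothetical value chosen for this toy problem
const losses: number[] = [];

for (let epoch = 0; epoch < 200; epoch++) {
  let loss = 0;
  let gradW = 0;
  let gradB = 0;
  for (let i = 0; i < xs.length; i++) {
    const err = w * xs[i] + b - ys[i];
    loss += (err * err) / xs.length; // mean squared error
    gradW += (2 * err * xs[i]) / xs.length; // d(loss)/dw
    gradB += (2 * err) / xs.length; // d(loss)/db
  }
  losses.push(loss);
  // Step against the gradient to reduce the loss.
  w -= learningRate * gradW;
  b -= learningRate * gradB;
}

console.log(losses[0], losses[losses.length - 1], w, b);
```

The first recorded loss is 9 (the mean of the squared targets, since w and b start at 0), and the loss shrinks each epoch as w approaches 2 and b approaches 1.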

To see the online demo/playground, check the following link:

If you want to experiment with the code locally, follow the instructions below.

Clone the current repo locally.

Switch to the demo folder:

    cd ./demo

Set up node v18 using nvm (optional):

    nvm use

Install dependencies:

    npm i

Launch the demo app:

    npm run dev

The demo app will be available at http://localhost:5173/micrograd-ts

Go to ./demo/src/demos/ to explore several playgrounds for the Neuron, Layer, and MLP classes.

The TypeScript version of the karpathy/micrograd repo by @trekhleb