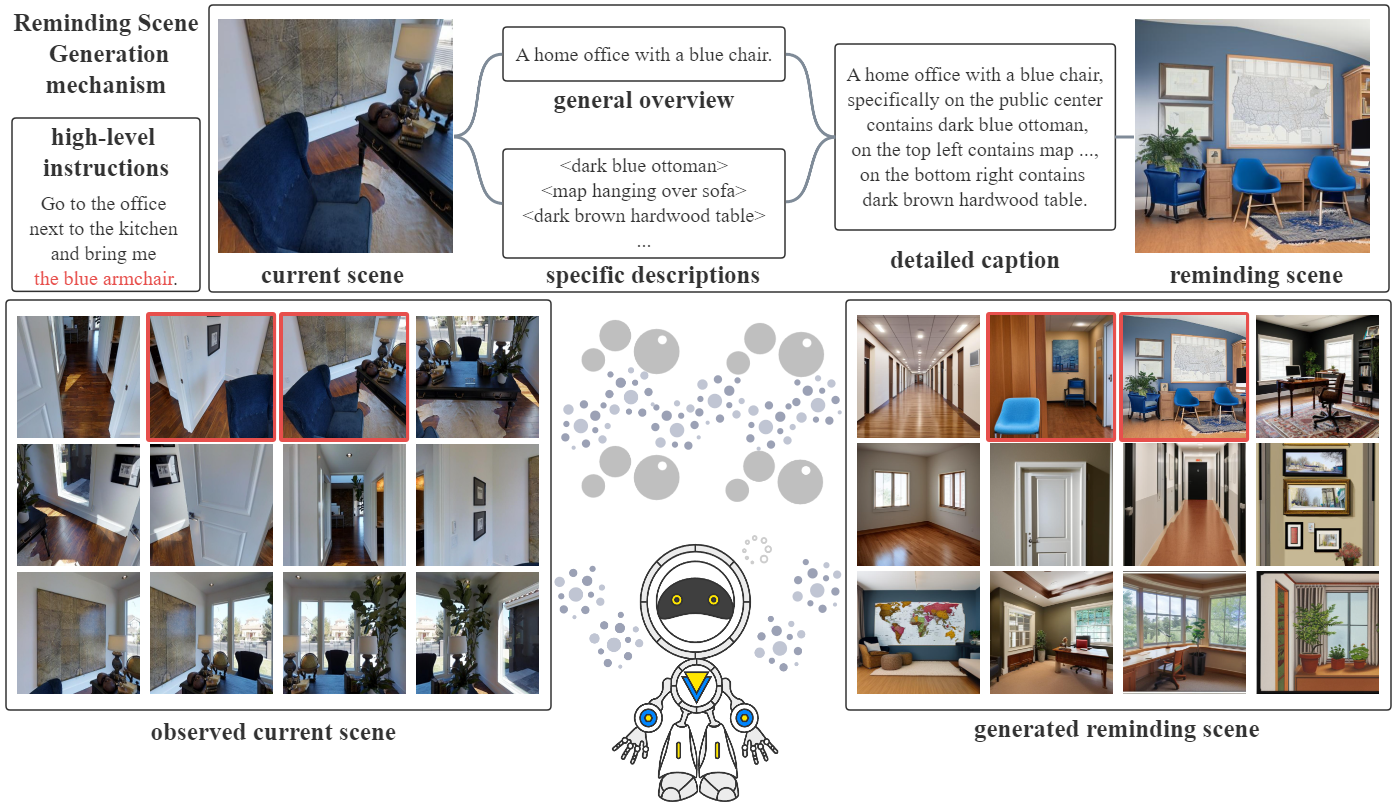

This repository is the implementation for the paper "VISIONARY: Vision-aware enhancement with reminding scenes generated by captions via multimodal transformer for embodied referring expression".

Authors: Zhengwu Yuan, Peixian Tang, Xinguang Sang, Fan Zhang, Zheqi Zhang


Please follow the baseline works DUET and LAD to set up the environment and download the related data.


Download the additional generated content for VISIONARY from here, and put the data in the `datasets` directory.
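Before launching a long training run, it can help to confirm that the data is where the scripts expect it. The following is a minimal sketch, not part of the repository: `check_datasets` is a hypothetical helper, and the default path `datasets` assumes the command is run from the directory that contains it. Adjust the path to match your layout.

```shell
# Hypothetical pre-flight check (not part of the repository).
# Verifies that the data directory exists and is not empty.
check_datasets() {
  dir="${1:-datasets}"   # assumed default location; pass another path if needed
  if [ ! -d "$dir" ]; then
    echo "missing: $dir" >&2
    return 1
  fi
  if [ -z "$(ls -A "$dir")" ]; then
    echo "empty: $dir" >&2
    return 1
  fi
  echo "ok: $dir"
}
```

For example, `check_datasets ../datasets` can be run from inside `training_src` if the data directory sits one level up, which is an assumption about the layout rather than something this README states.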

After configuring the training strategy, run the following script to train:

    cd training_src
    sh scripts/final_frt_gd_finetuning_stable.sh

Replace `resumedir` in `eval.sh`, then run the script to evaluate the model. The result file can also be submitted to the online leaderboard to obtain the test performance.

    cd training_src
    sh scripts/eval.sh
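The `resumedir` edit can be done by hand, or scripted when evaluating several checkpoints. The sketch below assumes that `eval.sh` defines the value on its own line in the form `resumedir=...`; this is an assumption about the script, so check the file before relying on it. `set_resumedir` is a hypothetical helper, and the replacement path must not contain `|` or `&`, which `sed` would interpret.

```shell
# Hypothetical helper (not part of the repository).
# Rewrites a line of the form `resumedir=...` in the given script.
set_resumedir() {
  script="$1"    # path to the script to edit, e.g. scripts/eval.sh
  new_dir="$2"   # checkpoint directory to evaluate
  tmp="$(mktemp)" || return 1
  # Use '|' as the sed delimiter so that paths containing '/' work.
  # Writing back with cat keeps the original file's permissions.
  sed "s|^resumedir=.*|resumedir=${new_dir}|" "$script" > "$tmp" && cat "$tmp" > "$script"
  rm -f "$tmp"
}
```

A call such as `set_resumedir scripts/eval.sh path/to/checkpoint_dir` would then be followed by `sh scripts/eval.sh`; the checkpoint path here is a placeholder.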

P.S. The final checkpoints of the VISIONARY model can be found here.

The code is mainly based on LAD and DUET, and this work is inspired by PanoGen and KERM. Thanks to the authors for their awesome work!