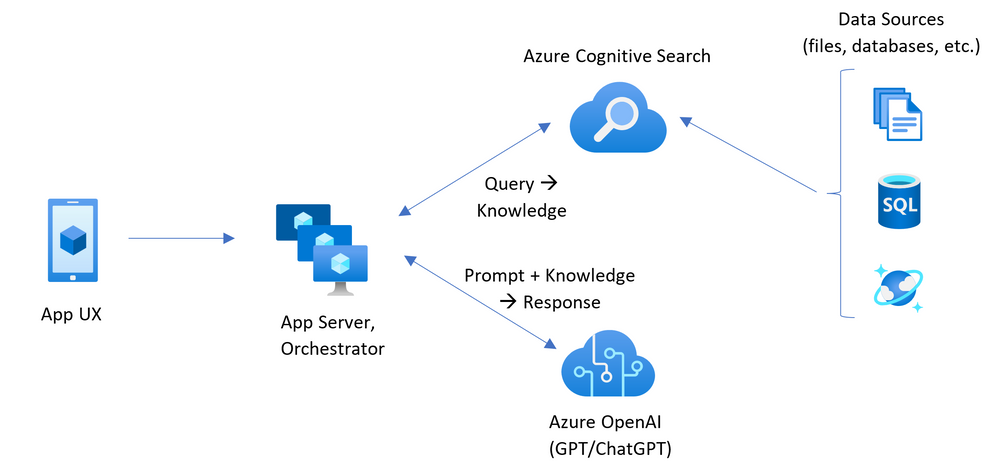

This sample demonstrates a few approaches for creating ChatGPT-like experiences on data from Azure Data Manager for Energy using the Retrieval Augmented Generation pattern. It uses Azure OpenAI Service to access the ChatGPT model (gpt-35-turbo), and Azure Cognitive Search for data indexing and retrieval.

The repo includes sample data so it's ready to try end to end. This sample application uses a fictitious company called Contoso Energy, and the experience allows its employees to ask questions about their fields, wells, wellbores, logs, trajectories, etc.

- Chat and Q&A interfaces

- Explores various options to help users evaluate the trustworthiness of responses with citations, tracking of source content, etc.

- Shows possible approaches for data preparation, prompt construction, and orchestration of interaction between model (ChatGPT) and retriever (Cognitive Search)

- Settings directly in the UX to tweak the behavior and experiment with options
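The retrieve-then-read flow behind these features can be sketched in a few lines. This is a minimal illustration, not the sample's actual code: the in-memory `DOCS` list stands in for the Cognitive Search index, keyword overlap stands in for the search ranking, and the function names (`retrieve`, `build_prompt`) and record contents are hypothetical.

```python
import re

# Hypothetical records standing in for indexed content; the real sample
# retrieves from an Azure Cognitive Search index instead.
DOCS = [
    {"id": "well-001", "text": "Well A-01 belongs to the Alpha field and has two wellbores."},
    {"id": "log-017", "text": "Well log 17 records gamma ray measurements for wellbore A-01-S1."},
    {"id": "org-003", "text": "Contoso Energy operates the Alpha field."},
]

def tokenize(text):
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, docs, top=2):
    """Rank documents by keyword overlap with the question (a toy retriever)."""
    q = tokenize(question)
    scored = [(len(q & tokenize(d["text"])), d) for d in docs]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top]]

def build_prompt(question, sources):
    """Inject retrieved sources, tagged with their ids, so answers can cite them."""
    source_block = "\n".join(f"[{d['id']}] {d['text']}" for d in sources)
    return (
        "Answer using ONLY the sources below and cite the source id in brackets.\n"
        f"Sources:\n{source_block}\n\n"
        f"Question: {question}\nAnswer:"
    )

question = "Who operates the Alpha field?"
sources = retrieve(question, DOCS)
prompt = build_prompt(question, sources)
print(prompt)  # this text would then be sent to the chat model
```

Tagging each source with its id in the prompt is one way to make citations traceable: the model can echo the bracketed id, and the UI can link it back to the source content.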

IMPORTANT: In order to deploy and run this example, you'll need an Azure subscription with access enabled for the Azure OpenAI service. You can request access here. You can also visit here to get some free Azure credits to get you started.

AZURE RESOURCE COSTS: By default, this sample creates Azure App Service, Databricks, and Azure Cognitive Search resources that have a monthly cost. To avoid this cost, you can switch each of them to a free version by changing the parameters file under the infra folder. Some limits apply: for example, you can have at most one free Cognitive Search resource per subscription, and the free Form Recognizer resource only analyzes the first 2 pages of each document.

- Azure Developer CLI

- Python 3+

- Important: Python and the pip package manager must be in the path in Windows for the setup scripts to work.

- Important: Ensure you can run `python --version` from the console. On Ubuntu, you might need to run `sudo apt install python-is-python3` to link `python` to `python3`.

- Node.js

- Git

- PowerShell 7+ (pwsh) - For Windows users only.

- Important: Ensure you can run `pwsh.exe` from a PowerShell command. If this fails, you likely need to upgrade PowerShell.

NOTE: Your Azure account must have `Microsoft.Authorization/roleAssignments/write` permissions, such as User Access Administrator or Owner.
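A quick way to confirm that the prerequisite tools are reachable is to look them up on the PATH. The sketch below is an optional helper, not part of the repo. The executable names in `REQUIRED_TOOLS` are assumptions inferred from the list above, and the check only confirms presence, not versions or Azure permissions.

```python
import shutil
import sys

# Executable names are assumptions based on the prerequisites listed above.
REQUIRED_TOOLS = ["azd", "python", "pip", "node", "git"]
if sys.platform.startswith("win"):
    REQUIRED_TOOLS.append("pwsh")  # PowerShell 7+ is only required on Windows

def find_missing(tools, which=shutil.which):
    """Return the tools that cannot be found on PATH."""
    return [tool for tool in tools if which(tool) is None]

missing = find_missing(REQUIRED_TOOLS)
if missing:
    print("Not found on PATH:", ", ".join(missing))
else:
    print("All prerequisite tools were found on PATH.")
```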

You can run this repo virtually by using GitHub Codespaces or VS Code Remote Containers. Click on one of the buttons below to open this repo in one of those options.

- Create a new folder and switch to it in the terminal

- Run `azd auth login`

- Run `azd init -t azure-data-manager-for-energy-openai-demo`

- For the target location, the regions that currently support the models used in this sample are East US or South Central US. For an up-to-date list of regions and models, check here

Execute the following command, if you don't have any pre-existing Azure services and want to start from a fresh deployment.

- Run

az login --scope https://graph.microsoft.com//.default- This is used to perform the Databricks role assignments during deployment (in the absence of Bicep support (https://github.com/Azure/bicep/issues/11035)). - Check that you are logged in to the right subscription by running the command:

az account show --query "{SubscriptionName:name, SubscriptionId:id}". If you need to change to the right subscription then runaz account set --subscription <correct_subscription_id> - Run

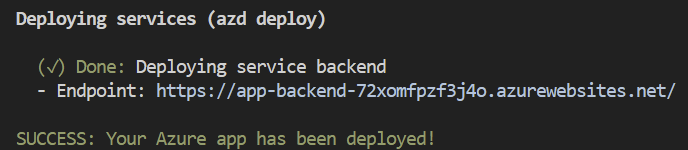

azd up- This will provision Azure resources and deploy this sample to those resources, including building the search index based on the files found in the./datafolder. - After the application has been successfully deployed you will see a URL printed to the console. Click that URL to interact with the application in your browser.

It will look like the following:

NOTE: It may take a minute for the application to be fully deployed. If you see a "Python Developer" welcome screen, then wait a minute and refresh the page.

- Run `azd env set AZURE_OPENAI_SERVICE {Name of existing OpenAI service}`

- Run `azd env set AZURE_OPENAI_RESOURCE_GROUP {Name of existing resource group that OpenAI service is provisioned to}`

- Run `azd env set AZURE_OPENAI_CHATGPT_DEPLOYMENT {Name of existing ChatGPT deployment}`. Only needed if your ChatGPT deployment is not the default 'chat'.

- Run `azd env set AZURE_OPENAI_GPT_DEPLOYMENT {Name of existing GPT deployment}`. Only needed if your GPT deployment is not the default 'davinci'.

- Run `azd up`

NOTE: You can also use existing Search and Storage Accounts. See `./infra/main.parameters.json` for the list of environment variables to pass to `azd env set` to configure those existing resources.
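When several settings point at existing resources, it can help to generate the `azd env set` commands from one place. The sketch below only builds and prints the commands. The resource names are placeholders, and the helper `azd_env_commands` is hypothetical. Deployment names that equal the defaults mentioned above ('chat' and 'davinci') are skipped.

```python
import shlex

# Placeholder values; replace them with the names of your existing resources.
settings = {
    "AZURE_OPENAI_SERVICE": "my-openai-service",
    "AZURE_OPENAI_RESOURCE_GROUP": "my-openai-rg",
    "AZURE_OPENAI_CHATGPT_DEPLOYMENT": "chat",
    "AZURE_OPENAI_GPT_DEPLOYMENT": "davinci",
}

# Defaults stated in this README; values equal to them need no override.
DEFAULTS = {
    "AZURE_OPENAI_CHATGPT_DEPLOYMENT": "chat",
    "AZURE_OPENAI_GPT_DEPLOYMENT": "davinci",
}

def azd_env_commands(values, defaults=DEFAULTS):
    """Build `azd env set` argument lists, skipping values equal to the defaults."""
    return [
        ["azd", "env", "set", name, value]
        for name, value in values.items()
        if defaults.get(name) != value
    ]

commands = azd_env_commands(settings)
for cmd in commands:
    # To execute instead of print: subprocess.run(cmd, check=True)
    print(shlex.join(cmd))
```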

- Simply run `azd up`

- Run `azd auth login`

- Change dir to `app`

- Run `./start.ps1` or `./start.sh` or run the "VS Code Task: Start App" to start the project locally.

Run the following if you want to give someone else access to a completely deployed, existing environment.

- Install the Azure CLI

- Run `azd init -t azure-data-manager-for-energy-openai-demo`

- Run `azd env refresh -e {environment name}` - Note that they will need the azd environment name, subscription Id, and location to run this command. You can find those values in your `./azure/{env name}/.env` file. This will populate their azd environment's .env file with all the settings needed to run the app locally.

- Run `pwsh ./scripts/roles.ps1` - This will assign all of the necessary roles to the user so they can run the app locally. If they do not have the necessary permission to create roles in the subscription, then you may need to run this script for them. Just be sure to set the `AZURE_PRINCIPAL_ID` environment variable in the azd .env file or in the active shell to their Azure Id, which they can get with `az account show`.
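The environment name, subscription Id, and location mentioned above can be read out of the azd `.env` file with a few lines of Python before sharing them. This is a minimal sketch: the key names (`AZURE_ENV_NAME`, `AZURE_SUBSCRIPTION_ID`, `AZURE_LOCATION`) are assumed to match what azd writes, and the sample content is made up. In practice you would pass an open file handle for your own `.env` file instead of the in-memory sample.

```python
import io

def parse_env(lines):
    """Parse KEY=value lines (optionally double-quoted) into a dict."""
    values = {}
    for raw in lines:
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue  # skip blanks, comments, and malformed lines
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"')
    return values

# Made-up example content; the key names are assumptions about azd's output.
sample = io.StringIO(
    'AZURE_ENV_NAME="contoso-demo"\n'
    'AZURE_LOCATION="eastus"\n'
    'AZURE_SUBSCRIPTION_ID="00000000-0000-0000-0000-000000000000"\n'
)

env = parse_env(sample)
for key in ("AZURE_ENV_NAME", "AZURE_SUBSCRIPTION_ID", "AZURE_LOCATION"):
    print(f"{key} = {env.get(key, '<missing>')}")
```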

- In Azure: navigate to the Azure WebApp deployed by azd. The URL is printed out when azd completes (as "Endpoint"), or you can find it in the Azure portal.

- Running locally: navigate to 127.0.0.1:5000

Once in the web app:

- Try different topics in chat or Q&A context. For chat, try follow-up questions, ask for clarifications, or ask it to simplify or elaborate on an answer.

- Explore citations and sources

- Click on "settings" to try different options, tweak prompts, etc.

- Revolutionize your Enterprise Data with ChatGPT: Next-gen Apps w/ Azure OpenAI and Cognitive Search

- Azure Data Manager for Energy

- Azure Cognitive Search

- Azure OpenAI Service

Note: The information contained in the sample documents is only for demonstration purposes and does not reflect the opinions or beliefs of Microsoft. Microsoft makes no representations or warranties of any kind, express or implied, about the completeness, accuracy, reliability, suitability or availability with respect to the information contained in this document. All rights reserved to Microsoft.

Question: Which data are you using?

Answer: In this demo we are using the open-source TNO dataset, available in the OSDU Forum GitLab. Specifically we are using the indexed versions of the master data and work product components (WPC):

- Master Data: Field

- Master Data: GeoPoliticalEntity

- Master Data: Organisation

- Master Data: Well

- Master Data: Wellbore

- Work Product Component: WellboreMarkerSet

- Work Product Component: WellLog

- Work Product Component: WellboreTrajectory

Question: Why do we need to break up the JSON documents into chunks when Azure Cognitive Search supports searching large documents?

Answer: Chunking limits the amount of information we send to OpenAI, which is necessary because of token limits. Breaking up the content also makes it easy to find the most relevant chunks of text to inject into the prompt.
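As an illustration of the idea, the sketch below flattens a JSON record into `path: value` lines and greedily packs them into chunks under a token budget. It is not the sample's actual data preparation code: the record is a made-up example, whitespace-separated words are a crude stand-in for a real tokenizer, and `flatten` and `chunk_lines` are hypothetical helper names.

```python
import json

def flatten(obj, prefix=""):
    """Yield 'path: value' lines for every leaf of a nested JSON object."""
    if isinstance(obj, dict):
        for key, value in obj.items():
            yield from flatten(value, f"{prefix}{key}.")
    elif isinstance(obj, list):
        for index, value in enumerate(obj):
            yield from flatten(value, f"{prefix}{index}.")
    else:
        yield f"{prefix.rstrip('.')}: {obj}"

def chunk_lines(lines, max_tokens):
    """Greedily pack lines into chunks of roughly max_tokens each.

    A single line longer than max_tokens still becomes its own chunk.
    """
    chunks, current, used = [], [], 0
    for line in lines:
        cost = len(line.split())  # crude token estimate: whitespace-separated words
        if current and used + cost > max_tokens:
            chunks.append("\n".join(current))
            current, used = [], 0
        current.append(line)
        used += cost
    if current:
        chunks.append("\n".join(current))
    return chunks

# Made-up record for illustration only.
record = json.loads("""
{"kind": "master-data--Well", "data": {"FacilityName": "A-01",
 "NameAliases": [{"AliasName": "Alpha 1"}, {"AliasName": "A1"}],
 "VerticalMeasurement": {"Value": 12.5, "Unit": "m"}}}
""")

chunks = chunk_lines(flatten(record), max_tokens=6)
for number, chunk in enumerate(chunks, start=1):
    print(f"--- chunk {number} ---\n{chunk}")
```

Keeping the full key path on every line means each chunk stays interpretable on its own, even when it is retrieved without its neighbors.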

Question: Why can I not retrieve aggregated summaries from the data?

Answer: Token limitations cap how much data can be retrieved and provided to the AI model, so the model cannot consume all the JSON documents and aggregate across them. For this use case you would need to parse the documents into a table structure or similar. The LLM can then generate the necessary programmatic queries (e.g., SQL or KQL), and the aggregated data can be retrieved from the table source directly.
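The table-based approach can be sketched with an in-memory SQLite database. Everything here is illustrative: the rows, the `wellbore` table schema, and the query are made up, and the SQL string is written by hand where a real system would ask the LLM to generate it.

```python
import sqlite3

# Hypothetical wellbore records, already parsed from JSON into flat rows.
rows = [
    ("A-01-S1", "A-01", "Alpha", 2450.0),
    ("A-01-S2", "A-01", "Alpha", 2610.5),
    ("B-07-S1", "B-07", "Bravo", 3120.0),
]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE wellbore (name TEXT, well TEXT, field TEXT, depth_m REAL)")
conn.executemany("INSERT INTO wellbore VALUES (?, ?, ?, ?)", rows)

# A query of the kind an LLM could be asked to generate for the question
# "How many wellbores are there per field, and how deep is the deepest one?"
generated_sql = """
    SELECT field, COUNT(*) AS wellbores, MAX(depth_m) AS max_depth_m
    FROM wellbore
    GROUP BY field
    ORDER BY field
"""

summary = conn.execute(generated_sql).fetchall()
for field, count, max_depth in summary:
    print(f"{field}: {count} wellbore(s), deepest {max_depth} m")
```

Because the database computes the aggregate, only the small result set needs to fit in the model's context, not the underlying documents.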

If you see this error while running `azd deploy`: `read /tmp/azd1992237260/backend_env/lib64: is a directory`, then delete the `./app/backend/backend_env` folder and re-run the `azd deploy` command. This issue is being tracked here: Azure/azure-dev#1237

If the web app fails to deploy and you receive a '404 Not Found' message in your browser, run `azd deploy`.