This repository contains everything needed to run a very simple LLM for inference, for example Mistral 7B, which is probably the best model to run locally at the moment. It also includes simple RAG functionality. Most importantly, it exposes metrics on how long it took to create a response and how long it took to generate the tokens.

It currently uses llama_cpp.

This is for testing only; use at your own risk! The main purpose is to learn hands-on how this stuff works and to instrument and characterize the behaviour of LLMs.

The following key metrics are exposed through Prometheus:

- token_creation_duration - Histogram for the time it took to generate the tokens.

- inference_response_duration - Histogram for the time it took to generate the full response (includes tokenization and embedding additional context).

- embedding_duration - Histogram for the time it took to create a vector representation of the query and lookup contextual information in the knowledge base.

- TODO: add more such as time it took to tokenize, read from KV store etc; also check if we can add tracing.
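To make the idea behind the duration histograms concrete, here is a minimal sketch using only the Rust standard library. It is not the code this service uses: the `Histogram` type, the `timed` helper and the bucket bounds are all hypothetical and only illustrate how a closure can be timed and the result sorted into cumulative buckets, which is roughly how a Prometheus histogram counts observations.

```rust
use std::time::{Duration, Instant};

/// Hypothetical, hand-rolled histogram with cumulative buckets.
struct Histogram {
    /// Upper bounds of the buckets in seconds, sorted ascending.
    bounds: Vec<f64>,
    /// counts[i] = observations <= bounds[i]; the last entry is the +Inf bucket.
    counts: Vec<u64>,
    /// Sum of all observed durations in seconds.
    sum: f64,
}

impl Histogram {
    fn new(bounds: Vec<f64>) -> Self {
        let counts = vec![0; bounds.len() + 1];
        Histogram { bounds, counts, sum: 0.0 }
    }

    /// Records one duration in every bucket whose bound is large enough.
    fn observe(&mut self, d: Duration) {
        let secs = d.as_secs_f64();
        self.sum += secs;
        for (i, bound) in self.bounds.iter().enumerate() {
            if secs <= *bound {
                self.counts[i] += 1;
            }
        }
        // The +Inf bucket counts every observation.
        let last = self.counts.len() - 1;
        self.counts[last] += 1;
    }
}

/// Runs `f`, records how long it took in `hist`, and returns its result.
fn timed<T>(hist: &mut Histogram, f: impl FnOnce() -> T) -> T {
    let start = Instant::now();
    let out = f();
    hist.observe(start.elapsed());
    out
}

fn main() {
    // Bucket bounds are made up for this example.
    let mut token_creation = Histogram::new(vec![0.1, 0.5, 1.0, 5.0]);
    // Stand-in for the real token generation work.
    let result = timed(&mut token_creation, || (0..1000u64).sum::<u64>());
    println!(
        "result={} observations={} total_seconds={}",
        result,
        token_creation.counts[token_creation.counts.len() - 1],
        token_creation.sum
    );
}
```

Wrapping each stage (embedding, token generation, full response) in its own `timed` call would give one histogram per stage, which matches the split between the three metrics listed above.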

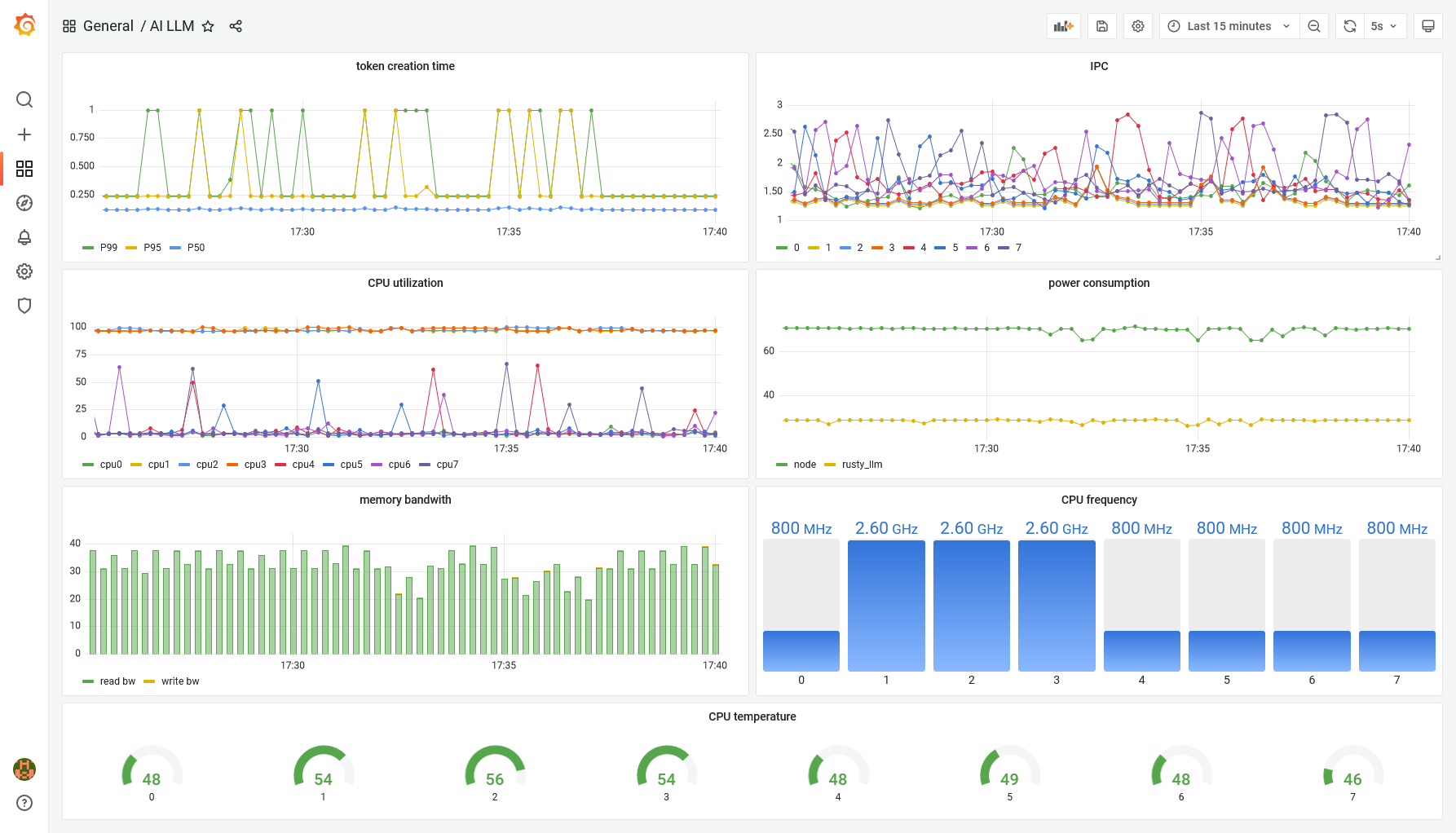

Here is an example dashboard that capture the metrics described as well as some host metrics such as power, CPU utilisation etc.:

You will need to download a model and embedding model:

- This mistral-7b-instruct-v0.2.Q4_K_M.gguf model seems to give reasonably good results. Otherwise, give Phi-3.5-mini-instruct-Q4_K_S.gguf a try.

- This bge-base-en-v1.5.Q8_0.gguf embedding model seems to work as well.

Best to put both files into a model/ folder as model.gguf and embed.gguf.

This service can be configured through environment variables. The following variables are supported:

| Environment variable | Description | Example/Default |
|---|---|---|
| DATA_PATH | Directory path from which to read text files into the knowledge base. | data |
| EMBEDDING_MODEL | Full path of the embedding model to use. | model/embed.gguf |
| HTTP_ADDRESS | Bind address to use. | 127.0.0.1:8080 |
| HTTP_WORKERS | Number of threads to run with the HTTP server. | 1 |
| MAIN_GPU | Identifies which GPU we should use. | 0 |
| MODEL_BATCH_SIZE | Batch size to use. | 8 |
| MODEL_GPU_LAYERS | Number of layers to offload to GPU. | 0 |
| MODEL_MAX_TOKEN | Maximum number of tokens to generate. | 128 |
| MODEL_PATH | Full path to the gguf file of the model. | model/model.gguf |
| MODEL_THREADS | Number of threads we'll use for inference. | 6 |
| PROMETHEUS_HTTP_ADDRESS | Bind address to use for Prometheus. | 127.0.0.1:8081 |
| PROMPT_TEMPLATE | A prompt template - should contain {context} and {query} elements. | Mistral prompt |

Other environment variables such as RUST_LOG can also be used.
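As an illustration of this configuration style, the sketch below reads a few of the variables from the table and falls back to a default when a variable is unset. It is an assumption-laden example, not the service's actual code: the `Config` struct, `get_or` and `load_config` are hypothetical names, the values from the "Example/Default" column are treated as defaults, and silently falling back on an unparsable value is a simplification that a real service might replace with a hard error.

```rust
use std::env;
use std::str::FromStr;

/// Hypothetical subset of the settings listed in the table.
#[derive(Debug, PartialEq)]
struct Config {
    http_address: String,
    model_path: String,
    model_max_token: usize,
    model_threads: usize,
}

/// Returns the parsed value for `key`, or `default` if it is unset or unparsable.
fn get_or<T: FromStr>(lookup: &dyn Fn(&str) -> Option<String>, key: &str, default: T) -> T {
    lookup(key).and_then(|v| v.parse().ok()).unwrap_or(default)
}

/// Builds the configuration from any key/value source, e.g. the process environment.
fn load_config(lookup: &dyn Fn(&str) -> Option<String>) -> Config {
    Config {
        http_address: get_or(lookup, "HTTP_ADDRESS", "127.0.0.1:8080".to_string()),
        model_path: get_or(lookup, "MODEL_PATH", "model/model.gguf".to_string()),
        model_max_token: get_or(lookup, "MODEL_MAX_TOKEN", 128),
        model_threads: get_or(lookup, "MODEL_THREADS", 6),
    }
}

fn main() {
    // Read from the real environment; unset variables use the assumed defaults.
    let cfg = load_config(&|key: &str| env::var(key).ok());
    println!("{:?}", cfg);
}
```

Passing the lookup as a closure keeps `load_config` independent of the process environment, so it can be exercised with a fixed set of values.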

The following curl commands show the format the service understands:

$ curl -X POST localhost:8080/query -d '{"query": "Who was Albert Einstein?"}' -H "Content-Type: application/json"

{"response":"[INST]Using this information: [] answer the Question: Who was Albert Einstein?[/INST] Albert Einstein (14 March 1879 – 18 April 1955) was a German-born theoretical physicist [...]"}
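The response above echoes the filled-in prompt, which suggests how PROMPT_TEMPLATE is applied: {context} is replaced by the retrieved snippets (an empty list prints as `[]`) and {query} by the question. The sketch below reproduces that substitution. The template string is inferred from the example output and the `render_prompt` function is hypothetical, so treat both as assumptions rather than the service's implementation.

```rust
/// Fills `{context}` and `{query}` in a single pass over the template, so that
/// placeholder-like text inside the query or context is not expanded again.
fn render_prompt(template: &str, context: &[String], query: &str) -> String {
    // Debug formatting prints a list as [] or ["a", "b"], matching the example output.
    let context_text = format!("{:?}", context);
    let mut out = String::new();
    let mut rest = template;
    loop {
        let c = rest.find("{context}");
        let q = rest.find("{query}");
        // Pick whichever placeholder comes first in the remaining template.
        let (pos, len, value) = match (c, q) {
            (Some(c), Some(q)) if c < q => (c, "{context}".len(), context_text.as_str()),
            (Some(c), None) => (c, "{context}".len(), context_text.as_str()),
            (_, Some(q)) => (q, "{query}".len(), query),
            (None, None) => break,
        };
        out.push_str(&rest[..pos]);
        out.push_str(value);
        rest = &rest[pos + len..];
    }
    out.push_str(rest);
    out
}

fn main() {
    // Template inferred from the example response; the real default may differ.
    let template = "[INST]Using this information: {context} answer the Question: {query}[/INST]";
    let prompt = render_prompt(template, &[], "Who was Albert Einstein?");
    println!("{}", prompt);
}
```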

You can also test if the RAG works by running the following query - notice how easy it is to trick these word prediction machines:

$ curl -X POST localhost:8080/query -d '{"query": "Who was thom Rhubarb?"}' -H "Content-Type: application/json"
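For readers new to RAG, the lookup step can be pictured as a nearest-neighbour search over embedding vectors. The sketch below is a brute-force version using cosine similarity. How this repository actually stores and searches its knowledge base is not described here, so `cosine_similarity`, `top_k` and the toy two-dimensional vectors are purely illustrative assumptions.

```rust
/// Cosine similarity of two vectors; returns 0.0 if either has zero length.
fn cosine_similarity(a: &[f32], b: &[f32]) -> f32 {
    let dot: f32 = a.iter().zip(b).map(|(x, y)| x * y).sum();
    let norm_a: f32 = a.iter().map(|x| x * x).sum::<f32>().sqrt();
    let norm_b: f32 = b.iter().map(|x| x * x).sum::<f32>().sqrt();
    if norm_a == 0.0 || norm_b == 0.0 {
        0.0
    } else {
        dot / (norm_a * norm_b)
    }
}

/// Returns the texts of the `k` documents most similar to `query`, best first.
fn top_k<'a>(query: &[f32], docs: &'a [(String, Vec<f32>)], k: usize) -> Vec<&'a str> {
    let mut scored: Vec<(f32, &str)> = docs
        .iter()
        .map(|(text, embedding)| (cosine_similarity(query, embedding), text.as_str()))
        .collect();
    // Sort by descending similarity.
    scored.sort_by(|a, b| b.0.total_cmp(&a.0));
    scored.into_iter().take(k).map(|(_, text)| text).collect()
}

fn main() {
    // Toy embeddings; a real embedding model produces much longer vectors.
    let docs = vec![
        ("note about cats".to_string(), vec![1.0, 0.0]),
        ("note about dogs".to_string(), vec![0.0, 1.0]),
        ("note about kittens".to_string(), vec![0.9, 0.1]),
    ];
    let query = vec![1.0, 0.0];
    for text in top_k(&query, &docs, 2) {
        println!("{}", text);
    }
}
```

Whatever the retrieval step returns is pasted into the prompt as context, which is why a text file in the knowledge base can steer the answer so easily.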

The Dockerfile used to build the image is best run on a machine with the same CPU as the one where the image will be deployed, because it uses the target-cpu=native flag. Note that it can also optionally include options to build with CLBlast for GPU support.

Use the following example manifest to deploy this application:

kubectl apply -f k8s_deployment.yaml

Note: make sure to adapt the docker image & paths - the manifest above uses hostPaths!

- 0.1.0 - initial release.

- 0.2.0 - switched to llama_cpp as development of llm stopped.

- 0.3.0 - replaced the way we store knowledge.

Some of the following links can be useful: