Differences from the original MultiMAE implementation

- Aim to train with Python 3.10 on Kaggle

- Use `typed-argument-parser` instead of `argparse.ArgumentParser`: it provides type hints, which make the code easier to understand and debug.

Kaggle Notebook: Pretrain MultiMAE for RGB-D SOD

More details are available in the Wandb report.

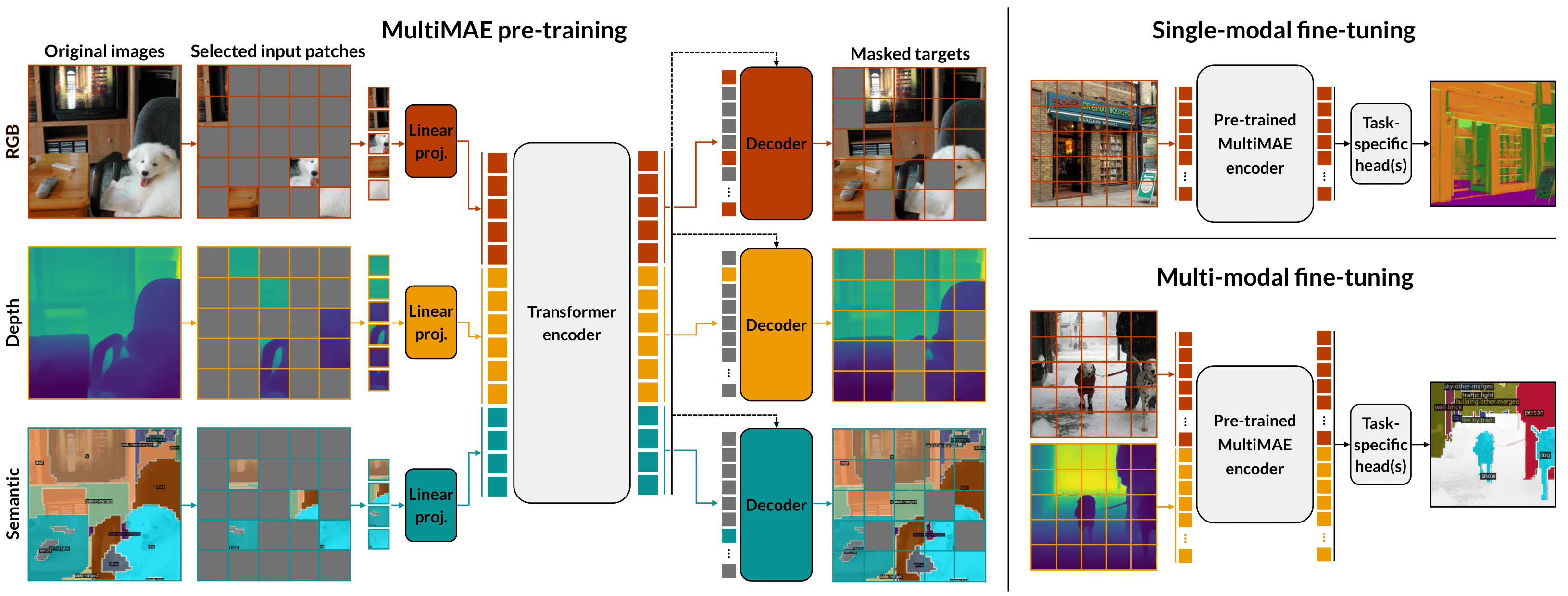

This project is inspired by MultiMAE:

@article{bachmann2022multimae,
  title={MultiMAE: Multi-modal Multi-task Masked Autoencoders},
  author={Bachmann, Roman and Mizrahi, David and Atanov, Andrei and Zamir, Amir},
  journal={arXiv preprint arXiv:2204.01678},
  year={2022}
}