Simplified instructions to install Kubeflow Pipelines (KFP) on OpenShift, running on OpenShift Pipelines (Tekton).

- OpenShift 4 Cluster

- Admin access to cluster

- OpenShift CLI

Log in to the cluster using oc login and admin credentials.
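A quick preflight check along the following lines can confirm that the CLI is installed and that the session is authenticated. This is a minimal sketch, not part of the original instructions: `missing_tools` is a hypothetical helper name, and the only cluster command it relies on is `oc whoami`.

```shell
# Print the name of every listed tool that cannot be found on PATH.
# (missing_tools is a hypothetical helper, not part of oc.)
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

missing=$(missing_tools oc)
if [ -n "$missing" ]; then
  echo "Not found on PATH: $missing" >&2
else
  # oc whoami prints the current user and fails if the session is not logged in.
  oc whoami || echo "Not logged in; run oc login first" >&2
fi
```

The same helper can be reused later in the guide, for example `missing_tools tkn kfctl`, once those binaries have been downloaded.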

Install Open Data Hub

- Log in to the cluster using the console and admin credentials.

- Navigate to OperatorHub and search for Open Data Hub Operator.

- Install using the beta channel. The version installed in this guide is 1.0.

- Under the installed operators, you should see the status of the operator change to Succeeded.

Install OpenShift Pipelines (Tekton)

- Log in to the cluster using the console and admin credentials.

- Navigate to OperatorHub and search for OpenShift Pipelines.

- Pick the channel corresponding to your version of OpenShift.

- Install and verify the status of the operator changes to Succeeded.
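The Succeeded status for both operators can also be checked from the command line. The sketch below assumes the operators were installed cluster-wide into the `openshift-operators` namespace, which is a common default but is not stated in this guide; pass a different namespace if yours differs. `csv_phases` and `all_succeeded` are hypothetical helper names.

```shell
# Print "<name> <phase>" for each ClusterServiceVersion (csv) in a namespace.
# The default namespace is an assumption; override it with the first argument.
csv_phases() {
  oc get csv -n "${1:-openshift-operators}" \
    -o jsonpath='{range .items[*]}{.metadata.name}{" "}{.status.phase}{"\n"}{end}'
}

# Read csv_phases output on stdin. Succeed only if at least one CSV is
# listed and every listed CSV is in the Succeeded phase.
all_succeeded() {
  awk '{ n++; if ($2 != "Succeeded") bad++ } END { exit !(n > 0 && bad == 0) }'
}
```

For example, `csv_phases | all_succeeded && echo "operators ready"` prints the message only once every listed operator has finished installing.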

Follow these instructions to prepare your environment and download the tkn and kfctl binaries.

Deploy KFP

export KFDEF_DIR=<set-your-path>

mkdir -p ${KFDEF_DIR}

cd ${KFDEF_DIR}

export CONFIG_URI=https://raw.githubusercontent.com/IBM/KubeflowDojo/master/OpenShift/manifests/kfctl_tekton_openshift_minimal.v1.1.0.yaml

kfctl apply -V -f ${CONFIG_URI}

Open KFP console in your browser
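Before exposing the UI, it may help to confirm that the deployment created by kfctl apply has settled. The sketch below is one possible check rather than a step from the original guide: `wait_for_pods` is a hypothetical helper, and the 300s default timeout is an arbitrary assumption.

```shell
# Wait until every pod in the namespace reports Ready, or the timeout expires.
# On failure, list the pods so the ones that are stuck are visible.
wait_for_pods() {
  ns="${1:-kubeflow}"        # namespace used throughout this guide
  timeout="${2:-300s}"       # assumed default, adjust as needed
  if ! oc wait --for=condition=Ready pods --all -n "$ns" --timeout="$timeout"; then
    oc get pods -n "$ns"
    return 1
  fi
}
```

Call it as `wait_for_pods kubeflow 600s`. Pods that belong to completed Jobs never become Ready, so if the namespace contains any, the wait can time out even though the deployment is healthy; in that case the pod listing is the thing to read.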

oc expose svc ml-pipeline-ui -n kubeflow

echo $(oc get route ml-pipeline-ui -n kubeflow --template='http://{{.spec.host}}')

Install the KFP-Tekton-SDK

Remember to add dsl-compile-tekton to your path. If you have both Python2 and Python3, you might have to add the binary to your path, e.g.

export PATH=$PATH:/Users/.../Library/Python/3.8/bin
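Rather than typing the directory by hand, the per-user script directory can usually be derived from Python itself. The following is a sketch under two assumptions: the SDK was installed with pip's --user option on Linux or macOS, so its console scripts live in the bin/ subdirectory of the user base, and `path_append` is a hypothetical helper name.

```shell
# Append a directory to PATH unless it is already listed.
path_append() {
  case ":$PATH:" in
    *":$1:"*) ;;               # already present, nothing to do
    *) PATH="$PATH:$1" ;;
  esac
}

# python3 -m site --user-base prints the per-user install prefix;
# user installs place console scripts in its bin/ subdirectory (assumed layout).
if command -v python3 >/dev/null 2>&1; then
  path_append "$(python3 -m site --user-base)/bin"
  export PATH
fi

# Report whether the compiler is now reachable.
command -v dsl-compile-tekton || echo "dsl-compile-tekton not found on PATH" >&2
```

The change only lasts for the current shell session; adding the equivalent export line to your shell profile makes it permanent.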

Make a local copy of the echo pipeline example as echo_pipeline.py.

Make a local copy of the sequential example as sequential.py.

Compile both examples

dsl-compile-tekton --py echo_pipeline.py --output echo_pipeline.yaml

dsl-compile-tekton --py sequential.py --output sequential.tar.gz

Open KFP console in your browser
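Before uploading anything, it may be worth confirming that both compile commands above actually produced non-empty files. A minimal sketch, with `check_artifacts` as a hypothetical helper name:

```shell
# Succeed only if every named file exists and is non-empty.
check_artifacts() {
  status=0
  for f in "$@"; do
    if [ -s "$f" ]; then
      echo "ok: $f"
    else
      echo "missing or empty: $f" >&2
      status=1
    fi
  done
  return "$status"
}
```

Run it from the directory that holds the compiled output: `check_artifacts echo_pipeline.yaml sequential.tar.gz`.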

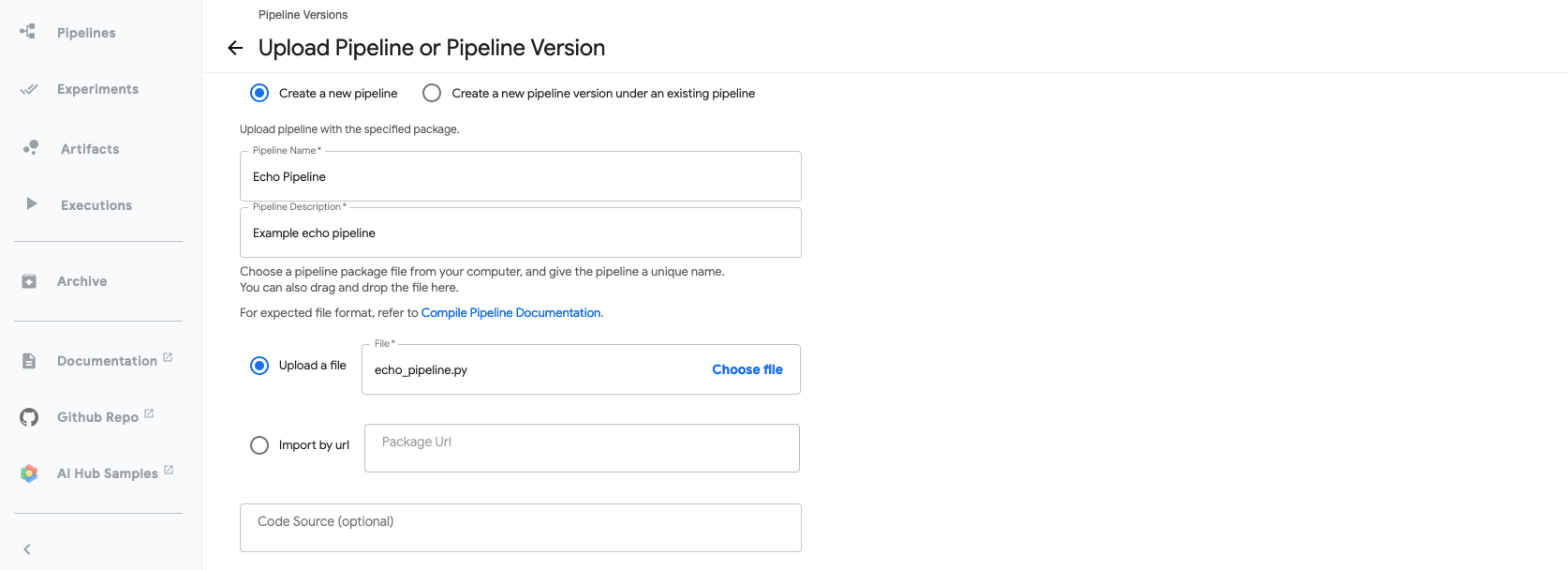

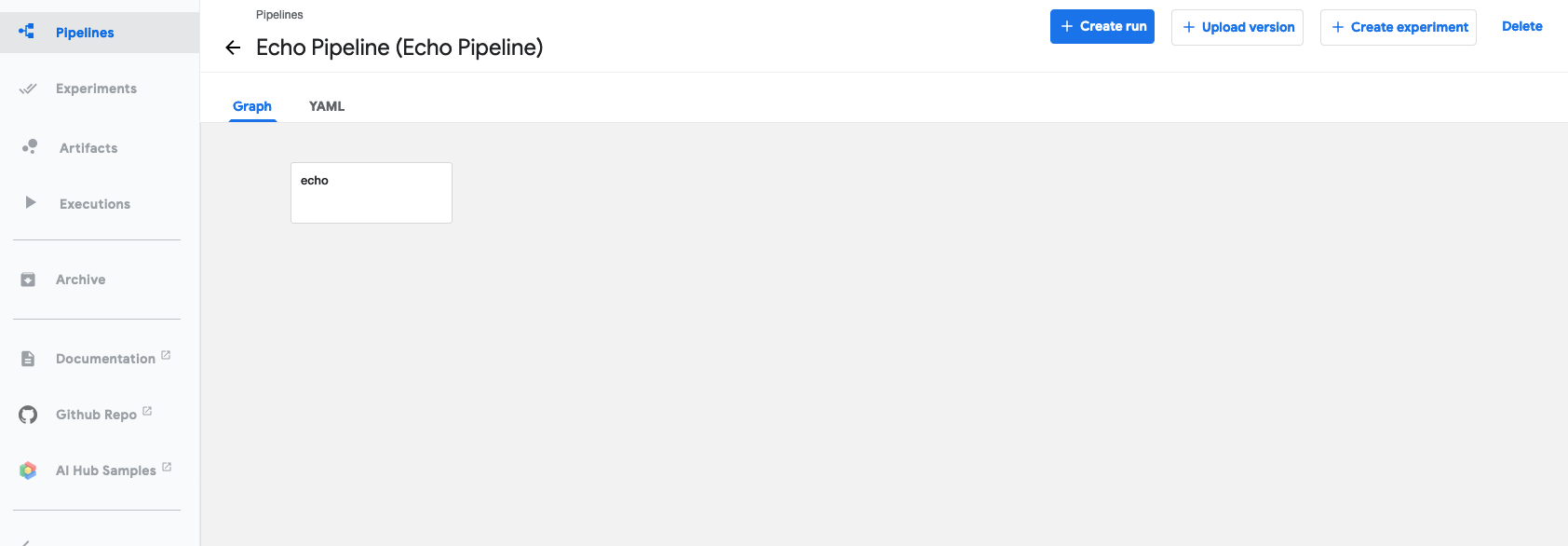

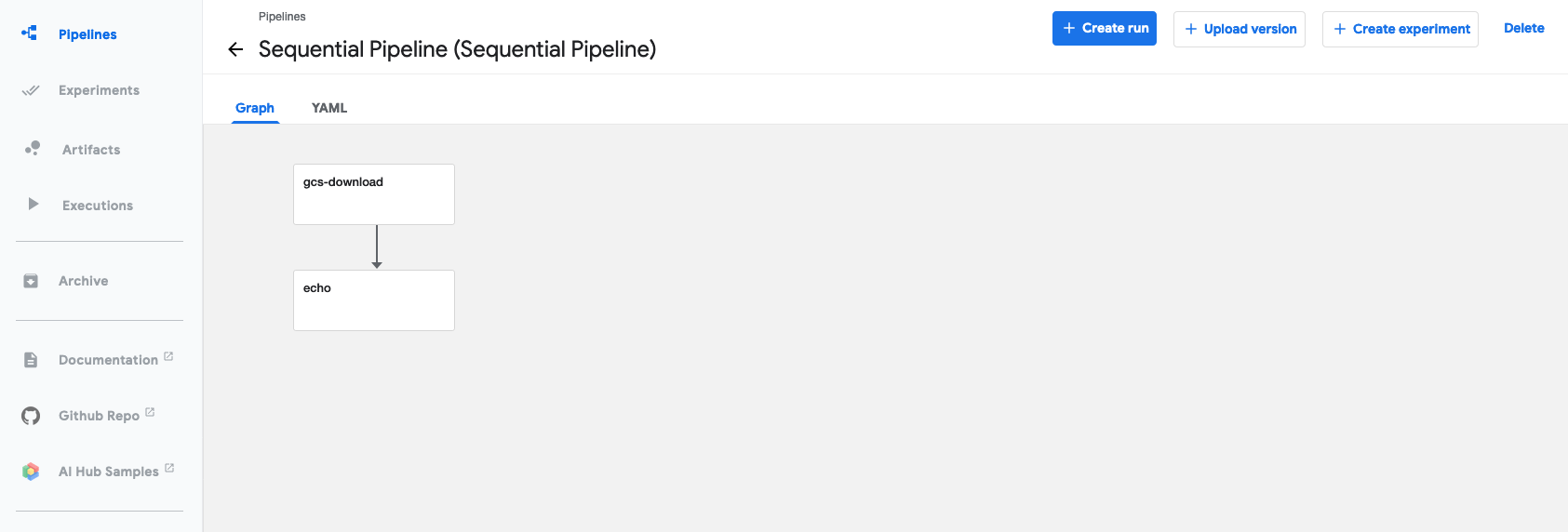

echo $(oc get route ml-pipeline-ui -n kubeflow --template='http://{{.spec.host}}')

Upload pipelines echo_pipeline.yaml and sequential.tar.gz. For example,

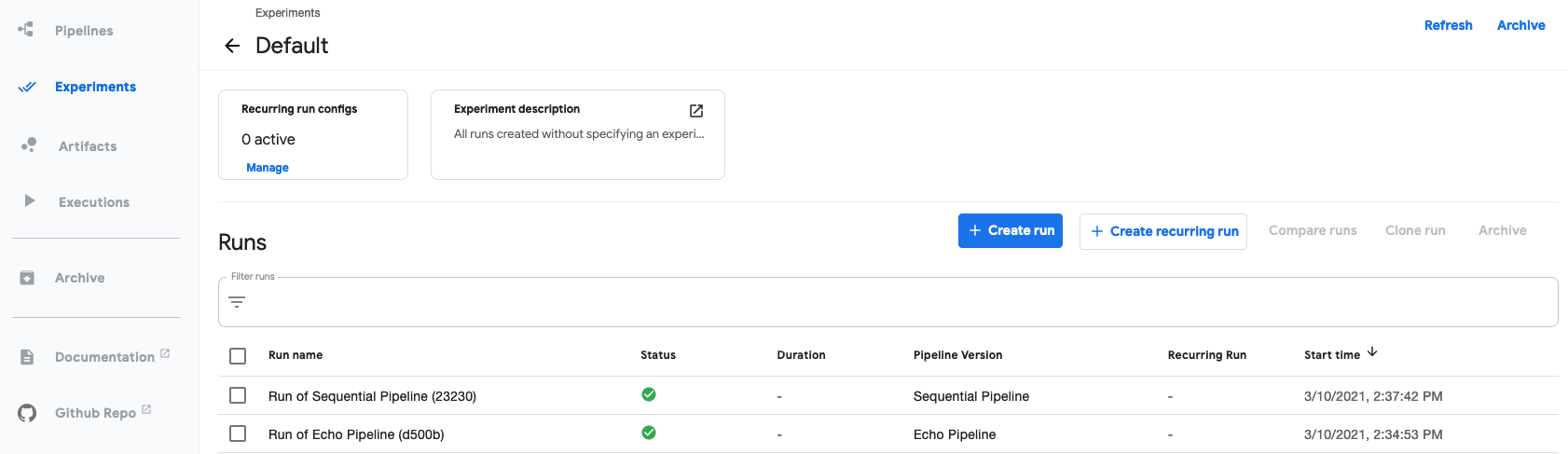

For each pipeline, start a Pipeline Run in the Default Experiment.

If you navigate to your Default Experiment, you should see both pipeline runs complete.
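The runs can also be inspected from the command line. Because this setup executes pipelines on Tekton, each run should appear as a Tekton PipelineRun; the sketch below assumes those resources are created in the kubeflow namespace, which this guide does not state explicitly. `list_pipeline_runs` is a hypothetical helper name.

```shell
# List Tekton PipelineRuns in a namespace, preferring tkn when it is
# installed and falling back to oc otherwise.
list_pipeline_runs() {
  ns="${1:-kubeflow}"   # assumed namespace for the runs
  if command -v tkn >/dev/null 2>&1; then
    tkn pipelinerun list -n "$ns"
  else
    oc get pipelineruns -n "$ns"
  fi
}
```

Calling `list_pipeline_runs` with no argument lists the runs in kubeflow; both runs started above should show up there once they have been scheduled.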