Create synthetic datasets for object detection using Blender.

Blender Synthetics is a repository designed to help you create synthetic datasets for object detection tasks. It automates the process of generating random scenes in Blender, importing custom 3D models with randomized configurations, and rendering numerous images with annotations. These datasets can be used to train and evaluate object detection models.

This repo was tested on the following:

- Python 3.10

- Blender 3.2 or 3.3 (Linux), Blender 3.6.4 (Windows)

Clone this repository and enter its folder:

git clone https://github.com/tehwenyi/blender-synthetics.git
cd blender-synthetics

Setup on Linux:

Install Blender:


Follow the official Blender installation instructions.


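Add Blender to your PATH by running the following command. Replace /path/to/blender/directory with the folder that contains the blender executable. Then open a new terminal or run `source ~/.bashrc` so the change takes effect:

echo 'export PATH=/path/to/blender/directory:$PATH' >> ~/.bashrc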
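You can confirm that the shell now finds Blender with a small Python check like the one below. It is a hypothetical helper and not part of the repo. The function names are illustrative, and it relies only on `shutil.which` and Blender's `--version` flag.

```python
import shutil
import subprocess
from typing import Optional


def find_blender(executable: str = "blender") -> Optional[str]:
    """Return the full path that `executable` resolves to on PATH, or None."""
    return shutil.which(executable)


def blender_version(executable: str = "blender") -> Optional[str]:
    """Return the first line printed by `blender --version`, or None if not found."""
    path = find_blender(executable)
    if path is None:
        return None
    result = subprocess.run([path, "--version"], capture_output=True, text=True, check=False)
    lines = result.stdout.strip().splitlines()
    return lines[0] if lines else None


if __name__ == "__main__":
    found = find_blender()
    if found is None:
        print("blender is not on PATH; re-check the export line and reload your shell")
    else:
        print(f"{found}: {blender_version()}")
```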


Verify GPU Compatibility:

Ensure your GPU is supported by Blender. Refer to the official documentation for compatibility details.


Install Required Packages:

sh install_requirements.sh


Setup on Windows:

Install Blender:

Download Blender from the official website: Blender Downloads.


(Anaconda) Create a Virtual Environment with Anaconda:


Follow Anaconda installation instructions: Anaconda Installation.


Launch Anaconda Prompt and create a virtual environment for Blender:

conda create --name blender python=3.10


(Anaconda) Add Blender to the PATH:


Create a batch script (a `.bat` file) that launches Blender. Using a text editor, create a new file with a `.bat` extension (e.g., `blender.bat`). The file name becomes the command name, so this name lets the `blender ...` commands in later steps work as written. Add the following line, replacing the path with your actual Blender executable path:

"C:\Program Files\Blender Foundation\Blender 3.6\blender.exe" %*

The trailing `%*` forwards any command-line arguments (such as `-b` or `-P`) to Blender.

Copy the batch script to your Anaconda environment's `Scripts` directory (e.g., "C:\Anaconda3\envs\your_env_name\Scripts").

If you are not using Anaconda, you will need to do the equivalent for your virtual environment or PowerShell.
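If you prefer to generate the launcher instead of writing it by hand, the following sketch writes it into the active conda environment's `Scripts` folder. The script name `make_launcher.py` and the function names are hypothetical. It assumes a conda environment on Windows, where `sys.prefix` is the environment root. `@echo off` and `%*` are standard batch-file idioms.

```python
import sys
from pathlib import Path


def launcher_text(blender_exe: str) -> str:
    """Contents of a Windows batch launcher for Blender.

    @echo off stops cmd from echoing the command; %* forwards every argument
    (for example -b -P src/render_blender.py) to blender.exe.
    """
    return f'@echo off\r\n"{blender_exe}" %*\r\n'


def write_launcher(blender_exe: str, scripts_dir: str, name: str = "blender.bat") -> Path:
    """Write the launcher into scripts_dir (e.g. the conda env's Scripts folder)."""
    target = Path(scripts_dir) / name
    # Write bytes so the CRLF line endings are kept exactly as built above.
    target.write_bytes(launcher_text(blender_exe).encode("utf-8"))
    return target


if __name__ == "__main__" and len(sys.argv) == 2:
    # Usage, from the activated env:
    #   python make_launcher.py "C:\Program Files\Blender Foundation\Blender 3.6\blender.exe"
    # sys.prefix is the active environment's root; Scripts sits directly under it.
    print(write_launcher(sys.argv[1], str(Path(sys.prefix) / "Scripts")))
```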


(Anaconda) Activate Your Anaconda Environment:

conda activate blender


Install bpycv in Blender:

You may run these commands in PowerShell or your virtual environment. Here `$TARGET` is the directory pip installs the packages into (pip's `--target` option), so set it before running them:

blender -b --python-expr "from subprocess import sys, call; call([sys.executable,'-m','ensurepip'])"

blender -b --python-expr "from subprocess import sys, call; call([sys.executable]+'-m pip install --target="$TARGET" -U pip setuptools wheel'.split())"

blender -b --python-expr "from subprocess import sys, call; call([sys.executable]+'-m pip install --target="$TARGET" -U bpycv'.split())"
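Nested quotes inside `--python-expr` are easy to break, especially in PowerShell. One alternative is to save the same three pip steps as a script and run it with `blender -b --python install_bpycv.py`, as sketched below. The file name is hypothetical. The optional target directory goes after `--`, because Blender leaves arguments after `--` for the script. Inside Blender, `sys.executable` is Blender's bundled Python, so the commands target the same interpreter as the one-liners above.

```python
import subprocess
import sys
from typing import List, Optional


def pip_commands(python: str, target: Optional[str] = None) -> List[List[str]]:
    """The three install steps as argument lists for the given interpreter.

    `target` plays the role of --target="$TARGET"; None lets pip use the
    interpreter's default site-packages.
    """
    target_args = [f"--target={target}"] if target else []
    return [
        [python, "-m", "ensurepip"],
        [python, "-m", "pip", "install", *target_args, "-U", "pip", "setuptools", "wheel"],
        [python, "-m", "pip", "install", *target_args, "-U", "bpycv"],
    ]


def install(target: Optional[str] = None) -> None:
    # Inside Blender, sys.executable is Blender's bundled Python interpreter.
    for cmd in pip_commands(sys.executable, target):
        subprocess.check_call(cmd)  # raises CalledProcessError if a step fails


if __name__ == "__main__":
    try:
        import bpy  # noqa: F401  (only importable inside Blender)
    except ImportError:
        print("Run this through Blender: blender -b --python install_bpycv.py [-- TARGET_DIR]")
    else:
        extra = sys.argv[sys.argv.index("--") + 1:] if "--" in sys.argv else []
        install(extra[0] if extra else None)
```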


Install Required Python Packages in Blender:

Locate Blender's Python executable in the Blender installation directory (e.g., "C:\Program Files\Blender Foundation\Blender 3.6\3.6\python\bin\python.exe").

Make sure you are in this repo's folder (the one containing `requirements.txt`), then use one of the following options.

Option 1: Run the following in PowerShell (with administrative rights):

& "C:\Program Files\Blender Foundation\Blender 3.6\3.6\python\bin\python.exe" -m pip install -r requirements.txt

(Anaconda) Option 2: Run the following command in your Anaconda Prompt:

"C:\Program Files\Blender Foundation\Blender 3.6\3.6\python\bin\python.exe" -m pip install -r requirements.txt

Option 3: Install the packages via your virtual environment.
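The bundled interpreter path in the example above follows a simple pattern. It starts with the install folder, then a folder named after the Blender major.minor version, then `python\bin\python.exe`. The sketch below builds that path and the matching pip command. The function names are hypothetical. It assumes the Windows layout shown above and that it is run from the repo folder containing `requirements.txt`.

```python
import subprocess
from pathlib import Path, PureWindowsPath
from typing import List


def blender_python(install_dir: str, version: str) -> PureWindowsPath:
    """<install_dir>\\<version>\\python\\bin\\python.exe, as in the example path."""
    return PureWindowsPath(install_dir, version, "python", "bin", "python.exe")


def requirements_command(python_exe: str, requirements: str = "requirements.txt") -> List[str]:
    """pip command that installs the repo's requirements into the given interpreter."""
    return [python_exe, "-m", "pip", "install", "-r", requirements]


if __name__ == "__main__":
    exe = blender_python(r"C:\Program Files\Blender Foundation\Blender 3.6", "3.6")
    # Only run pip when both the interpreter and requirements.txt are actually present.
    if Path(exe).exists() and Path("requirements.txt").exists():
        subprocess.check_call(requirements_command(str(exe)))
    else:
        print(f"Not found: {exe} (or requirements.txt in the current folder)")
```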


Setup Completed

You have completed the setup. Proceed to Generate synthetics to run the synthetic dataset generation process.

Generating images can be done from PowerShell or your virtual environment, but generating annotations must be done in your virtual environment.

To generate synthetic datasets for object detection:


Update `config/models.yaml` and `config/render_parameters.yaml` as needed. Refer to Models and Parameters for more details.

Generate Images:

blender -b -P src/render_blender.py

Generate Annotations:

python3 src/create_labels.py
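If you run both steps often, a small driver can chain them. The helper below is hypothetical and not part of the repo. It only wraps the two commands above. It uses the current Python interpreter for the annotation step, since that step must run in your virtual environment. On Windows, `shutil.which` also resolves a `blender.bat` launcher to its full path.

```python
import shutil
import subprocess
import sys
from typing import List


def pipeline_commands(python: str = sys.executable) -> List[List[str]]:
    """The two generation steps: render images with Blender, then write annotations."""
    blender = shutil.which("blender") or "blender"  # fall back to the bare name
    return [
        [blender, "-b", "-P", "src/render_blender.py"],  # -b: no UI, -P: run this script
        [python, "src/create_labels.py"],  # run with the current (virtual-env) interpreter
    ]


def run_pipeline(dry_run: bool = True) -> List[List[str]]:
    commands = pipeline_commands()
    for cmd in commands:
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # stop if a step fails
    return commands


if __name__ == "__main__":
    # Prints the commands by default; pass --run to execute them from the repo root.
    run_pipeline(dry_run="--run" not in sys.argv[1:])
```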

Supported model formats are currently FBX, OBJ, and .blend. Ensure each model file contains only one object, and that this object has the same name as the file.
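A quick pre-flight check can catch models that break these rules before a long render. The sketch below is hypothetical. It takes the object names as a plain list (inside Blender you would gather them from the imported scene), and the path `models/drone.fbx` is a placeholder. It also assumes that "same name as the file" means the file name without its extension.

```python
from pathlib import Path
from typing import Iterable, List

SUPPORTED_EXTENSIONS = {".fbx", ".obj", ".blend"}


def check_model(path: str, object_names: Iterable[str]) -> List[str]:
    """Return the problems found for one model file; an empty list means it passes."""
    model = Path(path)
    problems = []
    if model.suffix.lower() not in SUPPORTED_EXTENSIONS:
        problems.append(f"unsupported format: {model.suffix or '(none)'}")
    names = list(object_names)
    if len(names) != 1:
        problems.append(f"expected exactly one object, found {len(names)}")
    elif names[0] != model.stem:
        # Assumption: the object name must equal the file name minus its extension.
        problems.append(f"object {names[0]!r} does not match file name {model.stem!r}")
    return problems


print(check_model("models/drone.fbx", ["drone"]))  # []
print(check_model("models/drone.fbx", ["Cube"]))   # ["object 'Cube' does not match file name 'drone'"]
```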

Target models are your objects of interest. Bounding boxes will be drawn around these objects.

Other objects will also be present in the scene, but they won't be annotated.

Textures are the textures your scene may use. Browse available textures on Texture Haven, and store all texture subfolders in a single main folder.
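In the expected layout, one main folder holds one subfolder per texture. The sketch below shows how such a layout could be scanned and sampled. It is hypothetical: the folder names are placeholders, and the repo's own loader may work differently. A typical call would be `pick_texture_set(texture_sets("textures"), random.Random(0))`.

```python
import random
from pathlib import Path
from typing import List, Optional


def texture_sets(textures_root: str) -> List[Path]:
    """Each immediate subfolder of the main textures folder is one texture set."""
    root = Path(textures_root)
    if not root.is_dir():
        return []
    return sorted(p for p in root.iterdir() if p.is_dir())


def pick_texture_set(sets: List[Path], rng: random.Random) -> Optional[Path]:
    """Choose one texture set at random (None if there are no subfolders)."""
    return rng.choice(sets) if sets else None
```

Passing in a seeded `random.Random` keeps texture choices reproducible between runs.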

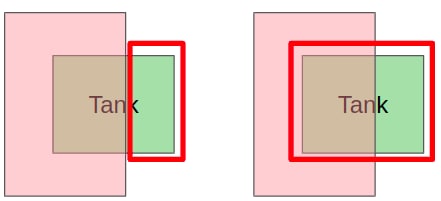

When occlusion awareness is turned off, bounding boxes also cover parts of the object that are hidden from the camera (for example, parts behind another object).
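The difference can be illustrated with per-pixel masks. The sketch below is a toy illustration, not the repo's implementation. The non-occlusion-aware box comes from the object's full silhouette, while the occlusion-aware box uses only pixels that no other object covers. In practice such masks would come from a rendered instance map, such as the one bpycv can produce.

```python
from typing import List, Optional, Tuple

Mask = List[List[bool]]
BBox = Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max), inclusive pixel indices


def mask_bbox(mask: Mask) -> Optional[BBox]:
    """Tightest box around the True pixels of a mask, or None if the mask is empty."""
    xs = [x for row in mask for x, on in enumerate(row) if on]
    ys = [y for y, row in enumerate(mask) if any(row)]
    if not xs:
        return None
    return min(xs), min(ys), max(xs), max(ys)


def visible_mask(silhouette: Mask, occluder: Mask) -> Mask:
    """Pixels of the object's full silhouette that no other object covers."""
    return [[s and not o for s, o in zip(srow, orow)] for srow, orow in zip(silhouette, occluder)]


# Toy 2x6 image: the object fills every pixel, another object hides columns 3-5.
silhouette = [[True] * 6 for _ in range(2)]
occluder = [[x >= 3 for x in range(6)] for _ in range(2)]

print(mask_bbox(silhouette))                          # not occlusion aware: (0, 0, 5, 1)
print(mask_bbox(visible_mask(silhouette, occluder)))  # occlusion aware: (0, 0, 2, 1)
```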

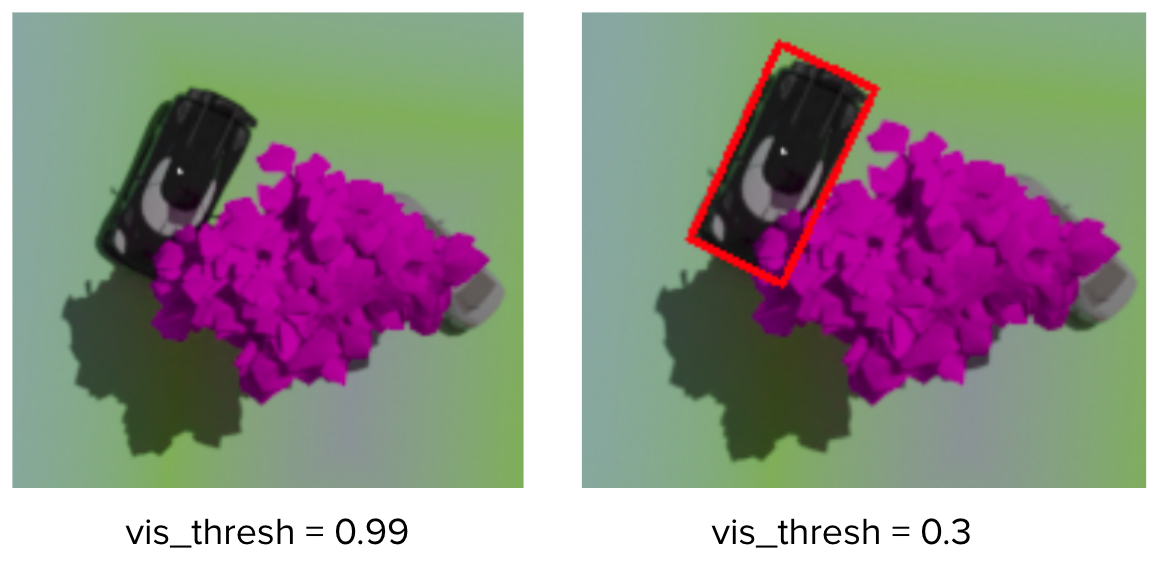

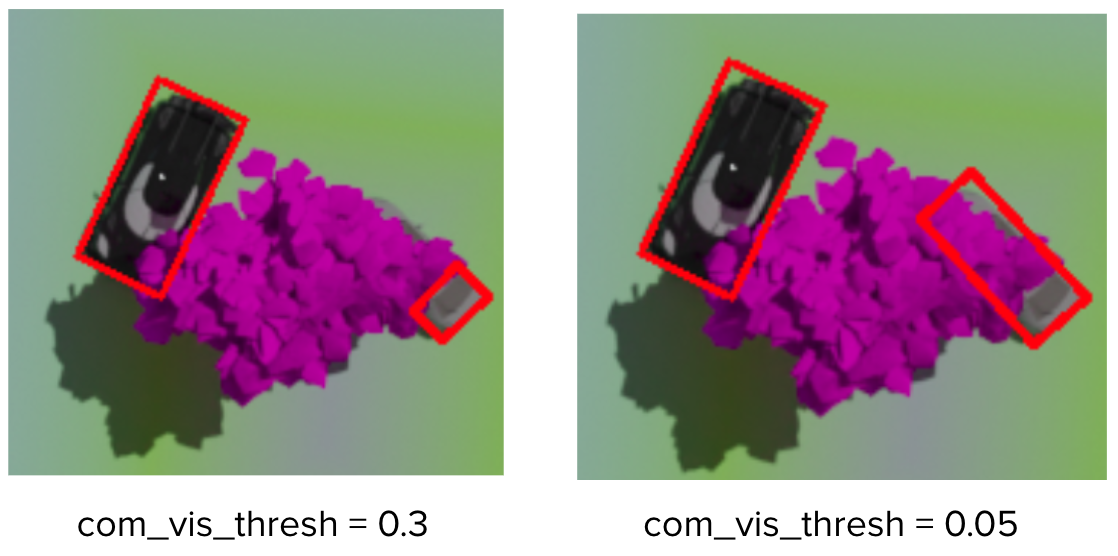

The fraction of an object that must be visible to the camera for it to be considered visible to a human annotator.

The fraction of an object's components that must be visible to the camera for it to be considered visible to a human annotator.

The minimum number of an object's pixels that must be visible to the camera for it to be considered visible to a human annotator.
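The text does not spell out how these three thresholds combine. The sketch below assumes an object counts as visible only when all three are met. It also assumes "components" means the separate parts of a model. The field names and example values are hypothetical, not keys from `config/render_parameters.yaml`.

```python
from dataclasses import dataclass


@dataclass
class VisibilityThresholds:
    # Hypothetical field names for the three parameters described above.
    min_visible_fraction: float    # fraction of the object's pixels that must be visible
    min_component_fraction: float  # fraction of the object's components that must be visible
    min_visible_pixels: int        # absolute minimum number of visible pixels


def is_visible(visible_px: int, total_px: int,
               visible_parts: int, total_parts: int,
               t: VisibilityThresholds) -> bool:
    """True if the object would be annotated, assuming all three tests must pass."""
    if total_px == 0 or total_parts == 0:
        return False  # the object is not in view at all
    return (visible_px / total_px >= t.min_visible_fraction
            and visible_parts / total_parts >= t.min_component_fraction
            and visible_px >= t.min_visible_pixels)


t = VisibilityThresholds(min_visible_fraction=0.3, min_component_fraction=0.5, min_visible_pixels=100)
print(is_visible(400, 1000, 2, 3, t))  # True: 40% of pixels, 2/3 of components, 400 px
print(is_visible(50, 100, 1, 1, t))    # False: only 50 px, below the 100 px minimum
```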

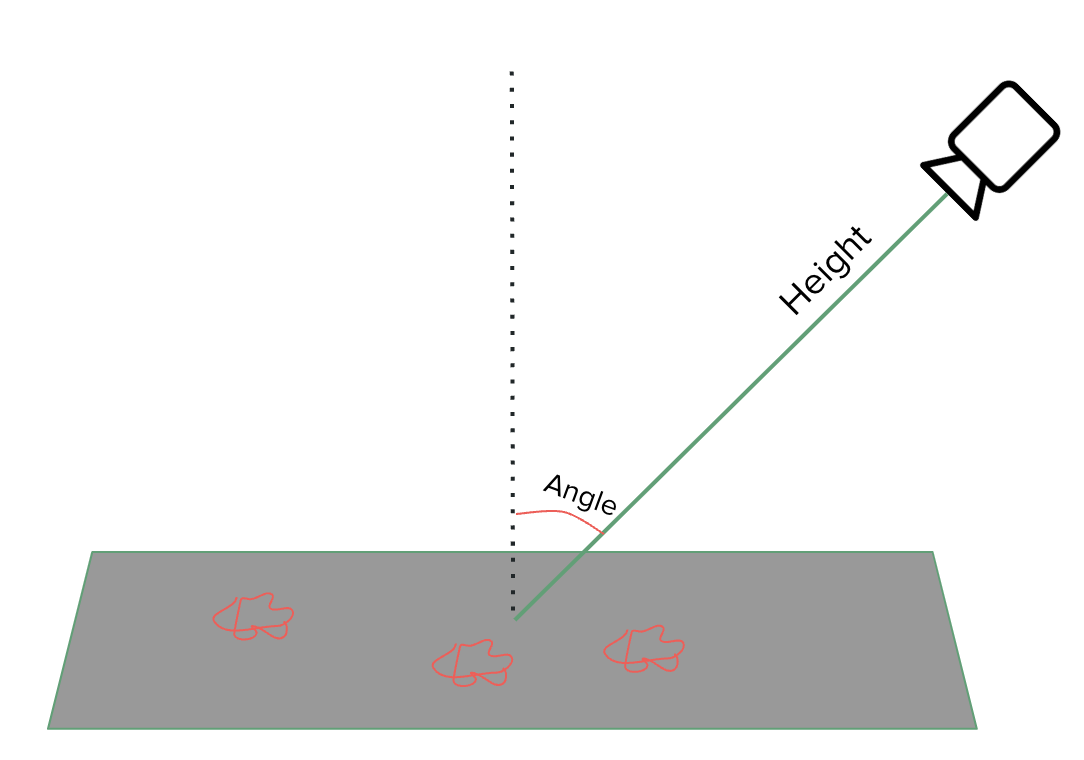

The sun's energy sets the light intensity in the scene. The tilt determines how shadows are cast and works similarly to the camera's tilt.
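To see how tilt affects shadows, the sketch below makes three assumptions. It assumes the tilt is the sun's rotation about its X axis, measured from pointing straight down, with an optional azimuth about Z. It also assumes that energy and tilt are drawn uniformly from ranges. The ranges and function names are hypothetical. A Blender light shines along its local -Z axis. With `rotation_euler = (tilt, 0, azimuth)`, the light direction and the shadow length of a vertical object follow from basic trigonometry.

```python
import math
import random
from typing import Tuple


def sample_sun(energy_range: Tuple[float, float], tilt_range_deg: Tuple[float, float],
               rng: random.Random) -> Tuple[float, float]:
    """Draw a random (energy, tilt in degrees) pair from the given ranges."""
    return rng.uniform(*energy_range), rng.uniform(*tilt_range_deg)


def sun_direction(tilt_deg: float, azimuth_deg: float = 0.0) -> Tuple[float, float, float]:
    """Unit vector the light travels along for rotation_euler = (tilt, 0, azimuth).

    Rotating the light's -Z axis by the tilt about X, then by the azimuth about Z,
    gives (-sin(a)sin(t), cos(a)sin(t), -cos(t)).
    """
    t, a = math.radians(tilt_deg), math.radians(azimuth_deg)
    return (-math.sin(a) * math.sin(t), math.cos(a) * math.sin(t), -math.cos(t))


def shadow_length(height: float, tilt_deg: float) -> float:
    """Shadow cast on flat ground by a vertical object of this height."""
    return height * math.tan(math.radians(tilt_deg))


rng = random.Random(0)
energy, tilt = sample_sun((1.0, 5.0), (0.0, 60.0), rng)
print(energy, sun_direction(tilt), shadow_length(1.0, tilt))
```

A tilt of 0 gives light pointing straight down and no shadow offset. As the tilt approaches 90 degrees, shadows grow without bound, so capping the tilt range keeps scenes readable.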