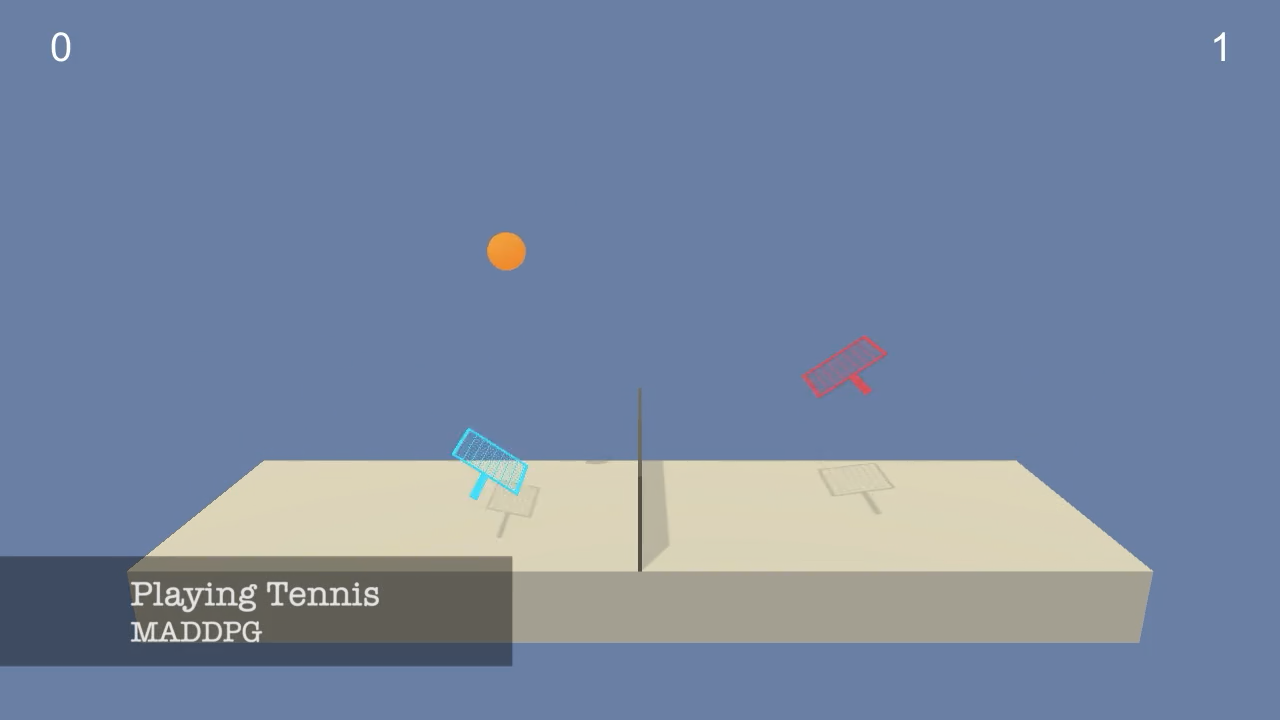

In this environment, two agents control rackets to bounce a ball over a net. If an agent hits the ball over the net, it receives a reward of +0.1. If an agent lets a ball hit the ground or hits the ball out of bounds, it receives a reward of -0.01. Thus, the goal of each agent is to keep the ball in play.

The observation space consists of 8 variables corresponding to the position and velocity of the ball and racket. Each agent receives its own, local observation. Two continuous actions are available, corresponding to movement toward (or away from) the net, and jumping.

The observation space consists of 24 variables corresponding to position, rotation, velocity, and angular velocities of the arm. A sample observation for one of the agents looks like this :

0. 0. 0. 0. 0. 0.

0. 0. 0. 0. 0. 0.

0. 0. 0. 0. -6.65278625 -1.5

-0. 0. 6.83172083 6. -0. 0. Each action is a vector with 2 numbers, corresponding to velocity applicable to the racket. Every entry in the action vector should be a number between -1 and 1.

The task is episodic, and the score for one episode is the maximum score between two agents. In order to solve the environment, agents must get an average score of +0.5 (over 100 consecutive episodes, after taking the maximum over both agents).

- Use

MADDPG-Train.ipynbnotebook for Training. - Use

MADDPG-Test.ipynbnotebook for Training. - For Report check out

Report.ipynbnotebook.

agents.pycontains a code for a MADDPG and DDPG Agent.brains.pycontains the definition of Neural Networks (Brains) used inside an Agent.

TrainedAgentsfolder contains saved weights for trained agents.Benchmarkfolder contains saved weights and scores for benchmarking.Imagesfolder contains images used in the notebooks.Moviesfolder contains recorded movies from each Agent.

It is highly recommended to create a separate python environment for running codes in this repository. The instructions are the same as in the Udacity's Deep Reinforcement Learning Nanodegree Repository. Here are the instructions:

-

Create (and activate) a new environment with Python 3.6.

- Linux or Mac:

conda create --name drlnd python=3.6 source activate drlnd- Windows:

conda create --name drlnd python=3.6 activate drlnd

-

Follow the instructions in this repository to perform a minimal install of OpenAI gym.

-

Here are quick commands to install a minimal gym, If you ran into an issue, head to the original repository for latest installation instruction:

pip install box2d-py pip install gym

- Clone the repository (if you haven't already!), and navigate to the

python/folder. Then, install several dependencies.

git clone https://github.com/taesiri/udacity_drlnd_project3

cd udacity_drlnd_project3/

pip install .- Create an IPython kernel for the

drlndenvironment.

python -m ipykernel install --user --name drlnd --display-name "drlnd"- Open the notebook you like to explore.

See it in action here: