This repository contains code for QuadTree Attention, covering feature matching, image classification, object detection, and semantic segmentation.

- Compile the quadtree attention operation: `cd QuadTreeAttention && python setup.py install`
- Install the package for each task according to the README.md in its separate directory.

Baseline results and the model zoo are listed below.

News! QuadTree Attention achieves the best single-model performance among all publicly available pretrained models in the Image Matching Challenge 2022. Please refer to this [post].

- Quadtree on Feature matching

| Dataset | AUC@5 | AUC@10 | AUC@20 | Model |
|---|---|---|---|---|
| ScanNet | 24.9 | 44.7 | 61.8 | [Google]/[GitHub] |
| MegaDepth | 53.5 | 70.2 | 82.2 | [Google]/[GitHub] |

- Quadtree on ImageNet-1K

| Method | FLOPs | Acc@1 | Model |
|---|---|---|---|
| Quadtree-B-b0 | 0.6 | 72.0 | [Google]/[GitHub] |
| Quadtree-B-b1 | 2.3 | 80.0 | [Google]/[GitHub] |
| Quadtree-B-b2 | 4.5 | 82.7 | [Google]/[GitHub] |
| Quadtree-B-b3 | 7.8 | 83.8 | [Google]/[GitHub] |
| Quadtree-B-b4 | 11.5 | 84.0 | [Google]/[GitHub] |

- Quadtree on COCO

| Method | Backbone | Pretrain | Lr schd | Aug | Box AP | Mask AP | Model |
|---|---|---|---|---|---|---|---|
| RetinaNet | Quadtree-B-b0 | ImageNet-1K | 1x | No | 38.4 | - | [Google]/[GitHub] |
| RetinaNet | Quadtree-B-b1 | ImageNet-1K | 1x | No | 42.6 | - | [Google]/[GitHub] |
| RetinaNet | Quadtree-B-b2 | ImageNet-1K | 1x | No | 46.2 | - | [Google]/[GitHub] |
| RetinaNet | Quadtree-B-b3 | ImageNet-1K | 1x | No | 47.3 | - | [Google]/[GitHub] |
| RetinaNet | Quadtree-B-b4 | ImageNet-1K | 1x | No | 47.9 | - | [Google]/[GitHub] |
| Mask R-CNN | Quadtree-B-b0 | ImageNet-1K | 1x | No | 38.8 | 36.5 | [Google]/[GitHub] |
| Mask R-CNN | Quadtree-B-b1 | ImageNet-1K | 1x | No | 43.5 | 40.1 | [Google]/[GitHub] |
| Mask R-CNN | Quadtree-B-b2 | ImageNet-1K | 1x | No | 46.7 | 42.4 | [Google]/[GitHub] |
| Mask R-CNN | Quadtree-B-b3 | ImageNet-1K | 1x | No | 48.3 | 43.3 | [Google]/[GitHub] |
| Mask R-CNN | Quadtree-B-b4 | ImageNet-1K | 1x | No | 48.6 | 43.6 | [Google]/[GitHub] |

- Quadtree on ADE20K

| Method | Backbone | Pretrain | Iters | mIoU | Model |
|---|---|---|---|---|---|
| Semantic FPN | Quadtree-b0 | ImageNet-1K | 160K | 39.9 | [Google]/[GitHub] |
| Semantic FPN | Quadtree-b1 | ImageNet-1K | 160K | 44.7 | [Google]/[GitHub] |
| Semantic FPN | Quadtree-b2 | ImageNet-1K | 160K | 48.7 | [Google]/[GitHub] |
| Semantic FPN | Quadtree-b3 | ImageNet-1K | 160K | 50.0 | [Google]/[GitHub] |
| Semantic FPN | Quadtree-b4 | ImageNet-1K | 160K | 50.6 | [Google]/[GitHub] |

@article{tang2022quadtree,

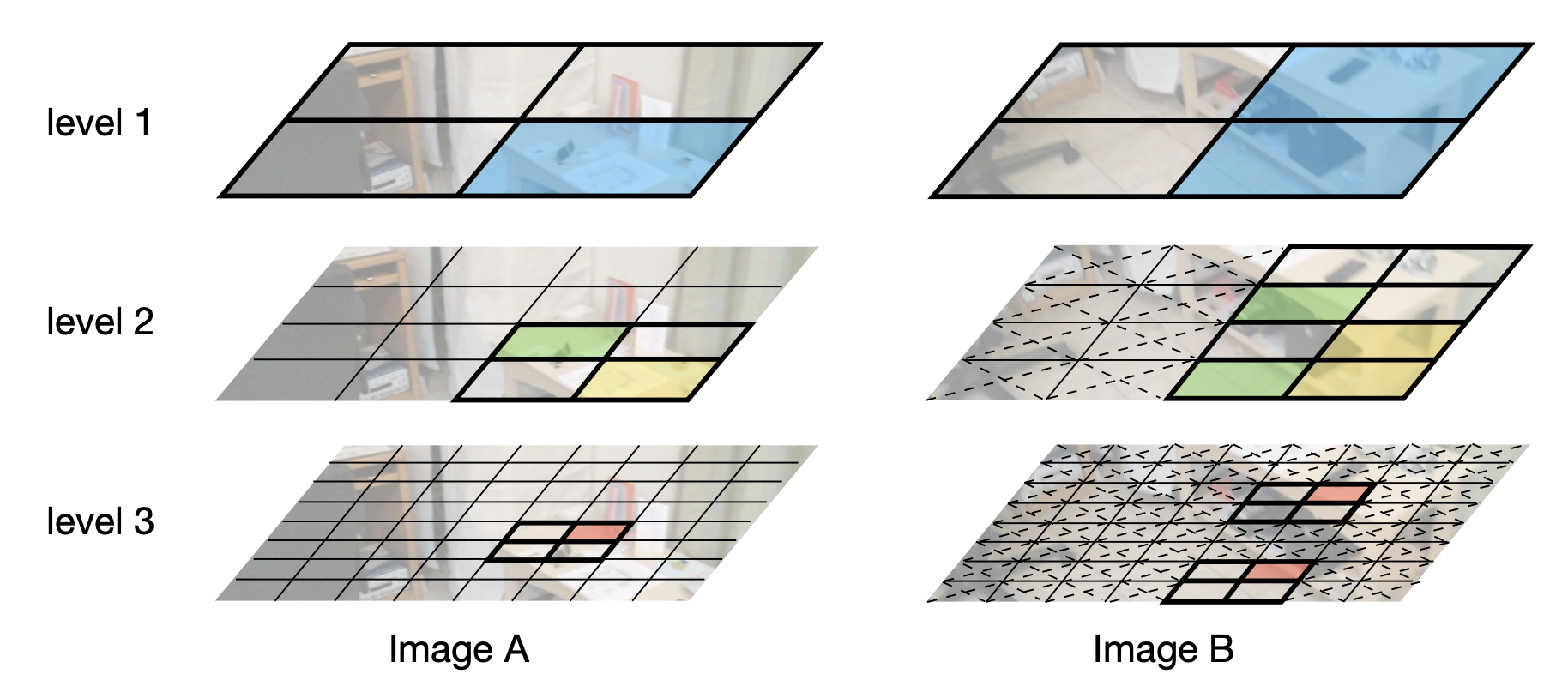

title={QuadTree Attention for Vision Transformers},

author={Tang, Shitao and Zhang, Jiahui and Zhu, Siyu and Tan, Ping},

journal={ICLR},

year={2022}

}