If you are not familiar with Docker, we highly recommend learning the basics before following this tutorial.

You do not need to be an expert, but you need to know:

- What is a Docker image

- What is a Docker container

- How to read a Dockerfile

This video might help.

Disclaimer

This tutorial was written on Windows with WSL 2 (Ubuntu).

If you are on Mac, on native Windows, or on another Linux distribution, some commands may not be recognized and you may need to adapt them. For example, 'sudo service docker start' will not work on Mac or in Windows PowerShell.

Remember to use a search engine or a chatbot to help.

Here are the main steps to run a job on the cluster using RunAI:

- Write your scripts (train, eval, preprocess, etc.)

- Write and build a Docker image that can run your scripts

- Upload your image to EPFL's IC registry (it will then be available on the cloud)

- Run the image on the cluster using RunAI

Make sure that your scripts and your Docker image work locally before submitting anything to the cluster (think twice, compute once).

Basic image

In this section, we will see how to build and run a simple Docker image that saves a text file on your local machine using Python.

Below is the Dockerfile:

# Use the minimalistic Python Alpine image for smaller size.

FROM python:3.9-alpine

# Set the working directory in docker

WORKDIR /app

# Create a directory for the data volume

RUN mkdir /data

# Copy the Python script into the container at /app

COPY write_text.py .

# Always use the Python script as the entry point

ENTRYPOINT ["python", "write_text.py"]

# By default, write "hello world" to the file.

CMD ["--text", "hello world"]
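The Dockerfile copies a script named write_text.py, which the tutorial does not show. As a rough idea of what such a script could look like, here is a minimal sketch. Only the --text argument and the /data directory come from the Dockerfile above; the output file name hello.txt and the extra --output flag are assumptions made for illustration, and the real script may differ.

```python
import argparse
from pathlib import Path

# Hypothetical default: /data matches the directory created in the Dockerfile,
# but the file name is an assumption.
DEFAULT_OUTPUT = Path("/data/hello.txt")


def build_parser() -> argparse.ArgumentParser:
    """Build the command-line parser for the script."""
    parser = argparse.ArgumentParser(description="Write a line of text to a file.")
    parser.add_argument("--text", default="hello world", help="text to write")
    # --output is a hypothetical extra flag, handy for testing outside the container.
    parser.add_argument("--output", type=Path, default=DEFAULT_OUTPUT,
                        help="destination file")
    return parser


def write_text(text: str, output: Path) -> Path:
    """Write `text` plus a trailing newline to `output`, creating parent directories."""
    output.parent.mkdir(parents=True, exist_ok=True)
    output.write_text(text + "\n", encoding="utf-8")
    return output


def main(argv=None) -> None:
    """Parse arguments (sys.argv[1:] when argv is None) and write the file."""
    args = build_parser().parse_args(argv)
    path = write_text(args.text, args.output)
    print(f"Wrote {args.text!r} to {path}")


# When saved as write_text.py, end the file with the usual guard so that the
# ENTRYPOINT actually runs it:
#     if __name__ == "__main__":
#         main()
```

Because argparse accepts both `--text value` and `--text=value`, a script written this way would work with either form used in this tutorial.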

Start Docker (Mac users can simply launch the Docker Desktop app instead):

sudo service docker start

Build a Docker image with the tag helloworld-image from the current directory (indicated by the . at the end):

docker build -t helloworld-image .

Run the image. This executes the ENTRYPOINT with the default parameters from CMD:

docker run helloworld-image

Nothing is created on our machine, because the file is written inside the container's own filesystem.

To fix this, use the -v option, which maps a directory on your local machine (the host) to a directory inside the container:

docker run -v $(pwd):/data helloworld-image

Our Python script also takes an argument: --text

If we specify it when running the container, it overrides CMD (the default value).
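To make the override rule concrete, the following sketch models how Docker assembles the command line for this image. It assumes the exec form of ENTRYPOINT and CMD used in the Dockerfile above and ignores the --entrypoint option; the function name container_argv is made up for this illustration and is not part of any Docker API.

```python
from typing import List, Sequence

# Values copied from the tutorial's Dockerfile.
ENTRYPOINT = ["python", "write_text.py"]
CMD = ["--text", "hello world"]


def container_argv(entrypoint: Sequence[str], cmd: Sequence[str],
                   run_args: Sequence[str] = ()) -> List[str]:
    """Return the command the container starts with (exec-form model).

    Arguments given after the image name in `docker run` replace CMD
    entirely; ENTRYPOINT is always kept.
    """
    extra = list(run_args) if run_args else list(cmd)
    return list(entrypoint) + extra


# No extra arguments: the CMD defaults are appended to the ENTRYPOINT.
print(container_argv(ENTRYPOINT, CMD))

# Extra arguments: CMD is dropped. The shell strips the quotes around the
# value, so the container receives a single argument.
print(container_argv(ENTRYPOINT, CMD, ["--text=New Hello World"]))
```

On the command line, the same override is written after the image name: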

docker run -v $(pwd):/data helloworld-image --text="New Hello World"

To remove all unused Docker images (along with stopped containers, unused networks, and the build cache):

docker system prune -a

Running image with RunAI

First, let us log in to RunAI:

runai login

You should be prompted with a link to get a password.

Now let us log in to the registry (try with sudo if it does not work):

docker login ic-registry.epfl.ch

Use your Tequila credentials.

Tag your image for the IC registry. Replace d-vet with your lab; otherwise, you will not be able to push.

docker tag helloworld-image ic-registry.epfl.ch/d-vet/helloworld-image

If you forgot the name of your image:

docker images

Now we can push our image:

docker push ic-registry.epfl.ch/d-vet/helloworld-image

Check the existing RunAI projects:

runai list project

Submit your job. Put your project name after -p:

runai submit --name hello1 -p ml4ed-frej -i ic-registry.epfl.ch/d-vet/helloworld-image --cpu-limit 1 --gpu 0

Check the job:

runai describe job hello1 -p ml4ed-frej

Check the logs:

kubectl logs hello1-0-0 -n runai-ml4ed-frej

List all jobs:

runai list jobs -p ml4ed-frej

Delete jobs:

runai delete job -p ml4ed-frej hello2 hello1

How do you pass arguments to the script? Separate them from the RunAI options with --:

runai submit --name hello2 -p ml4ed-frej -i ic-registry.epfl.ch/d-vet/helloworld-image --cpu-limit 1 --gpu 0 -- --text="hahaha"

How do we get our file? With Persistent Volumes.

PVC

Check the names of the Persistent Volume Claims (PVCs) your lab has access to:

kubectl get pvc -n runai-ml4ed-frej

Launch the job with the PVC:

runai submit --name hello1 -p ml4ed-frej -i ic-registry.epfl.ch/d-vet/helloworld-image --cpu-limit 1 --gpu 0 --pvc runai-ml4ed-frej-ml4eddata1:/data

It fails.

Why?

Security.

The new way of launching a job on RunAI (edit the YAML file, shown below the command, to use your own IDs):

kubectl create -f runai-job-default.yamlapiVersion: run.ai/v1 # Specifies the version of the Run.ai API this resource is written against.

kind: RunaiJob # Specifies the kind of resource, in this case, a Run.ai Job.

metadata:

name: hello1 # The name of the job.

namespace: runai-ml4ed-frej # The namespace in which the job will be created.

labels:

user: frej # REPLACE Tequila user

spec:

template:

metadata:

labels:

user: firstname.lastname # REPLACE

spec:

hostIPC: true # Do not change this

schedulerName: runai-scheduler # Do not change this

restartPolicy: Never # Specifies the pod's restart policy. Here, the pod won't be restarted if it terminates.

securityContext:

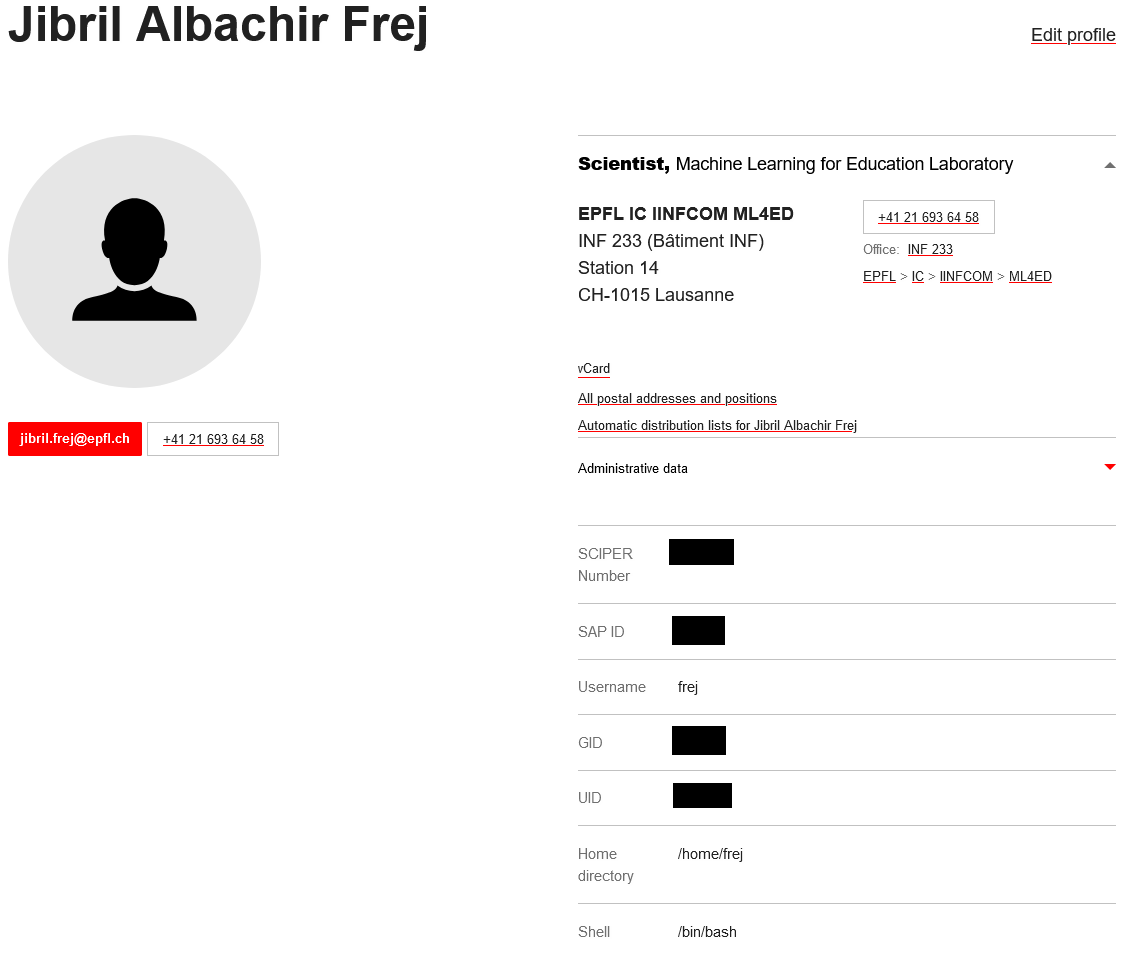

runAsUser: UID # Get this from https://people.epfl.ch/firstname.lastname

runAsGroup: GID # Get this from https://people.epfl.ch/firstname.lastname

fsGroup: GID # Get this from https://people.epfl.ch/firstname.lastname

containers:

- name: container-name # No idea why we have this, we already have the job name

image: ic-registry.epfl.ch/d-vet/helloworld-image # The container image to use.

args: # Arguments passed to the container.

- "--text"

- "Goodbye World"

resources:

limits:

cpu: "1" # Limit the container to use 1 CPU core.

nvidia.com/gpu: 0 # Specifies no GPU for this container.

volumeMounts:

- mountPath: /data # Path in the container at which the volume should be mounted.

name: data-volume # Refers to the name of the volume to be mounted.

volumes:

- name: data-volume

persistentVolumeClaim:

claimName: runai-ml4ed-frej-ml4eddata1 # The name of the PVC that this volume will use.To get your UserID and GroupID, visit your profile on the EPFL website:
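If you submit many jobs, editing the manifest by hand gets tedious. The sketch below builds the same job description in Python and prints it as JSON, which kubectl accepts in place of YAML. The function name build_runai_job, its parameters, and the UID and GID values in the example are placeholders invented for illustration; the field names and fixed values are copied from the manifest in this tutorial, not from the Run.ai documentation.

```python
import json
from typing import Any, Dict, List


def build_runai_job(name: str, namespace: str, tequila_user: str, pod_user_label: str,
                    uid: int, gid: int, image: str, pvc_name: str,
                    args: List[str]) -> Dict[str, Any]:
    """Return a RunaiJob manifest mirroring the tutorial's YAML file."""
    container = {
        "name": "container-name",
        "image": image,
        "args": args,
        "resources": {"limits": {"cpu": "1", "nvidia.com/gpu": 0}},
        "volumeMounts": [{"mountPath": "/data", "name": "data-volume"}],
    }
    pod_spec = {
        "hostIPC": True,
        "schedulerName": "runai-scheduler",
        "restartPolicy": "Never",
        # The job runs under your own UID/GID, as in the YAML version.
        "securityContext": {"runAsUser": uid, "runAsGroup": gid, "fsGroup": gid},
        "containers": [container],
        "volumes": [{"name": "data-volume",
                     "persistentVolumeClaim": {"claimName": pvc_name}}],
    }
    return {
        "apiVersion": "run.ai/v1",
        "kind": "RunaiJob",
        "metadata": {"name": name, "namespace": namespace,
                     "labels": {"user": tequila_user}},
        "spec": {"template": {"metadata": {"labels": {"user": pod_user_label}},
                              "spec": pod_spec}},
    }


# Example with placeholder IDs (123456 and 654321 are NOT real values).
job = build_runai_job(
    name="hello1", namespace="runai-ml4ed-frej",
    tequila_user="frej", pod_user_label="firstname.lastname",
    uid=123456, gid=654321,
    image="ic-registry.epfl.ch/d-vet/helloworld-image",
    pvc_name="runai-ml4ed-frej-ml4eddata1",
    args=["--text", "Goodbye World"],
)
print(json.dumps(job, indent=2))
```

The printed JSON can be saved to a file (for instance a hypothetical runai-job.json) and submitted with kubectl create -f, exactly like the YAML file.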

Where is my file, and where can I access it? Check with your lab or with IC to find out where the PVC is mounted.

ML4ED

For ML4ED (ask me for the password):

ssh root@icvm0018.xaas.epfl.ch

The file should then be in: /mnt/ic1files_epfl_ch_u13722_ic_ml4ed_001_files_nfs

Bonus: on the jumpbox icvm0018.xaas.epfl.ch, our lab server is also mounted.

It is located in /mnt/ic1files_epfl_ch_D-VET