Rectified Rotary Position Embeddings (ReRoPE)

Using ReRoPE, we can more effectively extend the context length of LLMs without the need for fine-tuning.

Blog

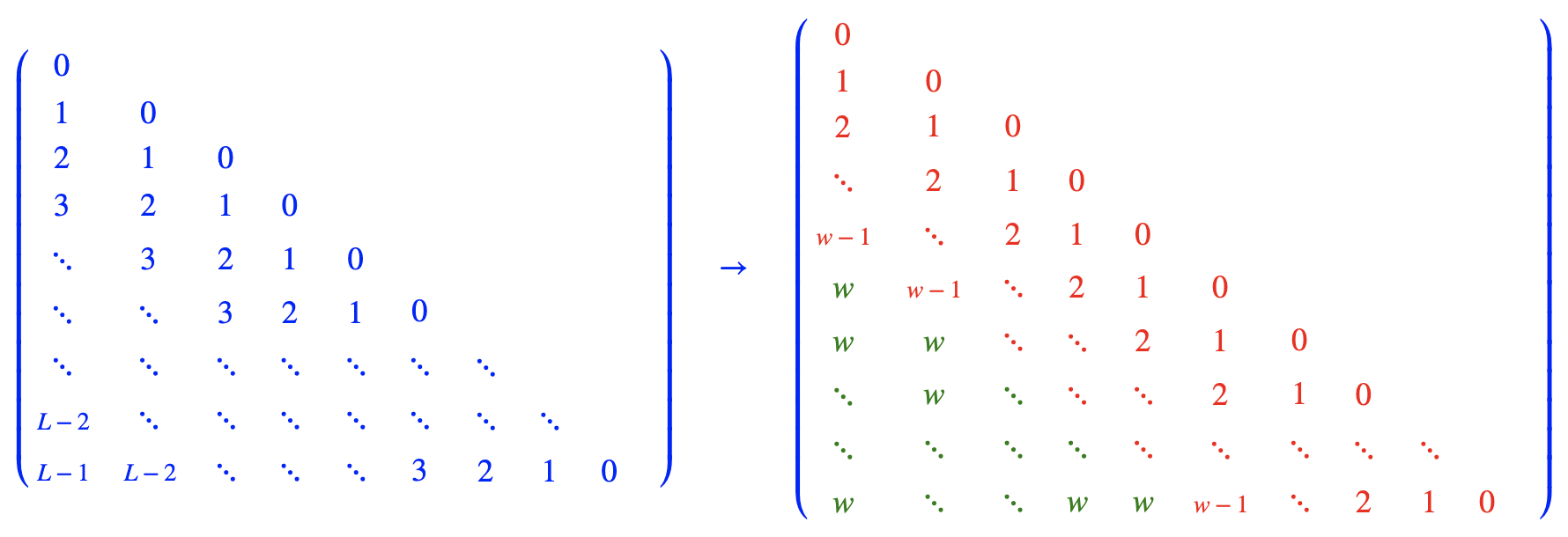

Idea

Usage

Dependency: transformers 4.31.0

Run: python test.py

From here and here, we can see the modifications that ReRoPE and Leaky ReRoPE make to the original llama implementation.
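As a rough illustration of the idea behind these modifications, the sketch below builds the matrix of effective relative positions that the attention would see. It assumes the usual description of the method: within a window `w` the relative position is the ordinary distance `i - j`; beyond the window, ReRoPE clamps it to `w`, while Leaky ReRoPE lets it keep growing with a smaller step `1/k`. The function name `relative_positions` and its parameters are hypothetical and are not taken from this repository's code.

```python
def relative_positions(seq_len, window, k=None):
    """Return a seq_len x seq_len matrix of effective relative positions.

    k=None   -> ReRoPE: distances at or beyond `window` are clamped to `window`.
    k=number -> Leaky ReRoPE: distances beyond `window` grow with step 1/k.
    Only the causal part (j <= i) is meaningful; entries with j > i are 0.
    """
    rows = []
    for i in range(seq_len):
        row = []
        for j in range(seq_len):
            d = i - j  # ordinary relative distance between query i and key j
            if d < 0:
                row.append(0.0)  # future token, masked out in causal attention
            elif d < window:
                row.append(float(d))  # inside the window: unchanged
            elif k is None:
                row.append(float(window))  # ReRoPE: clamp to the window size
            else:
                row.append(window + (d - window) / k)  # Leaky ReRoPE: step 1/k
        rows.append(row)
    return rows


if __name__ == "__main__":
    # Last query row for a 6-token sequence with window 3 (illustrative values).
    print(relative_positions(6, 3)[-1])
    print(relative_positions(6, 3, k=2)[-1])
```

With ReRoPE, no relative position ever exceeds `window`, so the model only sees positions it encountered during training; Leaky ReRoPE keeps distant tokens distinguishable at the cost of some extrapolation when the sequence is long enough.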

Cite

    @misc{rerope2023,
      title={Rectified Rotary Position Embeddings},
      author={Jianlin Su},
      year={2023},
      howpublished={\url{https://github.com/bojone/rerope}},
    }

Communication

QQ discussion group: 67729435. To join the WeChat group, please add the robot WeChat ID spaces_ac_cn.