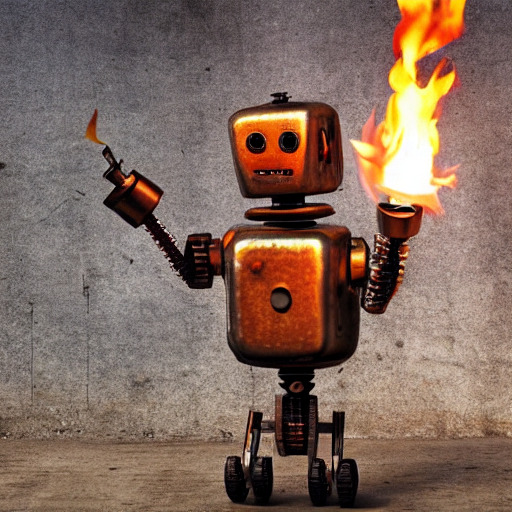

A rusty robot holding a fire torch, generated by Stable Diffusion using Rust and libtorch.

The diffusers crate is a Rust equivalent to Huggingface's amazing diffusers Python library. It is based on the tch crate. The implementation is complete enough to run Stable Diffusion v1.5 and v2.1.

To run the models, first get the weights from the Huggingface model repo v2.1 or v1.5 and move them into the data/ directory, then run the following command.

    cargo run --example stable-diffusion --features clap -- --prompt "A rusty robot holding a fire torch."

The final image is named sd_final.png by default.
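Before running the command above, it can help to confirm that the weight files actually landed in data/. The following is a minimal sketch, not part of the crate: the `missing_files` helper is hypothetical, and the file list is an assumption taken from the conversion steps later in this README, so adjust it to the files you downloaded.

```python
from pathlib import Path

# Hypothetical file list, based on the conversion steps later in this README.
# The files you downloaded may use different names.
EXPECTED_FILES = [
    "bpe_simple_vocab_16e6.txt",
    "pytorch_model.ot",
    "vae.ot",
    "unet.ot",
]


def missing_files(data_dir, expected=EXPECTED_FILES):
    """Return the names in `expected` that are not regular files in `data_dir`."""
    root = Path(data_dir)
    return [name for name in expected if not (root / name).is_file()]


if __name__ == "__main__":
    missing = missing_files("data")
    if missing:
        print("Missing from data/:", ", ".join(missing))
    else:
        print("All expected files found in data/.")
```

Run it from the repository root so that the relative data/ path resolves to the same directory the cargo example reads from.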

The only supported scheduler is the Denoising Diffusion Implicit Model scheduler (DDIM). The original paper and some code can be found in the associated repo.
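For readers unfamiliar with DDIM, here is a minimal sketch of one deterministic update step (eta = 0) as described in the DDIM paper. It is an illustration in plain Python on flat lists of floats, not the crate's implementation; the function name `ddim_step` and its parameters are chosen here for illustration only.

```python
import math


def ddim_step(x_t, eps_pred, alpha_bar_t, alpha_bar_prev):
    """One deterministic DDIM update (eta = 0) on a flat list of floats.

    alpha_bar_t and alpha_bar_prev are the cumulative products of (1 - beta)
    at the current timestep and at the previous, less noisy, timestep.
    """
    sqrt_a_t = math.sqrt(alpha_bar_t)
    sqrt_one_minus_a_t = math.sqrt(1.0 - alpha_bar_t)
    sqrt_a_prev = math.sqrt(alpha_bar_prev)
    sqrt_one_minus_a_prev = math.sqrt(1.0 - alpha_bar_prev)
    out = []
    for x, eps in zip(x_t, eps_pred):
        # Estimate the clean sample implied by the predicted noise.
        x0_pred = (x - sqrt_one_minus_a_t * eps) / sqrt_a_t
        # Re-noise that estimate to the previous timestep's noise level.
        out.append(sqrt_a_prev * x0_pred + sqrt_one_minus_a_prev * eps)
    return out
```

If the predicted noise equals the noise that was actually added, stepping to `alpha_bar_prev = 1.0` recovers the clean sample, which is a convenient sanity check for an implementation.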

This requires a GPU with more than 8GB of memory. As a fallback, the CPU version can be used, but it is slower.

    cargo run --example stable-diffusion --features clap -- --prompt "A very rusty robot holding a fire torch." --cpu all

For a GPU with 8GB, one can use the fp16 weights for the UNet and put only the UNet on the GPU.

    PYTORCH_CUDA_ALLOC_CONF=garbage_collection_threshold:0.6,max_split_size_mb:128 RUST_BACKTRACE=1 CARGO_TARGET_DIR=target2 cargo run \
      --example stable-diffusion --features clap -- --cpu vae --cpu clip --unet-weights data/unet-fp16.ot

A bunch of rusty robots holding some torches!

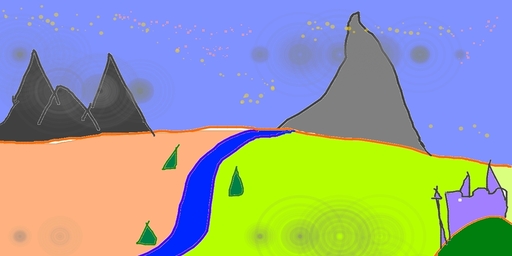

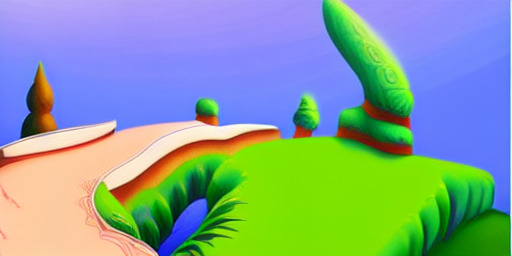
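As a rough illustration of why the fp16 UNet weights mentioned above help on an 8GB GPU, the sketch below computes the size of the weights alone at two precisions. The parameter count is an assumption (roughly 860 million, the figure commonly quoted for the Stable Diffusion v1 UNet) and is not stated in this README; activations and other buffers come on top of this.

```python
def weights_size_gib(n_params, bytes_per_param):
    """Size of a set of weights in GiB, ignoring activations and buffers."""
    return n_params * bytes_per_param / 2**30


# Assumption: roughly 860 million UNet parameters, the figure commonly quoted
# for Stable Diffusion v1. It is not stated in this README.
UNET_PARAMS = 860_000_000

fp32 = weights_size_gib(UNET_PARAMS, 4)  # 4 bytes per float32 value
fp16 = weights_size_gib(UNET_PARAMS, 2)  # 2 bytes per float16 value
print(f"fp32: {fp32:.2f} GiB, fp16: {fp16:.2f} GiB")
```

Under that assumption the weights take about 3.2 GiB in fp32 and about 1.6 GiB in fp16, so halving the precision frees memory for the activations computed during sampling.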

The Stable Diffusion model can also be used to generate an image based on another image. The following command runs this image-to-image pipeline:

    cargo run --example stable-diffusion-img2img --features clap -- --input-image media/in_img2img.jpg

The default prompt is "A fantasy landscape, trending on artstation.", but it can be changed via the --prompt flag.

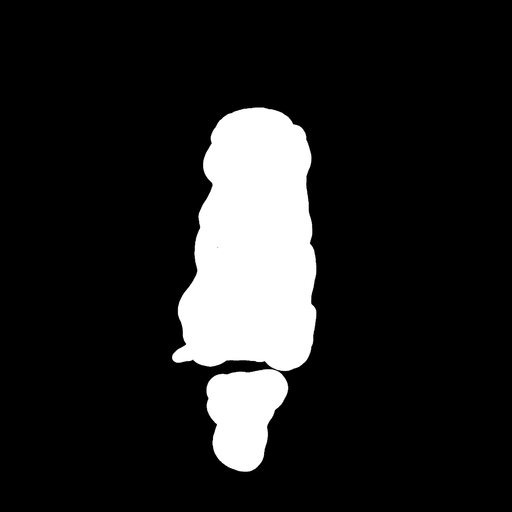

Inpainting modifies an existing image based on a prompt, changing only the part of the initial image specified by a mask. This requires a different set of UNet weights, unet-inpaint.ot, which can also be retrieved from this repo and should also be placed in the data/ directory.

The following commands download an example image and its mask, then run the inpainting pipeline:

    wget https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo.png -O sd_input.png
    wget https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo_mask.png -O sd_mask.png
    cargo run --example stable-diffusion-inpaint --features clap -- --input-image sd_input.png --mask-image sd_mask.png

The default prompt is "Face of a yellow cat, high resolution, sitting on a park bench.", but it can be changed via the --prompt flag.
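To make the role of the mask concrete, here is a purely conceptual sketch of what "modifying only the masked part" means at the pixel level. It is not how the inpainting UNet consumes the mask, which this README does not describe. The `composite` function is hypothetical, and the convention that a mask value of 1.0 marks a pixel to repaint is an assumption.

```python
def composite(original, generated, mask):
    """Blend two images pixel by pixel.

    Images are lists of rows of floats. A mask value of 1.0 takes the pixel
    from `generated`, 0.0 keeps the pixel from `original` (assumed convention).
    """
    return [
        [m * g + (1.0 - m) * o for o, g, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, mask)
    ]
```

With a one-row image, `composite([[0.2, 0.4]], [[0.9, 0.7]], [[1.0, 0.0]])` takes the first pixel from the generated image and leaves the second pixel untouched.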

The weights can be retrieved as .ot files from Huggingface v2.1 or v1.5.

It is also possible to download the weights for the original Stable Diffusion model and convert them to .ot files by following the instructions below. These instructions are for version 1.5 but can easily be adapted for version 2.1 by using this model repo instead.

First get the vocabulary file and uncompress it.

    mkdir -p data && cd data
    wget https://github.com/openai/CLIP/raw/main/clip/bpe_simple_vocab_16e6.txt.gz
    gunzip bpe_simple_vocab_16e6.txt.gz

For the CLIP encoding weights, start by downloading the weight file.

    wget https://huggingface.co/openai/clip-vit-large-patch14/resolve/main/pytorch_model.bin

Then, using Python, load the weights and save them in a .npz file.

    import numpy as np
    import torch

    # Load on the CPU so that the tensors can be converted to numpy arrays.
    model = torch.load("./pytorch_model.bin", map_location="cpu")
    # Keep only the text encoder tensors.
    np.savez("./pytorch_model.npz", **{k: v.numpy() for k, v in model.items() if "text_model" in k})

Finally, use tensor-tools from the examples directory to convert this to a .ot file that tch can use.

    cargo run --release --example tensor-tools cp ./data/pytorch_model.npz ./data/pytorch_model.ot

The VAE and UNet weight files can be downloaded from Huggingface's hub, but this first requires you to log in (and to accept the terms of use the first time).

After downloading the files, use Python to convert them to .npz files.

    import numpy as np
    import torch

    # Load on the CPU so that the tensors can be converted to numpy arrays.
    model = torch.load("./vae.bin", map_location="cpu")
    np.savez("./vae.npz", **{k: v.numpy() for k, v in model.items()})

    model = torch.load("./unet.bin", map_location="cpu")
    np.savez("./unet.npz", **{k: v.numpy() for k, v in model.items()})

And again convert these to .ot files via tensor-tools.

    cargo run --release --example tensor-tools cp ./data/vae.npz ./data/vae.ot
    cargo run --release --example tensor-tools cp ./data/unet.npz ./data/unet.ot
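The Python conversion steps above all follow the same pattern, so they could be wrapped in one small helper. This is a sketch under the same assumptions as the snippets above (each .bin file is a flat mapping from names to tensors); the function names `select_tensors` and `bin_to_npz` are introduced here for illustration and are not part of the crate.

```python
def select_tensors(state_dict, substring=None):
    """Keep entries whose key contains `substring` (all entries if None)."""
    return {
        k: v for k, v in state_dict.items() if substring is None or substring in k
    }


def bin_to_npz(bin_path, npz_path, substring=None):
    """Load a PyTorch checkpoint and save the selected tensors as a .npz file."""
    # Imported here so that select_tensors can be used without torch installed.
    import numpy as np
    import torch

    # Load on the CPU so that the tensors can be converted to numpy arrays.
    state_dict = torch.load(bin_path, map_location="cpu")
    selected = select_tensors(state_dict, substring)
    np.savez(npz_path, **{k: v.numpy() for k, v in selected.items()})
```

Calling `bin_to_npz("./pytorch_model.bin", "./pytorch_model.npz", substring="text_model")` would mirror the CLIP step, and calling it without `substring` would mirror the VAE and UNet steps. The resulting .npz files still need to go through tensor-tools to become .ot files.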